AI and Quantum Chemistry: Computational Approaches for Mapping Chemical Reaction Mechanisms in Drug Discovery



Abstract

This article explores the transformative role of computational chemistry in elucidating chemical reaction mechanisms, a cornerstone of modern drug discovery and development. We examine foundational principles, from quantum mechanics to generative AI, that enable the prediction of reaction pathways and transition states. The scope encompasses a detailed review of cutting-edge methodologies—including machine learning potentials, large language model-guided exploration, and ultra-large virtual screening—and their practical applications in pharmaceutical research. The content further addresses troubleshooting computational limitations and provides a comparative analysis of tool validation, offering researchers and drug development professionals a comprehensive guide to leveraging these technologies for accelerating the design of novel therapeutics.

From Electrons to Outcomes: The Physical Principles of Reaction Prediction

The discovery and optimisation of novel small-molecule drug candidates critically hinges on the efficiency of the iterative Design-Make-Test-Analyse (DMTA) cycle [1]. Within this framework, the synthesis ("Make") phase consistently represents the most costly and time-consuming element, often creating a significant bottleneck that slows drug development pipelines [1]. This challenge intensifies when targeting complex biological systems, which frequently demand intricate chemical structures that require multi-step synthetic routes. These routes are inherently labour-intensive, involving numerous variables that must be scouted and optimised before a successful pathway is identified [1]. The core of this "Make" bottleneck lies in the fundamental difficulty of accurately predicting reaction outcomes—including yield, regioselectivity, and stereochemistry—before compounds are ever synthesised in the laboratory. Overcoming this predictive challenge is not merely a technical improvement but a crucial requirement for accelerating the delivery of new therapeutics to patients.

The High Stakes of Reaction Prediction

Impact on the Drug Discovery Workflow

Inaccurate reaction prediction has direct and severe consequences on drug discovery efficiency. When synthesis fails or yields an unexpected product, the result is wasted resources, extended timelines, and ultimately, a limitation on the chemical space that can be feasibly explored for potential drug candidates [1]. The DMTA cycle relies on the rapid and reliable synthesis of compound series for biological evaluation. Any failure to obtain the desired chemical matter for testing invalidates the entire iterative process, stalling projects and consuming substantial financial and human resources that could be allocated elsewhere [1]. Furthermore, the explorable chemical space is directly dictated by the available building blocks and the confidence with which they can be combined into novel molecular architectures [1]. Without reliable prediction, chemists must resort to conservative, well-established reactions, potentially missing superior drug candidates that reside in less-charted chemical territory.

Quantitative Evidence of the Prediction Challenge

Experimental data and model performance metrics underscore the magnitude of the prediction challenge. The following table summarises key quantitative evidence from recent studies:

Table 1: Quantitative Evidence of Reaction Prediction Challenges

| Evidence Type | Description | Impact/Performance | Source |
|---|---|---|---|
| Model Accuracy | Molecular Transformer Top-1 accuracy on standard USPTO dataset | 90% (biased split) | [2] |
| Model Accuracy | Molecular Transformer Top-1 accuracy on debiased dataset | Significant decrease (exact % not stated) | [2] |
| Reaction Class Failure | Diels–Alder reaction prediction | Inability to predict regioselectivity; wrong product predicted | [2] |
| Data Scarcity | Diels–Alder reactions in USPTO training data | Very few instances, explaining poor performance | [2] |
| Condition Prediction | GraphRXN model on in-house HTE Buchwald-Hartwig data | R² = 0.712 for yield prediction | [3] |

The performance drop observed when moving from a standard to a debiased dataset is particularly revealing. It indicates that reported high accuracies can be inflated by "Clever Hans" predictions, where models make correct predictions for the wrong reasons due to hidden biases in the data, such as spurious correlations between specific substituents and outcomes [2]. This phenomenon masks fundamental shortcomings in model generalisability.

Technical Hurdles in Reaction Outcome Prediction

Data Limitations and Biases

The development of robust predictive models is severely constrained by data availability and quality. Most reaction data available in public databases and literature suffer from a pronounced positive results bias, where failed reactions are systematically underreported [3]. This creates an incomplete picture of chemical reactivity, as models never learn what does not work. Furthermore, dataset scaffold bias—where certain molecular frameworks are overrepresented—leads to models that perform well on familiar scaffolds but fail on novel ones [2]. Finally, there is a pervasive issue of incomplete annotation, where crucial contextual metadata like reaction temperature, scale, or the scientific focus of the project (e.g., medicinal chemistry vs. total synthesis) is omitted [2]. Without this context, which a skilled chemist intuitively uses to interpret reactions, models struggle to make reliable predictions.
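One common way to probe scaffold bias is to hold out whole scaffold groups, so that no framework seen in training reappears at test time. The sketch below is a minimal illustration under stated assumptions: the scaffold label is taken as already assigned upstream (for example by a cheminformatics toolkit), and `group_split` and the toy records are hypothetical names, not part of any cited tool.

```python
import random
from collections import defaultdict

def group_split(records, key, test_fraction=0.2, seed=0):
    """Split records so that no group (e.g. scaffold) lands in both sets."""
    groups = defaultdict(list)
    for rec in records:
        groups[key(rec)].append(rec)
    names = sorted(groups)                  # deterministic order before shuffling
    random.Random(seed).shuffle(names)
    target = test_fraction * len(records)   # approximate size of the test set
    train, test = [], []
    for name in names:
        # Fill the test set group by group, then send the rest to training.
        bucket = test if len(test) < target else train
        bucket.extend(groups[name])
    return train, test

# Hypothetical records: (reaction id, scaffold label assigned upstream).
records = [(i, f"scaffold_{i % 5}") for i in range(50)]
train, test = group_split(records, key=lambda r: r[1])
train_scaffolds = {r[1] for r in train}
test_scaffolds = {r[1] for r in test}
```

A model that scores well on a random split but poorly on a split like this is probably leaning on scaffold familiarity rather than transferable reactivity patterns.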

Mechanistic Complexity and Computational Cost

The fundamental challenge in reaction prediction lies in accurately modelling the Potential Energy Surface (PES), which depicts the energy states associated with atomic positions during a chemical transformation [4]. Understanding reaction kinetics and feasibility hinges on identifying key points on this surface: reactants and intermediates (energy minima) and transition states (first-order saddle points connecting them) [4]. Transition states are particularly elusive, as they are transient and typically must be revealed through theoretical simulations. Exploring the PES for complex, multi-step reactions presents a combinatorial explosion of possible pathways, making exhaustive searches computationally prohibitive [4]. While high-level quantum mechanical (QM) methods like Density Functional Theory (DFT) offer accuracy, they are notoriously time-intensive and resource-heavy, limiting their application for high-throughput screening in large reaction spaces [4] [3].
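The distinction between minima and first-order saddle points can be made concrete by counting negative Hessian eigenvalues at a stationary point. The sketch below uses a toy two-dimensional double-well surface chosen purely for illustration (it is not a real molecular PES), and it assumes the supplied point already has zero gradient.

```python
import numpy as np

def energy(p):
    """Toy double-well surface: minima at (+/-1, 0), saddle at (0, 0)."""
    x, y = p
    return (x**2 - 1.0) ** 2 + y**2

def hessian(f, p, h=1e-4):
    """Central finite-difference Hessian of f at point p."""
    p = np.asarray(p, dtype=float)
    n = p.size
    H = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            ei = np.zeros(n); ei[i] = h
            ej = np.zeros(n); ej[j] = h
            H[i, j] = (f(p + ei + ej) - f(p + ei - ej)
                       - f(p - ei + ej) + f(p - ei - ej)) / (4 * h * h)
    return H

def classify(f, p, tol=1e-6):
    """Label a stationary point by its number of negative curvature modes."""
    eigenvalues = np.linalg.eigvalsh(hessian(f, p))
    n_negative = int(np.sum(eigenvalues < -tol))
    if n_negative == 0:
        return "minimum"
    if n_negative == 1:
        return "first-order saddle (transition state)"
    return f"higher-order saddle ({n_negative} negative modes)"

label_well = classify(energy, (1.0, 0.0))
label_barrier = classify(energy, (0.0, 0.0))
```

On a real system the Hessian has 3N dimensions and each energy evaluation is a QM calculation, which is where the cost described above comes from.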

The Interpretability Gap in Machine Learning Models

While machine learning models, particularly deep learning architectures, show promise in reaction prediction, their "black-box" nature presents a significant hurdle to adoption by chemists. For model users, the inability to understand why a model predicts a particular outcome is problematic because chemical reactions are highly contextual [2]. A prediction lacking a chemically rational explanation is of limited utility for making strategic decisions in a synthesis campaign. For model developers, this opaqueness makes it difficult to diagnose failure modes and improve model design. It remains unclear whether state-of-the-art models like the Molecular Transformer are learning true physicochemical principles or merely exploiting superficial statistical patterns in the training data [2]. This interpretability gap hinders trust and the effective integration of AI tools into the medicinal chemist's workflow.

Methodologies and Experimental Protocols

AI and Machine Learning Approaches

A. Transformer-Based Architectures: The Molecular Transformer adapts the neural machine translation architecture to chemistry, treating reaction prediction as a translation task where reactant and reagent SMILES strings are "translated" into product SMILES strings [2]. The model relies on a self-attention mechanism to weigh the importance of different parts of the input molecules when generating the output.

Protocol for Training a Molecular Transformer Model

- Data Preprocessing: A large dataset of reactions (e.g., the USPTO dataset text-mined from patents) is collected. SMILES strings of reactants, reagents, and products are canonicalised and combined into a single sequence using a special token (e.g., ">") to separate reactants from reagents.

- Data Augmentation: The model's robustness is improved by employing SMILES augmentation, where each reaction is represented multiple times using different, equivalent SMILES string permutations [2].

- Model Training: The transformer model, comprising an encoder and decoder stack, is trained to learn the mapping from input sequences (reactants + reagents) to output sequences (products). Training involves minimising the cross-entropy loss between the predicted and actual product sequences.

- Interpretation: Post-training, interpretation techniques like Integrated Gradients (IG) are used to attribute the model's predictions to specific substructures in the input. This helps validate whether the model is learning chemically sensible features [2].
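The preprocessing step above can be sketched as follows. This is a minimal illustration, not the published pipeline: it uses a regular expression of the kind commonly applied to SMILES tokenisation, omits canonicalisation and augmentation (both need a cheminformatics toolkit), and the helper names `tokenize` and `build_source` are hypothetical.

```python
import re

# Atom-level SMILES tokens: bracket atoms, two-letter halogens, ring closures, bonds.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize(smiles):
    """Split a SMILES (or reaction SMILES) string into model tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenisable characters in {smiles!r}")
    return tokens

def build_source(reactants, reagents):
    """Join reactants and reagents into one input sequence separated by '>'."""
    return ".".join(reactants) + ">" + ".".join(reagents)

# Acetyl chloride + ethanol with pyridine as reagent; ethyl acetate as target.
source = build_source(["CC(=O)Cl", "OCC"], ["c1ccncc1"])
source_tokens = tokenize(source)
target_tokens = tokenize("CCOC(C)=O")
```

Tokenising at the atom level keeps `Cl` and `Br` as single symbols, so the model never has to learn that `C` followed by `l` is chlorine.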

B. Graph Neural Networks (GNNs): Frameworks like GraphRXN represent molecules as graphs, where atoms are nodes and bonds are edges [3]. These models directly learn from 2D molecular structures.

Protocol for the GraphRXN Framework

- Graph Representation: Each reaction component (reactant, reagent, solvent) is converted into a directed molecular graph G(V, E), where V is the set of nodes (atoms) and E is the set of edges (bonds). Node and edge features are initialised based on atom and bond types.

- Message Passing: A modified communicative message passing neural network is used. Over K steps, nodes and edges iteratively update their hidden states by aggregating information from their local neighbourhoods.

  - For a node v, its message vector is aggregated from its connected edges.

  - For an edge e_{v,w}, its message is derived from the hidden states of its source node and the edge itself.

- Readout: After K iterations, a Gated Recurrent Unit (GRU) aggregates the final node embeddings into a single, fixed-length molecular feature vector for each reaction component.

- Reaction-Level Prediction: The molecular vectors of all components are aggregated (e.g., via summation or concatenation) into a single reaction vector. This vector is fed into a dense neural network to predict the reaction output, such as reaction success or yield [3].
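The graph-to-vector flow above can be sketched in a few lines of NumPy. This is a deliberately simplified stand-in, not the GraphRXN implementation: messages are passed between nodes only (no edge states), the readout is a plain sum rather than a GRU, and the weights are random rather than trained. All function names are hypothetical.

```python
import numpy as np

def message_passing(node_feats, adjacency, weight, steps=3):
    """Run K rounds of neighbour aggregation and return the final node states."""
    h = np.asarray(node_feats, dtype=float)
    for _ in range(steps):
        messages = adjacency @ h              # sum of neighbouring hidden states
        h = np.tanh((h + messages) @ weight)  # shared update applied to every node
    return h

def readout(h):
    """Collapse node states into one fixed-length molecular vector."""
    return h.sum(axis=0)

def reaction_vector(mol_vectors):
    """Combine component vectors by concatenation."""
    return np.concatenate(mol_vectors)

rng = np.random.default_rng(0)
W = rng.normal(scale=0.5, size=(3, 3))        # untrained, illustrative weights

# Heavy atoms only, one-hot features over [C, O, N].
ethanol_x = np.array([[1, 0, 0], [1, 0, 0], [0, 1, 0]], dtype=float)   # C-C-O
ethanol_a = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
methylamine_x = np.array([[1, 0, 0], [0, 0, 1]], dtype=float)          # C-N
methylamine_a = np.array([[0, 1], [1, 0]], dtype=float)

mol_vecs = [
    readout(message_passing(x, a, W))
    for x, a in [(ethanol_x, ethanol_a), (methylamine_x, methylamine_a)]
]
rxn_vec = reaction_vector(mol_vecs)
```

Because the readout is a sum, renumbering the atoms of a molecule leaves its vector unchanged, which is the property that makes graph representations attractive compared with order-dependent strings.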

C. Hybrid and Rule-Guided Approaches: Tools like ARplorer and RxnNet integrate quantum mechanics with rule-based methodologies or heuristic chemical knowledge to explore reaction pathways more efficiently [4] [5].

Protocol for ARplorer's Reaction Pathway Exploration

- Active Site Identification: The program identifies potential reactive sites and bond-breaking locations in the input molecular structures.

- LLM-Guided Chemical Logic: A Large Language Model (LLM), primed with general chemical knowledge from literature and system-specific rules based on functional groups, generates chemical logic and SMARTS patterns to guide the search and filter unlikely pathways [4].

- Structure Optimisation & TS Search: An iterative process optimises molecular structures and searches for transition states. This employs an active-learning sampling method to enhance efficiency.

- IRC Analysis & Pathway Finalisation: Intrinsic Reaction Coordinate (IRC) calculations are performed from located transition states to connect reactants, intermediates, and products. Duplicate pathways are removed, and the final reaction network is constructed [4].

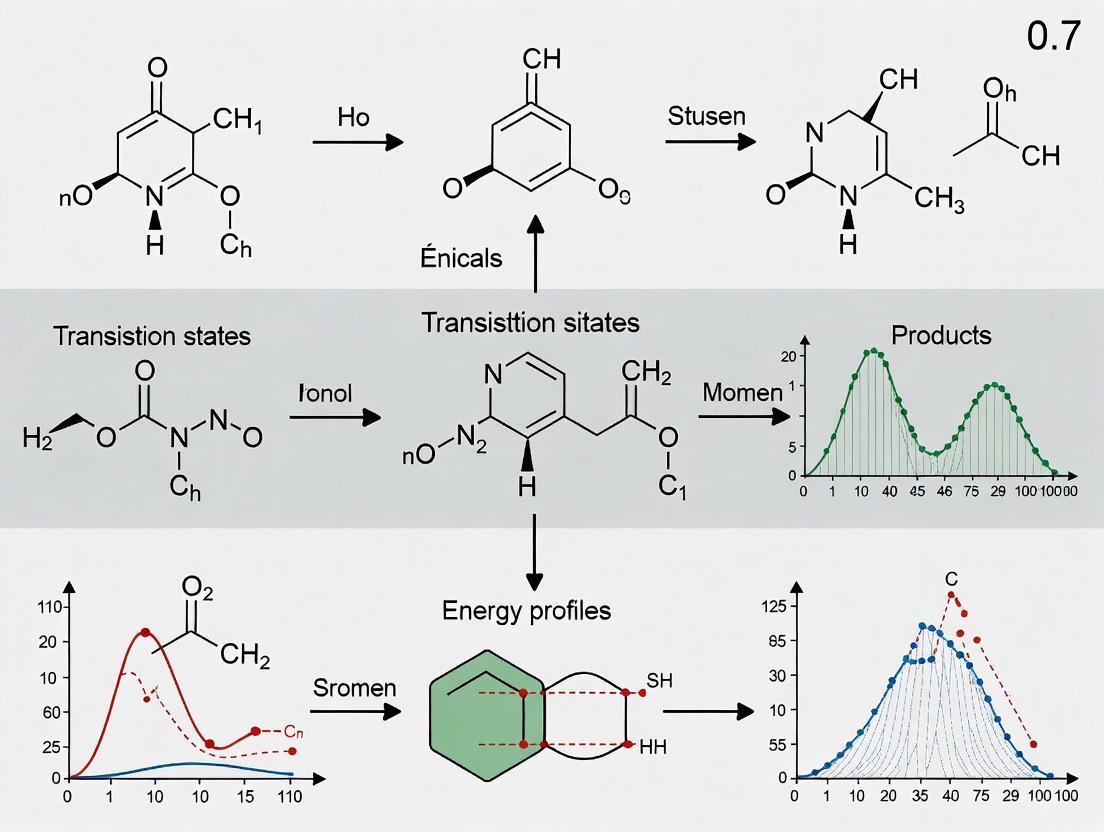

The following diagram illustrates the hybrid workflow of the ARplorer program, which combines quantum mechanics with LLM-guided chemical logic for efficient reaction pathway exploration.

High-Throughput Experimentation (HTE) for Data Generation

To address the critical lack of high-quality, unbiased reaction data, High-Throughput Experimentation (HTE) has emerged as a powerful experimental protocol [3]. HTE enables the systematic and parallel execution of hundreds or thousands of reactions under varying conditions.

Protocol for Generating HTE Datasets

- Reaction Selection: A reaction of interest (e.g., Buchwald-Hartwig amination, Suzuki coupling) is selected.

- Experimental Design: A diverse set of substrates, catalysts, ligands, solvents, and bases is chosen to create a broad matrix of reaction conditions.

- Automated Execution: Reactions are set up robotically in miniaturised format (e.g., in well-plates) to ensure consistency and reproducibility.

- Analysis & Data Curation: Reaction outcomes (e.g., yield, conversion) are analysed using high-throughput analytics (e.g., UPLC-MS). All results—both successful and failed—are recorded in a standardised format with complete metadata. This creates a balanced dataset ideal for training machine learning models [3].
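The experimental design step can be sketched as a full-factorial enumeration mapped onto a 96-well plate. This is an illustrative layout only: the substrate identifiers are placeholders, the factor levels are assumptions rather than values from the cited study, and `full_factorial` and `assign_wells` are hypothetical helpers.

```python
from itertools import product

def full_factorial(factors):
    """Enumerate every combination of the supplied factor levels."""
    names = list(factors)
    return [dict(zip(names, combo))
            for combo in product(*(factors[n] for n in names))]

def assign_wells(conditions, rows="ABCDEFGH", cols=12):
    """Map conditions to plate wells; outcomes start empty and are filled later."""
    if len(conditions) > len(rows) * cols:
        raise ValueError("more conditions than wells on one plate")
    plate = {}
    for idx, cond in enumerate(conditions):
        well = f"{rows[idx // cols]}{idx % cols + 1}"
        # A failed reaction is later recorded as 0.0, never dropped.
        plate[well] = {**cond, "yield_pct": None}
    return plate

# Placeholder levels for a Buchwald-Hartwig screen (4 x 3 x 4 x 2 combinations).
factors = {
    "aryl_halide": ["ArBr-1", "ArBr-2", "ArBr-3", "ArBr-4"],
    "amine": ["amine-1", "amine-2", "amine-3"],
    "ligand": ["XPhos", "RuPhos", "BrettPhos", "SPhos"],
    "base": ["NaOtBu", "Cs2CO3"],
}
plate = assign_wells(full_factorial(factors))
```

Keeping a slot for every well, including those that give no product, is what lets the resulting dataset include the negative results that literature data usually lack.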

The following table details key computational and experimental reagents essential for research in reaction outcome prediction.

Table 2: Research Reagent Solutions for Predictive Synthesis

| Tool/Resource | Type | Primary Function | Example/Standard |
|---|---|---|---|
| CASP Tools | Software | Computer-Assisted Synthesis Planning for retrosynthetic analysis and route design | AI-powered platforms (e.g., from Roche, Molecular Transformer) [1] |
| HTE Platforms | Experimental | Robotic systems for high-throughput, parallelised reaction execution and data generation | Custom/in-house platforms; commercial systems [3] |
| FAIR Data Repositories | Data | Stores for Findable, Accessible, Interoperable, and Reusable reaction data | Internal corporate databases; public databases (e.g., USPTO) [1] |
| Building Block Catalogues | Chemical | Sources of diverse starting materials (BBs) for synthesis | Enamine, eMolecules, Sigma-Aldrich; virtual MADE catalogue [1] |
| QM Software | Software | Performs quantum chemical calculations to explore Potential Energy Surfaces | Gaussian, GFN2-xTB [4] |
| Reaction Fingerprints | Computational | Numerical representation of reactions for machine learning modelling | DRFP, MFFs, Graph-based learned representations (GraphRXN) [3] |

Integrated Workflows and Future Directions

The true power of predictive synthesis is realised when these methodologies are combined into integrated, data-driven workflows. The future lies in closing the loop between in-silico prediction and automated experimental validation. A promising workflow begins with AI-powered synthesis planning, which generates proposed routes for a target molecule [1]. These proposals are then vetted by a medicinal chemist, potentially interacting with a "Chemical ChatBot" in an iterative dialogue to refine the plan [1]. The most promising routes are executed on automated synthesis platforms, which generate high-quality FAIR (Findable, Accessible, Interoperable, and Reusable) data [1]. This data is fed back into the AI models, continuously refining their predictive capabilities and creating a self-improving cycle.

The diagram below illustrates this envisioned, fully integrated workflow for data-driven synthesis, highlighting the seamless connection between design, AI planning, automated execution, and data analysis.

Key future developments include the deeper integration of LLMs as interfaces to complex models and data, the move towards unified models that simultaneously predict retrosynthetic pathways and reaction conditions, and a cultural shift towards treating high-quality data stewardship as a central pillar of chemical research [1] [4]. As these trends converge, the ability to predict reaction outcomes with high fidelity will cease to be a central challenge and instead become a cornerstone of an accelerated, more efficient drug discovery process.

The computational exploration of chemical reaction mechanisms represents a cornerstone of modern research, driving advances in fields from drug development to materials science. As artificial intelligence (AI) and machine learning (ML) models assume increasingly prominent roles in these explorations, their predictions must adhere to the fundamental laws of physics to be scientifically trustworthy. Among these laws, the principles of mass conservation and electron conservation are non-negotiable; they form the foundational reality upon which all chemical processes occur. Mass conservation states that for any system closed to matter transfer, the mass must remain constant over time, meaning atoms can be rearranged but neither created nor destroyed [6]. Similarly, electron conservation is critical for modeling redox processes and electronic interactions accurately. Unfortunately, many data-driven models, including sophisticated large language models, often violate these core tenets, producing predictions that are physically impossible and thus of limited utility for rigorous scientific inquiry [7] [8]. This whitepaper details the critical importance of embedding these conservation laws as hard constraints within AI frameworks, surveys current methodological approaches, and provides practical protocols for researchers seeking to develop physically-grounded models for computational chemistry.

Mathematical and Physical Foundations of Conservation Laws

The Principle of Mass Conservation

The law of conservation of mass is a bedrock principle in chemistry and physics. Formally, for a closed system, the total mass of reactants must equal the total mass of products in any chemical reaction [9] [6]. This principle emerged from centuries of scientific inquiry, with Antoine Lavoisier's meticulous experiments in the late 18th century definitively demonstrating that although substances may change form during reactions, their total mass remains invariant [6]. Mathematically, for a chemical system with m compounds formed from p elements, this conservation can be expressed as:

M^T ΔC = 0_p

where M is the m × p composition matrix (containing the atomic composition of each species), ΔC is the vector of concentration changes for each species, and 0_p is a zero vector of length p [7]. This equation ensures that the total number of atoms of each element remains constant throughout any transformation.
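As a worked instance of this equation, consider 2 H2 + O2 → 2 H2O with species ordered (H2, O2, H2O) and elements ordered (H, O). The sketch below simply evaluates M^T ΔC for a balanced and an unbalanced change vector; the variable names are illustrative.

```python
import numpy as np

species = ["H2", "O2", "H2O"]
elements = ["H", "O"]

# Composition matrix M (m species x p elements): atoms of each element per species.
M = np.array([
    [2, 0],   # H2
    [0, 2],   # O2
    [2, 1],   # H2O
])

# Balanced change: consume 2 H2 and 1 O2, form 2 H2O.
delta_c = np.array([-2.0, -1.0, 2.0])
residual = M.T @ delta_c            # one entry per element; all zero if atoms balance

# An unphysical prediction that forms more water than the reactants allow.
delta_c_bad = np.array([-2.0, -1.0, 2.5])
residual_bad = M.T @ delta_c_bad    # nonzero entries show which elements are off
```

Each entry of the residual is the net number of atoms of one element created or destroyed, so a nonzero value pinpoints the element that a model has failed to conserve.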

The Critical Need for Electron Conservation

While mass conservation provides a macroscopic constraint, electron conservation operates at the quantum mechanical level and is equally vital for modeling chemical reactivity accurately. Electrons are the currency of chemical bonds, and their redistribution dictates reaction pathways. The challenge of electron conservation is particularly acute in AI models that attempt to predict reaction outcomes, as standard models may artificially create or annihilate electrons, leading to unrealistic predictions [8]. One promising approach to this challenge utilizes a bond-electron matrix, a concept dating back to Ivar Ugi's work in the 1970s, which represents the electrons involved in a reaction explicitly. This matrix uses nonzero values to represent bonds or lone electron pairs and zeros elsewhere, providing a framework that simultaneously conserves both atoms and electrons [8].
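To make the representation concrete, the sketch below builds small bond-electron matrices by hand. It assumes the classical Dugundji-Ugi convention (off-diagonal entries are formal bond orders, diagonal entries count free valence electrons), which may differ in detail from the encoding used in [8]; `be_matrix` and `formal_charges` are hypothetical helpers.

```python
import numpy as np

def be_matrix(n_atoms, bonds, free_electrons):
    """Build a symmetric BE matrix from bond orders and free-electron counts."""
    be = np.zeros((n_atoms, n_atoms), dtype=int)
    for (i, j), order in bonds.items():
        be[i, j] = be[j, i] = order          # each bond appears twice (i,j and j,i)
    for i, n_free in free_electrons.items():
        be[i, i] = n_free                    # lone-pair electrons on the diagonal
    return be

def formal_charges(be, valence):
    """Row sums give electrons owned by each atom; charge = valence - row sum."""
    return np.asarray(valence) - be.sum(axis=1)

# Water: atoms (O, H, H), two O-H single bonds, two lone pairs on oxygen.
water = be_matrix(3, {(0, 1): 1, (0, 2): 1}, {0: 4})
water_charges = formal_charges(water, [6, 1, 1])

# Hydroxide: atoms (O, H), one O-H bond, three lone pairs on oxygen.
hydroxide = be_matrix(2, {(0, 1): 1}, {0: 6})
hydroxide_charges = formal_charges(hydroxide, [6, 1])

# The sum of all entries is the total number of valence electrons in the system.
total_electrons_water = int(water.sum())
total_electrons_hydroxide = int(hydroxide.sum())
```

Under this convention the total of all matrix entries equals the number of valence electrons, so any transformation that preserves that total cannot create or destroy electrons.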

Current AI Approaches and Their Limitations

The Conservation Challenge in Machine Learning

Many machine learning applications in chemistry operate as "black boxes" that learn patterns from data but lack built-in mechanisms to enforce physical laws. This is particularly true for large language models (LLMs) adapted for chemical prediction tasks. As noted by MIT researchers, when these models use computational "tokens" representing individual atoms without conservation constraints, "the LLM model starts to make new atoms, or deletes atoms in the reaction," resulting in predictions that resemble "a kind of alchemy" rather than scientifically grounded chemistry [8]. Similar issues plague models predicting atmospheric composition, where unphysical deviations from mass conservation, though sometimes minor, undermine the models' scientific credibility [7].

Promising Approaches for Physically-Grounded AI

Several innovative approaches are emerging to address these limitations by embedding physical constraints directly into AI frameworks:

- Projection-Based Nudging: This method takes the output of any numerical model and minimally adjusts the predicted concentrations to the nearest physically consistent solution that respects atomic conservation laws. The correction uses a single matrix operation derived from constrained optimization theory, projecting predictions onto the null space of the transposed composition matrix M^T to ensure mass conservation to machine precision [7].

- Flow Matching for Electron Redistribution (FlowER): Developed at MIT, this generative AI approach uses a bond-electron matrix to explicitly track all electrons in a reaction, ensuring none are spuriously added or deleted. The system shows promising results for predicting realistic mechanistic pathways while maintaining real-world physical constraints [8].

- Heuristics-Guided Exploration: This computational protocol constructs reaction networks using heuristic rules derived from conceptual electronic-structure theory while ensuring conservation through quantum chemical optimization of generated structures [10].

Table 1: Comparison of AI Approaches with Physical Constraints

| Method | Conservation Principle | Key Mechanism | Reported Advantages |
|---|---|---|---|
| Projection-Based Nudging [7] | Mass/Atom Conservation | Matrix-based projection to nearest physical solution | Model-agnostic, minimal perturbation, closed-form solution |
| FlowER [8] | Mass & Electron Conservation | Bond-electron matrix representation | Realistic reaction predictions, maintains electronic constraints |
| Heuristics-Guided Exploration [10] | Implicit via QM optimization | Structure generation based on chemical rules | Automated discovery of reaction pathways |
| Trajectory-Based Methods (tsscds) [11] | Implicit via QM methods | Accelerated molecular dynamics and graph theory | Discovers mechanisms with minimal human intervention |

Practical Implementation and Experimental Protocols

Implementing Mass Conservation as a Hard Constraint

For researchers implementing mass conservation in existing models, the projection-based nudging method provides a practical, post-hoc correction. The protocol involves:

- Define the Composition Matrix: Construct the m × p composition matrix M where entries represent the number of atoms of each element p in each chemical species m.

- Compute the Correction Matrix: Calculate the projection matrix M_corr using the formula: M_corr = I - M(M^T M)^-1 M^T where I is the identity matrix [7].

- Apply the Correction: For any model prediction ΔC' (representing concentration changes or tendencies), the mass-conserving solution is obtained as: ΔC = M_corr × ΔC'

- Species-Weighted Extension: For systems with varying uncertainty across species, implement a weighted version that considers the uncertainty and magnitude of each species, preferentially adjusting species with lower predicted accuracy [7].
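The first three steps of this protocol can be sketched directly in NumPy. The example assumes M has full column rank so that M^T M is invertible, uses a linear solve instead of an explicit inverse for numerical stability, and leaves out the species-weighted extension. The function name `projection_matrix` and the toy H2/O2/H2O system are illustrative choices.

```python
import numpy as np

def projection_matrix(M):
    """M_corr = I - M (M^T M)^-1 M^T, the projector onto the null space of M^T."""
    M = np.asarray(M, dtype=float)
    # solve(M^T M, M^T) evaluates (M^T M)^-1 M^T without forming the inverse.
    return np.eye(M.shape[0]) - M @ np.linalg.solve(M.T @ M, M.T)

# Species (H2, O2, H2O) by elements (H, O).
M = np.array([[2, 0], [0, 2], [2, 1]], dtype=float)
M_corr = projection_matrix(M)

# A slightly unphysical model prediction of concentration changes.
delta_c_pred = np.array([-2.1, -0.9, 2.0])
delta_c = M_corr @ delta_c_pred       # nearest mass-conserving solution

residual_before = M.T @ delta_c_pred  # nonzero: atoms are not balanced
residual_after = M.T @ delta_c        # zero up to round-off
```

Because M_corr is an orthogonal projector, applying it twice changes nothing further, and a prediction that already conserves mass passes through unaltered.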

Workflow for Electron-Conserving Reaction Prediction

The FlowER protocol for predicting chemical reactions while conserving electrons involves:

- Representation: Convert molecular structures into a bond-electron matrix that explicitly represents bonds and lone electron pairs.

- Flow Matching: Employ flow matching techniques to model the redistribution of electrons throughout the reaction process.

- Constraint Enforcement: Maintain nonzero values in the matrix to represent bonds or lone electron pairs and zeros to represent their absence, ensuring conservation of both atoms and electrons throughout the transformation [8].

The following diagram illustrates a comprehensive workflow for implementing these conservation principles in AI-driven reaction exploration:

Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Computational Tools for Conservation-Grounded AI

| Tool/Reagent | Type | Function/Purpose | Implementation Notes |
|---|---|---|---|
| Composition Matrix (M) [7] | Mathematical Framework | Encodes elemental composition of all species | Foundation for mass conservation constraints |
| Bond-Electron Matrix [8] | Representation Scheme | Tracks electrons and bonds explicitly | Ensures electron conservation in reactions |
| Projection Matrix (M_corr) [7] | Computational Operator | Nudges predictions to mass-conserving solutions | Can be weighted by species uncertainty |
| Graph Theory Algorithms [11] | Analysis Tool | Identifies reaction pathways and connectivity | Uses adjacency matrices to track bond changes |
| Quantum Chemistry Codes [12] | Validation Tool | Provides benchmark energies and structures | DFT, coupled-cluster, or semiempirical methods |
| Automated Exploration Software [13] | Discovery Platform | Systematically explores reaction mechanisms | Tools like CHEMOTON, SCINE, tsscds |

Advanced Exploration and Steering of Chemical Reaction Networks

For complex chemical systems, particularly in catalysis and drug discovery, merely predicting single reactions is insufficient. Researchers need tools to explore entire reaction networks while maintaining physical constraints. The STEERING WHEEL algorithm addresses this challenge by providing human-machine collaboration for exploring chemical reaction networks [13]. This approach alternates between network expansion steps (which add new calculations and results to a growing reaction network) and selection steps (which choose subsets of structures to limit combinatorial explosion) [13]. The following diagram illustrates this interactive exploration process:

This guided approach is particularly valuable for transition metal catalysis and complex organic transformations, where the reaction space is vast and a brute-force exploration is computationally unfeasible [13]. By combining human chemical intuition with automated exploration, researchers can efficiently map out relevant regions of chemical space while maintaining physical constraints throughout the process.
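The alternation between expansion and selection can be sketched as a simple loop. This is a toy illustration of the control flow only, not the STEERING WHEEL implementation: the `expand` and `select` callables are hypothetical stand-ins for quantum chemical exploration and for the operator's choice of which structures to pursue.

```python
def explore(seeds, expand, select, rounds):
    """Alternate network expansion with a selection step that prunes the frontier."""
    network = {seed: [] for seed in seeds}   # node -> products reached from it
    frontier = list(seeds)
    for _ in range(rounds):
        # Expansion step: run the (expensive) exploration on the chosen structures.
        new_nodes = []
        for node in frontier:
            for child in expand(node):
                network[node].append(child)
                if child not in network:
                    network[child] = []
                    new_nodes.append(child)
        # Selection step: keep only a subset to limit combinatorial growth.
        frontier = select(new_nodes)
    return network

# Toy stand-ins: every structure yields three products; the "operator" keeps two.
def toy_expand(node):
    return [node + suffix for suffix in "abc"]

def toy_select(nodes):
    return sorted(nodes)[:2]

steered = explore(["R"], toy_expand, toy_select, rounds=3)
unsteered = explore(["R"], toy_expand, lambda nodes: nodes, rounds=3)
```

In this toy setting three rounds visit 16 structures with selection and 40 without, and the gap widens geometrically with each further round.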

The integration of mass and electron conservation principles into AI frameworks for chemical prediction is not merely a theoretical enhancement but a practical necessity for producing scientifically valid results. As computational chemistry continues to embrace data-driven methods, the fundamental laws of physics must serve as the immutable foundation upon which these models are built. The methodologies surveyed here—from projection-based nudging to electron-conserving generative models and guided network exploration—provide researchers with powerful tools to ensure their AI systems remain grounded in physical reality. For drug development professionals, materials scientists, and chemical researchers, adopting these constraint-based approaches is critical for accelerating discovery while maintaining scientific rigor in the computational exploration of chemical reaction mechanisms.

The accurate prediction of chemical reaction outcomes represents a cornerstone of modern chemical research, with profound implications for drug discovery, materials science, and sustainable chemical synthesis. For decades, computational chemists have sought to develop models that can reliably forecast the products and pathways of chemical transformations. However, many data-driven approaches have struggled with a fundamental limitation: their inability to consistently obey the laws of physics, particularly the conservation of mass and electrons. This violation of physical constraints has resulted in what researchers term "hallucinatory failure modes," where models predict chemically impossible structures with atoms appearing or disappearing spontaneously [14] [8]. Such limitations have restricted the practical utility of computational tools in real-world discovery pipelines.

The recent introduction of FlowER (Flow matching for Electron Redistribution) by MIT researchers represents a paradigm shift in this landscape. By grounding predictions in the physical reality of electron movement through bond-electron matrices, this approach enforces strict conservation laws while maintaining predictive accuracy [14] [8] [15]. This technical guide examines the core innovations of this methodology, its experimental validation, and its implications for computational exploration of chemical reaction mechanisms.

Core Innovation: Bond-Electron Matrix Representation

Theoretical Foundation and Historical Context

The bond-electron (BE) matrix framework employed in FlowER has its roots in work from the 1970s by chemist Ivar Ugi, who developed this representation to systematically track electrons in chemical systems [8] [16]. This approach encodes atomic identities and their electron configurations in a compact matrix format where:

- Nonzero values represent bonds or lone electron pairs

- Zeros indicate the absence of bonding or lone pair electrons [8] [17]

This mathematical representation naturally embeds two critical conservation principles directly into the model's architecture: (1) conservation of all atoms and (2) conservation of all electrons [14]. The BE matrix directly reflects the conventions of arrow-pushing diagrams that chemists have used for generations to visualize reaction mechanisms, creating a bridge between traditional chemical intuition and modern machine learning approaches [14].

From String Transformation to Electron Redistribution

Traditional sequence-based models treat chemical reactions as string transformations—converting reactant SMILES strings to product SMILES strings through pattern recognition [16] [15]. This approach fundamentally disregards the physical entities underlying the transformations. As researchers noted, "if you don't conserve the tokens, the LLM model starts to make new atoms, or deletes atoms in the reaction," resulting in predictions that resemble "alchemy" rather than scientifically grounded chemistry [8].

FlowER recasts this problem entirely by modeling chemical reactivity as "a generative process of electron redistribution" [14] [18]. Instead of treating atoms as tokens in a string, the system explicitly tracks electron movement throughout the reaction process, ensuring that predictions align with physical reality [14]. The matrix representation enables this by providing a complete description of covalent bonding and lone pairs at any pseudo-timepoint between reactants (t=0) and products (t=1) [14].

Table 1: Comparison of Chemical Reaction Representation Approaches

| Representation | Fundamental Unit | Conservation Enforcement | Interpretability |
|---|---|---|---|
| SMILES Strings | Character tokens | None inherent; frequently violated | Low; black-box transformation |
| Graph Edits | Bond changes | Partial; often atom-only | Medium; shows bond changes but not electron movement |
| Bond-Electron Matrix | Electrons and atoms | Built into the architecture; exact conservation | High; aligns with arrow-pushing mechanisms |

FlowER Architecture: Implementing Physical Constraints

Flow Matching Framework

FlowER employs a modern deep generative framework called flow matching, which generalizes diffusion-based approaches while offering faster inference [14]. This framework formalizes electron movement as the transformation of a probability distribution of electron localization from the reactants' state to the products' state [14]. The model learns to analyze any intermediate state between reactants and products by featurizing the BE matrix and atom identities at pseudo-timepoints between t=0 (reactants) and t=1 (products) [14].

At its core, FlowER utilizes a graph transformer architecture with a multi-headed attention mechanism that operates on the BE matrix representation [14]. The model predicts electron movements analogous to partial arrow-pushing diagrams, which are then applied to update the BE matrix for subsequent timepoints. This recursive prediction yields a complete reaction mechanism step-by-step while ensuring each intermediate state adheres to strict conservation principles [14].

The ΔBE Matrix and Electron Conservation

The central prediction of FlowER is the ΔBE matrix, which captures changes in electron configurations with a net sum of zero, thereby enforcing exact electron conservation [14]. This approach directly reflects the conventions of arrow-pushing diagrams, providing predictions that align with how chemists visualize and rationalize reaction mechanisms [14]. The model further distinguishes between lone pair and bond electron distributions, capturing the nuanced roles of electrons in chemical bonding and reactivity [14].
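As a minimal sketch of this bookkeeping, the snippet below builds BE matrices for a toy proton transfer (hydroxide plus a proton giving water) and checks that the resulting ΔBE matrix sums to zero. It assumes the classic Ugi convention, in which diagonal entries count non-bonding valence electrons and off-diagonal entries hold bond orders; FlowER's internal encoding may differ, and all variable names are illustrative.

```python
import numpy as np

# Atom order: O, H1, H2.
# Assumed convention: diagonal = non-bonding valence electrons on the atom,
# off-diagonal = bond order between atoms i and j (symmetric).
be_reactants = np.array([
    [6, 1, 0],  # hydroxide O: three lone pairs, one bond to H1
    [1, 0, 0],  # H1 bonded to O
    [0, 0, 0],  # H2 is a bare proton
])
be_products = np.array([
    [4, 1, 1],  # water O: two lone pairs, bonds to H1 and H2
    [1, 0, 0],
    [1, 0, 0],
])

# The reaction is fully described by the change in the BE matrix.
delta_be = be_products - be_reactants

# Each single bond appears twice (i,j and j,i), so the sum of all entries
# equals the total number of valence electrons.
total_reactant = int(be_reactants.sum())
total_product = int(be_products.sum())

print(delta_be)
print("valence electrons:", total_reactant, "->", total_product)
```

Here one oxygen lone pair (a change of -2 on the diagonal) becomes the new O-H bond (a change of +1 in each of the two symmetric off-diagonal entries), which is the matrix counterpart of a single curved arrow.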

The following diagram illustrates the core workflow of the FlowER model for predicting reaction mechanisms through electron redistribution:

Diagram 1: FlowER model workflow for electron-conserving reaction prediction

Experimental Protocol and Validation

Training Methodology and Dataset Curation

To train FlowER, the research team imputed mechanistic pathways for a subset of the USPTO-Full dataset containing approximately 1.1 million experimentally demonstrated reactions from United States Patent and Trademark Office (USPTO) patents [14]. This dataset was processed using 1,220 expert-curated reaction templates constructed for 252 well-described reaction classes, yielding a total of 1.4 million elementary reaction steps [14].

Following the standard training procedure for conditional flow matching, the team used interpolative trajectories sampled between reactant and product BE matrices as input, with the difference in reactant-product BE matrices serving as ground truth during model training [14]. This approach allowed the model to learn the continuous process of electron redistribution while maintaining physical constraints throughout the transformation.
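The training signal described above can be sketched in a few lines. The example assumes a straight-line interpolation between reactant and product BE matrices, which is the common choice in conditional flow matching; the source does not specify FlowER's exact interpolation or loss, and the function names are hypothetical.

```python
import numpy as np

def interpolate_be(be_reactant, be_product, t):
    """Point on the straight-line path: t=0 gives reactants, t=1 gives products."""
    return (1.0 - t) * be_reactant + t * be_product

def flow_matching_loss(predicted_velocity, be_reactant, be_product):
    """Mean squared error against the constant target (product - reactant)."""
    target = be_product - be_reactant
    return float(np.mean((predicted_velocity - target) ** 2))

# Toy proton transfer (atom order O, H1, H2), assumed Ugi-style BE matrices.
be_reactant = np.array([[6.0, 1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 0.0]])
be_product = np.array([[4.0, 1.0, 1.0], [1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])

rng = np.random.default_rng(0)
t = rng.uniform()                      # random pseudo-timepoint in [0, 1)
x_t = interpolate_be(be_reactant, be_product, t)

# A model that predicts the exact reactant-product difference has zero loss.
perfect_loss = flow_matching_loss(be_product - be_reactant, be_reactant, be_product)
print(x_t.sum(), perfect_loss)
```

Because both endpoints carry the same electron count, every interpolated state along this path carries it too, which is one way to see why the representation keeps intermediate states physically consistent.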

Research Reagent Solutions

Table 2: Essential Research Components for FlowER Implementation

| Component | Function | Implementation Details |
|---|---|---|
| USPTO-Full Dataset | Training data source | ~1.1 million patented reactions providing experimental validation [14] |
| Bond-Electron Matrix | Physical representation | Encodes atoms, bonds, and lone pairs while enforcing conservation [14] [8] |
| Graph Transformer | Neural architecture | Processes BE matrix with multi-headed attention [14] |
| Flow Matching | Generative framework | Models probability path from reactants to products [14] |
| Mechanistic Templates | Reaction classification | 1,220 expert-curated templates across 252 reaction classes [14] |

Quantitative Performance Analysis

Conservation Law Adherence

The most significant advantage of FlowER's bond-electron matrix approach appears in its strict adherence to conservation laws. When evaluated at the single elementary step level, FlowER demonstrated remarkable performance compared to sequence-based models:

Table 3: Conservation Law Adherence in Reaction Prediction Models

| Model | Valid SMILES | Heavy Atom Conservation | Full Mass & Electron Conservation |
|---|---|---|---|
| FlowER | ~95% | Enforced by architecture | Enforced by architecture |
| Graph2SMILES (G2S) | 68.9% | 31.4% | 14.3% |
| Graph2SMILES+H | 77.28% | 30.1% | 17.3% |

The data reveal that, despite being trained on balanced mechanistic datasets, sequence generative models violate fundamental conservation laws for the majority of predictions [14]. Only 14.3% to 17.3% of sequence-based predictions maintained complete conservation of heavy atoms, protons, and electrons, whereas FlowER enforces these constraints through its architecture [14].
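To make the heavy-atom criterion concrete, here is a rough sketch of a conservation check on reaction SMILES. It uses a simplified regular-expression tokenizer that covers only the common organic subset and bracket atoms, so it is an illustration rather than a full SMILES parser, and it is not the evaluation code behind the figures above; the helper names are hypothetical.

```python
import re
from collections import Counter

# Simplified atom tokenizer (assumption): bracket atoms such as [OH-] or
# [CH3:1], plus bare organic-subset and aromatic atoms.
_ATOM = re.compile(r"\[([A-Za-z][a-z]?)[^\]]*\]|(Cl|Br|[BCNOPSFI]|[bcnops])")

def heavy_atom_counts(smiles_side):
    """Count non-hydrogen atoms by element on one side of a reaction."""
    counts = Counter()
    for bracket_symbol, bare_symbol in _ATOM.findall(smiles_side):
        symbol = (bracket_symbol or bare_symbol).capitalize()
        if symbol != "H":
            counts[symbol] += 1
    return counts

def conserves_heavy_atoms(reaction_smiles):
    """True if reactants and products contain the same heavy atoms."""
    reactants, products = reaction_smiles.split(">>")
    return heavy_atom_counts(reactants) == heavy_atom_counts(products)

# Fischer esterification written with and without the water by-product.
balanced = conserves_heavy_atoms("CC(=O)O.OCC>>CC(=O)OCC.O")
unbalanced = conserves_heavy_atoms("CC(=O)O.OCC>>CC(=O)OCC")
print(balanced, unbalanced)
```

A production check would rely on a cheminformatics toolkit and would also account for hydrogens and formal charges, which this sketch deliberately ignores.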

Generalization and Data Efficiency

Beyond conservation, FlowER demonstrates impressive generalization capabilities, recovering complete mechanistic sequences with strict mass conservation and learning fundamental chemical principles that connect to expert intuition [14]. The model's physical grounding enables downstream thermodynamic evaluations of reaction feasibility, providing insights beyond mere structural prediction [14].

Perhaps most notably, FlowER achieves remarkable fine-tuning performance on unseen reaction classes with only 32 reaction examples, demonstrating unprecedented sample efficiency for a chemical prediction model [14]. This data-efficient generalization suggests that the model internalizes chemical principles rather than merely memorizing reaction patterns.

Implications for Computational Reaction Mechanism Research

Bridging Mechanistic Understanding and Prediction

FlowER represents a significant advancement in bridging the gap between predictive accuracy and mechanistic understanding in data-driven reaction outcome prediction [14]. By providing explicit electron redistribution pathways alongside product predictions, the model offers interpretable insights that align with chemical intuition [15]. This dual capability addresses a longstanding criticism of "black box" AI models in chemistry, which often fail to explain why a particular product is predicted [14].

The model's probabilistic nature also enables exploration of branching mechanistic pathways, side products, and potential impurities through repeated sampling—a capability that mirrors the reality of chemical systems where multiple pathways often compete [14]. This represents a departure from deterministic prediction models that typically identify only the single most likely outcome.

Limitations and Future Directions

The MIT team has been transparent about FlowER's current limitations, particularly its restricted coverage of reactions involving metals and catalytic cycles [8] [17]. These gaps stem from the training data sourced from patent literature, which contains limited examples of these chemistries [8]. Expansion to encompass organometallic chemistry, catalysis, and electrochemical systems represents an important direction for future development.

Additionally, while the physics-grounded approach is elegant, it also increases model complexity compared to simpler pattern-matching approaches [16]. The scalability of this approach to the vastness of chemical space remains an open question, though the demonstrated sample efficiency in fine-tuning suggests promising extensibility [14] [16].

The bond-electron matrix approach implemented in FlowER represents a fundamental shift in how computational models conceptualize and predict chemical reactivity. By embedding physical constraints directly into the model architecture rather than treating them as optional guidelines, this methodology addresses core limitations that have plagued data-driven chemistry models for decades. The result is a system that not only predicts reaction outcomes but does so through mechanistically interpretable pathways that obey the fundamental laws of chemistry and physics.

As the field progresses toward more sophisticated AI-assisted chemical discovery, approaches like FlowER that prioritize physical realism alongside predictive accuracy will be essential for building trust and utility in real-world applications. The integration of physical principles with data-driven learning represents a promising path toward computational tools that truly understand chemistry rather than merely mimicking its patterns.

The Schrödinger equation serves as the fundamental cornerstone of quantum mechanics, providing the mathematical framework necessary for describing and predicting the behavior of particles at the atomic and subatomic levels. In computational chemistry, this equation enables researchers to move beyond observational chemistry to predictive, first-principles calculations of molecular structure, properties, and reactivity. This technical guide explores the essential role of the Schrödinger equation in the computational exploration of chemical reaction mechanisms, with particular relevance to pharmaceutical research and drug development. By establishing the theoretical foundation and presenting practical methodologies, this work aims to equip researchers with the knowledge to leverage quantum chemical computations in mechanistic studies.

The Schrödinger equation is a partial differential equation that forms the quantum counterpart to Newton's second law in classical mechanics [19]. Named after Erwin Schrödinger who postulated it in 1926, this equation describes how the quantum state of a physical system changes over time [20] [19]. Unlike Newtonian mechanics which predicts definite paths for particles, the Schrödinger equation operates on the wave function, denoted as |Ψ⟩, which contains all the information about a quantum system [19].

In the context of computational chemistry, the time-independent Schrödinger equation is of particular importance for determining the stable states of molecular systems [20]. This formulation appears as an eigenvalue equation:

Ĥ|ψ⟩ = E|ψ⟩

Where Ĥ is the Hamiltonian operator representing the total energy of the system, |ψ⟩ is the wave function of the system, and E is the energy eigenvalue corresponding to that particular state [20]. Solving this equation for a chemical system provides the allowable energy states and electron distributions, which directly determine molecular properties and reactivity [20].

The linearity of the Schrödinger equation is a crucial mathematical property with profound physical implications [19]. If |ψ₁⟩ and |ψ₂⟩ are both possible states of a system, then any linear combination |ψ⟩ = a|ψ₁⟩ + b|ψ₂⟩ is also a valid state [20] [19]. This principle of superposition enables quantum systems to exist in multiple states simultaneously, a phenomenon with significant consequences for molecular behavior and quantum computing applications in chemistry [20].

Mathematical Foundation

Fundamental Forms and Operators

The Schrödinger equation exists in two primary forms: time-dependent and time-independent. The time-dependent Schrödinger equation governs the evolution of quantum systems:

iℏ(∂/∂t)|Ψ(t)⟩ = Ĥ|Ψ(t)⟩

Here, i is the imaginary unit (√-1), ℏ is the reduced Planck constant, and Ĥ is the Hamiltonian operator [19]. For many practical applications in computational chemistry, the time-independent form is more directly useful:

Ĥ|ψₙ⟩ = Eₙ|ψₙ⟩

This eigenvalue equation provides stationary states of the system, where |ψₙ⟩ represents the wave function of the nth stationary state and Eₙ is its corresponding energy [19].

The Hamiltonian operator Ĥ encapsulates the total energy of the system and consists of two fundamental components [20]:

Ĥ = T̂ + V̂

Where T̂ represents the kinetic energy operator and V̂ represents the potential energy operator. For a single particle in one dimension, the kinetic energy operator takes the form -(ℏ²/2m)(∂²/∂x²), while the potential energy operator V(x) depends on the specific system being studied [19].
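A small numerical sketch can make the eigenvalue problem tangible. The example below discretizes Ĥ = T̂ + V̂ on a grid with a finite-difference second derivative and diagonalizes it. The harmonic potential V(x) = x²/2, the atomic units (ℏ = m = 1), and the grid settings are illustrative assumptions chosen because the exact energies (n + 1/2) are known.

```python
import numpy as np

# Grid in atomic units (hbar = m = 1); wide enough that the states decay to ~0.
n_points = 801
x = np.linspace(-8.0, 8.0, n_points)
dx = x[1] - x[0]

# Kinetic operator T = -(1/2) d^2/dx^2 via the central second difference:
# psi'' ~ (psi[i+1] - 2 psi[i] + psi[i-1]) / dx^2
main = np.full(n_points, 1.0 / dx**2)
off = np.full(n_points - 1, -0.5 / dx**2)
kinetic = np.diag(main) + np.diag(off, 1) + np.diag(off, -1)

# Potential operator is diagonal in the position basis.
potential = np.diag(0.5 * x**2)

hamiltonian = kinetic + potential

# eigh handles real symmetric matrices and returns ascending eigenvalues.
energies, states = np.linalg.eigh(hamiltonian)
print(energies[:3])  # close to the exact values 0.5, 1.5, 2.5
```

The same recipe (build a matrix representation of Ĥ, then diagonalize) underlies basis-set quantum chemistry, although molecular codes use atom-centered basis functions rather than a uniform grid.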

Table 1: Key Components of the Schrödinger Equation

| Component | Mathematical Representation | Physical Significance |
|---|---|---|
| Wave Function | \|Ψ⟩ or Ψ(x,t) | Complete description of quantum state; contains all measurable information |
| Hamiltonian Operator | Ĥ = T̂ + V̂ | Total energy of the system |
| Kinetic Energy Operator | -(ℏ²/2m)(∂²/∂x²) | Energy due to particle motion |
| Potential Energy Operator | V(x,t) | Energy from interactions and external fields |
| Probability Density | \|Ψ(x,t)\|² | Probability density for finding the particle at position x at time t |

Physical Interpretation and Observables

The wave function solution to the Schrödinger equation, Ψ(x,t), has a probabilistic interpretation first proposed by Max Born [19]. Specifically, the square of the absolute value of the wave function, |Ψ(x,t)|², defines a probability density function. For a wave function in position space, this means:

Pr(x,t) = |Ψ(x,t)|²

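This expression gives the probability density for finding the particle at position x at time t; integrating it over an interval yields the probability of finding the particle within that interval [19]. This probabilistic nature fundamentally distinguishes quantum mechanics from classical physics.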
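As a worked illustration of the Born rule, the sketch below integrates |ψ|² numerically for the harmonic-oscillator ground state (an assumed example, in atomic units) to obtain the probability of finding the particle between x = -1 and x = 1. For this state the exact answer is erf(1), which the code uses as a cross-check.

```python
import math
import numpy as np

# Ground state of the harmonic oscillator in atomic units (assumed example):
# psi(x) = pi^(-1/4) * exp(-x^2 / 2), already normalized over all space.
x = np.linspace(-1.0, 1.0, 2001)
psi = math.pi ** -0.25 * np.exp(-0.5 * x**2)
density = psi**2                      # Born rule: probability density

# Trapezoidal rule written out explicitly to integrate the density.
dx = x[1] - x[0]
prob_inside = dx * (density.sum() - 0.5 * (density[0] + density[-1]))

exact = math.erf(1.0)                 # analytic result for this state
print(prob_inside, exact)
```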

When the time-independent Schrödinger equation is solved for a bound system, the resulting wave functions represent stationary states with precisely defined energies [20]. These solutions represent the only allowed energy states for the system, which has profound implications for molecular structure and spectroscopy. The quantum superposition principle allows general states to be constructed as linear combinations of these energy eigenstates [20] [19].

The Schrödinger Equation in Computational Chemistry

From Mathematical Formalism to Chemical Insight

In computational chemistry, the Schrödinger equation provides the theoretical foundation for understanding and predicting chemical phenomena at the most fundamental level. The process begins with constructing the molecular Hamiltonian, which incorporates the kinetic energies of all electrons and nuclei, as well as the potential energy terms describing all Coulombic interactions between these charged particles [20].

The complexity of solving the Schrödinger equation increases dramatically with system size [20]. For a hydrogen atom (one electron), an exact solution is possible, but for larger atoms and molecules, the electron-electron repulsion terms make analytical solutions intractable [20]. This challenge has driven the development of sophisticated computational methods, including the Hartree-Fock approach, density functional theory, and quantum Monte Carlo techniques, all of which represent different strategies for approximating solutions to the Schrödinger equation for many-electron systems.

The power of these computational approaches lies in their ability to extract chemically meaningful information from the wave function. For example, the electron density derived from the wave function can be visualized to reveal molecular orbitals, bond critical points, and reaction pathways. Additionally, the energy eigenvalues provide access to thermodynamic properties, while the response of the wave function to external perturbations enables prediction of spectroscopic parameters.

Computational Workflow for Reaction Mechanism Exploration

The application of quantum chemistry to reaction mechanism exploration follows a systematic workflow that transforms molecular structures into mechanistic insights. The diagram below illustrates this process:

Diagram 1: Quantum Chemistry Workflow

Key Methodologies and Protocols

Computational exploration of reaction mechanisms relies on several well-established protocols built upon the Schrödinger equation foundation. The table below summarizes the primary computational methodologies:

Table 2: Computational Methodologies for Reaction Mechanism Studies

| Methodology | Theoretical Basis | Key Applications in Mechanism Research | Computational Cost |
|---|---|---|---|
| Ab Initio Methods | Direct solution of electronic Schrödinger equation with approximate wave function | High-accuracy energy calculations; small system validation | Very High |
| Density Functional Theory (DFT) | Uses electron density rather than wave function as fundamental variable | Geometry optimization; transition state searching; medium-sized systems | Moderate |
| Semi-empirical Methods | Simplified quantum mechanics with empirical parameters | Large system screening; conformational analysis | Low |
| Molecular Mechanics | Classical force fields without electronic structure | Very large systems; protein-ligand interactions | Very Low |

Protocol 1: Transition State Optimization

Initial Structure Preparation: Generate reasonable guess structures for reactants, products, and putative transition state using chemical intuition and analogous systems.

Geometry Optimization: Employ computational methods (typically DFT) to locate stationary points on the potential energy surface through iterative solution of the Schrödinger equation.

Frequency Calculation: Perform vibrational analysis to confirm transition state (one imaginary frequency) versus minimum (all real frequencies).

Intrinsic Reaction Coordinate (IRC) Analysis: Follow the reaction path from the transition state forward to products and backward to reactants to confirm the mechanism.

Energy Calculation: Compute accurate electronic energies for all stationary points using high-level quantum chemical methods.
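The logic of Protocol 1 can be illustrated on a toy two-dimensional potential energy surface. The sketch below is not a quantum chemical calculation: it assumes an analytic double-well surface V(x, y) = (x² - 1)² + y², locates the stationary point between the wells with Newton's method, and confirms it is a first-order saddle by counting negative Hessian eigenvalues (the analogue of a single imaginary frequency).

```python
import numpy as np

def energy(p):
    """Toy double-well surface with minima at (+1, 0) and (-1, 0)."""
    x, y = p
    return (x**2 - 1.0) ** 2 + y**2

def gradient(p):
    x, y = p
    return np.array([4.0 * x * (x**2 - 1.0), 2.0 * y])

def hessian(p):
    x, _ = p
    return np.array([[12.0 * x**2 - 4.0, 0.0], [0.0, 2.0]])

def newton_stationary_point(guess, tol=1e-10, max_iter=50):
    """Newton iterations converge to the nearest stationary point, saddle or minimum."""
    p = np.array(guess, dtype=float)
    for _ in range(max_iter):
        g = gradient(p)
        if np.linalg.norm(g) < tol:
            break
        p = p - np.linalg.solve(hessian(p), g)
    return p

# Start from a rough transition-state guess between the two wells.
ts = newton_stationary_point([0.2, 0.1])

# "Frequency calculation": one negative curvature marks a transition state.
n_negative = int(np.sum(np.linalg.eigvalsh(hessian(ts)) < 0))

# Barrier height relative to the reactant minimum at (1, 0).
barrier = energy(ts) - energy(np.array([1.0, 0.0]))
print(ts, n_negative, barrier)
```

In a real study the energy, gradient, and Hessian would come from an electronic structure code, and an IRC calculation would follow to confirm which minima the saddle point connects.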

Protocol 2: Reaction Pathway Mapping

Reaction Center Identification: Define the atoms directly involved in bond formation and cleavage using techniques such as reaction template extraction [21].

Coordinate Definition: Establish a reaction coordinate describing the progression from reactants to products.

Potential Energy Surface Scan: Calculate energies for structures at regular intervals along the reaction coordinate.

Mechanistic Template Application: Apply expert-coded mechanistic templates to interpret electron movements in chemically meaningful terms [21].

Kinetic Parameter Extraction: Calculate activation energies and rate constants from energy barriers between stationary points.
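For the final step, a common way to turn a barrier into a rate constant is the Eyring equation from transition state theory, k = (k_B T / h) exp(-ΔG‡ / RT). The sketch below assumes a free-energy barrier in kcal/mol, a transmission coefficient of 1, and a unimolecular step; the function name is illustrative.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
H_PLANCK = 6.62607015e-34 # Planck constant, J*s
R_KCAL = 1.987204e-3      # gas constant, kcal/(mol*K)

def eyring_rate(delta_g_kcal, temperature=298.15):
    """First-order rate constant (1/s) from an activation free energy in kcal/mol."""
    prefactor = K_B * temperature / H_PLANCK
    return prefactor * math.exp(-delta_g_kcal / (R_KCAL * temperature))

k_20 = eyring_rate(20.0)
k_18 = eyring_rate(18.0)
speedup = k_18 / k_20     # effect of lowering the barrier by 2 kcal/mol
print(k_20, k_18, speedup)
```

Because the barrier sits in an exponent, a shift of only 2 kcal/mol changes the room-temperature rate by more than an order of magnitude, which is why accurate energies for the stationary points matter so much.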

Applications in Chemical Reaction Mechanisms Research

Mechanistic Pathway Elucidation

The Schrödinger equation enables researchers to move beyond product identification to detailed understanding of reaction mechanisms at the electronic level. Recent advances in large-scale reaction datasets, such as the mech-USPTO-31K dataset containing chemically reasonable arrow-pushing diagrams validated by synthetic chemists, have created new opportunities for mechanism-based reaction prediction [21]. These developments address the critical need for sophisticated chemical models that explicitly capture underlying reaction mechanisms, including step-by-step sequences of electron movements and reactive intermediates [21].

The process of automated mechanistic pathway labeling involves two key steps: reaction template (RT) extraction and mechanistic template (MT) application [21]. Reaction templates are obtained by identifying changed atoms through comparison of chemical environments before and after reactions, then extending to include π-conjugated systems and mechanistically important special groups [21]. Mechanistic templates then describe the actual electron movements in the form of arrow-pushing diagrams, representing attacking and electron-receiving moieties based on chemistry knowledge [21].
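The first step, identifying changed atoms, can be sketched without any cheminformatics library by comparing each mapped atom's bonding environment before and after the reaction. The bond-dictionary representation and function names below are hypothetical simplifications; the published workflow operates on full molecular graphs and also extends the center to conjugated systems and special groups, which this sketch omits.

```python
def atom_environments(bonds):
    """Map each atom (by atom-map number) to its set of (neighbor, bond order)."""
    env = {}
    for (a, b), order in bonds.items():
        env.setdefault(a, set()).add((b, order))
        env.setdefault(b, set()).add((a, order))
    return env

def changed_atoms(reactant_bonds, product_bonds):
    """Atoms whose bonding environment differs between reactants and products."""
    before = atom_environments(reactant_bonds)
    after = atom_environments(product_bonds)
    atoms = set(before) | set(after)
    return {a for a in atoms if before.get(a, set()) != after.get(a, set())}

# Ethyl bromide + hydroxide -> ethanol + bromide.
# Atom map: 1 = CH2 carbon, 2 = Br, 3 = O, 4 = CH3 carbon (hydrogens implicit).
reactant_bonds = {(1, 2): 1, (1, 4): 1}
product_bonds = {(1, 3): 1, (1, 4): 1}

reaction_center = changed_atoms(reactant_bonds, product_bonds)
print(sorted(reaction_center))
```

The methyl carbon (atom 4) keeps the same neighbors and is therefore excluded, while the carbon, bromine, and oxygen that gain or lose a bond form the reaction center.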

Table 3: Key Research Reagent Solutions in Computational Mechanism Studies

| Research Tool | Function | Application Example |

|---|---|---|

| Reaction Template (RT) Libraries | Encodes reaction transformation patterns as computable rules | Automated identification of reaction centers from experimental data [21] |

| Mechanistic Template (MT) Databases | Expert-coded electron movement patterns for common reaction classes | Distinguishing between SN1 and SN2 mechanisms based on chemical environment [21] |

| Quantum Chemistry Software Packages | Numerical solvers for the electronic Schrödinger equation | Calculating transition state geometries and energies for barrier determination |

| Reaction Mechanism Datasets | Curated collections of validated mechanistic pathways | Training machine learning models for reaction outcome prediction [21] |

| Atom-Mapping Algorithms | Automated identification of atom correspondence between reactants and products | Preparing reaction data for mechanistic analysis [21] |

Data Generation and Analysis Framework

The integration of quantum chemistry calculations with automated mechanism generation creates a powerful framework for high-throughput mechanistic studies. The following diagram illustrates this data generation and analysis pipeline:

Diagram 2: Mechanism Analysis Pipeline

This framework addresses significant challenges in computational reaction mechanism research, including the frequent absence of necessary reagents in recorded reaction data [21]. For approximately 60% of reactions in curated datasets, necessary reagents must be added to complete the mechanistic picture [21]. Additionally, the framework incorporates technical maneuverability to capture important mechanistic elements beyond the immediate reaction center, addressing limitations associated with locality constraints [21].

Implications for Drug Development

The application of Schrödinger equation-based computational methods has transformed early-stage drug discovery by providing atomic-level insights into molecular interactions and reactivity. Quantum chemical calculations enable researchers to predict metabolic pathways, assess potential toxicity, and optimize synthetic routes before undertaking costly experimental work.

In pharmaceutical research, understanding reaction mechanisms is crucial for interpreting product formation at the atomic and electronic level [21]. Computational models that explicitly capture underlying reaction mechanisms provide valuable insights into stereochemistry, reaction kinetics, byproduct formation, and other important reaction details [21]. This mechanistic understanding is particularly valuable for predicting drug metabolism and identifying potential reactive metabolites that could cause toxicity.

The development of reliable reaction outcome prediction models based on mechanistic understanding represents an active research frontier [21]. Such models aim to predict the same arrow-pushing diagrams that human chemists would draw, capturing the finer details of electron movements and reactive intermediates that are crucial for comprehensive reaction understanding [21]. As these models improve, they will increasingly support synthetic planning in drug development by identifying viable synthetic pathways to target compounds [21].

The Schrödinger equation provides the essential theoretical foundation for computational chemistry and its application to reaction mechanism research. By enabling first-principles calculations of molecular structure, properties, and reactivity, this fundamental equation has transformed our ability to understand and predict chemical behavior at the most detailed level. The continuing development of computational methods, coupled with growing mechanistic datasets and more accurate quantum chemical models, promises to further enhance our capability to explore chemical reaction spaces and accelerate drug development processes. As computational power increases and algorithms become more sophisticated, the role of Schrödinger equation-based calculations in pharmaceutical research will continue to expand, ultimately enabling more efficient and predictive drug discovery.

A Toolbox for the Digital Chemist: AI, QM, and Hybrid Methods in Action

The computational exploration of chemical reaction mechanisms represents a cornerstone of modern chemical research, with profound implications for drug development, materials science, and synthetic chemistry. Traditional approaches to reaction prediction have often relied on quantum chemical calculations, which are computationally prohibitive for large-scale exploration, or data-driven models that frequently violate fundamental physical laws. The core challenge has been bridging the gap between predictive accuracy and mechanistic understanding. Recent generative artificial intelligence (AI) breakthroughs, specifically Flow Matching for Electron Redistribution (FlowER) and Equivariant Consistency Models (ECTS), are redefining this landscape. These approaches integrate physical constraints directly into their architectures, enabling not only accurate prediction of reaction outcomes but also providing unprecedented insight into the electron-level pathways that govern chemical reactivity.

FlowER: Electron-Conscious Reaction Prediction

Core Principle and Architecture

FlowER recasts reaction prediction as a problem of electron redistribution within the deep generative framework of flow matching [22] [23]. Its foundational innovation lies in explicitly conserving both mass and electrons through the bond-electron (BE) matrix representation, a concept originally developed by chemist Ivar Ugi in the 1970s [8] [24]. This representation uses a matrix where nonzero values represent bonds or lone electron pairs and zeros represent their absence, providing a direct computational analogue for tracking electron movement during reactions [8].

The model employs flow matching to learn a probability path between the electron distribution of reactants and that of products [22]. This approach conceptually aligns with the "arrow-pushing" formalism taught to chemists, where curved arrows show the movement of electrons during bond formation and breakage [25]. By operating directly on the BE matrix, FlowER inherently respects conservation laws that previous models based on SMILES strings or molecular graphs often violated, eliminating "hallucinatory" failure modes where atoms spontaneously appear or disappear [8] [23].

Detailed Methodology and Workflow

The experimental implementation of FlowER involves a carefully designed pipeline that transforms chemical structures into a flow-matched generative process.

Input Representation and Training:

- Input Format: FlowER requires atom-mapped reactions for training, validation, and testing. Each elementary reaction step follows the format `mapped_reaction|sequence_idx` [25].

- Training Data: The model is trained on a combination of subsets from USPTO-FULL, RmechDB, and PmechDB, totaling over a million chemical reactions primarily from U.S. Patent Office databases [25] [8].

- Key Hyperparameters: Critical configuration includes embedding dimension size (`emb_dim`), number of transformer layers (`enc_num_layers`), attention heads (`enc_heads`), and radial basis function parameters for the BE matrix representation (`rbf_low`, `rbf_high`, `rbf_gap`) [25].
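For readers preparing their own data, the line format described above can be parsed with a few lines of Python. This is a hypothetical helper, not part of the FlowER codebase; it assumes one elementary step per line and an integer sequence index, and the example reaction string is made up for illustration.

```python
from typing import NamedTuple

class ElementaryStep(NamedTuple):
    mapped_reaction: str   # atom-mapped reaction SMILES
    sequence_idx: int      # assumed to be an integer position in the mechanism

def parse_step(line):
    """Split a 'mapped_reaction|sequence_idx' line into its two fields."""
    mapped_reaction, sequence_idx = line.strip().rsplit("|", 1)
    if ">>" not in mapped_reaction:
        raise ValueError(f"not a reaction SMILES: {mapped_reaction!r}")
    return ElementaryStep(mapped_reaction, int(sequence_idx))

# Illustrative line only; real training files may differ in detail.
example = "[CH3:1][Br:2].[OH-:3]>>[CH3:1][OH:3].[Br-:2]|0"
step = parse_step(example)
print(step.mapped_reaction, step.sequence_idx)
```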

Inference and Search Protocol:

- Beam Search: For mechanistic exploration, FlowER employs beam search to identify plausible reaction pathways. Users input reactions in the format `reactant>>product1|product2|...` in a specified text file [25].

- Search Parameters: Key beam search configurations include `beam_size` (top-k candidate selection), `nbest` (cutoff for top-k outcomes), `max_depth` (maximum exploration depth), and `chunk_size` (concurrent processing of reactant sets) [25].

- Pathway Visualization: The generated routes can be visualized using the provided `vis_network.ipynb` Jupyter notebook [25].
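To show how such search parameters interact, the following is a generic beam search over a toy reaction network. It is not FlowER's implementation: the `expand` callback stands in for the model's sampled outcomes, the network and probabilities are invented, and the reading of `nbest` (outcomes kept per expansion) and `beam_size` (partial pathways kept per depth) is an assumption.

```python
import math

def beam_search(start, expand, beam_size=2, nbest=2, max_depth=3):
    """Return pathways as (log-probability, [states]) sorted best first."""
    beam = [(0.0, [start])]
    finished = []
    for _ in range(max_depth):
        candidates = []
        for logp, path in beam:
            # Keep only the nbest most probable outcomes of this state.
            outcomes = sorted(expand(path[-1]), key=lambda o: o[1], reverse=True)[:nbest]
            if not outcomes:
                finished.append((logp, path))   # terminal state, nothing to expand
            for nxt, prob in outcomes:
                candidates.append((logp + math.log(prob), path + [nxt]))
        # Keep the beam_size best partial pathways for the next depth.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beam = candidates[:beam_size]
        if not beam:
            break
    finished.extend(beam)                       # pathways cut off by max_depth
    finished.sort(key=lambda c: c[0], reverse=True)
    return finished

# Invented toy network: state -> list of (next state, probability).
TOY_NETWORK = {
    "A": [("B", 0.7), ("C", 0.3)],
    "B": [("D", 0.9), ("E", 0.1)],
    "C": [("D", 1.0)],
}

results = beam_search("A", lambda s: TOY_NETWORK.get(s, []))
for logp, path in results:
    print(" -> ".join(path), round(math.exp(logp), 3))
```

Larger `beam_size` and `max_depth` values explore more competing pathways at higher cost, which is the trade-off these settings control.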

Research Reagent Solutions

Table 1: Essential Computational Resources for FlowER Implementation

| Research Reagent | Function | Specifications |
|---|---|---|
| FlowER Codebase | Core model architecture and training pipelines | Available via GitHub [25] |
| Mechanistic Dataset | Training data with elementary reaction steps | Includes USPTO-FULL, RmechDB, PmechDB [25] |
| Computational Environment | Hardware/software infrastructure | Ubuntu ≥16.04, Conda ≥4.0, GPU with 25GB+ Memory, CUDA ≥12.2 [25] |
| Bond-Electron Matrix | Physical representation of molecules | Encodes bonds and lone electron pairs; ensures mass/electron conservation [8] |

ECTS: Ultra-Fast Exploration of Transition States

Core Principle and Architecture

The Equivariant Consistency Model for Transition State (ECTS) represents a complementary breakthrough focused on the critical structures governing reaction kinetics: transition states (TS) [26]. Traditional transition state exploration requires extensive quantum chemistry calculations, making mechanistic studies computationally prohibitive for complex systems. ECTS addresses this bottleneck by unifying TS generation, energy barrier prediction, and reaction pathway mapping within a single, ultra-fast diffusion framework [26].

The model builds upon consistency model principles, which enable direct mapping from noise to data instead of iterative reverse-time differential equation solving [26]. By incorporating equivariant constraints, ECTS respects the geometric symmetries of molecular systems, ensuring generated structures are physically meaningful. This approach achieves an efficiency at least two orders of magnitude higher than conventional diffusion models while maintaining remarkable accuracy [26].

Detailed Methodology and Workflow

ECTS operates through a streamlined diffusion process that directly generates transition state geometries and associated energy barriers in a single denoising step or a few steps.

Consistency Diffusion Process:

- Mathematical Foundation: ECTS employs a modified probability flow ordinary differential equation (ODE) that transforms the molecular conformation distribution into a tractable noise distribution while maintaining SE(3)-equivariance [26]. This allows direct mapping from noise vectors to transition state structures without iterative reverse-time solving.

- Sampling Efficiency: Where traditional diffusion models require thousands of denoising steps, ECTS achieves comparable quality with only one sampling step, with performance improving further with a few additional iterations [26].
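The SE(3)-equivariance property mentioned above has a simple operational meaning: rotating and translating the input must rotate and translate the output in the same way. The sketch below checks this numerically for a toy coordinate update (pulling atoms toward their centroid), which merely stands in for a network layer; it is not the ECTS architecture.

```python
import numpy as np

def toy_update(coords, step=0.1):
    """Toy equivariant map: move every atom slightly toward the centroid."""
    centroid = coords.mean(axis=0, keepdims=True)
    return coords - step * (coords - centroid)

def random_rotation(rng):
    """Random proper rotation (determinant +1) from a QR decomposition."""
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]   # flip one axis to turn a reflection into a rotation
    return q

rng = np.random.default_rng(0)
coords = rng.normal(size=(5, 3))       # five atoms, Cartesian coordinates
rotation = random_rotation(rng)
shift = rng.normal(size=(1, 3))

# Equivariance: f(R x + t) should equal R f(x) + t.
transformed_then_updated = toy_update(coords @ rotation.T + shift)
updated_then_transformed = toy_update(coords) @ rotation.T + shift
max_gap = float(np.abs(transformed_then_updated - updated_then_transformed).max())
print(max_gap)
```

A model with this property never has to learn that a molecule and its rotated copy are the same object, which is one reason equivariant architectures tend to be data-efficient for geometry generation.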

Performance Metrics:

- Structural Accuracy: Generated TS structures exhibit an exceptionally small error margin of just 0.12 Å root mean square deviation compared to ground truth quantum chemical calculations [26].

- Energy Prediction: Through continuous refinement during denoising, ECTS predicts energy barriers with a median error of merely 2.4 kcal/mol without post-DFT calculations [26].

- Pathway Generation: The model generates complete reaction paths that show strong agreement with true reaction coordinates, enabling mechanistic exploration [26].
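Structural accuracy figures of this kind are typically reported after optimal superposition of the two geometries. As an illustration, the sketch below computes an aligned RMSD with the Kabsch algorithm; whether the cited evaluation used exactly this procedure is an assumption, and the coordinates are invented.

```python
import numpy as np

def kabsch_rmsd(coords_a, coords_b):
    """RMSD between two (N, 3) geometries after optimal rotation and translation."""
    a = coords_a - coords_a.mean(axis=0)          # remove translation
    b = coords_b - coords_b.mean(axis=0)
    u, _, vt = np.linalg.svd(a.T @ b)             # covariance matrix H = A^T B
    d = np.sign(np.linalg.det(vt.T @ u.T))        # guard against reflections
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = a @ rotation.T - b
    return float(np.sqrt((diff**2).sum(axis=1).mean()))

# Invented four-atom reference geometry (angstrom).
reference = np.array([
    [0.0, 0.0, 0.0],
    [1.1, 0.0, 0.0],
    [-0.4, 1.0, 0.0],
    [-0.4, -0.5, 0.9],
])

# Same geometry rotated 40 degrees about z and translated: RMSD should vanish.
theta = np.deg2rad(40.0)
rot_z = np.array([
    [np.cos(theta), -np.sin(theta), 0.0],
    [np.sin(theta), np.cos(theta), 0.0],
    [0.0, 0.0, 1.0],
])
moved = reference @ rot_z.T + np.array([2.0, -1.0, 0.5])
rmsd_same = kabsch_rmsd(reference, moved)

# Displace one atom to mimic a prediction error.
perturbed = moved.copy()
perturbed[3] += np.array([0.3, 0.0, 0.0])
rmsd_perturbed = kabsch_rmsd(reference, perturbed)
print(rmsd_same, rmsd_perturbed)
```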

Research Reagent Solutions

Table 2: Essential Computational Resources for ECTS Implementation

| Research Reagent | Function | Specifications |
|---|---|---|
| ECTS Framework | Transition state generation and pathway exploration | Implements equivariant consistency diffusion [26] |
| Quantum Chemistry Data | Training data with validated transition states | Structures and energies from ab initio calculations [26] |
| Equivariant Networks | SE(3)-equivariant transformer architecture | Encodes Cartesian molecular conformations [26] |
| Consistency Sampler | Ultra-fast sampling from noise to data | Enables single-step generation [26] |

Comparative Analysis and Performance Benchmarks

Quantitative Performance Metrics

Table 3: Performance Comparison of FlowER and ECTS Against Traditional Methods

| Model | Key Innovation | Accuracy Metric | Efficiency Gain | Physical Constraints |
|---|---|---|---|---|
| FlowER | Electron flow matching | Matches/exceeds existing approaches in finding standard mechanistic pathways [22] | Enables rapid pathway search via beam search [25] | Explicit mass and electron conservation [8] |
| ECTS | Consistency diffusion | 0.12 Å RMSD for TS structures; 2.4 kcal/mol median error for barriers [26] | 100x faster than conventional diffusion models [26] | SE(3)-equivariance for geometrically valid structures [26] |
| Traditional AI Models | SMILES-based or graph-based | Limited by hallucinatory failure modes [8] | Varies by approach | Often violate conservation laws [8] |
| Quantum Chemistry | First-principles calculations | Ground truth but computationally limited | Computationally prohibitive for large systems | Physically rigorous but resource-intensive |

Integration Potential for Reaction Discovery

The complementary strengths of FlowER and ECTS suggest powerful integration potential. FlowER provides the electron-level understanding of reaction sequences, while ECTS delivers ultra-fast transition state characterization with kinetic parameters. Together, they form a comprehensive framework for computational reaction exploration:

- Mechanism Elucidation: FlowER can propose plausible electron redistribution pathways, which ECTS can then validate through transition state analysis [22] [26].

- Kinetic Profiling: The energy barriers predicted by ECTS provide critical information about reaction feasibility and rates that complement FlowER's mechanistic insights [26].

- Pathway Optimization: Researchers can use these tools iteratively to explore reaction networks, identify rate-limiting steps, and design improved synthetic routes.

Implementation Guide for Research Applications

Practical Deployment Considerations

For FlowER Implementation:

- Environment Setup: Begin with the GitHub repository `FongMunHong/FlowER` and follow the system requirements (Ubuntu ≥16.04, Conda ≥4.0, GPU with 25 GB+ memory, CUDA ≥12.2) [25].

- Data Preparation: Structure input data according to the required format for elementary reaction steps (`mapped_reaction|sequence_idx`) [25].

- Beam Search Configuration: For reaction exploration, modify the `beam_size`, `nbest`, and `max_depth` parameters in `settings.py` to balance comprehensiveness and computational cost [25].
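To make the trade-off between these three parameters concrete, the following is a minimal, generic beam search sketch. It is not FlowER's implementation: the `beam_search` function, the `expand` callback, and the toy reaction network are hypothetical, and only the parameter names `beam_size`, `nbest`, and `max_depth` are taken from the text above. It assumes each elementary step carries a log-probability-like score, so larger totals are better.

```python
import heapq
from typing import Callable, Iterable, List, Tuple

Path = Tuple[str, ...]


def beam_search(
    start: str,
    expand: Callable[[str], Iterable[Tuple[str, float]]],
    beam_size: int = 5,
    nbest: int = 3,
    max_depth: int = 4,
) -> List[Tuple[float, Path]]:
    """Return up to `nbest` highest-scoring paths of at most `max_depth` steps.

    `expand(state)` yields (next_state, step_score) pairs; scores are summed
    along a path (hypothetical interface, for illustration only).
    """
    beam: List[Tuple[float, Path]] = [(0.0, (start,))]
    finished: List[Tuple[float, Path]] = []
    for _ in range(max_depth):
        candidates: List[Tuple[float, Path]] = []
        for score, path in beam:
            children = list(expand(path[-1]))
            if not children:
                # Dead end: keep the path as a completed candidate.
                finished.append((score, path))
                continue
            for state, step_score in children:
                candidates.append((score + step_score, path + (state,)))
        if not candidates:
            beam = []
            break
        # Keep only the `beam_size` best partial paths at this depth.
        beam = heapq.nlargest(beam_size, candidates)
    finished.extend(beam)  # paths cut off by max_depth
    return heapq.nlargest(nbest, finished)


# Toy network: species names and step scores are made up.
TOY_STEPS = {
    "A": [("B", -0.1), ("C", -1.0)],
    "B": [("D", -0.2)],
    "C": [("D", -0.1)],
}


def toy_expand(state: str):
    return TOY_STEPS.get(state, [])


results = beam_search("A", toy_expand, beam_size=2, nbest=2, max_depth=3)
narrow = beam_search("A", toy_expand, beam_size=1, nbest=2, max_depth=3)
```

In this toy case a wider beam retains the lower-scoring route through `C`, while `beam_size=1` discards it after the first step. A larger `beam_size` or `max_depth` explores more of the network at higher cost, and `nbest` only controls how many finished paths are reported.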

For ECTS Application:

- Transition State Generation: Input molecular graphs to generate candidate transition state structures with associated energy barriers [26].

- Pathway Mapping: Use the generated reaction paths to visualize complete reaction coordinates and identify key intermediates [26].

- Validation: Despite the high accuracy, critical reactions should be validated with targeted quantum chemical calculations [26].

Current Limitations and Development Trajectory

Both technologies represent rapidly evolving frontiers with identifiable growth paths:

FlowER Limitations:

- Chemistry Coverage: The current model has limited exposure to reactions involving certain metals and catalytic cycles, as these are underrepresented in its training data [8] [24].

- Scalability: While efficient for single-step reactions, multi-step pathway prediction remains computationally intensive [25].

ECTS Limitations:

- System Complexity: Performance on large, flexible molecular systems with multiple rotatable bonds requires further validation [26].

- Accuracy Boundaries: While impressive, the 2.4 kcal/mol median error may still be significant for reactions with very small energy differences [26].
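To put the 2.4 kcal/mol figure in perspective, a short calculation helps. In transition state theory the rate constant scales as exp(-ΔG‡/RT), so an error δ in the barrier changes the predicted rate by a factor of exp(δ/RT). The sketch below assumes the barrier error can be treated as a free-energy error at a single temperature; the function name `rate_ratio` is illustrative and not part of ECTS.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def rate_ratio(barrier_error_kcal: float, temperature_k: float = 298.15) -> float:
    """Factor by which a predicted rate constant changes for a given barrier error.

    Follows from k being proportional to exp(-dG/RT) in transition state theory.
    """
    return math.exp(barrier_error_kcal / (R_KCAL * temperature_k))


ratio_at_room_temperature = rate_ratio(2.4)
```

At room temperature this works out to a factor of roughly 50 to 60 in the predicted rate, which is why pathways whose barriers differ by only 1 to 2 kcal/mol cannot be ranked reliably from the model alone.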

Development Roadmap:

- Near-term: Expansion to organometallic and catalytic reactions, improved handling of complex reaction networks [8].

- Long-term: Full integration with automated synthesis planning and experimental validation platforms [8] [24].

FlowER and ECTS embed physical principles directly into generative AI architectures: electron conservation through BE matrices in FlowER and geometric equivariance in ECTS. This addresses a core weakness of earlier data-driven models, which can propose outcomes that violate conservation laws [8]. Together, the two tools let researchers map reaction pathways, predict products, characterize transition states, and estimate kinetic parameters at far lower computational cost than first-principles calculations alone.

For drug development professionals and research scientists, these tools offer practical ways to accelerate reaction discovery and optimization. FlowER is open source, which makes it readily accessible, and the reported performance of both tools supports their use on challenging problems in synthetic chemistry, medicinal chemistry, and materials science. As these frameworks evolve and integrate, they should deepen our understanding of chemical reactivity and speed the design of new molecules with tailored properties.

The computational exploration of chemical reaction mechanisms is a cornerstone of modern chemistry, crucial for advancing catalyst design, understanding reaction kinetics, and accelerating drug development. Traditional methods for mapping Potential Energy Surfaces (PES) to identify intermediates and transition states are often hampered by the combinatorial growth in possible pathways and by substantial computational costs. Integrating Large Language Models (LLMs) into specialized computational programs is emerging as one way to address these challenges. This section examines the role of LLMs in guiding automated reaction pathway exploration, focusing on the ARplorer program. We detail its architecture, the LLM-guided chemical logic behind its efficiency, and the protocols used to apply it to complex multi-step reactions.

ARplorer is an automated computational program, built using Python and Fortran, designed to conduct fast and efficient exploration of reaction pathways for PES studies [4]. Its development addresses a critical limitation in conventional quantum mechanics (QM) and molecular dynamics (MD) approaches: the absence of chemical logic implementation based on existing literature and the need for system-specific modifications [4].

The program operates on a recursive algorithm, with each iteration involving three core steps [4]:

- Active Site Identification: The program identifies active sites and potential bond-breaking locations to set up multiple input molecular structures for analysis.

- Transition State Search & Optimization: It employs a blend of active-learning sampling and potential energy assessments to optimize molecular structures and home in on potential intermediates.

- Pathway Validation via IRC: Intrinsic Reaction Coordinate (IRC) analysis is performed to derive new reaction pathways from optimized structures, eliminate duplicates, and finalize structures for subsequent input [4].

For efficiency, ARplorer combines faster semi-empirical methods (GFN2-xTB) for PES generation with more precise algorithms (e.g., from Gaussian 09) for TS searches, though it maintains the flexibility to switch to Density Functional Theory (DFT) for higher precision when necessary [4].

Workflow Visualization

[Diagram: core recursive workflow of the ARplorer program]

The Core Innovation: LLM-Guided Chemical Logic

The pivotal innovation within ARplorer is its LLM-guided chemical logic, which moves beyond unfiltered PES searches by applying predetermined, chemically plausible biases to refine the search process [4]. This logic is built from two complementary knowledge sources, creating a powerful hybrid system for pathway prediction.

The Scientist's Toolkit: Key Research Reagent Solutions

| Component | Function & Explanation |
|---|---|
| General Chemical Knowledge Base | A curated collection of indexed data from textbooks, databases, and research articles. Serves as the foundational source of established chemical rules for the LLM [4]. |
| Specialized LLM | A fine-tuned large language model that processes the general knowledge base and system-specific SMILES strings to generate targeted chemical logic and reaction patterns [4]. |
| SMILES Strings | Simplified Molecular-Input Line-Entry System; a textual representation of the molecular structure. Serves as the primary input for generating system-specific chemical logic [4]. |
| SMARTS Patterns | An extension of SMILES that describes molecular patterns and functional groups. Used by the LLM to encode reaction rules for identifying plausible reaction sites [4]. |
| Python Pybel Module | A Python module used to compile lists of active atom pairs and potential bond-breaking locations based on the generated chemical logic [4]. |
| GFN2-xTB | A semi-empirical quantum mechanical method. Used for quick generation of potential energy surfaces and large-scale screening within the ARplorer workflow [4]. |
| DFT (e.g., in Gaussian 09) | Density Functional Theory, run in a quantum chemistry package such as Gaussian 09. Used for more precise and detailed quantum mechanical calculations when higher accuracy is required [4]. |

Constructing the Chemical Logic Library

The process of building the chemical logic library is methodical and occurs prior to the autonomous PES exploration. It ensures that ARplorer's searches are grounded in established chemical knowledge.

- General Chemical Logic Generation: The process begins with pre-screened data sources (books, databases, articles) being processed and indexed to form a general chemical knowledge base. This base is refined using prompt engineering to generate general SMARTS patterns, which are formalized representations of chemical rules [4].

- System-Specific Chemical Logic Generation: For a given reaction system, the reactants are converted into SMILES format. This textual representation, along with prompts designed to access the general knowledge base, is fed into a specialized LLM. The LLM then generates targeted chemical logic and SMARTS patterns specific to the system under investigation [4].
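As a rough illustration of how SMARTS-encoded rules can be turned into candidate reactive sites, the sketch below matches patterns with Open Babel's Pybel and pairs up the matched atoms. It is not ARplorer's code. The helper names `find_matches` and `active_atom_pairs` are hypothetical, and so is the convention that the first atom of each match is the reactive centre. `find_matches` requires the Open Babel Python bindings, which are imported lazily so that the pairing helper runs without them.

```python
from typing import Iterable, List, Tuple

Match = Tuple[int, ...]


def find_matches(smiles: str, smarts: str) -> List[Match]:
    """Match a SMARTS pattern against a SMILES string using Pybel.

    Returns tuples of 1-based atom indices, one tuple per match.
    Requires Open Babel's Python bindings (Open Babel 3 import path).
    """
    from openbabel import pybel  # imported lazily; optional dependency

    mol = pybel.readstring("smi", smiles)
    return list(pybel.Smarts(smarts).findall(mol))


def active_atom_pairs(
    site_a: Iterable[Match], site_b: Iterable[Match]
) -> List[Tuple[int, int]]:
    """Pair the first atom of every match in `site_a` with the first atom of
    every match in `site_b` (hypothetical convention for illustration)."""
    atoms_a = {match[0] for match in site_a}
    atoms_b = {match[0] for match in site_b}
    return sorted((a, b) for a in atoms_a for b in atoms_b if a != b)


# Pairing step on hand-written matches (no Open Babel needed):
example_pairs = active_atom_pairs([(1,), (4,)], [(2, 3)])
```

With Open Babel installed, the two match lists would come from calls such as `find_matches(reactant_smiles, nucleophile_smarts)` and `find_matches(reactant_smiles, electrophile_smarts)`, where the SMARTS strings are the patterns generated in the steps above.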

It is critical to emphasize that in the current ARplorer workflow, the LLM serves exclusively as a literature mining tool during this initial knowledge curation phase. The model is not involved in energy evaluation or pathway ranking. All assessments of reaction plausibility and kinetics are performed exclusively via first-principles QM computations, guaranteeing the quantum chemical rigor of the results [4].

Knowledge Curation Visualization

[Diagram: process of building the chemical logic library]

Experimental Protocols & Performance Benchmarks

The integration of LLM-guided chemical logic with active-learning TS sampling and parallel computing creates a highly efficient workflow for mechanistic investigation. The following protocol outlines a typical application of ARplorer for studying a multi-step reaction.

Detailed Experimental Protocol for Multi-Step Pathway Exploration

- System Preparation & Logic Curation

  - Input: Provide the SMILES strings of the reactant molecules.

  - Action: Execute the knowledge curation workflow (see Constructing the Chemical Logic Library) to generate the system-specific chemical logic and SMARTS patterns. This logic is stored in a library for the exploration phase.

- Initialization

  - Input: Load the initial reactant geometry and the curated chemical logic library.

  - Action: The program uses Pybel to identify initial active atom pairs and potential bond-breaking locations based on the chemical logic [4].

- Recursive Pathway Exploration

  - For each intermediate generated:

    - a. Structure Setup: Multiple input structures are configured based on the identified active sites.

    - b. TS Search & Optimization: An iterative TS search is launched using a hybrid GFN2-xTB/Gaussian 09 approach. The active-learning method efficiently homes in on viable transition states [4].

    - c. IRC & Validation: For each optimized TS structure, an IRC calculation is performed in both directions to confirm it connects to the expected reactants and products. New intermediates and products are added to the exploration queue [4].

    - d. Filtering & Duplicate Removal: Energy filters and duplicate checks are applied. Pathways deemed implausible by the chemical logic or with high energy barriers are discarded.

- Termination & Output

  - The process terminates when no new viable pathways are found within a specified energy window.

  - Output: The program returns a comprehensive list of discovered reaction pathways, including all intermediates, transition states, and their associated thermodynamic and kinetic parameters.
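The control flow of this protocol can be sketched as a queue-driven search with an energy filter and duplicate removal. This is only an outline under stated assumptions, not ARplorer's code: the `explore` function and the `find_steps` callback are hypothetical, species are identified by plain string keys (for example, canonical SMILES), and the expensive TS search and IRC validation are replaced by a lookup in a made-up toy network.

```python
from collections import deque
from typing import Callable, Iterable, List, Tuple

Step = Tuple[str, str, float]  # (reactant, product, barrier in kcal/mol)


def explore(
    start: str,
    find_steps: Callable[[str], Iterable[Tuple[str, float]]],
    max_barrier: float,
) -> List[Step]:
    """Explore a reaction network outward from `start`.

    `find_steps(species)` stands in for TS search plus IRC validation and
    yields (product, barrier) pairs. Steps above `max_barrier` are dropped,
    and each species is expanded only once.
    """
    queue = deque([start])
    seen = {start}
    pathways: List[Step] = []
    while queue:  # terminates when no new species remain to expand
        species = queue.popleft()
        for product, barrier in find_steps(species):
            if barrier > max_barrier:
                continue  # energy filter
            pathways.append((species, product, barrier))
            if product not in seen:  # duplicate removal
                seen.add(product)
                queue.append(product)
    return pathways


# Toy network with invented species and barriers.
TOY_NETWORK = {
    "R": [("I1", 12.0), ("X", 45.0)],
    "I1": [("P", 18.0), ("R", 20.0)],
    "P": [],
}

found = explore("R", lambda s: TOY_NETWORK.get(s, []), max_barrier=30.0)
```

Here the 45 kcal/mol step to `X` is filtered out, and the reverse step from `I1` back to `R` is recorded without `R` being expanded a second time. In a real workflow the independent `find_steps` calls are the natural unit to run in parallel.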

Quantitative Performance Data

The effectiveness of ARplorer has been demonstrated through case studies on complex multi-step reactions, including organic cycloadditions, asymmetric Mannich-type reactions, and organometallic Pt-catalyzed reactions [4]. The table below summarizes the key performance enhancements offered by its integrated approach.

Table 1: Performance Advantages of the ARplorer Framework

| Feature | Benefit & Quantitative Impact |
|---|---|
| LLM-Guided Chemical Logic | Applies literature-derived and system-specific chemical rules to filter out implausible pathways, drastically reducing the search space and computational cost compared to unfiltered searches [4]. |
| Active-Learning TS Sampling | Enhances the efficiency and speed of transition state localization, a traditionally time-consuming step in QM calculations [4]. |
| Energy Filter-Assisted Parallel Computing | Minimizes unnecessary computations by running multiple reaction searches in parallel and filtering them based on energetic criteria [4]. |
| Multi-Step Reaction Searches | Demonstrates versatility and effectiveness in automating the exploration of complex, multi-step reaction pathways, as shown in case studies [4]. |

The paradigm of using LLMs for molecular reasoning is also being advanced by other research. For instance, the atom-anchored LLM framework demonstrates how general-purpose LLMs can be guided to perform precise chemical tasks like retrosynthesis without task-specific training [27]. This approach, which anchors chain-of-thought reasoning to unique atomic identifiers in a SMILES string, has achieved high success rates in identifying chemically plausible disconnection sites (≥90%) and final reactants (≥74%) [27].