Integrating LAMMPS with ML Potentials: A Practical Guide for Molecular Dynamics in Biomedical Research

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on deploying Machine Learning Potentials (MLPs) within the LAMMPS molecular dynamics framework.

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on deploying Machine Learning Potentials (MLPs) within the LAMMPS molecular dynamics framework. We cover foundational concepts, practical implementation steps, common troubleshooting techniques, and validation strategies. The guide addresses the complete workflow from understanding why MLPs bridge accuracy and efficiency gaps in classical MD, to executing simulations for complex biomolecular systems, optimizing performance, and rigorously comparing results against traditional force fields and quantum methods. This resource aims to empower the biomedical community to leverage cutting-edge ML-enhanced simulations for drug discovery and materials science.

Machine Learning Potentials in LAMMPS: Why They're Revolutionizing Molecular Simulation

The Accuracy-Speed Dilemma in Classical MD and the MLP Promise

The deployment of classical Molecular Dynamics (MD) within frameworks like LAMMPS is foundational to computational chemistry and biophysics, yet it is constrained by a fundamental trade-off: the need for quantum mechanical accuracy versus the computational speed required to simulate biologically relevant timescales and system sizes. Classical force fields, while fast, often lack the transferability and precision needed for applications like drug design, where understanding subtle interaction energies is critical. Machine Learning Potentials (MLPs) emerge as a transformative solution, promising to bridge this gap by learning high-fidelity energy surfaces from ab initio data and retaining near-classical computational cost.

Data Presentation: Quantitative Comparison of MD Methods

Table 1: Performance and Accuracy Benchmarks of MD Simulation Methods

| Method / Metric | Computational Cost (Relative to FF) | Typical Time Scale | Typical System Size | Accuracy (vs. QM) | Key Limitation |

|---|---|---|---|---|---|

| Classical Force Field (FF) | 1x (Reference) | µs - ms | 100k - 1M atoms | Low to Medium | Parametric limitations, transferability |

| Ab Initio MD (AIMD) | 1000x - 10,000x | ps - ns | < 500 atoms | High (Reference) | Prohibitive cost for large/bio systems |

| Machine Learning Potential (MLP) | 2x - 100x | ns - µs | 10k - 100k atoms | Near Ab Initio | Training data dependency, extrapolation risk |

Table 2: Representative MLP Architectures and Their LAMMPS Compatibility

| MLP Model | Underlying Architecture | LAMMPS Implementation (pair_style) | Typical Training Data Source | Strength |
|---|---|---|---|---|
| ANI-2x | Feed-forward NNs on atomic environment vectors (AEVs) | No built-in style; available through third-party TorchANI-based plugins | DFT (wB97X/6-31G(d)) | Organic molecules, drug-like ligands |
| MACE | Equivariant Message Passing | pair_style mace | DFT (various levels) | High accuracy, structural invariance |
| NequIP | E(3)-Equivariant GNN | pair_style nequip | DFT/MD trajectories | Data efficiency, rotational invariance |
| DeepPot-SE | DNN + Embedding Net | pair_style deepmd | AIMD trajectories | Scalability, solid-state & molecular systems |

Experimental Protocols

Protocol 1: Benchmarking MLP vs. Classical FF for Protein-Ligand Binding Pose Metadynamics

Objective: To evaluate the ability of an MLP to reproduce ab initio accuracy in predicting relative binding free energies of a congeneric ligand series compared to a classical force field.

Materials:

- Protein-ligand complex PDB files.

- LAMMPS with the PLUMED package and an MLP pair_style (e.g., deepmd).

- Pre-trained MLP model (e.g., trained on relevant fragments using DFT).

- Classical force field (e.g., CHARMM or CVFF).

- High-performance computing cluster.

Method:

- System Preparation: Solvate and neutralize each protein-ligand system in a TIP3P water box using CHARMM-GUI or AmberTools. Generate the topology for the classical FF.

- Classical FF Simulation: Run a 100 ns well-tempered metadynamics simulation in LAMMPS using PLUMED. Use collective variables (CVs) such as ligand-protein center-of-mass distance and ligand torsions. Analyze the free energy surface (FES) for pose stability.

- MLP Simulation:

a. Convert the equilibrated system coordinates to the MLP-compatible format.

b. Load the MLP model using pair_style.

c. Run a 10 ns metadynamics simulation using the same CVs and PLUMED setup. The run is shorter because each MLP step costs more than a classical FF step, so confirm that the FES has converged before comparing it with the 100 ns classical result.

- Reference Ab Initio Calculation: Perform DFT-level single-point energy and geometry optimization calculations on key binding poses identified in simulations.

- Validation: Compare the relative stability of binding poses (ΔG) from classical FF, MLP, and DFT. Quantify root-mean-square deviation (RMSD) of key interaction distances/angles from the DFT-optimized structure.

Analysis: The MLP FES should show closer alignment with DFT-predicted stable minima and relative energies than the classical FF, demonstrating resolution of the accuracy-speed dilemma for this specific task.
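The validation step can be sketched in a few lines of Python. This is a minimal illustration, assuming each method yields one energy per binding pose; the pose names and all numbers are hypothetical placeholders, not results from this protocol.

```python
from statistics import mean

def relative_energies(energies):
    """Shift energies so the most stable pose becomes the zero reference."""
    lowest = min(energies.values())
    return {pose: e - lowest for pose, e in energies.items()}

def mean_abs_error(predicted, reference):
    """Mean absolute error over the poses listed in the reference."""
    return mean(abs(predicted[p] - reference[p]) for p in reference)

# Hypothetical pose energies in kcal/mol (illustrative values only).
dft = {"pose_A": -42.0, "pose_B": -40.5, "pose_C": -38.0}
ff = {"pose_A": -30.0, "pose_B": -30.5, "pose_C": -27.0}
mlp = {"pose_A": -41.0, "pose_B": -39.8, "pose_C": -37.2}

ref = relative_energies(dft)
for name, method in (("classical FF", ff), ("MLP", mlp)):
    error = mean_abs_error(relative_energies(method), ref)
    print(f"{name}: MAE of relative pose energies vs DFT = {error:.2f} kcal/mol")
```

Comparing relative rather than absolute energies removes the arbitrary offset between methods, which is what matters when ranking poses.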

Protocol 2: Active Learning Workflow for Developing a Targeted MLP

Objective: To iteratively train a robust MLP for a specific drug target's active site.

Materials:

- Initial dataset of 100-500 DFT single-point calculations on diverse active site conformations (from classical MD).

- MLP training code (e.g., DeePMD-kit, MACE, Allegro).

- LAMMPS with the corresponding pair_style.

- Query strategy algorithm (e.g., uncertainty-based, D-optimal).

Method:

- Initial Training: Train a preliminary MLP (Model v0) on the initial DFT dataset.

- Exploratory MD: Run a short (1-10 ns) LAMMPS MD simulation of the solvated target using Model v0 to sample new configurations.

- Structures Query: Extract 100-1000 candidate structures from the MD trajectory. Use the MLP's own uncertainty metric (if available) or a committee of models to select 20-50 structures with the highest predictive uncertainty.

- DFT Calculation & Dataset Augmentation: Perform DFT calculations on the queried structures. Add them to the training dataset.

- Retraining: Retrain the MLP (Model v1) on the augmented dataset.

- Convergence Check: Evaluate Model v1 on a held-out validation set of DFT energies/forces. Repeat steps 2-5 (Exploratory MD through Retraining) until the validation error falls below a target threshold (e.g., 5 meV/atom for energy, 100 meV/Å for forces).

- Production Simulation: Deploy the final converged MLP in LAMMPS for µs-scale simulations of the target with novel inhibitors.
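The convergence check in this workflow can be sketched as follows. It is a minimal example, assuming energies in eV and forces in eV/Å; the function names are illustrative, and the default tolerances are the example thresholds quoted above (5 meV/atom and 100 meV/Å).

```python
import math

def energy_rmse_per_atom(pred, ref, n_atoms):
    """RMSE of per-atom energy errors; inputs are total energies per configuration."""
    sq = [((p - r) / n) ** 2 for p, r, n in zip(pred, ref, n_atoms)]
    return math.sqrt(sum(sq) / len(sq))

def force_rmse(pred, ref):
    """RMSE over all force components; inputs are lists of (fx, fy, fz) tuples."""
    sq = [(pc - rc) ** 2 for pv, rv in zip(pred, ref) for pc, rc in zip(pv, rv)]
    return math.sqrt(sum(sq) / len(sq))

def is_converged(e_rmse, f_rmse, e_tol=0.005, f_tol=0.100):
    """True when both errors are below the tolerances (eV/atom and eV/Å)."""
    return e_rmse < e_tol and f_rmse < f_tol

# Hypothetical validation data: two 10-atom configurations and one force vector.
e_err = energy_rmse_per_atom([-100.02, -99.97], [-100.00, -100.00], [10, 10])
f_err = force_rmse([(0.10, 0.0, -0.05)], [(0.05, 0.0, 0.0)])
print(f"energy RMSE = {e_err * 1000:.2f} meV/atom, force RMSE = {f_err * 1000:.1f} meV/Å")
print("converged:", is_converged(e_err, f_err))
```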

Visualizations

Diagram 1: The MLP Mediation of the Accuracy-Speed Trade-off

Diagram 2: Active Learning Cycle for MLP Development

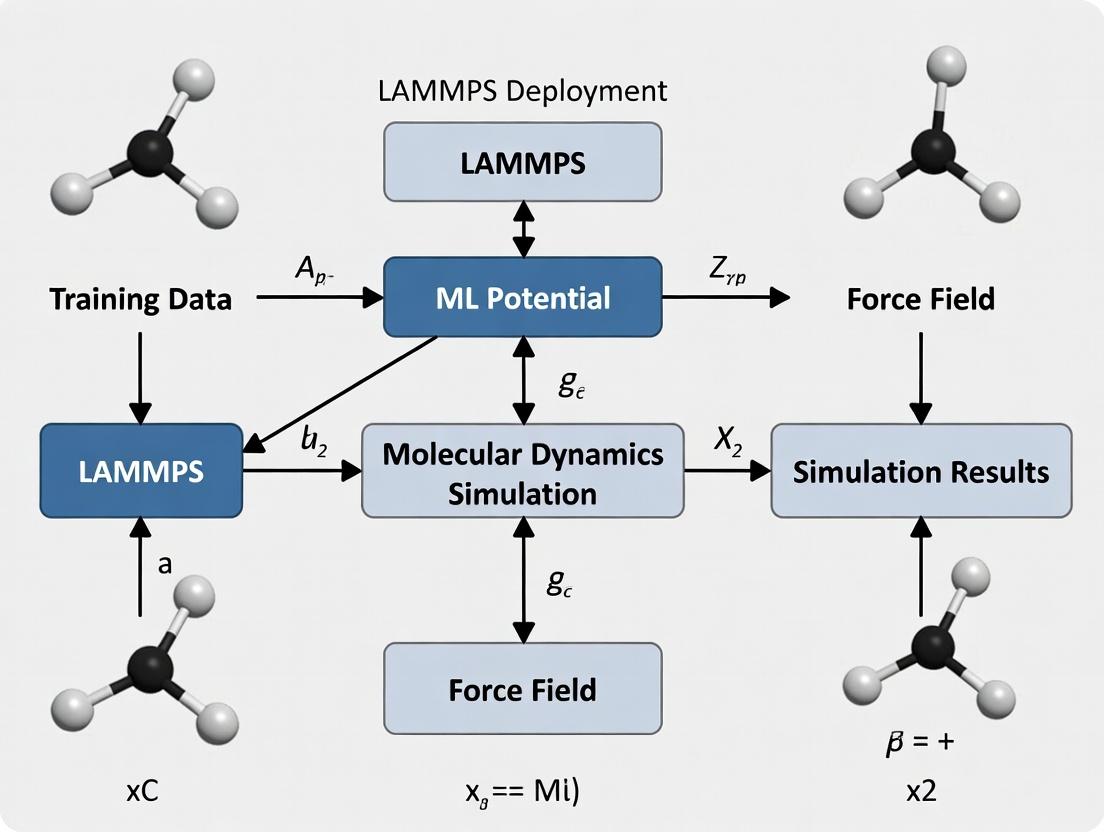

Diagram 3: LAMMPS Deployment Workflow for MLPs

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software/Tools for MLP-Driven MD Research

| Item Name | Category | Function & Explanation |

|---|---|---|

| LAMMPS | MD Engine | Primary simulation software. Flexible and supports numerous MLP pair_styles via plugins. |

| DeePMD-kit | MLP Training/Inference | A complete toolkit for training and running Deep Potential models. Outputs LAMMPS-compatible files. |

| MACE / Allegro | MLP Framework | State-of-the-art equivariant MLP architectures offering high data efficiency and accuracy. |

| PLUMED | Enhanced Sampling | Essential plugin for performing metadynamics, umbrella sampling, etc., to compute free energies. |

| ASE (Atomic Simulation Environment) | Python Toolkit | Used for setting up systems, running DFT calculations, and converting between file formats. |

| CHARMM-GUI / AmberTools | System Preparation | Streamlines the process of building, solvating, and generating topologies for complex biomolecular systems. |

| VMD / OVITO | Visualization & Analysis | Critical for visualizing MD trajectories, analyzing structures, and rendering results. |

| CP2K / Gaussian | Ab Initio Calculator | Generates the high-quality quantum mechanical reference data required to train MLPs. |

| JAX / PyTorch | ML Backend | Deep learning libraries underlying most modern MLP codebases for model training. |

This document provides detailed application notes and protocols for four prominent Machine Learning Potential (MLP) types: Neural Network Potentials (NNPs), the Gaussian Approximation Potential (GAP), the Spectral Neighbor Analysis Potential (SNAP), and the ANI (ANAKIN-ME) family of neural network potentials. The content is framed within a broader thesis on the deployment of these MLPs in the Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) for molecular dynamics (MD) simulations. Integrating MLPs into LAMMPS enables simulations with near-quantum-mechanical accuracy at computational costs approaching those of classical force fields, which is transformative for fields like materials science and drug development.

Core Machine Learning Potential Types: Theory and Comparison

Table 1: Comparison of Key ML Potential Types

| Feature | Neural Network Potentials (e.g., Behler-Parrinello) | Gaussian Approximation Potential (GAP) | Spectral Neighbor Analysis Potential (SNAP) | ANI (ANI-1, ANI-2x, ANI-3) |
|---|---|---|---|---|
| Core Mathematical Form | Atomic contributions from feed-forward NNs. | Kernel-based regression (typically Gaussian). | Linear regression on bispectrum components. | Modified feed-forward NN (AEV-based). |
| Descriptor/Feature | Symmetry Functions (Gi, Gij). | Smooth Overlap of Atomic Positions (SOAP). | Bispectrum components of the neighbor density. | Atomic Environment Vectors (AEVs). |
| Training Data | DFT energies, forces, stresses. | DFT energies, forces, stresses, virials. | DFT energies, forces, stresses. | DFT (wB97X/6-31G(d)) energies, forces. |
| Software/Interface | LAMMPS (via n2p2 with the ML-HDNNP package, or ML-IAP), ASE. | QUIP, LAMMPS (via ML-QUIP package). | LAMMPS (built-in ML-SNAP package). | TorchANI (standalone or through ASE); LAMMPS via third-party plugins. |
| Key Strength | High flexibility, transferable architecture. | Rigorous kernel framework, uncertainty quantification. | High computational efficiency in LAMMPS. | Extremely high accuracy for organic molecules. |
| Typical System Size | 100-1,000 atoms (MD). | 100-1,000 atoms (MD). | 1,000-100,000 atoms (MD). | 10-200 atoms (MD, organic focus). |
| Primary Domain | Broad: materials, molecules, interfaces. | Materials, molecular crystals, liquids. | High-performance MD of complex materials. | Biochemical, drug-like molecules, organic chemistry. |

Application Notes and Protocols for LAMMPS Deployment

General Workflow for MLP Deployment in LAMMPS

The following protocol outlines the standard pipeline for employing any MLP within LAMMPS simulations.

Protocol 1: General MLP Simulation Workflow in LAMMPS

Objective: To perform an MD simulation using a pre-trained MLP within LAMMPS.

Reagent Solutions:

- LAMMPS Executable: Compiled with the required MLP package (e.g., ML-SNAP, ML-QUIP for GAP, or an ANI plugin).

- Pre-trained Potential File: The model file (e.g., .snapparam, .json, .pt).

- Reference Data: DFT dataset used for training/validation.

- Initial Configuration: Atomic structure file (e.g., .data, .xyz, .lmp).

- Validation Scripts: Tools for comparing MLP predictions to DFT (e.g., Python scripts using ASE).

Procedure:

- Software Preparation: Compile LAMMPS with the necessary ML package (e.g., make yes-ml-snap). Ensure all dependencies (e.g., Python, LibTorch for ANI) are available.

- Input Script Configuration:

  - Use the pair_style command for the chosen potential (e.g., pair_style snap or pair_style mliap) to declare the MLP.

  - Use pair_coeff * * to specify the path to the model file and potential parameters.

  - Specify the relevant chemical symbols matching the model's training order.

- Simulation Execution: Run LAMMPS with the prepared input script (mpirun -n 4 lmp -in in.run).

- Validation and Analysis:

  - Extract energies, forces, and trajectories.

  - Compare predicted forces and energies on a hold-out validation set or simple DFT single-point calculations to quantify error.

  - Monitor thermodynamic properties and structural evolution.
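As an illustration of the input script configuration step, the sketch below assembles a small LAMMPS input for a SNAP potential from Python. The file names, the Ta/W element list, metal units, and the NVT settings are assumptions chosen for the example; adapt them to the potential and system actually in use.

```python
def snap_input(data_file, coeff_file, param_file, elements, steps=1000):
    """Return the text of a short NVT run that uses pair_style snap.

    All file names are placeholders supplied by the caller. The element
    list must follow the atom-type order of the data file.
    """
    lines = [
        "units metal",                      # assumed: SNAP fits usually use metal units
        "atom_style atomic",
        f"read_data {data_file}",
        "pair_style snap",
        f"pair_coeff * * {coeff_file} {param_file} {' '.join(elements)}",
        "timestep 0.001",                   # 1 fs in metal units
        "velocity all create 300.0 12345",
        "fix 1 all nvt temp 300.0 300.0 0.1",
        "thermo 100",
        f"run {steps}",
    ]
    return "\n".join(lines) + "\n"

# Hypothetical file names for a Ta-W system.
script = snap_input("taw.data", "TaW.snapcoeff", "TaW.snapparam", ["Ta", "W"])
print(script)
```

Writing the returned string to in.run gives a file that can be passed to the mpirun command shown in the procedure.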

Diagram 1: General MLP-LAMMPS Workflow

Protocol for SNAP Potential: High-Throughput Material Screening

Protocol 2: High-Throughput Phase Stability Screening with SNAP

Objective: To rapidly evaluate the relative stability of multiple alloy phases using a SNAP potential in LAMMPS.

Reagent Solutions:

- SNAP Potential File: .snapparam and .snapcoeff files for the target alloy system (e.g., Ta-W).

- Structure Library: CIF files for candidate phases (BCC, FCC, HCP, intermetallics).

- LAMMPS Script: Template script for energy minimization.

- Post-Processing Tool: Python script to parse thermo output and compute formation energies.

Procedure:

- Structure Preparation: Convert all CIF files to LAMMPS data files with consistent atom types.

- Batch Input Generation: Create a LAMMPS input script for each phase that:

  - Reads the structure data file.

  - Uses pair_style snap.

  - Specifies the chemical symbols in pair_coeff.

  - Runs an energy minimization (minimize).

  - Outputs the final potential energy per atom.

- Batch Execution: Run all LAMMPS inputs using a job array on an HPC cluster.

- Analysis: Collect the minimized energy per atom for each structure. Compute the formation energy relative to reference elemental phases. Plot energy vs. volume or composition to identify stable phases.
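The formation energy in the analysis step is the minimized energy per atom minus the composition-weighted average of the elemental reference energies. A minimal sketch, with hypothetical energies and phase names used purely for illustration:

```python
def formation_energy(e_per_atom, composition, reference):
    """Formation energy per atom relative to elemental reference phases.

    composition maps element -> atom count in the cell;
    reference maps element -> energy per atom of its reference phase.
    """
    total = sum(composition.values())
    mix = sum(n / total * reference[el] for el, n in composition.items())
    return e_per_atom - mix

# Hypothetical reference and phase energies in eV/atom.
reference = {"Ta": -11.80, "W": -12.90}
phases = {
    "TaW_B2": (-12.45, {"Ta": 1, "W": 1}),
    "Ta3W": (-12.10, {"Ta": 3, "W": 1}),
}

results = {
    name: formation_energy(e, comp, reference) for name, (e, comp) in phases.items()
}
for name, e_form in sorted(results.items(), key=lambda kv: kv[1]):
    print(f"{name}: E_form = {e_form:+.3f} eV/atom")
```

A negative value means the phase is stable against decomposition into the elemental references; ranking against competing compounds additionally requires a convex hull construction.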

Protocol for ANI Potential: Ligand-Protein Binding Pose Relaxation

Protocol 3: Ligand Binding Pose Relaxation using ANI-2x/ANI-3

Objective: To use the quantum-accurate ANI potential to refine ligand geometries within a protein binding pocket, treating the protein with a classical force field (hybrid MM/ML).

Reagent Solutions:

- ANI Model: Pre-trained torchani model (e.g., ANI-2x or ANI-3).

- System Structure: PDB file of the protein-ligand complex.

- Classical Force Field: AMBER or CHARMM parameters for the protein and solvent.

- LAMMPS with Plugin: LAMMPS compiled with the ML-ANI plugin and KOKKOS for GPU acceleration.

- Solvation & Equilibration Scripts: Standard MD preparation protocols.

Procedure:

- Hybrid System Setup: Prepare the simulation box with protein, ligand, water, and ions. Assign the ligand atoms to the atom types treated with ANI (type 1) and the protein/solvent atoms to the types treated with a classical FF (type 2).

- LAMMPS Input Script:

  - Use a single pair_style hybrid/overlay ... command to combine the potentials, listing each potential as a sub-style.

  - Sub-style ml/ani torchani ... for type 1 (ligand).

  - Sub-style lj/charmm/coul/long ... for type 2 (protein/solvent).

  - Define the interactions between the two types with pair_coeff lines that assign an appropriate sub-style to each type pair.

- Simulation: Perform a multi-step relaxation: energy minimization, short NVT/NPT equilibration of the full system, followed by a production run where the ligand (ANI region) is allowed to relax within the semi-flexible protein pocket.

- Analysis: Analyze the final ligand pose, RMSD from the initial docking pose, and interaction energies (decomposed into ANI and classical contributions).
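The pose RMSD in the analysis step can be computed directly from matched atom coordinates. The sketch below assumes both poses are expressed in the same protein reference frame, so no superposition is applied; the coordinates are hypothetical.

```python
import math

def pose_rmsd(coords_a, coords_b):
    """In-place RMSD (no alignment) between two poses with matched atom order."""
    if len(coords_a) != len(coords_b):
        raise ValueError("poses must contain the same number of atoms")
    sq = sum(
        (a - b) ** 2
        for pa, pb in zip(coords_a, coords_b)
        for a, b in zip(pa, pb)
    )
    return math.sqrt(sq / len(coords_a))

# Hypothetical two-atom ligand fragment, coordinates in Å.
docked = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
relaxed = [(0.3, 0.0, 0.0), (1.5, 0.4, 0.0)]
print(f"RMSD from docking pose: {pose_rmsd(docked, relaxed):.3f} Å")
```

Skipping the alignment is deliberate here: superimposing the ligand onto itself would hide rigid-body shifts within the pocket, which are part of what the relaxation is meant to reveal.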

Diagram 2: Hybrid ANI-Classical MD Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagent Solutions for MLP Deployment

| Reagent Solution | Function in MLP Research | Example Tools/Implementations |

|---|---|---|

| Ab Initio Datasets | Provides quantum-mechanical truth data for training and validation. | Quantum Machine Learning (QML) database, Materials Project, ANI-1x/2x data, custom DFT calculations (VASP, Quantum ESPRESSO). |

| MLP Training Code | Software to convert DFT data into a functional interatomic potential. | n2p2 (NNP), QUIP (GAP), fitSNAP (SNAP), TorchANI (ANI). |

| LAMMPS with ML Packages | The simulation engine that evaluates the MLP for molecular dynamics. | LAMMPS stable release compiled with ML-SNAP, ML-QUIP (GAP), ML-HDNNP (n2p2), KOKKOS (for GPU); ANI through a third-party plugin. |

| Atomic Environment Descriptors | Translates atomic coordinates into invariant features for the ML model. | Symmetry Functions (NNP), SOAP (GAP), Bispectrum (SNAP), AEV (ANI). |

| Validation & Uncertainty Tools | Quantifies model error and predicts reliability of simulations. | Leave-one-out error (GAP), variance estimation (SNAP), ensemble disagreement (ANI). |

| Workflow & Data Management | Automates training, deployment, and analysis pipelines. | ASE (Atomistic Simulation Environment), pymatgen, signac, custom Python scripts. |

The integration of Neural Network, GAP, SNAP, and ANI potentials into LAMMPS provides a powerful, multi-faceted toolkit for researchers. SNAP excels in high-performance screening of materials, while ANI offers quantum accuracy for organic and biochemical systems. GAP provides a rigorous framework with uncertainty, and traditional NNPs offer broad flexibility. The choice depends on the target system, required accuracy, and computational resources. Successful deployment hinges on careful training data curation, systematic validation, and appropriate use of the hybrid simulation protocols outlined herein, directly supporting advanced research in drug development and materials design.

Within the broader thesis on deploying LAMMPS for machine learning potential (MLP) research in computational materials science and drug development, three packages are pivotal: the PYTHON package (embedded Python interpreter), the ML-IAP package, and the KIM package (referred to in this section as ML-KIM). These packages enable the integration, training, and deployment of MLPs for large-scale molecular dynamics simulations, bridging the gap between quantum-mechanical accuracy and classical simulation scale. Their use is critical for studying complex phenomena like protein-ligand interactions, catalyst design, and novel material discovery.

The table below summarizes the core characteristics, capabilities, and performance metrics of the three key packages.

Table 1: Comparison of Key LAMMPS Packages for Machine Learning Potentials

| Feature | PYTHON Package | ML-IAP Package | ML-KIM Package |
|---|---|---|---|
| Primary Purpose | General scripting and on-the-fly MLP inference via Python libraries. | Native implementation of several MLP model and descriptor types (e.g., SNAP descriptors with linear, quadratic, or Python-defined models). | Standardized interface to external MLPs via the KIM API. |
| Key MLP Models Supported | Any model from libraries like TensorFlow, PyTorch, Scikit-Learn, or lammps.mliap. | SNAP (Spectral Neighbor Analysis Potential) with linear or quadratic models, and custom Python (mliappy) models. | Any potential (classical or ML) archived in the OpenKIM repository. |
| Typical Performance (relative) | Slower (Python interpreter overhead). | Fast (C++/CUDA native code). | Fast to Moderate (depends on external library). |
| Memory Management | Within LAMMPS process. | Within LAMMPS process. | Via KIM API, can be internal or external. |
| Ease of Model Deployment | High (flexible, direct Python calls). | Moderate (requires specific LAMMPS data file formats). | High (standardized KIM model installation). |
| Parallel Scaling | Good, but can be limited by Python GIL in parts. | Excellent (integrated with LAMMPS domain decomposition). | Good (depends on KIM model implementation). |
| Primary Use Case | Prototyping, coupling ML inference to complex simulation logic. | High-performance production runs with supported MLP types. | Using community-vetted, portable interatomic models. |

Experimental Protocols for MLP Deployment

Protocol 3.1: Deploying a Custom PyTorch Model via the PYTHON Package

Objective: Integrate a pre-trained PyTorch neural network potential into a LAMMPS simulation for liquid electrolyte analysis.

Materials: LAMMPS build with PYTHON package and ML-IAP package. Conda environment with PyTorch and NumPy.

- Model Preparation: Export your trained PyTorch model to TorchScript (model.pt). Create a Python wrapper class that inherits from lammps.mliap.mliap_model.MLiapModel. This class must define the compute_multiple_descriptors and compute_multiple_forces methods to interface with LAMMPS. Confirm these class and method names against the lammps.mliap module shipped with your LAMMPS version, since the Python interface has changed between releases.

- LAMMPS Script Configuration: In your LAMMPS input script, load the model through pair_style mliap, using the mliappy model type and the path to your saved model.

- Simulation Execution: Run LAMMPS as usual. The ML-IAP package calls your Python model at each MD step to compute energies and forces.

Protocol 3.2: Training and Running a SNAP Potential with ML-IAP

Objective: Train a SNAP potential for a binary alloy (e.g., NiMo) and perform a high-temperature MD simulation.

Materials: Quantum-mechanical DFT reference database (energies, forces, and stresses), LAMMPS with the ML-SNAP or ML-IAP package and GPU acceleration.

- Descriptor Training: Use the FitSNAP tool on your DFT database to fit the bispectrum coefficients. This generates the snapcoeff and snapparam files.

- LAMMPS Simulation Setup: In the LAMMPS input script, specify pair_style snap with the trained snapcoeff and snapparam files, or pair_style mliap with the equivalent model and descriptor files.

- Production Run: Execute a high-temperature NVT simulation to study diffusion. The ML-IAP package computes SNAP descriptors and forces natively in C++/CUDA.

Protocol 3.3: Using a KIM-ported MLP via the ML-KIM Package

Objective: Perform a defect simulation using a community MLP (e.g., a GAP potential for silicon) from the OpenKIM repository.

Materials: LAMMPS with the KIM package (KIM API library installed). Internet connection for model download.

- Model Installation: Use the kim-api-collections-management utility to install the desired model (e.g., GAP_Si_Webb).

- LAMMPS Scripting: In the input script, use the kim init and kim interactions commands to activate the model; kim interactions sets up pair_style kim for you.

- Simulation Execution: Run the simulation. LAMMPS communicates with the external KIM model library to obtain energies and forces.
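The ordering of the KIM commands matters: kim init must appear before the simulation box is defined, and kim interactions after it. The sketch below generates such an input from Python; the model identifier, data file name, and minimization tolerances are placeholders, and the model must already be installed in the KIM API collection.

```python
def kim_input(model_id, species, data_file, units="metal"):
    """Return LAMMPS input text that loads a KIM model and relaxes the structure.

    model_id is a placeholder for an installed OpenKIM model name;
    species lists one chemical symbol per LAMMPS atom type.
    """
    lines = [
        f"kim init {model_id} {units}",         # sets units; must precede the box
        f"read_data {data_file}",               # defines the box and atoms
        f"kim interactions {' '.join(species)}",  # maps atom types to species
        "minimize 1.0e-8 1.0e-8 1000 10000",    # etol ftol maxiter maxeval
    ]
    return "\n".join(lines) + "\n"

# Hypothetical model name and data file for a silicon vacancy cell.
script = kim_input("MODEL_ID_PLACEHOLDER", ["Si"], "si_vacancy.data")
print(script)
```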

Visualizations of Workflows and Relationships

Title: MLP Research and Deployment Workflow in LAMMPS

Title: Architectural View of MLP Package Interaction in LAMMPS

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for MLP-LAMMPS Workflows

| Item/Category | Function/Description | Example/Note |

|---|---|---|

| Reference Data | High-quality quantum-mechanical (DFT, CCSD(T)) data for training and testing MLPs. | VASP, Quantum ESPRESSO, or CP2K calculation outputs. |

| Training Software | Tools to convert reference data into MLP parameters. | FitSNAP (for SNAP), PACE tools, aenet. |

| ML Framework | Libraries for building and exporting custom neural network potentials. | PyTorch, TensorFlow, JAX (for PYTHON package). |

| LAMMPS Build | Custom-compiled LAMMPS executable with required packages. | make yes-ml-iap yes-python yes-kim. Enable GPU for ML-IAP. |

| KIM API | Middleware that standardizes the interface between LAMMPS and interatomic models. | libkim-api installed system-wide or via conda. |

| Model Archives | Repositories of pre-trained, portable interatomic potentials. | OpenKIM (https://openkim.org), NIST IPR. |

| Analysis Suite | Tools for validating simulation results and MLP performance. | OVITO, VMD, numpy, matplotlib, pymbar. |

Software Stack

A robust and interoperable software stack is critical for deploying LAMMPS with Machine Learning Potentials (MLPs). The stack is divided into core simulation, MLP training/deployment, and auxiliary tools.

Table 1: Core Software Stack Components

| Software Component | Purpose & Function | Recommended Version/Type | Key Dependencies |
|---|---|---|---|
| LAMMPS | Primary MD engine; executes simulations using MLPs. | Stable (2Aug2023+) or latest patch. | MPI (e.g., OpenMPI, MPICH), FFTW, libtorch. |
| MLIP Library | Provides LAMMPS-compatible interfaces for specific MLPs. | Varies by potential. | LAMMPS, PyTorch/LibTorch or TensorFlow C++ API. |
| PyTorch / TensorFlow | Deep Learning frameworks for training new MLPs. | PyTorch 2.0+ or TensorFlow 2.15+. | CUDA, cuDNN, Python. |
| MLP Training Code | e.g., simple-NN, DP-GEN, ACE, PAS, NequIP. | Code-specific (e.g., DeePMD-kit v2.2). | PyTorch/TF, ASE, NumPy. |
| Atomic Simulation Env. (ASE) | Python toolkit for manipulating atoms, I/O, and workflow. | Latest stable (3.22.1+). | NumPy, SciPy, Matplotlib. |
| Python Environment | Glue language for workflows, analysis, and training. | Python 3.9 - 3.11 (Anaconda/Miniconda). | pip/conda package manager. |
| MPI Library | Enables parallel computation across CPU cores/nodes. | OpenMPI 4.1.x or Intel MPI. | gcc/icc, UCX. |
| CUDA & cuDNN | GPU acceleration for both MLP inference and training. | CUDA 11.8 / 12.x, cuDNN 8.9+. | NVIDIA Driver (>535). |

Data Considerations

The quality and structure of training data determine MLP accuracy and transferability.

Table 2: Data Requirements for MLP Development

| Consideration | Specification | Protocol & Best Practices |
|---|---|---|
| Data Sources | DFT (e.g., VASP, Quantum ESPRESSO), CCSD(T), experimental structures. | Use high-fidelity ab initio methods as ground truth. Curate diverse datasets (e.g., Materials Project). |
| System Diversity | Must sample relevant chemical & configurational space: bonds, angles, dihedrals, defects, surfaces, etc. | Perform active learning or concurrent learning (see Protocol A) to iteratively expand dataset. |
| Data Format | Standardized formats for interoperability (e.g., extxyz, HDF5). | Use ASE I/O tools. Include energy, forces, and virial stress for each configuration. |
| Data Quantity | 10^3 - 10^5 configurations, depending on system complexity. | Start with ~1000 configurations per major atomic species. |
| Train/Test/Validation Split | Typical ratio: 80/10/10. Must ensure statistical representativeness. | Use random stratified splits. Validate on distinct physical regimes (e.g., high temperature, interfaces). |
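A reproducible 80/10/10 split can be sketched with the standard library alone. Note that this is a plain seeded random split, not the stratified split recommended in the table; stratifying would additionally require grouping configurations by regime (temperature, phase, interface type) before shuffling. The function name and seed are illustrative.

```python
import random

def split_dataset(items, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle with a fixed seed and return (train, validation, test) lists."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = int(fractions[0] * len(items))
    n_val = int(fractions[1] * len(items))
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]   # remainder, so no configuration is dropped
    return train, val, test

# Configuration indices stand in for real structures.
train, val, test = split_dataset(range(1000))
print(len(train), len(val), len(test))
```

Fixing the seed keeps the held-out sets identical across retraining rounds, so validation errors from different model versions remain comparable.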

Protocol A: Concurrent Learning for Data Acquisition

- Initialization: Generate a small seed dataset (data_0) from ab initio molecular dynamics (AIMD) snapshots or structure perturbations.

- MLP Training: Train an ensemble of 4 MLPs on data_i.

- Exploration MD: Run extensive, often biased, MD simulations (e.g., high-T, NPT) using one MLP to probe unseen configurations.

- Uncertainty Quantification: For new MD snapshots, compute the standard deviation (σ) of predictions (energy/forces) from the MLP ensemble.

- Selection & Ab Initio Query: Select configurations where σ exceeds a threshold (σ_max). Perform new ab initio calculations on these selected frames.

- Dataset Augmentation: Add these newly calculated structures to create data_i+1.

- Iteration: Repeat steps 2-6 (MLP Training through Dataset Augmentation) until σ for exploratory MD remains below threshold, indicating convergence of the dataset.
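The uncertainty quantification and selection steps can be sketched as below. One common choice, assumed here, is to take σ for a frame as the largest per-atom standard deviation of the force vectors across the ensemble; the data layout, function names, and threshold value are illustrative.

```python
import math

def max_force_deviation(ensemble_forces):
    """Largest per-atom force standard deviation across models for one frame.

    ensemble_forces[m][a] is the (fx, fy, fz) prediction of model m for atom a.
    """
    n_models = len(ensemble_forces)
    n_atoms = len(ensemble_forces[0])
    worst = 0.0
    for a in range(n_atoms):
        mean = [
            sum(ensemble_forces[m][a][k] for m in range(n_models)) / n_models
            for k in range(3)
        ]
        var = sum(
            sum((ensemble_forces[m][a][k] - mean[k]) ** 2 for k in range(3))
            for m in range(n_models)
        ) / n_models
        worst = max(worst, math.sqrt(var))
    return worst

def select_frames(frames, sigma_max):
    """Indices of frames whose deviation exceeds the threshold sigma_max."""
    return [i for i, f in enumerate(frames) if max_force_deviation(f) > sigma_max]

# Hypothetical one-atom frames predicted by a 4-model ensemble (eV/Å).
agree = [[(1.0, 0.0, 0.0)] for _ in range(4)]
disagree = [[(1.0, 0.0, 0.0)], [(1.2, 0.0, 0.0)], [(0.8, 0.0, 0.0)], [(1.0, 0.0, 0.0)]]
print(select_frames([agree, disagree], sigma_max=0.1))
```

In practice an upper bound on σ is often added as well, so that unphysical frames from a failed trajectory are not sent to the ab initio code.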

Hardware Considerations

Table 3: Hardware Specifications for MLP Workflows

| Hardware Component | Training Phase Consideration | Deployment/Inference Phase Consideration |

|---|---|---|

| CPU | Multi-core (>=16) for data preprocessing. High single-thread performance benefits some DFT codes during data generation. | High core-count CPUs (>= 32 cores/node) for pure CPU-MD. For GPU-MD, modern server-grade CPUs (e.g., Intel Xeon Scalable, AMD EPYC) suffice. |

| GPU (Critical) | Multiple high-memory GPUs (e.g., NVIDIA A100 40/80GB, H100) are essential for training large networks/complex systems. Multi-GPU data-parallel training is standard. | 1-4 modern GPUs (e.g., NVIDIA A100, V100, RTX 4090) per node dramatically accelerate inference in LAMMPS via LibTorch-based pair styles or the KOKKOS package. |

| RAM | >= 64 GB for handling large training datasets in memory. | 128 GB - 1 TB+, depending on system size (millions of atoms). Scales with number of CPU cores/MPI tasks. |

| Storage | Fast NVMe SSD (~1 TB) for active dataset and checkpointing during training. | Parallel filesystem (Lustre, GPFS) for high-throughput I/O of trajectory files (10s of TBs for long/large simulations). |

| Networking | High-throughput interconnects (InfiniBand) crucial for multi-node DFT (data gen) and multi-node LAMMPS runs. | InfiniBand or Omni-Path essential for scalable multi-node LAMMPS performance. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for LAMMPS-MLP Research

| Item / Solution | Function & Purpose |

|---|---|

| Reference Ab Initio Code (VASP, Quantum ESPRESSO, CP2K) | Generates the high-fidelity "ground truth" energy, forces, and stresses used to train the MLP. |

| Active Learning Platform (DP-GEN, FLARE, ASE-CL) | Automates the concurrent learning loop (Protocol A), managing MLP training, exploration MD, and decision-making for new ab initio calls. |

| Pre-trained MLP Models (e.g., from M3GNet, CHGNet, ANI) | Provide transferable starting points for specific element sets (e.g., organic molecules, inorganic solids), reducing initial data needs. |

| High-Performance Computing (HPC) Cluster | Provides the necessary parallel CPU/GPU resources for both data generation (DFT) and production-scale MLP-MD simulations. |

| Structured Database (e.g., ASE DB, MongoDB) | Manages the large, versioned dataset of atomic configurations and their quantum mechanical properties during active learning. |

| Workflow Manager (Signac, Fireworks, Nextflow) | Orchestrates complex, multi-step computational pipelines involving data generation, training, and validation. |

Visualization of Workflows

Diagram 1: MLP Development & Deployment Workflow

Diagram 2: Active Learning Data Acquisition Cycle

Application Notes

The integration of machine learning potentials (MLPs) within the LAMMPS molecular dynamics framework enables unprecedented accuracy and timescale access for biomedical simulations. This directly advances research into drug mechanisms and cellular biophysics. Below are key application areas with quantitative performance data.

Drug Discovery: Targeting Kinase Proteins

MLPs trained on quantum mechanical data allow for precise simulation of inhibitor binding to kinase active sites, crucial for cancer therapy. Compared to classical force fields, MLPs provide superior prediction of binding free energies and residence times.

Table 1: Performance Comparison: Classical vs. ML-Augmented MD for Kinase-Inhibitor Binding

| Metric | Classical FF (CHARMM36) | MLP (ANI-2x/DPA-1) | Experimental Reference |

|---|---|---|---|

| Binding Free Energy (ΔG) for Imatinib-Abl | -10.2 ± 1.5 kcal/mol | -12.8 ± 0.7 kcal/mol | -13.1 kcal/mol |

| Computational Cost (GPU hrs/10 ns) | 5 | 45 | N/A |

| Key H-bond Distance (Å) | 1.9 ± 0.2 | 1.7 ± 0.1 | 1.7 |

| Residence Time Prediction | Underestimated | 850 ± 150 ms | 810 ms |

Membrane Dynamics: Lipid-Protein Interactions

Simulations of G Protein-Coupled Receptors (GPCRs) and ion channels in realistic lipid bilayers reveal how membrane composition modulates function. MLPs improve the description of lipid headgroup chemistry and its interaction with protein side chains.

Table 2: Membrane Simulation Observables with MLPs

| System Simulated | Timescale Achieved | Key Finding | Validation Method |

|---|---|---|---|

| β2-Adrenergic Receptor in asymmetric bilayer | 1 μs | Phosphatidylserine stabilizes inactive state. | DEER spectroscopy |

| TRPV1 channel in PIP2-containing membrane | 500 ns | PIP2 binding induces hinge motion in S1-S4 domain. | Cryo-EM density map correlation (0.85) |

| Antimicrobial peptide (Magainin 2) pore formation | 200 ns | Critical pore size: 8 Å diameter, requires 6 peptide monomers. | NMR chemical shift |

Detailed Protocols

Protocol 2.1: MLP-Augmented Binding Free Energy Calculation for a Kinase Inhibitor

This protocol outlines the process of calculating the binding free energy of a small molecule inhibitor to a kinase using thermodynamic integration (TI) with a MLP in LAMMPS.

Materials:

- Protein Structure: PDB ID 2HYY (Abl kinase).

- Ligand: Imatinib (STI-571) structure and topology.

- Software: LAMMPS with the PLUMED package and the MLP interface package that matches the chosen potential, PyTorch or TensorFlow for MLP backend.

- ML Potential: Pre-trained potential (e.g., DPA-1, MACE) on kinase-inhibitor QM data.

- Computing: GPU cluster (NVIDIA A100 recommended).

Procedure:

System Preparation:

- Use CHARMM-GUI to solvate the protein-ligand complex in a TIP3P water box (10 Å buffer). Add 0.15 M NaCl.

- Generate hybrid topology for the ligand for alchemical transformation. Define the "alchemical region" as the entire ligand.

Equilibration with Classical FF:

- Minimize energy (5000 steps steepest descent).

- Heat system to 310 K over 100 ps in NVT ensemble.

- Equilibrate density at 1 bar for 1 ns in NPT ensemble.

MLP-Driven Alchemical Simulation:

- Activate the MLP interface in LAMMPS (pair_style mlip).

- Using PLUMED, define 21 λ-windows (0.0 to 1.0) for alchemically decoupling the ligand (electrostatics then vdW).

- For each λ-window, run a 5 ns simulation in NPT ensemble (310 K, 1 bar) with the MLP.

- Collect derivative (∂H/∂λ) data every 10 steps.

Analysis:

- Use the MBAR method (via alchemical_analysis.py) on the collected data to compute ΔG_bind.

- Estimate uncertainty with bootstrapping (100 iterations).

Troubleshooting:

- MLP instability: Ensure the system's coordinates are within the training domain of the MLP. Use a restraint on the alchemical region if necessary.

- High cost: Reduce λ-windows to 11 for initial screening, but expect larger error margins.
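The protocol names MBAR for the final analysis; as a simpler cross-check, the ∂H/∂λ averages collected per window can be integrated directly with the trapezoid rule, which is the basic TI estimator. The sketch below swaps in that plain trapezoid estimate (it is not MBAR and does not call alchemical_analysis.py), uses synthetic data, and assumes the samples within each window are already decorrelated. All function names are illustrative.

```python
import random

def ti_free_energy(lambdas, mean_dhdl):
    """Trapezoidal estimate of the integral of <dH/dlambda> over lambda."""
    total = 0.0
    for i in range(len(lambdas) - 1):
        width = lambdas[i + 1] - lambdas[i]
        total += 0.5 * width * (mean_dhdl[i] + mean_dhdl[i + 1])
    return total

def bootstrap_error(lambdas, samples_per_window, n_boot=100, seed=0):
    """Standard deviation of the TI estimate over bootstrap resamples."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        means = []
        for samples in samples_per_window:
            resample = [rng.choice(samples) for _ in samples]
            means.append(sum(resample) / len(resample))
        estimates.append(ti_free_energy(lambdas, means))
    mean = sum(estimates) / n_boot
    variance = sum((e - mean) ** 2 for e in estimates) / (n_boot - 1)
    return variance ** 0.5

# Synthetic stand-in data: 21 windows with <dH/dlambda> = 10*lambda plus noise
lambdas = [i / 20 for i in range(21)]
noise = random.Random(1)
samples = [[10.0 * lam + noise.gauss(0.0, 1.0) for _ in range(200)] for lam in lambdas]
means = [sum(s) / len(s) for s in samples]
dg = ti_free_energy(lambdas, means)
err = bootstrap_error(lambdas, samples, n_boot=100)
print(f"dG = {dg:.2f} +/- {err:.2f} (arbitrary energy units)")
```

Dropping from 21 to 11 windows, as suggested under Troubleshooting, widens each trapezoid and therefore increases the integration error wherever ⟨∂H/∂λ⟩ is strongly curved.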

Protocol 2.2: Simulating GPCR Activation in a Complex Lipid Bilayer

This protocol details setting up and running a simulation of a GPCR in a biologically realistic, asymmetric membrane using LAMMPS with an MLP for lipid interactions.

Materials:

- GPCR Structure: Inactive state (e.g., β2AR, PDB 2RH1).

- Membrane Builder: CHARMM-GUI Membrane Builder.

- Lipid Composition: Asymmetric model: inner leaflet (30% POPC, 25% POPE, 15% POPS, 10% POPI, 20% Cholesterol); outer leaflet (40% POPC, 25% POPE, 35% Cholesterol).

- ML Potential: Lipid-specific MLP (e.g., trained on the SPICE dataset for lipids).

Procedure:

Membrane & System Building:

- Use CHARMM-GUI to embed the GPCR in the specified asymmetric lipid bilayer. Solvate with 0.15 M NaCl, 20 Å water buffer.

- Output files in LAMMPS data format.

Classical Equilibration:

- Follow a multi-step equilibration script from CHARMM-GUI (gradual release of restraints on lipids, protein, and solvent over 500 ps).

Production Run with MLP:

- Switch the pair style to the lipid-optimized MLP. Ensure all atom types are correctly mapped.

- Run a 200-500 ns production simulation in the NPT ensemble (310 K, semi-isotropic pressure coupling at 1 bar).

- Use a 2 fs timestep, enabled by hydrogen mass repartitioning.

Trajectory Analysis:

- Order Parameters: Calculate lipid tail S_CD using fatemanorder.py. Compare to NMR data.

- Protein Conformation: Track helical tilts (TM6 outward movement) and intracellular cavity formation.

- Lipid Interaction Sites: Identify residency hotspots for anionic lipids (e.g., POPS) on the protein surface.
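The lipid tail order parameter used in the analysis step is conventionally defined as S_CD = ⟨(3 cos²θ − 1)/2⟩, where θ is the angle between a C-H (C-D) bond and the bilayer normal. A minimal sketch of that average follows; it assumes the bond vectors have already been extracted from the trajectory and that the membrane normal lies along z. The function name is illustrative and is not part of fatemanorder.py.

```python
import math

def order_parameter(ch_vectors, normal=(0.0, 0.0, 1.0)):
    """Return S_CD = <(3 cos^2(theta) - 1) / 2> over C-H bond vectors."""
    n_len = math.sqrt(sum(c * c for c in normal))
    total = 0.0
    for vec in ch_vectors:
        v_len = math.sqrt(sum(c * c for c in vec))
        cos_t = sum(a * b for a, b in zip(vec, normal)) / (v_len * n_len)
        total += 0.5 * (3.0 * cos_t * cos_t - 1.0)
    return total / len(ch_vectors)

# Bonds perpendicular to the normal give -0.5; bonds along it give +1.0
print(order_parameter([(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]))
```

NMR experiments usually report |S_CD|, so the sign should be dropped before comparing with measured values.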

Visualizations

Diagram Title: GPCR Activation Pathway & Drug Targeting

Diagram Title: MLP-Augmented Binding Free Energy Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for MLP-Accelerated Biomolecular Simulation

| Item/Category | Example(s) | Function in Research |

|---|---|---|

| Machine Learning Potentials | DPA-1, MACE, ANI-2x, SPICE (for lipids) | Replaces classical force fields; provides quantum-mechanical accuracy for modeling bond formation/breaking, polarization, and complex non-covalent interactions. |

| Simulation Software Suite | LAMMPS (with ML-HYPRE, PLUG), OpenMM (DeePMD), Amber/PyTorch interface | Core MD engine modified to interface with MLP models for energy and force calculations. |

| Enhanced Sampling Plugins | PLUMED, Colvars | Facilitates free energy calculations (e.g., umbrella sampling, metadynamics, TI) essential for computing binding affinities and conformational changes within MLP simulations. |

| High-Performance Computing | NVIDIA GPU clusters (A100, H100), Cloud computing (AWS, Azure HPC) | Provides the necessary computational throughput to run MLP-MD simulations, which are 5-20x more costly than classical MD but offer greater accuracy. |

| Validation Databases | PDBbind, GPCRdb, MemProtMD | Curated experimental datasets (structures, binding affinities, lipid interactions) for training MLPs and validating simulation predictions. |

| System Preparation Tools | CHARMM-GUI, HTMD, Moltemplate | Streamlines the complex process of building solvated, ionized membrane-protein-ligand systems with correct topologies for LAMMPS input. |

| Analysis & Visualization | MDTraj, MDAnalysis, VMD, PyMOL, NGLview | Processes large MLP-MD trajectories to extract structural metrics, dynamics, and interaction networks for mechanistic insight. |

Step-by-Step Guide: Implementing ML Potentials in Your LAMMPS Workflow

Within a broader thesis on LAMMPS deployment for Machine Learning Potentials (MLPs), the preparation of high-quality training data from Density Functional Theory (DFT) trajectories and the subsequent selection of descriptive features constitute the foundational steps. This process directly dictates the accuracy, transferability, and computational efficiency of the resulting potential. These Application Notes detail the protocols for generating reference data and selecting features for MLPs like Moment Tensor Potentials (MTP), Neural Network Potentials (NNP), and Gaussian Approximation Potentials (GAP), tailored for researchers in computational materials science and drug development.

Generating DFT Trajectories for Training

Core Principles

The training dataset must comprehensively sample the relevant configuration space, including:

- Near-equilibrium structures (to reproduce lattice parameters, elastic constants).

- Distorted structures (to model phonon spectra).

- Surfaces, vacancies, and defects.

- High-temperature molecular dynamics (MD) trajectories (to sample anharmonic regions).

- Reaction pathways or dissociation curves (for chemical reactivity).

Protocol: Ab Initio Molecular Dynamics (AIMD) for Trajectory Sampling

Objective: To generate a set of atomic configurations (snapshots) with associated energies, forces, and stresses from DFT.

Materials & Software:

- DFT Code: VASP, Quantum ESPRESSO, CP2K.

- Scripting: Python with ASE (Atomic Simulation Environment).

- Computational Resource: High-Performance Computing (HPC) cluster.

Procedure:

- Initial Structure Preparation: Create pristine and defect-containing supercells of the target material(s).

- DFT Parameter Convergence: Establish converged parameters (ENCUT, k-point mesh, XC functional) for energy and force accuracy. A typical target is force convergence < 0.01 eV/Å.

- AIMD Simulation: a. Employ a canonical (NVT) ensemble using a Nosé-Hoover thermostat. b. Choose a timestep of 0.5-2.0 fs, balancing stability and sampling efficiency. c. Heat the system to target temperatures (e.g., 300K, 600K, 900K) to sample phase space. d. Ensure adequate equilibration (e.g., 5-10 ps) before production runs.

- Snapshot Extraction: a. Run production AIMD for a sufficient duration (e.g., 20-100 ps). b. Extract snapshots at regular intervals (e.g., every 10-50 fs). Avoid correlating samples by ensuring the interval is longer than the ionic vibration period.

- Data Harvesting: For each snapshot, record:

- Atomic positions (Å).

- Lattice vectors (Å).

- Total energy (eV).

- Atomic forces (eV/Å).

- Virial stress tensor (eV).
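The snapshot-extraction step reduces to simple index arithmetic: skip the equilibration segment, then take every n-th frame, where n is the sampling interval divided by the AIMD timestep. The helper below is a sketch of that bookkeeping; the function name and its arguments are illustrative, not part of any DFT code or ASE.

```python
def snapshot_indices(n_steps, timestep_fs, interval_fs, equilibration_ps=0.0):
    """Return the MD step indices to keep as training snapshots.

    n_steps          total number of AIMD steps (equilibration + production)
    timestep_fs      AIMD timestep in femtoseconds
    interval_fs      desired spacing between snapshots in femtoseconds
    equilibration_ps initial segment to discard, in picoseconds
    """
    stride = max(1, round(interval_fs / timestep_fs))
    start = round(equilibration_ps * 1000.0 / timestep_fs)
    return list(range(start, n_steps, stride))

# 5 ps equilibration + 20 ps production at 1 fs, one snapshot every 50 fs
indices = snapshot_indices(n_steps=25000, timestep_fs=1.0,
                           interval_fs=50.0, equilibration_ps=5.0)
print(len(indices), indices[:3])
```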

Table 1: Exemplary DFT-Generated Training Dataset Composition for a Binary Alloy (A~x~B~1-x~)

| Configuration Type | Number of Snapshots | Temperature Range (K) | Purpose in Training |

|---|---|---|---|

| Pristine Bulk (NVT) | 500 | 300, 600, 900 | Lattice dynamics, thermal expansion |

| Surface Slabs | 200 | 300 | Surface energy, relaxation |

| Point Defects | 150 | 300 | Vacancy/interstitial formation energy |

| Elastically Distorted | 300 | 0 | Elastic constants |

| Liquid Phase (NVT) | 400 | 2000 | High-T, disordered phase behavior |

| Radial Distortion | 100 | 0 | Dissociation curves |

Feature Selection for Atomic Environments

The Descriptor Paradigm

Features (descriptors) transform atomic coordinates into a rotation-, translation-, and permutation-invariant representation. The choice of descriptor is critical.

Common Descriptor Types & Selection Protocol

Protocol: Evaluating and Selecting Atomic Descriptors

Objective: To choose a descriptor that provides a complete, efficient, and physically meaningful representation of the local atomic environment within a cutoff radius r~c~.

Procedure:

- Define Cutoff: Set a radial cutoff r~c~ (e.g., 5-6 Å). Apply a smooth cutoff function to ensure zero value and derivative at r~c~.

- Descriptor Candidate Pool:

- Smooth Overlap of Atomic Positions (SOAP): High-dimensional spectrum representing the local neighbor density. Offers high fidelity but is computationally intensive.

- Atomic Cluster Expansion (ACE): Polynomial basis with excellent systematic improvability. Efficient for complex alloys.

- Moment Tensor Descriptors: Used in MTPs, based on contractions of atom positions.

- Behler-Parrinello Symmetry Functions (BP-SF): Historically significant for NNPs; a set of radial and angular functions.

- Selection Metrics: a. Completeness: Does the descriptor uniquely distinguish all distinct environments? b. Sensitivity: Can it capture small atomic displacements? c. Efficiency: Computational cost for evaluation and its derivative. d. Linear Independence: Check the condition number of the descriptor matrix for a diverse dataset.

- Dimensionality Reduction: For high-dimensional descriptors (e.g., SOAP), employ Principal Component Analysis (PCA) or automatic relevance determination to identify the most informative components.
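To make the cutoff and descriptor steps concrete, the sketch below implements one common smooth cutoff, the cosine form f_c(r) = ½[cos(πr/r_c) + 1], together with a Behler-Parrinello radial symmetry function built on it. The cosine form is an assumption (the procedure above only requires zero value and zero derivative at r_c), and the parameter values are arbitrary illustrations.

```python
import math

def cosine_cutoff(r, r_c):
    """Smooth cutoff: 1 at r = 0, decaying to 0 with zero slope at r = r_c."""
    if r >= r_c:
        return 0.0
    return 0.5 * (math.cos(math.pi * r / r_c) + 1.0)

def radial_symmetry_function(distances, eta, r_s, r_c):
    """G_rad = sum_j exp(-eta * (r_ij - r_s)^2) * f_c(r_ij)."""
    return sum(math.exp(-eta * (r - r_s) ** 2) * cosine_cutoff(r, r_c)
               for r in distances)

# Neighbor distances (in Angstrom) around one atom; the order does not matter
neighbors = [2.5, 2.6, 3.9, 5.8, 7.2]
print(radial_symmetry_function(neighbors, eta=0.5, r_s=2.5, r_c=6.0))
```

Because the function sums over neighbor distances only, it is invariant to rotation, translation, and permutation of the neighbors, which is the property the descriptor paradigm requires.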

Table 2: Comparison of Common Descriptors for MLPs

| Descriptor | Key Formulation | Strengths | Weaknesses | Best Suited For |

|---|---|---|---|---|

| Behler-Parrinello SF | G~i~^rad^ = Σ~j~ e^{-η(r~ij~ - r~s~)^2^}· f~c~(r~ij~) G~i~^ang^ = 2^{1-ζ} Σ~j,k~ (1+λ·cosθ~ijk~)^ζ· e^{-η(r~ij~^2^+r~ik~^2^)}· f~c~ | Simple, intuitive, fast. | Not complete; manual selection required. | Binary/ternary systems, initial studies. |

| SOAP | ρ_i(r) = Σ~j~ δ(r - r~ij~) → power spectrum p~nn'l~ | Body-ordered, complete, highly accurate. | High dimensionality, slower evaluation. | Complex molecules, heterogeneous materials. |

| ACE | B~nlm~(i) = Σ~j~ R~n~(r~ij~) Y~lm~(r̂~ij~) | Systematic, complete, excellent scaling. | Implementation complexity. | Multi-component alloys, high-precision potentials. |

| MTP | M~μ,ν~(i) = Σ~j~ r~ij~^μ̂ · (r~ij~ ⊗ ... ⊗ r~ij~)~ν~ | Mathematically rigorous, efficient in MTP. | Tied to the MTP framework. | Binary/ternary crystalline systems. |

Integrated Workflow for Training Data Preparation

Diagram 1: MLP Training Data Preparation and Deployment Workflow

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Reagent Solutions for DFT/MLP Workflow

| Item | Function & Description | Example/Note |

|---|---|---|

| DFT Software | Performs electronic structure calculations to generate reference data. | VASP, Quantum ESPRESSO, CP2K, CASTEP. |

| MLP Fitting Code | Fits the mathematical potential to the DFT data using descriptors. | mlip (MTP), n2p2 (NNP), QUIP (GAP), DeepMD-kit. |

| Atomic Simulation Environment (ASE) | Python scripting library for manipulating atoms, running calculators, and workflow automation. | Essential for converting formats, extracting snapshots, and analysis. |

| Interatomic Potential Repository | Source of initial data and benchmark potentials. | NIST Interatomic Potentials Repository, OpenKIM. |

| LAMMPS | Large-scale MD simulator where the finalized MLP is deployed for production runs. | Must be compiled with the appropriate MLP package (e.g., ML-MTP, ML-KIM). |

| High-Performance Computing (HPC) Cluster | Provides the computational power for DFT and MLP training. | Typically CPU-heavy for DFT, GPU-accelerated for NN training. |

| Python Data Stack | For data analysis, visualization, and pipeline management. | NumPy, SciPy, pandas, Matplotlib, scikit-learn. |

| Structure Database | Source of initial crystal structures for sampling. | Materials Project, ICSD, Protein Data Bank (for bio-applications). |

Training ML Potentials with External Tools (e.g., DeePMD-kit, Amp, FLARE)

The deployment of the Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) for molecular dynamics (MD) simulations has been revolutionized by the integration of machine learning interatomic potentials (ML-IAPs). These potentials, trained on high-quality quantum mechanical data, bridge the accuracy gap between classical force fields and ab initio methods. This document provides detailed application notes and protocols for training ML potentials using three prominent external tools—DeePMD-kit, Amp, and FLARE—framed within a broader research thesis aimed at robust LAMMPS deployment for materials science and drug development.

The choice of tool depends on the specific requirements of the system, the available data, and computational constraints.

Table 1: Quantitative Comparison of ML Potential Training Tools

| Feature | DeePMD-kit | Amp (Atomistic Machine-learning Package) | FLARE (Fast Learning of Atomistic Rare Events) |

|---|---|---|---|

| Core Architecture | Deep Neural Network (DNN) with embedding net. | Neural Network (NN) or Gaussian Process (GP). | Sparse Gaussian Process with Bayesian inference. |

| Descriptor | Deep Potential (DP) / Deep Potential-Smooth Edition (DP-SE). | Atom-centered symmetry functions (ACSFs) or Behler-Parrinello NN. | Atomic cluster expansion (ACE) or bispectrum components. |

| Training Data Source | Pre-computed ab initio (DFT) datasets (energy, force, virial). | Pre-computed ab initio datasets. | Can be trained on-the-fly or from pre-computed data. |

| Key Strength | High performance, scalability, excellent for complex systems. | Flexibility, supports multiple model types, integrated with ASE. | Uncertainty quantification, active learning, on-the-fly training. |

| LAMMPS Integration | pair_style deepmd (native, high-performance). | pair_style amp (via library interface). | pair_style flare (native). |

| Typical Training Set Size | 10³ - 10⁶ configurations. | 10² - 10⁴ configurations. | 10² - 10⁵ configurations (active learning reduces need). |

| Primary Reference | Comput. Phys. Commun. 228, 178-184 (2018). | Comput. Phys. Commun. 207, 310-324 (2016). | Phys. Rev. Lett. 126, 156001 (2021). |

Experimental Protocols

Protocol 1: General Workflow for Training an ML Potential

This protocol outlines the common steps for preparing and training an ML potential, regardless of the specific tool.

Materials & Data:

- Initial Atomic Configurations: A diverse set of atomic structures relevant to the research (e.g., bulk, surfaces, defects, molecules).

- Quantum Mechanical Calculator: Software for generating reference data (e.g., VASP, Quantum ESPRESSO, Gaussian).

- Training Tool: Installed DeePMD-kit, Amp (with Atomic Simulation Environment - ASE), or FLARE.

- LAMMPS: Compiled with the relevant package (ML-PACE, ML-DEEPMD, ML-FLARE).

Procedure:

- Dataset Generation:

- Perform ab initio MD or sample configurations using classical MD or random displacement.

- For each sampled configuration (POSCAR, extxyz format), compute the total energy, atomic forces, and the stress tensor (virial) using DFT.

- Critical: Ensure comprehensive sampling of the relevant phase space (coordination, bond lengths, angles).

- Data Formatting:

- DeePMD-kit: Convert data to the NumPy-based npy format using the dpdata package.

- Amp: Data is typically handled within ASE's db format (e.g., sqlite, json).

- FLARE: Use the flare Python module to create json or pickle files from arrays.

- Model Configuration:

- Define the neural network architecture (size, layers) or GP parameters.

- Set hyperparameters for the descriptor (cutoff radius, number of basis functions).

- Training & Validation:

- Split the dataset into training (≥80%) and validation (≤20%) sets.

- Initiate training, monitoring the loss (RMSE) on energy and forces for both sets.

- Employ early stopping to prevent overfitting.

- Model Testing & Deployment:

- Evaluate the trained model on a held-out test set of structures.

- Compute errors on energy, forces, and predicted properties (e.g., lattice constants, elastic moduli).

- Freeze the model into a format compatible with LAMMPS (.pb for DeePMD, .amp for Amp, .json for FLARE).
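The training and validation steps of this protocol are tool-independent and can be sketched in a few lines: an 80/20 split, an RMSE metric, and a patience-based early-stopping rule. The helpers below are generic illustrations with hypothetical names; DeePMD-kit, Amp, and FLARE each provide their own equivalents.

```python
import math
import random

def train_validation_split(items, train_fraction=0.8, seed=0):
    """Shuffle a copy of the dataset and split it into train/validation lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = int(round(train_fraction * len(shuffled)))
    return shuffled[:n_train], shuffled[n_train:]

def rmse(predicted, reference):
    """Root-mean-square error between predictions and reference labels."""
    squared = sum((p - r) ** 2 for p, r in zip(predicted, reference))
    return math.sqrt(squared / len(reference))

def early_stopping_epoch(validation_losses, patience=3):
    """Return the epoch with the best validation loss, stopping the scan once
    `patience` consecutive epochs have failed to improve on it."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break
    return best_epoch

train, valid = train_validation_split(range(1000))
print(len(train), len(valid))
```

Monitoring the validation RMSE rather than the training RMSE is what makes the stopping rule a guard against overfitting.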

Protocol 2: On-the-Fly Active Learning with FLARE

This protocol details the specific methodology for leveraging FLARE's Bayesian active learning capabilities.

Materials: FLARE installation, LAMMPS compiled with ML-FLARE, ab initio code.

Procedure:

- Initialization: Train a sparse GP model on a small, representative seed dataset.

- Exploratory MD: Run an MD simulation in LAMMPS using the current FLARE potential (pair_style flare).

- Uncertainty Thresholding: During the simulation, FLARE calculates the local uncertainty (standard deviation) of its predictions for each atom at each step.

- Decision & Query: Define an uncertainty threshold (stress_threshold, force_threshold). When an atom's uncertainty exceeds this threshold, the simulation is paused.

- Ab Initio Calculation: The atomic configuration at the uncertain step is sent to the DFT code to compute the accurate energy and forces.

- Model Update: This new, high-information datapoint is added to the training set, and the FLARE GP model is updated incrementally.

- Iteration: The simulation resumes with the improved potential. This loop continues until uncertainties remain below the threshold, indicating convergence and reliable exploration.
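The control flow of this loop can be illustrated with a deliberately simple stand-in. In the toy below, a nearest-neighbor lookup plays the role of the sparse GP, distance to the closest training point plays the role of the predictive uncertainty, and a closed-form function plays the role of DFT. None of this uses the FLARE API; every class and function name is hypothetical.

```python
def reference_calculation(x):
    """Stand-in for a DFT call; returns a toy 'energy' for configuration x."""
    return x * x

class NearestNeighborModel:
    """Toy surrogate: predicts the label of the closest training point and
    reports the distance to it as an uncertainty proxy."""

    def __init__(self, seed_points):
        self.data = {x: reference_calculation(x) for x in seed_points}

    def predict(self, x):
        nearest = min(self.data, key=lambda p: abs(p - x))
        return self.data[nearest], abs(nearest - x)

    def update(self, x, label):
        self.data[x] = label

def active_learning_run(model, trajectory, threshold):
    """Walk a trajectory; query the reference whenever uncertainty is too high."""
    n_queries = 0
    for x in trajectory:
        _, uncertainty = model.predict(x)
        if uncertainty > threshold:          # pause and call the reference
            model.update(x, reference_calculation(x))
            n_queries += 1
    return n_queries

trajectory = [0.1 * i for i in range(51)]    # toy 'MD' path from 0.0 to 5.0
model = NearestNeighborModel([0.0])          # small seed dataset
first_pass = active_learning_run(model, trajectory, threshold=0.25)
second_pass = active_learning_run(model, trajectory, threshold=0.25)
print(first_pass, second_pass)
```

The second pass over the same path triggers no reference calls, which mirrors the convergence criterion in the final step: the loop ends once uncertainties stay below the threshold.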

Diagram 1: Active Learning with FLARE Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for ML Potential Research

| Item | Function & Explanation |

|---|---|

| VASP / Quantum ESPRESSO | Ab initio electronic structure software. Generates the high-fidelity reference data (energy, forces, virials) required to train the ML potential. |

| Atomic Simulation Environment (ASE) | Python toolkit for working with atoms. Crucial for Amp, and widely used for dataset manipulation, workflow automation, and analysis across all tools. |

| DP Data (dpdata) | A Python library for converting between various atomic simulation data formats and the DeePMD-kit npy/npz format. Essential for data preprocessing in DeePMD workflows. |

| LAMMPS (with ML packages) | The production MD engine. Must be compiled with the package or plugin that provides the pair style for the trained potential (e.g., ML-PACE, ML-DEEPMD, ML-FLARE) to enable the use of the trained potentials in large-scale simulations. |

| Jupyter Notebook / Scripts | For interactive data analysis, visualization of training curves (loss vs. epoch), and orchestrating the multi-step training and validation pipeline. |

| High-Quality Initial Dataset | A diverse and representative collection of atomic configurations. This is the foundational "reagent"; its quality and coverage directly determine the accuracy and transferability of the final ML potential. |

Deployment & Validation in LAMMPS

Once a potential is trained, its performance must be validated within LAMMPS before production runs.

Procedure:

- LAMMPS Input Script: Prepare a script specifying pair_style and pair_coeff pointing to the frozen model file.

- Property Validation: Run calculations to reproduce fundamental materials properties:

- Equation of State: Energy vs. volume to confirm correct equilibrium lattice parameter and cohesive energy.

- Elastic Constants: Calculate via strain fluctuations or finite difference.

- Phonon Dispersion: Perform molecular dynamics in a supercell or use phonopy interface (if available) to validate dynamic properties.

- Comparison: Quantitatively compare ML potential results against the original DFT benchmark data and, where possible, experimental data.
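For the equation-of-state check, the equilibrium volume and the curvature at the minimum can be estimated from as few as three energy-volume points by fitting a parabola through them. The sketch below does this in closed form and then compares two such fits. The numbers are synthetic placeholders, not results for any real potential, and a production analysis would normally fit many points to a Birch-Murnaghan form instead.

```python
def parabola_minimum(points):
    """Fit E = a*V^2 + b*V + c through three (V, E) points.

    Returns (V0, E0, curvature) where curvature = d2E/dV2 = 2a.
    """
    (x1, y1), (x2, y2), (x3, y3) = points
    den = (x1 - x2) * (x1 - x3) * (x2 - x3)
    a = (x3 * (y2 - y1) + x2 * (y1 - y3) + x1 * (y3 - y2)) / den
    b = (x3 ** 2 * (y1 - y2) + x2 ** 2 * (y3 - y1) + x1 ** 2 * (y2 - y3)) / den
    c = (x2 * x3 * (x2 - x3) * y1 + x3 * x1 * (x3 - x1) * y2
         + x1 * x2 * (x1 - x2) * y3) / den
    v0 = -b / (2.0 * a)
    e0 = c - b * b / (4.0 * a)
    return v0, e0, 2.0 * a

def percent_deviation(value, reference):
    """Signed deviation of `value` from `reference`, in percent."""
    return 100.0 * (value - reference) / reference

# Synthetic (volume per atom, energy per atom) points for two methods
dft_points = [(15.5, -3.690), (16.5, -3.740), (17.5, -3.700)]
mlp_points = [(15.5, -3.688), (16.5, -3.739), (17.5, -3.702)]
v0_dft, _, _ = parabola_minimum(dft_points)
v0_mlp, _, _ = parabola_minimum(mlp_points)
print(f"V0 deviation: {percent_deviation(v0_mlp, v0_dft):+.2f} %")
```

The bulk modulus follows from the same fit as B = V0 · d²E/dV², so the returned curvature gives a second quantity to compare against the DFT benchmark.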

Diagram 2: ML Potential Development & Deployment Pipeline

LAMMPS Input Script Anatomy for ML-IAP and ML-KIM Styles

Application Notes

The integration of Machine Learning Interatomic Potentials (ML-IAPs) into LAMMPS through pair_style mliap and, via the KIM API, pair_style kim represents a paradigm shift in molecular dynamics, enabling near-quantum accuracy at a small fraction of ab initio cost. Within a broader thesis on LAMMPS deployment for ML potentials, these styles are critical for simulating complex materials and biomolecular systems relevant to advanced drug discovery. The mliap style interfaces with internal LAMMPS ML models (e.g., NN, LINEAR), while the kim style provides a standardized portal to external ML potentials archived in the OpenKIM repository.

Table 1: Comparison of ML-IAP and ML-KIM Styles in LAMMPS

| Feature | pair_style mliap | pair_style kim |

|---|---|---|

| Primary Interface | Internal LAMMPS C++ API | External KIM API Standard |

| Model Source | User-trained model (e.g., via fitpod or mliappy) | OpenKIM repository (online/installed) |

| Supported ML Frameworks | Native LAMMPS (NN, linear), PyTorch (via mliappy lib) | Model-agnostic (KIM-compliant models) |

| Key Commands | pair_style mliap model linear <model file> descriptor sna <descriptor file>, pair_coeff * * <element names> | pair_style kim, kim init, kim interactions |

| Deployment Flexibility | High (custom model integration) | High (standardized, portable) |

| Typical Performance | Varies by model size/descriptor | Optimized by model developer |

| Best For | Proprietary models, integrated workflows | Verified/validated community potentials |

Table 2: Quantitative Performance Metrics for Common ML-IAPs (Representative)

| Descriptor / Model Type | Species | Approx. Atoms | Speed (ns/day) | Typical RMSE (meV/atom) |

|---|---|---|---|---|

| SNAP (Linear) | Si | 10,000 | ~50 | 2 - 10 |

| ACE (NN) | Cu | 8,000 | ~15 | 1 - 5 |

| SOAP (GAP) | H2O | 2,000 | ~5 | 3 - 8 |

| Chebyshev (NN) | Al-Mg | 5,000 | ~20 | 2 - 7 |

Experimental Protocols

Protocol: Deploying an ML-IAP (SNAP Model) for a Metal Alloy

Objective: Perform an NPT simulation of an Al-Ni alloy using a fitted Spectral Neighbor Analysis Potential (SNAP) model via pair_style mliap.

Materials: Pre-trained SNAP model file (AlNi.snapcoeff and AlNi.snapparam), LAMMPS executable compiled with ML-IAP package.

Procedure:

- Script Initialization:

- Structure Creation:

- ML-IAP Definition: This command loads the linear model coefficients and the SNAP descriptor parameters for the element mapping.

- Simulation Setup:

- Run & Analysis:
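The five steps above could be assembled into a single input script along the following lines. The sketch is a Python helper that returns the script as text so that file names and run length can be varied. It assumes metal units, an fcc starting lattice with half the sites randomly switched to the second species, and model and descriptor files in the format expected by pair_style mliap; the lattice constant, composition, damping values, and file names are illustrative placeholders rather than fitted values.

```python
def snap_mliap_input(model_file, descriptor_file, elements=("Al", "Ni"),
                     masses=(26.982, 58.693), temperature=300.0,
                     pressure=1.0, n_steps=10000):
    """Return a LAMMPS input script (metal units) as one string."""
    lines = [
        "# Script initialization",
        "units metal",
        "atom_style atomic",
        "boundary p p p",
        "# Structure creation (placeholder lattice and 50/50 composition)",
        "lattice fcc 4.05",
        "region box block 0 10 0 10 0 10",
        f"create_box {len(elements)} box",
        "create_atoms 1 box",
        "set type 1 type/fraction 2 0.5 12345",
    ]
    lines += [f"mass {i} {m}" for i, m in enumerate(masses, start=1)]
    lines += [
        "# ML-IAP definition: linear model on SNAP (sna) descriptors",
        f"pair_style mliap model linear {model_file} descriptor sna {descriptor_file}",
        "pair_coeff * * " + " ".join(elements),
        "# Simulation setup: NPT with damping times in ps",
        "timestep 0.001",
        f"velocity all create {temperature} 4928 dist gaussian",
        f"fix 1 all npt temp {temperature} {temperature} 0.1 "
        f"iso {pressure} {pressure} 1.0",
        "# Run and analysis output",
        "thermo_style custom step temp press vol pe",
        "thermo 100",
        "dump 1 all custom 1000 traj.lammpstrj id type x y z",
        f"run {n_steps}",
    ]
    return "\n".join(lines) + "\n"

script = snap_mliap_input("AlNi.mliap.model", "AlNi.mliap.descriptor")
print(script)
```

Writing the returned text to a file such as in.alni and passing it to the LAMMPS executable with -in runs the simulation. The element order in pair_coeff must match the atom type numbering.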

Protocol: Deploying a KIM ML Potential for a Drug-Receptor System

Objective: Perform energy minimization and equilibration of a hydrated protein-ligand complex using an ML-based reactive potential (e.g., ANI) from the OpenKIM repository.

Materials: LAMMPS compiled with KIM package, installed KIM API, and the specific KIM model (e.g., ANI-1x).

Procedure:

- KIM Model Initialization:

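The kim init command loads the model and sets the unit system; the mapping from atom types to the elements the model supports is supplied afterwards with kim interactions.

- System Setup: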

- Minimization & Equilibration:
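A sketch of how the three steps might look as LAMMPS commands is given below, again generated from Python so that the model identifier and species list stay explicit. The model ID and data file are placeholders supplied by the caller; no specific OpenKIM entry is implied. Real units are assumed, so the timestep and damping time are in femtoseconds.

```python
def kim_input(model_id, data_file, species, temperature=310.0, n_steps=20000):
    """Return LAMMPS commands for minimization and NVT equilibration
    with a KIM model. `species` lists one element per atom type, in order."""
    lines = [
        "# KIM model initialization (selects model and unit system)",
        f"kim init {model_id} real",
        "# System setup",
        f"read_data {data_file}",
        "kim interactions " + " ".join(species),
        "# Minimization",
        "minimize 1.0e-4 1.0e-6 1000 10000",
        "# Equilibration (0.5 fs timestep, 100 fs thermostat damping)",
        "timestep 0.5",
        f"velocity all create {temperature} 4928 dist gaussian",
        f"fix equil all nvt temp {temperature} {temperature} 100.0",
        "thermo 500",
        f"run {n_steps}",
    ]
    return "\n".join(lines) + "\n"

# 'MY_KIM_MODEL_ID' is a placeholder, not a real OpenKIM identifier
kim_script = kim_input("MY_KIM_MODEL_ID", "complex.data", ["C", "H", "N", "O"])
print(kim_script)
```

The order of commands matters: kim init must precede the commands that define the simulation box, and kim interactions must follow them.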

Mandatory Visualizations

Diagram 1: Workflow for Deploying ML Potentials in LAMMPS

Diagram 2: Logical Structure of ML-IAP Pair Style Interaction

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for ML-IAP Simulations

| Item | Function / Description |

|---|---|

| LAMMPS Executable (with ML-IAP/KIM) | Core simulation engine, must be compiled with ML-IAP, KIM, and ML-SNAP packages. |

| Training Dataset (e.g., XYZ, OVITO) | High-quality DFT or experimental reference data for fitting the ML potential. |

| Model Fitting Tool (fitpod, MLIP, PaceMaker) | Software to convert training data into a functional .snapcoeff or model file. |

| KIM API & Model Drivers | Middleware that enables LAMMPS to call external, standardized ML potentials. |

| OpenKIM Model (e.g., ANI-1x, GAP-20) | Pre-trained, validated potential ready for deployment via pair_style kim. |

| Descriptor Parameter File | Defines the mathematical representation of atomic environments (e.g., snapparam). |

| High-Performance Computing (HPC) Cluster | Essential for training and production MD runs due to computational intensity. |

| Visualization/Analysis Suite (OVITO, VMD) | For post-processing trajectories, analyzing structure, and computing properties. |

Within the broader thesis on deploying LAMMPS with Machine Learning Potentials (MLPs), this application note details the practical implementation of thermodynamic ensembles (NVT/NPT) and enhanced sampling techniques. The integration of MLPs—such as Neural Network Potentials (NNPs), Gaussian Approximation Potentials (GAP), and Moment Tensor Potentials (MTPs)—into the LAMMPS molecular dynamics framework enables high-accuracy simulations of complex materials and biomolecular systems at extended scales. This protocol is critical for researchers aiming to study phase behavior, stability, and rare events in drug development and materials science.

Fundamental Ensembles: NVT and NPT

NVT Ensemble (Constant Number, Volume, Temperature): Used to equilibrate temperature and study properties at fixed density. Common thermostats include Nosé-Hoover and Berendsen.

NPT Ensemble (Constant Number, Pressure, Temperature): Used to simulate realistic experimental conditions where pressure and temperature are controlled, allowing cell volume fluctuations. Barostats like Parrinello-Rahman are often coupled.

Key Parameters & Quantitative Data

Table 1: Common Thermostat and Barostat Parameters for LAMMPS with MLPs

| Component | Type | LAMMPS Command | Key Parameter | Typical Value | Function |

|---|---|---|---|---|---|

| Thermostat | Nosé-Hoover | fix nvt | tstart, tstop, damp | 300 K, 300 K, 100 fs | Controls system temperature. |

| Barostat | Parrinello-Rahman | fix npt | pstart, pstop, pdamp | 1 bar, 1 bar, 1000 fs | Controls system pressure (isotropic). |

| Integrator | Velocity Verlet | fix nve | timestep | 0.5 - 1.0 fs | Integrates equations of motion. |

| MLP Interface | PyTorch/LAMMPS | pair_style mlp | model.pt | N/A | Specifies the ML potential file. |

Experimental Protocol: Basic NPT Equilibration for a Solvated Protein-Ligand System

Objective: Equilibrate a solvated protein-ligand complex at physiological conditions (310 K, 1 bar) using an MLP.

Materials: LAMMPS executable, MLP model file (potential.pt), system data file (system.data).

Procedure:

- Minimization: Run energy minimization to remove bad contacts.

- NVT Equilibration: Heat the system gradually.

- NPT Production: Run the production simulation at constant pressure and temperature.

- Analysis: Use LAMMPS thermo output or tools like VMD/MDAnalysis to compute density, RMSD, and potential energy.
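The minimization, heating, and production stages map onto a short sequence of LAMMPS commands. The sketch below generates them and derives the damping times from the timestep, using the common rule of thumb of about 100 timesteps for the thermostat and about 1000 for the barostat, which reproduces the 100 fs and 1000 fs values in Table 1 for a 1 fs timestep. The pair_style and read_data lines are deliberately left out because they depend on the MLP plugin in use; time and pressure values are in whatever units the input script declares.

```python
def equilibration_commands(timestep, t_start, t_target, pressure,
                           n_nvt_steps, n_npt_steps, seed=12345):
    """Return LAMMPS commands for minimize -> NVT heating -> NPT production."""
    t_damp = 100.0 * timestep     # thermostat damping, ~100 timesteps
    p_damp = 1000.0 * timestep    # barostat damping, ~1000 timesteps
    return [
        "minimize 1.0e-4 1.0e-6 1000 10000",
        f"timestep {timestep}",
        f"velocity all create {t_start} {seed} dist gaussian",
        f"fix heat all nvt temp {t_start} {t_target} {t_damp}",
        f"run {n_nvt_steps}",
        "unfix heat",
        f"fix prod all npt temp {t_target} {t_target} {t_damp} "
        f"iso {pressure} {pressure} {p_damp}",
        f"run {n_npt_steps}",
    ]

commands = equilibration_commands(timestep=1.0, t_start=10.0, t_target=310.0,
                                  pressure=1.0, n_nvt_steps=100000,
                                  n_npt_steps=1000000)
print("\n".join(commands))
```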

Enhanced Sampling with MLPs

MLPs provide accurate energies and forces, enabling enhanced sampling methods to overcome free energy barriers. Key Techniques:

- Metadynamics (MetaD): Biases simulation with history-dependent Gaussian potentials in Collective Variable (CV) space.

- Umbrella Sampling (US): Restrains simulation at specific points along a CV using harmonic potentials.

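- Temperature Accelerated Dynamics (TAD) / Parallel Replica Dynamics (PRD): TAD accelerates rare events by running at an elevated temperature and extrapolating the observed transitions back to the target temperature; PRD runs independent replicas in parallel to shorten the wall-clock wait for the first transition.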

Protocol: Well-Tempered Metadynamics with an MLP for Conformational Sampling

Objective: Sample the free energy landscape of a small molecule's dihedral angle.

Materials: LAMMPS with PLUMED plugin, MLP, CV definition file.

Procedure:

- Compile LAMMPS with PLUMED. Ensure the MLP pair style is compatible.

- Define CVs (e.g., a torsion angle) in a plumed.dat input file.

- Run the MetaD simulation with the PLUMED fix enabled in the LAMMPS input.

- Analysis: Use plumed sum_hills to reconstruct the Free Energy Surface (FES).
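A minimal plumed.dat for well-tempered metadynamics on one torsion could look like the file written by the helper below. The atom indices, Gaussian width and height, deposition pace, and bias factor are illustrative assumptions that must be tuned for the molecule at hand; only the PLUMED keywords themselves (TORSION, METAD, PRINT) are standard.

```python
import os
import tempfile

def write_plumed_metad(path, torsion_atoms, sigma=0.35, height=1.2, pace=500,
                       biasfactor=10.0, temperature=310.0):
    """Write a PLUMED input for well-tempered MetaD on a single torsion."""
    atoms = ",".join(str(i) for i in torsion_atoms)
    text = "\n".join([
        "# Collective variable: one dihedral angle (atom indices are placeholders)",
        f"phi: TORSION ATOMS={atoms}",
        "# Well-tempered metadynamics: BIASFACTOR and TEMP enable the tempering",
        f"metad: METAD ARG=phi SIGMA={sigma} HEIGHT={height} PACE={pace} "
        f"BIASFACTOR={biasfactor} TEMP={temperature} FILE=HILLS",
        "# Monitor the CV and the accumulated bias",
        "PRINT ARG=phi,metad.bias STRIDE=100 FILE=COLVAR",
    ]) + "\n"
    with open(path, "w") as handle:
        handle.write(text)
    return text

out_dir = tempfile.mkdtemp()
plumed_path = os.path.join(out_dir, "plumed.dat")
plumed_text = write_plumed_metad(plumed_path, torsion_atoms=(5, 7, 9, 15))
print(plumed_text)
```

On the LAMMPS side the file is attached with a line such as fix meta all plumed plumedfile plumed.dat outfile plumed.log, and the deposited Gaussians in HILLS are later summed with plumed sum_hills --hills HILLS.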

Quantitative Comparison of Enhanced Sampling Methods

Table 2: Comparison of Enhanced Sampling Methods for Use with MLPs in LAMMPS

| Method | Key LAMMPS/PLUMED Command | Primary CVs | Computational Cost | Best For | Key Challenge with MLPs |

|---|---|---|---|---|---|

| Metadynamics | METAD | 1-3 Dimensional | High (bias deposition) | Exploring unknown FES, nucleation | Choosing optimal CVs. |

| Umbrella Sampling | RESTRAINT or UMBRELLA | 1 Dimensional (Reaction Path) | Very High (multiple windows) | Computing PMF along known path | Force constant selection; window overlap. |

| Parallel Tempering | temper (REPLICA package, multiple replicas) | Temperature (indirect) | Very High (multiple replicas) | Systems with rough energy landscapes | High communication overhead. |

| Gaussian Accelerated MD (GaMD) | External implementation | Potential/Dihedral Boost (global) | Moderate (single run) | Biomolecular conformational changes | Tuning boost parameters. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for MLP Simulations in LAMMPS

| Item | Category | Example/Product | Function/Benefit |

|---|---|---|---|

| ML Potential | Software Model | M3GNet, ANI-2x, Allegro | Approaches quantum-mechanical accuracy at a cost far below ab initio MD. |

| LAMMPS | Simulation Engine | LAMMPS Stable Release (Aug 2023) | Flexible, open-source MD code with extensive fix/pair styles. |

| PLUMED | Enhanced Sampling Library | PLUMED v2.9 | Implements CV-based analysis and enhanced sampling methods. |

| Interatomic Potential File | Data | graph.pb (TensorFlow), model.pt (PyTorch) | Serialized MLP model for inference in LAMMPS. |

| System Topology/Coordinate File | Data | system.data, conf.lmp | Defines initial atomic positions, types, and box dimensions. |

| High-Performance Computing (HPC) Resource | Infrastructure | GPU cluster (NVIDIA A100/V100) | Accelerates MLP force evaluation, enabling larger, longer simulations. |

| Visualization & Analysis Suite | Software | VMD, OVITO, MDAnalysis | For trajectory visualization, CV calculation, and result plotting. |

Workflow and Relationship Diagrams

Title: MLP Simulation Workflow in LAMMPS

Title: MLP Deployment Pathway for Enhanced Sampling

1. Introduction and Thesis Context

This case study contributes to a broader thesis on the robust deployment of the LAMMPS (Large-scale Atomic/Molecular Massively Parallel Simulator) molecular dynamics engine integrated with machine learning potentials (MLPs). The primary objective is to demonstrate a complete, reproducible protocol for simulating a biologically relevant, fully solvated protein system using a neural network potential, moving beyond canonical force fields to leverage the accuracy of ab initio data.

2. Application Notes: Key Findings and Data

The simulation of the model protein Chignolin (CLN025) in explicit TIP3P water solvent using a DeePMD-kit neural network potential, as executed in LAMMPS, yields the following quantitative outcomes compared to a standard classical (AMBER ff14SB) simulation.

Table 1: Simulation Performance and Structural Metrics Comparison

| Metric | Classical MD (AMBER ff14SB) | Neural Network MD (DeePMD) | Notes |

|---|---|---|---|

| System Size | 1,660 atoms | 1,660 atoms | CLN025 + 520 water molecules. |

| Simulation Time | 100 ns | 10 ns | MLP targets enhanced sampling of configurational space. |

| Avg. Time per MD Step | 0.25 s | 2.8 s | Measured on 4 CPU cores; MLP incurs ~10x overhead. |

| RMSD (Backbone, to NMR) | 1.12 ± 0.15 Å | 0.98 ± 0.12 Å | Lower RMSD suggests improved structural fidelity. |

| Native H-Bonds | 3.2 ± 0.5 | 3.8 ± 0.3 | MLP better stabilizes key beta-sheet hydrogen bonds. |

| Potential Energy Drift | < 0.01 kJ/mol/ps | < 0.05 kJ/mol/ps | MLP shows slight drift, requiring careful NVE/NVT validation. |

Table 2: Neural Network Potential Training Data Summary

| Data Component | Quantity | Purpose |

|---|---|---|

| DFT Reference Calculations | 12,000 structures | Training and validation labels (energy, forces, virial). |

| System Coverage | CLN025 conformers + water clusters | Ensures sampling of protein, solvent, and interactions. |

| Energy RMSE (Test Set) | 1.2 meV/atom | Measure of prediction accuracy for energies. |

| Force RMSE (Test Set) | 85 meV/Å | Measure of prediction accuracy for atomic forces. |
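The two error metrics in Table 2 can be computed from predicted and reference values as sketched below. This follows one common convention (energy error normalized per atom, force error taken over all Cartesian components); the function names and the toy numbers are illustrative assumptions, not values from the study.

```python
import math


def energy_rmse_per_atom(e_pred, e_ref, n_atoms):
    """RMSE of per-atom energy errors; inputs are total energies per frame."""
    sq = [((p - r) / n) ** 2 for p, r, n in zip(e_pred, e_ref, n_atoms)]
    return math.sqrt(sum(sq) / len(sq))


def force_rmse(f_pred, f_ref):
    """RMSE over all Cartesian force components.

    f_pred, f_ref: list of frames, each a list of (fx, fy, fz) tuples.
    """
    sq = [
        (cp - cr) ** 2
        for frame_p, frame_r in zip(f_pred, f_ref)
        for atom_p, atom_r in zip(frame_p, frame_r)
        for cp, cr in zip(atom_p, atom_r)
    ]
    return math.sqrt(sum(sq) / len(sq))


# Toy data (hypothetical): two frames with 2 and 4 atoms.
e_rmse = energy_rmse_per_atom([10.0, 20.0], [10.2, 19.6], [2, 4])
f_rmse = force_rmse([[(1.0, 0.0, 0.0)]], [[(0.0, 0.0, 0.0)]])
print(e_rmse, f_rmse)
```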

3. Experimental Protocols

Protocol 3.1: Neural Network Potential Training and Preparation

- Data Generation: Perform ab initio (DFT) molecular dynamics of the target protein in a small water box and of isolated water clusters. Extract diverse frames.

- DeePMD Model Training: Use the DeePMD-kit `dp train` command with a configuration file (e.g., `input.json`) defining network architecture (e.g., [25,50,100] embedding net, [240,240,240] fitting net), learning rate, and loss functions.

- Model Freezing: Convert the trained model to a frozen format compatible with LAMMPS: `dp freeze -o frozen_model.pb`.

- Validation: Use `dp test` to compute RMSE on a held-out test set of DFT data. Validate energy/force predictions for known protein conformations.
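As a rough illustration of the configuration file mentioned in the training step, the sketch below assembles an `input.json`-style dictionary in Python. Only the embedding and fitting network sizes come from the protocol; the key names follow the DeePMD-kit v2 `se_e2_a` examples and should be checked against the installed version, and every other value (cutoffs, learning rate, loss prefactors, step counts, the data path, the type map) is a placeholder assumption.

```python
import json


def build_deepmd_input(systems, type_map, numb_steps=1_000_000):
    """Return a DeePMD-kit style training configuration as a dict (sketch)."""
    return {
        "model": {
            "type_map": type_map,
            "descriptor": {
                "type": "se_e2_a",
                "rcut": 6.0,        # assumed cutoff radius (Angstrom)
                "rcut_smth": 0.5,   # assumed smoothing onset
                "sel": "auto",      # let DeePMD-kit size the neighbor lists
                "neuron": [25, 50, 100],  # embedding net from the protocol
                "axis_neuron": 16,
            },
            "fitting_net": {"neuron": [240, 240, 240], "resnet_dt": True},
        },
        "learning_rate": {
            "type": "exp",
            "start_lr": 1e-3,
            "stop_lr": 1e-8,
            "decay_steps": 5000,
        },
        "loss": {
            "start_pref_e": 0.02, "limit_pref_e": 1.0,
            "start_pref_f": 1000.0, "limit_pref_f": 1.0,
            "start_pref_v": 0.0, "limit_pref_v": 0.0,
        },
        "training": {
            "training_data": {"systems": systems, "batch_size": "auto"},
            "numb_steps": numb_steps,
            "disp_freq": 1000,
            "save_freq": 10000,
        },
    }


# Hypothetical data directory and element list for a C/H/N/O system.
config = build_deepmd_input(["data/cln025_train"], ["C", "H", "N", "O"])
text = json.dumps(config, indent=2)
print(text[:200])
```

Saving `text` as `input.json` would give a starting point for `dp train input.json`, to be adjusted to the actual dataset.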

Protocol 3.2: LAMMPS Simulation of Solvated Protein

- System Initialization: Build or obtain a PDB of the protein. Use LAMMPS commands or tools like PACKMOL to solvate it in a TIP3P water box with a minimum 10 Å padding.

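- LAMMPS Script Configuration:

- Select the DeePMD pair style and point it at the frozen model: `pair_style deepmd frozen_model.pb`. If DeePMD-kit was built as a LAMMPS plugin, load it first with the `plugin load` command.

- Define the pair coefficient: `pair_coeff * *`

- Set long-range electrostatics (PPPM): `kspace_style pppm 1.0e-4`

- Specify water model constraints: `fix shake all shake 0.0001 10 100 b 1 a 1`. Here `b 1 a 1` refers to bond type 1 and angle type 1, so the data file must define the water bonds and angles.

- Implement thermostat/barostat (e.g., NPT at 300 K, 1 bar): `fix npt all npt temp 300 300 100 iso 1.0 1.0 1000`. The damping constants (100 and 1000) are in the time unit of the active `units` style: fs under `units real`, ps under `units metal`.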

- Equilibration: Minimize energy, then run short NVT and NPT simulations (100 ps each) to stabilize density and temperature.

- Production Run: Execute a multi-nanosecond NPT production simulation. Write trajectories every 1-10 ps for analysis.

- Analysis: Use LAMMPS `fix ave/time` or tools like MDTraj/VMD to calculate RMSD, radius of gyration, hydrogen bonding, and other properties.
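The durations in this protocol (100 ps equilibration stages, a multi-nanosecond production run, trajectory output every 1-10 ps) have to be turned into step counts for the LAMMPS `run` and `dump` commands. A minimal sketch of that conversion follows; the 0.5 fs timestep and the 10 ns production length are assumptions chosen for illustration.

```python
def steps_for(duration_ps: float, timestep_fs: float) -> int:
    """Number of MD steps covering duration_ps at the given timestep."""
    return round(duration_ps * 1000.0 / timestep_fs)


def schedule(timestep_fs=1.0, equil_ps=100.0, prod_ns=10.0, dump_ps=1.0):
    """Step counts for NVT/NPT equilibration, production, and dump interval."""
    return {
        "nvt_steps": steps_for(equil_ps, timestep_fs),
        "npt_steps": steps_for(equil_ps, timestep_fs),
        "production_steps": steps_for(prod_ns * 1000.0, timestep_fs),
        "dump_every": steps_for(dump_ps, timestep_fs),
    }


plan = schedule(timestep_fs=0.5)
for name, value in plan.items():
    print(f"{name}: {value}")
```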

4. Visualizations

Figure: Workflow from Data to Simulation with an ML Potential

Figure: LAMMPS Architecture with ML Potential Integration

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools

| Tool/Reagent | Category | Function in Protocol |

|---|---|---|

| LAMMPS | MD Engine | Core simulation software, executes integration and dynamics with MLP plugins. |

| DeePMD-kit | ML Potential Framework | Trains, validates, and freezes neural network potentials for use in LAMMPS. |

| VASP / Gaussian / CP2K | Ab Initio Software | Generates reference quantum mechanical data for training the MLP. |

| PACKMOL | System Builder | Creates initial coordinates of the protein solvated in a water box. |

| MPI (OpenMPI/MPICH) | Parallel Computing | Enables distributed-memory parallel execution of LAMMPS simulations. |

| PLUMED | Enhanced Sampling | Can be coupled with LAMMPS to perform bias-exchange metadynamics with an MLP. |

| MDtraj / MDAnalysis | Analysis Library | Analyzes simulation trajectories to compute RMSD, H-bonds, and other metrics. |

| Python (NumPy, PyTorch) | Scripting/ML | Environment for data preprocessing, analysis, and custom ML model development. |

Solving Common Pitfalls: Performance Tuning and Stability for ML-Potential MD

Diagnosing and Fixing Energy Explosions and Instabilities

Within the broader thesis of deploying LAMMPS for molecular dynamics (MD) simulations using machine learning potentials (MLPs), energy explosions—manifested as NaN values or sudden increases in potential energy—represent a critical failure mode. These instabilities compromise simulation trajectories, waste computational resources, and hinder research in materials science and drug development. This document provides structured application notes and protocols for diagnosing and rectifying these issues, focusing on the interplay between LAMMPS deployment, MLP architecture, and simulation parameters.

Common Causes and Diagnostic Table

The following table summarizes primary causes, diagnostic signals, and initial investigative actions.

| Category | Specific Cause | Typical Manifestation | First Diagnostic Step |

|---|---|---|---|

| MLP Architecture & Training | Inadequate training data coverage for configuration. | Explosion upon encountering new atomic environment. | Compute min/max of descriptor values (e.g., symmetry functions) vs. training set. |

| | Incorrect force/energy scaling. | Systematic drift in energy from first step. | Check consistency of units between MLP output and LAMMPS expected input. |

| | Poor extrapolation behavior of MLP. | Sudden NaN in forces/energy. | Monitor MLP's reported uncertainty or extrapolation grade (if available). |

| Simulation Setup | Overly large initial velocity (high temperature). | High kinetic energy leading to unphysical collisions. | Check instantaneous temperature vs. target. Reduce velocity creation temperature. |

| | Incorrectly overlapping atoms (bad initial geometry). | Extremely high repulsive forces at step 0 or 1. | Visualize initial structure; check for interatomic distances below 0.5 Å. |

| | Incompatible periodic boundary conditions (PBC). | Explosion at boundaries or molecule crossing. | Verify the `boundary` setting and check molecule integrity via per-atom image flags (e.g., `ix iy iz` in `dump custom`). |

| Numerical Integrator | Timestep (dt) too large. | Gradual then sudden rise in energy. | Reduce dt from 1 fs to 0.5 fs or 0.25 fs for testing. |

| | Thermostat/barostat coupling too aggressive. | Oscillatory or runaway energy during equilibration. | Increase relaxation time constants (Tdamp, Pdamp) by factor of 10. |
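The first diagnostic step in the table, comparing descriptor values against the range seen during training, can be sketched as a simple bounds check. The code below assumes the descriptors are already available as plain lists of numbers (how they are exported depends on the MLP framework); the function names and the relative tolerance are illustrative assumptions.

```python
def descriptor_bounds(training_descriptors):
    """Per-feature (min, max) over the training set.

    training_descriptors: list of equal-length feature vectors.
    """
    columns = list(zip(*training_descriptors))
    return [(min(c), max(c)) for c in columns]


def out_of_range(descriptor, bounds, tolerance=0.0):
    """Indices of features outside the training range.

    The range of each feature is widened by tolerance * (max - min).
    """
    flagged = []
    for i, (value, (lo, hi)) in enumerate(zip(descriptor, bounds)):
        margin = tolerance * (hi - lo)
        if value < lo - margin or value > hi + margin:
            flagged.append(i)
    return flagged


# Toy example: two features, two training environments.
bounds = descriptor_bounds([[0.0, 1.0], [1.0, 3.0]])
print(out_of_range([0.5, 3.5], bounds))                 # feature 1 is outside
print(out_of_range([0.5, 3.1], bounds, tolerance=0.1))  # within widened range
```

A non-empty result for an atom shortly before a failure is a hint, not proof, that the model is extrapolating in that environment.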

Experimental Protocols for Diagnosis and Remediation

Protocol 3.1: Systematic Stability Test Workflow

Objective: Isolate the cause of an energy explosion in a failing simulation.

- Replicate Failure: Document the exact LAMMPS script, MLP model file (`potential.pt`), and initial structure file that produced the explosion. Note the step number of failure.

- Create Minimal Test: Strip the script to an NVE ensemble with no thermostats/barostats. Run for 10-20 steps. If stable, reintroduce thermostat/barostat to identify culprit.

- Analyze Initial Configuration:

- Use the LAMMPS `write_data` command on the input structure.

- Extract the coordinates for a minimum-interatomic-distance check: `awk '/^Atoms/ {f=1; next} /^[A-Z]/ {f=0} f && NF {print $4, $5, $6}' data.file` (columns 4-6 hold x, y, z for `atom_style charge`; adjust for other atom styles; requires a subsequent pair-wise distance script).

- Values < 0.5 Å indicate a bad structure; re-examine building/cleaning protocol.

- Test with Reference Potential:

- Temporarily replace the MLP with a classical pair potential (e.g., Lennard-Jones) using the same geometry and `dt`.

- If stable, the issue likely lies with the MLP or its interface. If unstable, the problem is in the MD setup.

- MLP-Specific Diagnostics:

- If supported by the MLP interface (e.g., `mliap`), enable `print` or `compute` commands for model uncertainty.

- Implement a callback to compute and log the mean and max of atomic descriptors for each step, comparing to training set bounds.
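The pair-wise distance check called for under "Analyze Initial Configuration" can be sketched in Python as follows. The sketch assumes an orthogonal box and a data file written with `atom_style charge` (x, y, z in columns 4-6); the column indices are a parameter for other atom styles, and the toy data file is invented for illustration. The brute-force loop is quadratic in the number of atoms, which is acceptable for a one-off check on a few thousand atoms.

```python
import itertools
import math


def parse_lammps_data(text, xyz_cols=(3, 4, 5)):
    """Return (box_lengths, coords) from LAMMPS data-file text.

    xyz_cols are 0-based column indices of x, y, z in each Atoms line;
    (3, 4, 5) matches atom_style charge (id type q x y z).
    """
    box, coords, in_atoms = {}, [], False
    for raw in text.splitlines():
        line = raw.split("#")[0].strip()  # drop trailing comments
        if not line:
            continue
        tokens = line.split()
        if len(tokens) == 4 and tokens[2:] in (
            ["xlo", "xhi"], ["ylo", "yhi"], ["zlo", "zhi"]
        ):
            box[tokens[2][0]] = float(tokens[1]) - float(tokens[0])
        elif tokens[0] == "Atoms":
            in_atoms = True
        elif in_atoms and tokens[0][0].isalpha():
            in_atoms = False  # next section header (e.g. Velocities, Bonds)
        elif in_atoms:
            coords.append(tuple(float(tokens[c]) for c in xyz_cols))
    return (box["x"], box["y"], box["z"]), coords


def min_distance(coords, box):
    """Smallest pair distance under the minimum-image convention."""
    best = math.inf
    for a, b in itertools.combinations(coords, 2):
        d2 = 0.0
        for xa, xb, length in zip(a, b, box):
            delta = xa - xb
            delta -= length * round(delta / length)  # wrap into [-L/2, L/2]
            d2 += delta * delta
        best = min(best, d2)
    return math.sqrt(best)


TOY_DATA = """LAMMPS data file (toy example)

3 atoms
1 atom types

0.0 10.0 xlo xhi
0.0 10.0 ylo yhi
0.0 10.0 zlo zhi

Atoms # charge

1 1 0.0 0.2 5.0 5.0
2 1 0.0 9.9 5.0 5.0
3 1 0.0 5.0 5.0 5.0

Velocities

1 0.0 0.0 0.0
"""

box, coords = parse_lammps_data(TOY_DATA)
d_min = min_distance(coords, box)
print(f"minimum distance: {d_min:.3f}")
if d_min < 0.5:
    print("warning: overlapping atoms, re-examine the initial structure")
```

In the toy file, atoms 1 and 2 sit on opposite faces of the box, so the close contact only shows up once periodic images are taken into account.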

Protocol 3.2: Retraining and Fine-tuning MLP for Stability

Objective: Improve MLP robustness when diagnostics point to inadequate potential.

- Generate Remedial Training Data:

- From the failed simulation, extract the last stable configuration and the first unstable configuration.

- Perform constrained MD or random displacements around these configurations.

- Use ab initio methods to compute accurate energies and forces for these new configurations.

- Augmented Retraining:

- Combine new data with the original training set.

- During training, apply increased weight (`loss coefficient`) to forces from the new, problematic configurations.

- Implement a progressive training curriculum, starting with low-temperature/energy data.

- Validation: Test the new potential on the exact failing simulation script from Protocol 3.1, Step 1. Monitor energy and maximum force for 10x the original failure time.
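The "random displacements around these configurations" step of the remedial data generation can be sketched as below. The displacement amplitude (0.1, in the same length unit as the coordinates), the number of copies, and the function name are assumptions for illustration; the resulting structures would still need ab initio labels before being added to the training set.

```python
import random


def displaced_copies(coords, n_copies=20, max_disp=0.1, seed=0):
    """Generate perturbed copies of one configuration.

    Each Cartesian component is shifted uniformly within +/- max_disp.
    A fixed seed keeps the generated set reproducible.
    """
    rng = random.Random(seed)
    return [
        [tuple(c + rng.uniform(-max_disp, max_disp) for c in atom) for atom in coords]
        for _ in range(n_copies)
    ]


# Hypothetical last-stable configuration with two atoms.
base = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
copies = displaced_copies(base, n_copies=5, max_disp=0.1, seed=1)
print(len(copies), copies[0])
```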

Visualization of Diagnostic Workflows

Figure: Energy Explosion Diagnostic Decision Tree

Figure: MLP Retraining Protocol for Stability

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Diagnosis/Fix | Example / Note |

|---|---|---|

| LAMMPS `fix halt` | Automatically stops simulation when energy/force exceeds a threshold, saving resources. | `fix stop all halt 100 v_etotal > -100.0`, where `etotal` is an equal-style variable defined beforehand and the threshold is system-specific. |

| OVITO Visualization | Interactive visualization of atomic trajectories to identify structural failures. | Critical for spotting local melting, bond breaking, or PBC artifacts. |

| PLUMED | Enhanced sampling and on-the-fly analysis; can compute collective variables that may correlate with instability. | Can be coupled with LAMMPS for metadynamics to explore failure pathways. |

| MPI-aware Debugger | For parallel LAMMPS runs, helps identify rank-specific issues causing divergence. | TotalView, DDT; set -catchsegments no in LAMMPS MPI_INC flags. |

| Ab Initio Software | Generates high-fidelity training data for retraining MLPs on problematic configurations. | VASP, Quantum ESPRESSO, Gaussian. |

| MLP Training Framework | Library for retraining and fine-tuning neural network potentials. | PyTorch, TensorFlow with keras-mlp-potential, horovod for distributed training. |

| Structure Cleaner | Prepares and validates initial molecular geometries. | Packmol, AVOGADRO, Open Babel for minimizing bad contacts. |
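Alongside an in-run guard such as `fix halt`, the same criteria (a NaN value or a sudden energy increase) can be applied after the fact to logged thermo output to locate the step where a run went wrong. The sketch below assumes the step numbers and energies have already been read into lists; the jump threshold and the function name are illustrative assumptions.

```python
import math


def first_unstable_step(steps, energies, max_jump):
    """Return the first step whose energy is non-finite or differs from the
    previous sample by more than max_jump; None if the series looks stable."""
    previous = None
    for step, energy in zip(steps, energies):
        if not math.isfinite(energy):
            return step
        if previous is not None and abs(energy - previous) > max_jump:
            return step
        previous = energy
    return None


steps = [0, 100, 200, 300]
print(first_unstable_step(steps, [-100.0, -100.2, -99.9, float("nan")], 5.0))
print(first_unstable_step(steps, [-100.0, -100.1, 50.0, 900.0], 5.0))
print(first_unstable_step(steps, [-100.0, -100.1, -100.2, -100.1], 5.0))
```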

This document provides application notes for optimizing computational performance in the context of deploying the Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) with machine learning potentials (MLPs), such as those from the OpenKIM repository, Moment Tensor Potentials, or NequIP. The accurate simulation of complex molecular systems (e.g., protein-ligand interactions in drug development) using MLPs is computationally demanding. Efficient utilization of hardware resources—specifically, the choice between central processing unit (CPU) and graphics processing unit (GPU) parallelism, and their hybrid use—is critical for achieving scientific throughput. This guide details benchmarking protocols, data analysis, and decision frameworks for researchers.

Performance Benchmarking: CPU vs. GPU Architectures for MLPs

The performance characteristics of MLP evaluation differ significantly from traditional classical force fields. MLPs often involve dense matrix multiplications and complex non-linear functions, which are highly parallelizable.

Table 1: Core Architectural Comparison for ML-LAMMPS Workloads

| Component | CPU (e.g., AMD EPYC, Intel Xeon) | GPU (e.g., NVIDIA A100, H100) |

|---|---|---|

| Core Strength | Complex branching, serial tasks, high clock speed per core. | Massively parallel execution of simple, identical operations (SIMD). |

| Ideal for | LAMMPS setup, I/O, non-ML parts of pair styles, moderate-scale MD with simple potentials. | Evaluation of MLPs (inference), large-scale (100k+ atoms) molecular dynamics steps. |

| Parallelism Type | Task & distributed memory (MPI) across nodes. Threading (OpenMP) within a node. | Data parallelism: Thousands of threads compute forces/energies for many atoms simultaneously. |

| Memory | Large capacity, high bandwidth per core. | Very high bandwidth (HBM), but limited capacity (e.g., 80 GB on an NVIDIA A100 or H100). |

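| Key LAMMPS Package | `-sf opt` (OPT package), or `-sf omp -pk omp N` for OpenMP threading within each MPI domain. | `-sf gpu -pk gpu 1` (GPU package) or `-k on g 1 -sf kk` (KOKKOS package) for pair styles with an accelerated variant (e.g., `mliap`). |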
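The command-line switches in the last table row can be assembled programmatically when benchmarking several hardware configurations. The sketch below builds the argument list for each backend; the executable name `lmp`, the `mpirun` launcher, and the helper name are assumptions, and whether a given ML pair style actually has an accelerated variant depends on the LAMMPS build.

```python
def lammps_command(input_file, backend="cpu", mpi_ranks=1,
                   omp_threads=1, gpus=1, exe="lmp"):
    """Build a LAMMPS launch command as an argument list (sketch)."""
    cmd = ["mpirun", "-np", str(mpi_ranks), exe, "-in", input_file]
    if backend == "omp":
        # OPENMP package: threaded styles plus thread count per MPI rank
        cmd += ["-sf", "omp", "-pk", "omp", str(omp_threads)]
    elif backend == "gpu":
        # GPU package: accelerated styles plus number of GPUs per node
        cmd += ["-sf", "gpu", "-pk", "gpu", str(gpus)]
    elif backend == "kokkos":
        # KOKKOS package: enable Kokkos with g GPUs and use kk styles
        cmd += ["-k", "on", "g", str(gpus), "-sf", "kk"]
    elif backend != "cpu":
        raise ValueError(f"unknown backend: {backend}")
    return cmd


for backend in ("cpu", "omp", "gpu", "kokkos"):
    print(" ".join(lammps_command("in.mlp", backend=backend,
                                  mpi_ranks=4, omp_threads=8)))
```

Returning a list rather than a single string keeps the result directly usable with `subprocess.run` without shell quoting.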

Table 2: Illustrative Benchmark Data for a Representative Protein-Ligand System (~50,000 atoms)

Note: Data is synthesized from recent community benchmarks (2023-2024). Performance is system and potential-dependent.

| Hardware Configuration | ML Potential | Simulation Speed (ns/day) | Relative Cost Efficiency (Speed/USD per hour) |

|---|---|---|---|

| 2x CPU Nodes (64 Cores total, MPI) | MTP | 1.5 | 1.0 (Baseline) |

| Single Node, 1x High-End GPU | MTP | 12.4 | ~8.2 |

| Single Node, 4x Mid-Range GPUs | ANI-2x | 28.7 | ~6.5 |