MALA: How Materials Learning Algorithms Are Revolutionizing DFT Calculations for Drug Discovery

This article provides a comprehensive overview of Materials Learning Algorithms (MALA) for accelerating Density Functional Theory (DFT) calculations, targeted at computational researchers and drug development professionals.

Abstract

This article provides a comprehensive overview of Materials Learning Algorithms (MALA) for accelerating Density Functional Theory (DFT) calculations, targeted at computational researchers and drug development professionals. It explores the fundamental principles bridging machine learning and quantum chemistry, details practical implementation and application pipelines in biomolecular systems, addresses common challenges and optimization strategies for robust performance, and validates MALA's accuracy and speed against traditional DFT and other ML methods. The synthesis offers a clear pathway for integrating MALA into computational workflows to expedite materials and drug candidate screening.

What is MALA? Bridging Machine Learning and Quantum Chemistry for Faster Simulations

Within the broader thesis on Materials Learning Algorithms (MALA), this document addresses the fundamental limitation of traditional Density Functional Theory (DFT) in high-throughput materials and drug screening. MALA research aims to overcome this bottleneck by integrating machine learning with quantum mechanics, creating surrogate models that approach DFT accuracy at a fraction of the computational cost. This application note details the quantitative bottlenecks and provides protocols for benchmarking traditional DFT against emerging ML-accelerated methods.

Quantitative Bottleneck Analysis

The core computational cost of traditional DFT scales formally as O(N³) with the number of electrons (N), primarily due to the diagonalization of the Kohn-Sham Hamiltonian. For practical high-throughput screening, where thousands to millions of candidate compounds must be evaluated, this scaling is prohibitive.

Table 1: Computational Cost Comparison for a Single SCF Calculation on a 50-Atom System

| Method / Software | Typical Wall Time (CPU cores) | Memory (GB) | Scaling | Basis Set |

|---|---|---|---|---|

| Traditional DFT (VASP) | 2-4 hours (128 cores) | 20-30 | O(N³) | Plane-wave |

| Traditional DFT (Quantum ESPRESSO) | 1-3 hours (128 cores) | 15-25 | O(N³) | Plane-wave |

| Linear Scaling DFT (ONETEP) | 30-60 min (128 cores) | 25-40 | O(N) | Non-orthogonal generalized Wannier functions |

| MALA (ML-DFT Surrogate) | < 1 minute (1 CPU core) | < 2 | ~O(N) inference | Learned representation |

Table 2: Projected Costs for High-Throughput Screening (10,000 Structures)

| Computational Resource | Traditional DFT | MALA-accelerated Workflow |

|---|---|---|

| Total Core-Hours | ~2.5 million | ~200 |

| Estimated Cost (Cloud) | $75,000 - $150,000 | $500 - $1,000 |

| Time to Completion (Serially) | ~4.5 years | ~7 days |

Data sourced from recent literature and benchmark studies (2023-2024).
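The entries in Table 2 follow from simple arithmetic on the Table 1 timings. The sketch below reproduces them, assuming the 2-4 hour, 128-core DFT runtime and taking one minute on a single core as an upper bound for MALA inference. The function name `screening_cost` is illustrative and not part of any package.

```python
def screening_cost(n_structures, hours_per_run, cores_per_run):
    """Return (total core-hours, serial wall time in days) for a screening campaign."""
    core_hours = n_structures * hours_per_run * cores_per_run
    serial_days = n_structures * hours_per_run / 24.0
    return core_hours, serial_days

# Traditional DFT: lower and upper bounds from Table 1 (2-4 h on 128 cores).
dft_low = screening_cost(10_000, 2.0, 128)
dft_high = screening_cost(10_000, 4.0, 128)

# MALA: assumed upper bound of one minute on a single core.
mala = screening_cost(10_000, 1.0 / 60.0, 1)

print(f"DFT core-hours: {dft_low[0]:.2e} to {dft_high[0]:.2e}")
print(f"DFT serial time: {dft_low[1] / 365:.1f} to {dft_high[1] / 365:.1f} years")
print(f"MALA core-hours: {mala[0]:.0f}, serial time: {mala[1]:.1f} days")
```

The lower DFT bound gives roughly 2.5 million core-hours, the upper bound gives about 4.5 years of serial wall time, and the MALA estimate is about 170 core-hours, or about 7 days when run serially.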

Experimental Protocols

Protocol 3.1: Benchmarking Traditional DFT Single-Point Energy Calculation

Objective: To establish a baseline performance and accuracy metric for a standard DFT calculation on a representative molecular system.

Materials:

- Computing Cluster (CPU-based, ~128 cores recommended).

- DFT Software (e.g., VASP, Quantum ESPRESSO, CP2K).

- Structure file (e.g., POSCAR for a 50-100 atom organic molecule or perovskite unit cell).

Procedure:

- System Preparation: Prepare input files. Use PBE functional. Set energy cutoff to 500 eV (plane-wave) or DZVP-MOLOPT-SR-GTH basis (CP2K). Use Gamma-point only k-mesh for molecular systems.

- SCF Convergence: Set electronic energy convergence criterion to 1e-6 eV. Use standard diagonalization (e.g., Davidson).

- Execution: Launch calculation on 128 CPU cores. Record start time.

- Monitoring: Track job output for Self-Consistent Field (SCF) cycle convergence. Record: a) Number of SCF cycles, b) Total wall time, c) Peak memory usage, d) Final total energy.

- Data Collection: Upon completion, extract the final total energy, forces (if applicable), and the electronic density of states. Log all performance metrics.

Protocol 3.2: Generating Training Data for MALA Surrogate Model

Objective: To produce a dataset of DFT-calculated electron densities and energies for training a machine learning model.

Materials:

- As in Protocol 3.1.

- Scripts for generating atomic position perturbations (e.g., using ASE).

- Data storage system (~TB scale).

Procedure:

- Structure Sampling: For a target chemical space (e.g., small organic molecules), generate 1000+ distinct atomic configurations. Include molecular dynamics snapshots or slightly perturbed geometries around equilibrium.

- DFT Calculation Batch: Run single-point DFT calculations (as per Protocol 3.1) on all 1000 configurations. Crucially, configure the DFT code to output the all-electron electron density (or pseudopotential valence density) on a real-space grid for each configuration.

- Data Compilation: For each configuration, store:

- Atomic numbers and positions.

- DFT total energy.

- Electron density cube file (`*.cube` or `*.bin`).

- Associated DFT Hamiltonian (optional, for LDOS learning).

- Data Formatting: Convert data into a format readable by the MALA software stack (e.g., `*.hdf5` files with standardized keys).
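The structure-sampling step can be sketched with the standard library alone. The example below is a minimal illustration, assuming uniform random displacements of at most 0.05 Å per Cartesian coordinate. The water geometry and the helper `perturb_positions` are hypothetical placeholders, and a production workflow would more likely use ASE or MD snapshots as described above.

```python
import random

def perturb_positions(positions, max_displacement=0.05, seed=None):
    """Return a copy of positions with each Cartesian coordinate shifted
    by a uniform random amount in [-max_displacement, +max_displacement] (Å)."""
    rng = random.Random(seed)
    return [
        tuple(c + rng.uniform(-max_displacement, max_displacement) for c in atom)
        for atom in positions
    ]

# Illustrative near-equilibrium geometry of a water molecule (Å).
water = [(0.000, 0.000, 0.117), (0.000, 0.757, -0.469), (0.000, -0.757, -0.469)]

# One seed per configuration keeps the dataset reproducible.
configurations = [perturb_positions(water, 0.05, seed=i) for i in range(1000)]
print(len(configurations), "configurations generated")
```

Each entry in `configurations` would then be written to a structure file and passed to the single-point DFT step.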

Protocol 3.3: Inference Using a Pre-Trained MALA Model

Objective: To predict the total energy and electron density of a new, unseen atomic configuration using a trained MALA model, comparing speed and accuracy to DFT.

Materials:

- GPU-equipped workstation (e.g., NVIDIA V100 or A100).

- Installed MALA software package.

- Pre-trained MALA model checkpoint (e.g., on organic molecules).

- New atomic configuration file.

Procedure:

- Model Setup: Load the pre-trained model checkpoint. Ensure the model's architectural parameters (e.g., descriptor type, neural network layers) match the checkpoint.

- Configuration Preprocessing: Load the new atomic configuration. Use the MALA data handler to convert it into the same descriptor representation the model was trained on (e.g., bispectrum descriptors or Smooth Overlap of Atomic Positions).

- Inference: Pass the descriptor through the neural network to predict: a) The electron density on a grid, b) The total energy.

- Benchmarking: Start a timer before the Inference step and stop it once the prediction is returned. Record inference wall time (typically seconds). Note GPU memory usage.

- Validation: Run a full DFT calculation (Protocol 3.1) on the same configuration. Compare predicted vs. DFT total energy (mean absolute error target: < 2 meV/atom) and electron density (mean absolute error).
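The energy comparison in the validation step reduces to a per-atom mean absolute error. A minimal sketch follows, assuming total energies are available in eV for each configuration. The function name and the numbers in the usage example are made up for illustration.

```python
def energy_mae_mev_per_atom(predicted_ev, reference_ev, n_atoms):
    """Mean absolute error of total energies, in meV per atom.

    predicted_ev, reference_ev: total energies in eV, one per configuration.
    n_atoms: number of atoms in each configuration.
    """
    if not (len(predicted_ev) == len(reference_ev) == len(n_atoms)):
        raise ValueError("inputs must have the same length")
    errors = [
        abs(p - r) / n * 1000.0  # eV -> meV, normalized per atom
        for p, r, n in zip(predicted_ev, reference_ev, n_atoms)
    ]
    return sum(errors) / len(errors)

# Made-up numbers purely to show the call.
mae = energy_mae_mev_per_atom([-100.05, -200.10], [-100.00, -200.00], [50, 100])
print(f"MAE = {mae:.2f} meV/atom, target met: {mae < 2.0}")
```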

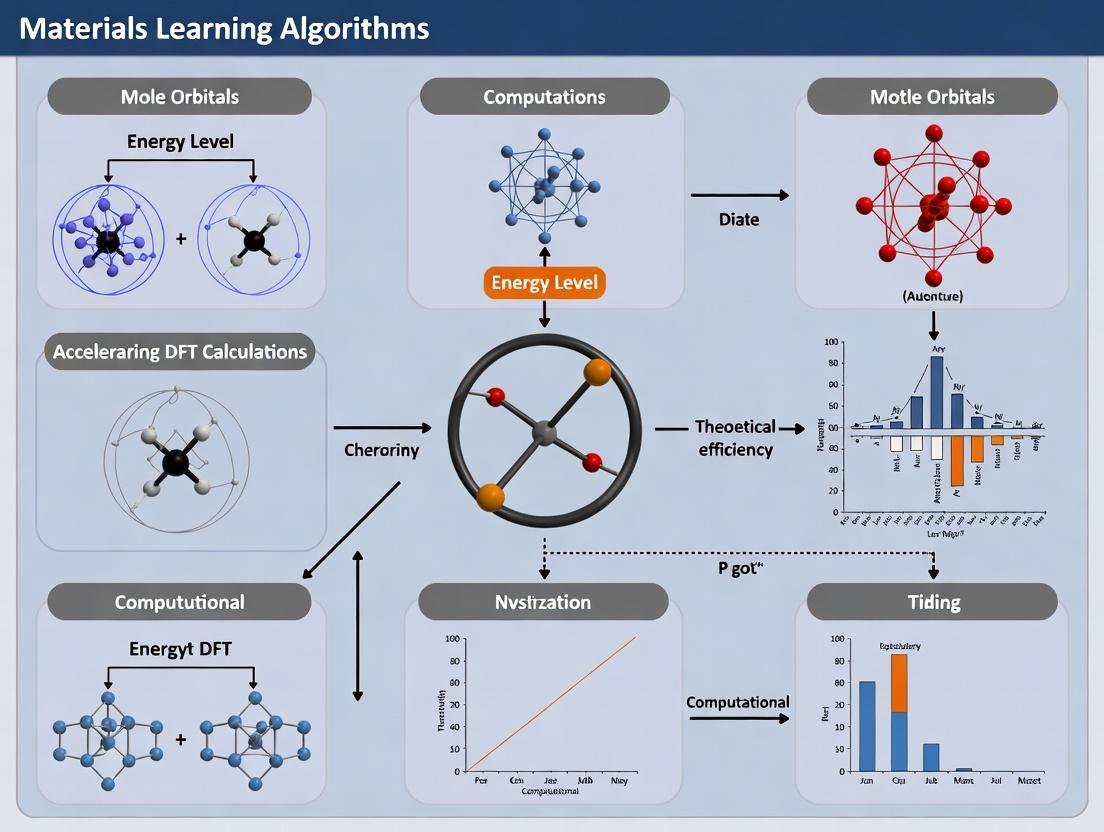

Visualization of Workflows

Title: DFT vs. MALA Computational Pathways

Title: MALA Training Data Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Computational Resources

| Item | Function/Benefit | Example/Provider |

|---|---|---|

| High-Fidelity DFT Code | Provides "ground truth" data for training. Must output electron density. | VASP, Quantum ESPRESSO, CP2K, FHI-aims |

| MALA Software Stack | Open-source toolkit for ML-accelerated DFT. Contains data handlers, descriptors, and NN models. | MALA (Materials Learning Algorithms) |

| Automatic Structure Generation | Generates diverse atomic configurations for training data sampling. | ASE (Atomic Simulation Environment), Pymatgen |

| High-Performance Computing (HPC) | CPU clusters for generating training data via DFT. | Local cluster, Cloud (AWS, GCP, Azure) |

| GPU Workstations | For rapid training and inference of neural network models. | NVIDIA GPU with CUDA support |

| Data Storage & Management | Handles large datasets of electron densities and structures (~TB). | HDF5 format, Lustre/parallel filesystems |

| Benchmarking & Workflow Tools | Automates job submission, data collection, and performance comparison. | SLURM scripts, Python (NumPy, Pandas), Jupyter |

Application Notes

MALA (Materials Learning Algorithms) represents a hybrid machine learning framework designed to bypass the high computational cost of direct Density Functional Theory (DFT) calculations. It achieves this by learning a map from local atomic environments to electronic structure properties, most notably the Hamiltonian or the electron density of states (DOS). The core innovation lies in separating the total property of a material system into contributions from localized atomic descriptors, which are then processed by a neural network to predict DFT-level outputs.

The workflow can be summarized as follows:

- Local Descriptor Generation: For each atom in a configuration, a descriptor capturing the local chemical environment (e.g., within a cutoff radius) is computed. Common descriptors include Atom-Centered Symmetry Functions (ACSF), Smooth Overlap of Atomic Positions (SOAP), or bispectrum components.

- Neural Network Processing: A neural network (typically a deep fully-connected network or a message-passing network) takes these local descriptors and predicts a local quantity, such as the contribution to the total DOS or elements of an effective Hamiltonian.

- Spatial & Spectral Integration: Local predictions are aggregated to form a global property (e.g., total DOS). For Hamiltonian predictions, the result is a sparse matrix that can be directly diagonalized to obtain eigenvalues (band energies) and eigenvectors, effectively replacing the Kohn-Sham diagonalization step in DFT.

- DFT Acceleration: This ML-predicted Hamiltonian or DOS can be used for rapid property calculation (e.g., total energy, forces) or as a pre-conditioned starting point for a full DFT calculation, drastically reducing the number of self-consistent field (SCF) iterations required.

Table 1: Quantitative Comparison of MALA Performance vs. Standard DFT

| Metric | Standard DFT (FP) | MALA-Predicted Hamiltonian | Speed-Up Factor |

|---|---|---|---|

| SCF Iterations for Convergence | 20-50 | 3-8 | ~6-8x |

| Time per SCF Iteration (s) | 1000 | 50 | ~20x |

| Total Wall-Time per MD Step (s) | ~20k-50k | ~150-400 | ~100-150x |

| Band Energy RMSE (eV/atom) | N/A | 0.01 - 0.03 | N/A |

| Force RMSE (eV/Å) | N/A | 0.03 - 0.08 | N/A |

Note: Data is representative for medium-sized metallic systems (100-200 atoms). Performance gains are system-dependent. FP = Full DFT calculation.

Experimental Protocols

Protocol 1: Generating a Training Dataset for MALA

Objective: To produce a robust dataset of atomic configurations and their corresponding DFT-calculated Hamiltonians/DOS for training the MALA network.

Materials & Software:

- DFT Code (VASP, Quantum ESPRESSO, ABINIT)

- Molecular Dynamics (MD) or Monte Carlo (MC) sampling engine (LAMMPS, ASE)

- High-Performance Computing (HPC) cluster

Methodology:

- System Definition: Define the chemical system (elements, possible compositions).

- Configuration Sampling: Perform ab initio MD (AIMD) or use active learning (e.g., query-by-committee) to sample diverse atomic configurations across relevant temperatures and pressures. Ensure coverage of phases (solid, liquid), defects, and surfaces.

- DFT Single-Point Calculations: For each sampled configuration, perform a highly-converged DFT calculation.

- Target Extraction: Extract the Kohn-Sham Hamiltonian matrix in a localized basis set (e.g., localized atomic orbitals) or the local density of states (LDOS). This is the target data (`H_DFT` or `DOS_DFT`).

- Descriptor Calculation: For the same configuration, compute the chosen local atomic descriptor (e.g., SOAP descriptor) for each atom.

- Dataset Assembly: Create a dataset pairing `(Local Descriptors, Local Hamiltonian block/LDOS)` for all atoms/configurations. Split into training (70%), validation (15%), and test (15%) sets.
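The 70/15/15 split can be sketched as a shuffle over configuration indices. This is a minimal example using only the standard library. The function name `split_dataset` and the fixed seed are illustrative choices.

```python
import random

def split_dataset(n_samples, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle sample indices and split them into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = round(fractions[0] * n_samples)
    n_val = round(fractions[1] * n_samples)
    return (indices[:n_train],
            indices[n_train:n_train + n_val],
            indices[n_train + n_val:])

train, val, test = split_dataset(1000)
print(len(train), len(val), len(test))
```

Splitting by configuration index, rather than by individual atom, is one way to keep atoms from the same snapshot from appearing in both the training and test sets.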

Protocol 2: Training and Validating a MALA Model

Objective: To train a neural network that accurately maps local descriptors to DFT outputs.

Materials & Software:

- Training Dataset from Protocol 1

- Machine Learning Framework (PyTorch, TensorFlow, JAX)

- MALA-specific software package (mala-project.org)

Methodology:

- Network Architecture: Implement a feed-forward neural network. A typical architecture:

- Input Layer: Size matches descriptor dimension.

- Hidden Layers: 4-6 fully-connected layers with 64-256 neurons each, using activation functions like SiLU or ReLU.

- Output Layer: Size matches the dimension of the local target (e.g., Hamiltonian matrix elements for neighboring atoms or LDOS spectral points).

- Loss Function: Use Mean Squared Error (MSE) between predicted and DFT-derived local quantities. A spectral loss (e.g., on eigenvalues after diagonalization) can be added.

- Training: Use the Adam optimizer. Train on mini-batches. Monitor loss on the validation set to prevent overfitting and implement early stopping.

- Validation: Evaluate the trained model on the held-out test set. Key metrics: RMSE of Hamiltonian elements, band energies, and forces (obtained via Hellmann–Feynman theorem from the predicted Hamiltonian).
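The early-stopping rule from the training step is independent of the deep learning framework. The sketch below shows one common patience-based variant applied to a recorded validation curve. The `patience` and `min_delta` parameters are assumptions, not values prescribed by this protocol.

```python
def early_stopping_epoch(val_losses, patience=5, min_delta=0.0):
    """Return the index of the best epoch, scanning until validation loss has
    failed to improve by more than min_delta for `patience` epochs in a row."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; keep the checkpoint from best_epoch
    return best_epoch

# Synthetic validation curve: improves, then overfits.
history = [1.00, 0.60, 0.40, 0.35, 0.36, 0.37, 0.39, 0.42, 0.30]
print(early_stopping_epoch(history, patience=3))
```

With `patience=3` the scan stops before reaching the late improvement at the end of the synthetic curve, which illustrates the trade-off between training cost and the risk of stopping too early.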

Protocol 3: Using MALA for Accelerated DFT Calculations

Objective: To employ a pre-trained MALA model to accelerate a new DFT calculation for an unseen atomic configuration.

Materials & Software:

- Pre-trained MALA model

- DFT code with MALA interface (e.g., modified VASP, Quantum ESPRESSO)

- Initial atomic configuration

Methodology:

- Descriptor Calculation: For the new configuration, compute local atomic descriptors.

- MALA Prediction: Pass descriptors through the network to predict the system's Hamiltonian (`H_MALA`).

- DFT Initialization: Provide `H_MALA` as the initial guess for the Hamiltonian in the DFT code's first SCF iteration.

- Accelerated SCF Cycle: Proceed with the standard DFT SCF cycle. Due to the high-quality initial guess, convergence is typically achieved in significantly fewer iterations.

- (Optional) Full MALA-DFT Hybrid: For non-self-consistent properties, directly diagonalize `H_MALA` to obtain electronic structure information without any DFT cycles.
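Why a better initial guess shortens the SCF cycle can be seen in a toy model. The sketch below is not a DFT calculation: it solves the scalar self-consistency condition x = cos(x) with linear mixing, as a stand-in for the Kohn-Sham map, and compares a poor starting point with one close to the solution. All names and values are illustrative.

```python
import math

def scf_iterations(update, x0, mixing=0.5, tol=1e-8, max_iter=200):
    """Iterate x <- (1 - mixing) * x + mixing * update(x) until the change
    falls below tol. Returns (converged value, number of iterations)."""
    x = x0
    for iteration in range(1, max_iter + 1):
        x_new = (1.0 - mixing) * x + mixing * update(x)
        if abs(x_new - x) < tol:
            return x_new, iteration
        x = x_new
    raise RuntimeError("did not converge")

# Toy self-consistency condition x = cos(x); stands in for the Kohn-Sham map.
cold_value, cold_iters = scf_iterations(math.cos, x0=3.0)
warm_value, warm_iters = scf_iterations(math.cos, x0=0.74)  # plays the role of an ML-predicted guess
print(cold_iters, warm_iters)
```

Both runs converge to the same fixed point, but the warm start needs fewer iterations. This is the effect the accelerated SCF step relies on.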

Visualizations

MALA Workflow from Atoms to Properties

MALA-Accelerated DFT SCF Cycle

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for MALA

| Item / Software | Function in MALA Research |

|---|---|

| VASP / Quantum ESPRESSO | First-principles DFT codes used to generate the ground-truth Hamiltonian and total energy/force data for training. |

| LAMMPS / ASE | Atomic-scale simulation packages used to generate diverse training configurations via classical MD or MC, often driven by active learning loops. |

| SOAP / ACSF Descriptors | Mathematical frameworks for converting the positions and species of neighboring atoms into a fixed-length, rotationally invariant vector that describes a local atomic environment. |

| PyTorch / TensorFlow | Deep learning frameworks used to construct, train, and deploy the neural network that learns the descriptor-to-Hamiltonian map. |

| MALA Software Suite | Dedicated Python package that provides data handling, descriptor calculators, standard network architectures, and interfaces to common DFT codes. |

| MPI / High-Performance Cluster | Enables parallel generation of large training datasets and distributed training of large neural networks on thousands of configurations. |

| Active Learning Library (e.g., modAL) | Facilitates the implementation of query strategies to intelligently select new configurations for DFT calculations, maximizing dataset efficiency. |

Within the thesis on MALA (Materials Learning Algorithms) for DFT acceleration, a clear delineation from other Machine Learning Force Fields (ML-FF) and Deep Potential methods is essential. MALA is not merely another ML-FF; it is a framework designed specifically to bypass the computationally expensive step of generating total DFT electron densities by directly learning the local density of states (LDOS) from atomic configurations. This enables the prediction of materials properties without solving the Kohn-Sham equations for every new structure.

The fundamental distinctions are summarized in the table below.

Table 1: Core Methodological Distinctions

| Feature | MALA | Traditional ML-FF (e.g., SchNet, GAP) | Deep Potential (DeePMD) |

|---|---|---|---|

| Primary Target | Local Density of States (LDOS) | Interatomic Potential (Forces/Energy) | Interatomic Potential via Atomic Energy |

| DFT Data Requirement | LDOS from a single DFT SCF calculation per configuration | Total Energy & Forces from multiple configurations | Total Energy, Forces, & Virial from multiple configurations |

| Property Prediction Path | Atomic Config → Predicted LDOS → Any Density-Derivable Property (E, F, stress, DOS) | Atomic Config → Direct Prediction of E & F | Atomic Config → Partitioned Atomic Energy → Sum for Total E, Derivatives for F |

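| Bypasses Full SCF | Yes, at inference. LDOS prediction avoids iterative DFT cycles for new structures, though training data still comes from DFT. | At inference only, and without predicting electronic structure. Training data comes from full DFT calculations. | At inference only, and without predicting electronic structure. Training data comes from full DFT calculations. |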

| Transferability Promise | High for property space accessible via LDOS, across local atomic environments. | Limited to chemical/phase space of training data. | Limited to chemical/phase space of training data. |

| Computational Scaling | ~O(N) after training; initial LDOS calc cheaper than full SCF. | ~O(N) after training. | ~O(N) after training. |

Detailed Application Notes

Application Note 1: Workflow for Generalized Property Prediction

MALA's unique workflow enables a "single-training, multi-property" paradigm. Once a neural network is trained to predict the LDOS from atomic coordinates, any property that can be derived from the electron density (and thus the LDOS) can be computed without further DFT.

- Input: A new, unseen atomic configuration.

- MALA Inference: The MALA network predicts the full LDOS for this configuration.

- Post-processing: The predicted LDOS is integrated to obtain the electron density `n(r)`.

- Property Extraction: Using functionals of `n(r)`:

- Total Energy: Use `E[n(r)]` via kinetic and interaction energy functionals.

- Forces: Compute via Hellmann-Feynman theorem using predicted LDOS.

- Density of States: Directly from LDOS.

- Electronic Stresses: Derived from energy density.
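Part of the post-processing can be illustrated with the density of states alone. The sketch below assumes the LDOS has already been integrated over space into a DOS on an energy grid, and then computes the electron count and the band energy as Fermi-weighted integrals. It covers only these two quantities, not the full total energy, and all function names are illustrative.

```python
import math

def fermi(energy, mu, kT):
    """Fermi-Dirac occupation; all arguments share the same energy unit."""
    x = (energy - mu) / kT
    if x > 700:  # avoid overflow in exp for states far above mu
        return 0.0
    return 1.0 / (1.0 + math.exp(x))

def trapezoid(ys, xs):
    """Trapezoidal-rule integral of samples ys over grid xs."""
    return sum(0.5 * (ys[i] + ys[i + 1]) * (xs[i + 1] - xs[i])
               for i in range(len(xs) - 1))

def electrons_and_band_energy(energies, dos, mu, kT):
    """Return (N, E_band) with N = int D f dE and E_band = int E D f dE."""
    occ = [fermi(e, mu, kT) for e in energies]
    n_electrons = trapezoid([d * f for d, f in zip(dos, occ)], energies)
    e_band = trapezoid([e * d * f for e, d, f in zip(energies, dos, occ)], energies)
    return n_electrons, e_band

# Toy DOS: constant 1 state/eV between 0 and 2 eV, Fermi level at 1 eV.
grid = [i * 0.001 for i in range(2001)]
n, e_band = electrons_and_band_energy(grid, [1.0] * len(grid), mu=1.0, kT=0.01)
print(f"N = {n:.3f} electrons, E_band = {e_band:.3f} eV")
```

For this toy DOS the result is close to one electron and a band energy of about 0.5 eV, which matches the zero-temperature values expected for a half-filled constant band.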

Application Note 2: Data Efficiency & Domain Transfer

A key thesis finding is MALA's potential for superior data efficiency in novel materials domains. Because MALA learns the fundamental electronic structure descriptor (LDOS), which is more transferable across similar local chemical environments than total energies, it can potentially generalize to new phases or defects with fewer training samples compared to ML-FFs that learn total energies directly. For instance, a MALA model trained on bulk BCC Tungsten may require fewer additional calculations to accurately predict properties of a Tungsten vacancy or surface.

Experimental Protocols

Protocol 1: Training a MALA Model for Bulk Silicon

Objective: To create a MALA model capable of predicting the total energy of diamond-cubic Silicon under isotropic strain.

Materials: See "Scientist's Toolkit" below.

Procedure:

- DFT Data Generation (Training Set):

- Generate 500 atomic configurations of 64-atom Si supercells with random isotropic strains (±5% from equilibrium).

- Perform a single, non-self-consistent DFT calculation for each configuration using a pre-determined Hamiltonian (from a prior SCF calculation on a reference structure). This yields the LDOS for each configuration.

- Store: Atomic coordinates (`.xyz`), LDOS data (`.hdf5`), and the reference Hamiltonian.

- Preprocessing:

- Transform atomic coordinates into a bispectrum descriptor for each atom's local environment (radius typically 6-8 Å).

- Flatten and align LDOS data (energy grid points) corresponding to each descriptor.

- Neural Network Training:

- Network Architecture: Use a fully-connected feedforward network (e.g., 5 layers, 256 neurons/layer, Swish activation).

- Input: Bispectrum vector for an atom.

- Output: Predicted LDOS vector for that atom's associated grid point.

- Loss Function: Mean Squared Error (MSE) between predicted and DFT-calculated LDOS.

- Training: 80/10/10 train/validation/test split. Use Adam optimizer with early stopping.

- Validation:

- Predict LDOS for the test set configurations.

- Integrate to get electron density and compute total energies.

- Compare predicted vs. DFT-calculated energies (not used in training). Target RMSE < 2 meV/atom.

Protocol 2: Benchmarking Against DeePMD

Objective: Compare the data efficiency of MALA vs. DeePMD for predicting formation energies of a binary alloy.

Procedure:

- Common Dataset Creation:

- Generate 1000 configurations of AₓB₁₋ₓ alloy supercells with varying concentrations and local disorder.

- Perform full, self-consistent DFT calculations to obtain reference total energies and forces for all configurations.

- DeePMD Training:

- Use the DeePMD-kit package.

- Train a Deep Potential model using energies and forces from a subset (e.g., 50, 100, 200 samples) of the data.

- Validate on a held-out set. Record energy and force RMSE vs. training set size.

- MALA Training:

- For the same training subsets, extract the LDOS from the non-self-consistent calculations run with the Hamiltonian from a single, average alloy structure.

- Train MALA models (as per Protocol 1) on the LDOS from these subsets.

- Predict energies for the validation set via LDOS post-processing. Record energy RMSE.

- Analysis:

- Plot RMSE vs. Training Set Size for both methods (see Table 2).

Table 2: Hypothetical Benchmark Results (RMSE)

| Training Set Size | DeePMD Energy (meV/atom) | DeePMD Force (eV/Å) | MALA Energy (meV/atom) |

|---|---|---|---|

| 50 | 25.1 | 0.15 | 18.7 |

| 100 | 12.4 | 0.09 | 8.9 |

| 200 | 7.8 | 0.06 | 5.2 |

| 500 | 4.1 | 0.04 | 3.9 |
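One way to summarize a learning curve like the one in Table 2 is to fit a power law, RMSE ∝ n^(-α), by least squares in log-log space. The sketch below applies that fit to the hypothetical values from the table. The exponent is only a rough descriptive measure for four data points, and the function name is illustrative.

```python
import math

def power_law_exponent(sizes, errors):
    """Least-squares slope of log(error) vs log(size).
    Returns alpha in the model error ~ size**(-alpha)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(e) for e in errors]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    return -slope

sizes = [50, 100, 200, 500]
deepmd_rmse = [25.1, 12.4, 7.8, 4.1]  # meV/atom, hypothetical values from Table 2
mala_rmse = [18.7, 8.9, 5.2, 3.9]     # meV/atom, hypothetical values from Table 2

print(f"DeePMD alpha = {power_law_exponent(sizes, deepmd_rmse):.2f}")
print(f"MALA   alpha = {power_law_exponent(sizes, mala_rmse):.2f}")
```

A larger exponent means the error falls faster as training data is added. Comparing the absolute RMSE at small training-set sizes is the more direct measure of data efficiency.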

Diagrams

MALA Property Prediction Workflow

MALA vs Standard ML-FF Logical Pathway

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for MALA

| Item | Function/Description | Example/Tool |

|---|---|---|

| DFT Code | Generates the reference LDOS data via non-self-consistent calculations. | CP2K, Quantum ESPRESSO |

| MALA Software Suite | Core package for preprocessing, network training, and property prediction. | MALA (mala-project.org) |

| Descriptor Library | Transforms atomic coordinates into a rotationally invariant representation. | LAMMPS (with the ML-SNAP package for bispectrum descriptors) |

| Deep Learning Framework | Backend for constructing and training the neural network. | PyTorch, TensorFlow, JAX |

| High-Throughput Manager | Manages large-scale generation of training configurations and DFT calculations. | AiiDA, FireWorks |

| Electronic Structure Analyzer | Validates predicted LDOS/DOS against reference. | p4vasp, VESTA |

| Reference Hamiltonian File | Contains the kinetic, potential, and overlap matrices from a prior SCF run. Critical for LDOS generation. | .dH, .spline files (CP2K) |

| High-Performance Computing (HPC) | Essential for both DFT data generation and neural network training. | CPU/GPU Clusters with MPI & CUDA support |

The Foundational Papers and Development Timeline of the MALA Framework

The Materials Learning Algorithms (MALA) framework is a software stack designed to accelerate Density Functional Theory (DFT) calculations for materials science by leveraging machine learning (ML). Its core innovation is the direct prediction of electronic structures using deep neural networks, bypassing the need to solve the Kohn-Sham equations explicitly. The development is led by Sandia National Laboratories and the Center for Advanced Systems Understanding (CASUS) at Helmholtz-Zentrum Dresden-Rossendorf, together with collaborating institutions.

Foundational Papers and Timeline

The following table summarizes the key publications that established and advanced the MALA framework.

Table 1: Foundational Papers in MALA Development

| Year | Paper Title (Key Authors) | Core Contribution | Impact on MALA Framework |

|---|---|---|---|

| 2021 | Accelerating finite-temperature Kohn-Sham density functional theory with deep neural networks (J. A. Ellis et al.) | Introduced the concept of using a neural network to predict electronic structure descriptors (e.g., local density of states - LDOS) directly from atomic configurations. | Foundational concept. Established the ML approach to replace the most expensive part of DFT. |

| 2022 | MALA: A framework for materials learning algorithms for DFT acceleration (K. A. Dominey et al.) | Formalized the MALA software stack. Detailed the data handling, model training (including Spectral Neighbor Analysis Potential - SNAP descriptors), and inference pipeline for property prediction. | Framework definition. Provided the first comprehensive software tool and methodology. |

| 2022/2023 | Large-scale deep learning for electronic structure calculations (Multiple) | Demonstrated scalability. Showed training on >100,000 DFT calculations and application to systems with >100,000 atoms, achieving speed-ups of 1000-10,000x over DFT. | Proof of scalability. Validated the framework for large, practical materials simulations. |

| 2023/2024 | Extending MALA for complex alloys and defect physics (J. A. R. et al.) | Extended the descriptor set and network architectures to handle complex multi-component materials and the localized electronic states of defects. | Framework generalization. Expanded applicability beyond simple bulk materials. |

Core Experimental Protocols

Protocol: Generating a MALA Training Data Set

This protocol details the steps to create the foundational data for training a MALA model.

Objective: Produce a set of atomic configurations and their corresponding Local Density of States (LDOS) as calculated by DFT.

Materials & Software:

- Atomic Simulator: LAMMPS or similar MD package.

- DFT Code: VASP, QE, or CP2K.

- MALA Software Stack: Installed from official repository.

Procedure:

- Configuration Sampling:

- Use LAMMPS to perform molecular dynamics (MD) simulations of the target material at relevant temperatures/pressures.

- Extract a diverse set of atomic snapshots (`geometry.in` files) from the MD trajectory. Ensure sampling covers expected phases and distortions.

- DFT-LDOS Calculation:

- For each atomic snapshot, perform a DFT calculation using VASP/QE/CP2K.

- Configure the DFT calculation to output the projected Local Density of States (LDOS) on a real-space grid. This is the key target quantity.

- The output for each configuration is a pair: `geometry.in` (atoms) and `ldos.npy` (grid-based LDOS).

- Data Preprocessing with MALA:

- Use `mala datahandler` to convert raw DFT outputs into MALA's `.h5` data format.

- The handler performs descriptor calculation (e.g., bispectrum SNAP descriptors) for each atomic environment in the grid.

- The final preprocessed data links atomic environment descriptors to their local LDOS value.

The Scientist's Toolkit: Research Reagent Solutions

- VASP/QE/CP2K: Function: High-fidelity electronic structure solver. Generates the "ground truth" LDOS data for training.

- LAMMPS: Function: Atomic-scale modeler. Generates physically realistic atomic configurations through MD.

- SNAP/Bispectrum Descriptors: Function: Mathematical representation of atomic neighborhoods. Translates atomic positions into a rotationally invariant input vector for the neural network.

- LDOS (Local Density of States): Function: The target physical quantity. Contains all information needed to compute electronic properties (energy, forces, stresses).

- PyTorch: Function: ML backend. Provides the infrastructure for building, training, and deploying the deep neural network models within MALA.

Protocol: Training a MALA Model

Objective: Train a neural network to map atomic environment descriptors to the LDOS.

Procedure:

- Data Partitioning: Split the preprocessed data set into training (≈80%), validation (≈10%), and testing (≈10%) sets.

- Model Architecture Definition: Select a neural network architecture (e.g., fully connected, modified DeepMD). The input layer size must match the descriptor vector length.

- Loss Function & Training:

- Use a mean-squared-error (MSE) loss between predicted and DFT-calculated LDOS.

- Train using the Adam optimizer on the training set.

- Monitor loss on the validation set to avoid overfitting and determine stopping point.

- Model Validation: Evaluate the final model on the test set (unseen during training). Key metric: LDOS prediction error. Subsequently, use MALA's post-processing to compute derived properties (e.g., total energy) and compare to DFT benchmarks.

Visualization of Workflows and Relationships

MALA Framework Development and Application Pipeline

MALA Software Stack Architecture

Table 2: Key Performance Metrics from MALA Literature

| System Type | DFT Time (est.) | MALA Inference Time | Speed-Up Factor | Key Property Error |

|---|---|---|---|---|

| Bulk Silicon (1000 atoms) | ~1000 CPU-hrs | ~1 CPU-hr | ~1000x | Total Energy < 1 meV/atom |

| Ta Defect System (10,000 atoms) | >10,000 CPU-hrs | ~1 CPU-hr | >10,000x | Formation Energy < 5 meV |

| Al-Mg Alloy (MD step) | ~50 CPU-hrs/step | ~0.05 CPU-hrs/step | ~1000x | Forces < 0.05 eV/Å |

Implementing MALA: A Step-by-Step Guide for Biomolecular System Analysis

This document details the end-to-end workflow for building machine-learned electronic structure surrogates using the Materials Learning Algorithms (MALA) framework. Within the broader thesis on DFT acceleration research, MALA represents a shift from direct on-the-fly DFT calculations to a data-driven approach where a neural network is trained to predict the local density of states (LDOS) from atomic configurations. This surrogate model enables molecular dynamics and property prediction at near-DFT accuracy for a fraction of the computational cost, accelerating materials and molecular discovery for applications ranging from battery electrolytes to pharmaceutical solid forms.

Core Workflow Protocol

The following protocol outlines the primary stages for transforming ab initio DFT calculations into a deployable MALA model.

Stage 1: DFT Data Generation

Objective: Generate a comprehensive, high-quality dataset of atomic configurations and their corresponding quantum mechanical descriptors (LDOS) via DFT.

Experimental Protocol:

- System Definition:

- Define the chemical space of interest (e.g., Al, Si, Ge mixtures). Specify the range of temperatures, pressures, and compositions to be sampled.

- Use crystal structure databases (e.g., Materials Project, OQMD) for initial equilibrium structures.

- Configuration Sampling (Active Learning):

- Initial Sampling: Perform a small set (~50-100) of DFT calculations on diverse structures (random perturbations, lattice strains, elemental swaps).

- Iterative Loop (Batch Active Learning): a. Train a preliminary MALA model on existing data. b. Use the model's predictive uncertainty (e.g., ensemble variance, dropout variance) to select new, "surprising" atomic configurations from a large pool of candidate structures generated via molecular dynamics (e.g., LAMMPS) with a classical potential. c. Run DFT on these high-uncertainty configurations. d. Add the new (configuration, LDOS) pairs to the training set. e. Repeat until model uncertainty and error metrics converge across the target phase space.

- DFT Calculation Parameters:

- Software: VASP, Quantum ESPRESSO, or ABINIT.

- Functional: PBE or SCAN.

- Pseudopotential: Projector-augmented wave (PAW) or norm-conserving.

- k-point Grid: Use a k-point density of at least 30 points per Å⁻¹.

- Energy Cutoff: Set `ENCUT` (or the equivalent plane-wave cutoff in other codes) to at least 1.3 times the maximum recommended cutoff for all element pseudopotentials.

- LDOS Calculation: Extract the LDOS on a dense, localized real-space grid (e.g., 120 radial points, 20 angular points) for each atom, spanning an energy range from ~20 eV below to ~20 eV above the Fermi level with a resolution of ~0.1 eV.

Diagram: Active Learning Data Generation Loop
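Step (b) of the loop above can be sketched in a framework-agnostic way. This is a minimal illustration that assumes ensemble variance as the uncertainty measure. The helper names `ensemble_uncertainty` and `select_for_dft` are hypothetical and not part of the MALA package, and the short vectors stand in for LDOS predictions from independently trained models.

```python
from statistics import pvariance

def ensemble_uncertainty(predictions):
    """Mean per-component variance across ensemble members.

    predictions: list (one entry per model) of equal-length LDOS-like vectors.
    """
    n_components = len(predictions[0])
    per_component = [
        pvariance([member[k] for member in predictions])
        for k in range(n_components)
    ]
    return sum(per_component) / n_components

def select_for_dft(candidates, batch_size):
    """Return the names of the `batch_size` most uncertain candidates.

    candidates: dict mapping a configuration name to its ensemble predictions.
    """
    ranked = sorted(
        candidates,
        key=lambda name: ensemble_uncertainty(candidates[name]),
        reverse=True,
    )
    return ranked[:batch_size]

# Toy pool: three configurations, each predicted by a three-member ensemble.
pool = {
    "cfg_a": [[1.0, 2.0], [1.0, 2.0], [1.0, 2.0]],  # members agree
    "cfg_b": [[1.0, 2.0], [1.5, 2.5], [0.5, 1.5]],  # moderate disagreement
    "cfg_c": [[0.0, 0.0], [2.0, 4.0], [4.0, 8.0]],  # strong disagreement
}
print(select_for_dft(pool, batch_size=2))
```

The configurations returned here would be passed to DFT in step (c); the batch size trades DFT cost per iteration against the number of retraining cycles.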

Stage 2: MALA Model Training

Objective: Train a neural network to predict the LDOS for a local atomic environment.

Experimental Protocol:

- Data Preprocessing:

- Descriptor Calculation: Transform each local atomic environment into a bispectrum descriptor (or similar symmetry-preserving descriptor like SOAP).

- LDOS Standardization: Normalize LDOS vectors (per energy point) across the dataset to zero mean and unit variance.

- Train/Validation/Test Split: Use an 80/10/10 stratified split based on configurational energy.

- Network Architecture & Training:

- Architecture: Use a fully connected deep neural network (e.g., 5 layers, 500 nodes/layer). Input: bispectrum components. Output: predicted LDOS vector.

- Loss Function: Mean squared error (MSE) between predicted and true LDOS.

- Optimizer: Adam with an initial learning rate of 1e-3 and a decay schedule.

- Regularization: Employ dropout (rate=0.01) and early stopping based on validation loss.

- Hyperparameter Optimization: Use Bayesian optimization to tune network depth, width, learning rate, and batch size.

- Validation: Monitor the RMSE of the LDOS prediction and derived quantities like the electronic density of states (DOS) and total free energy.
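The LDOS standardization step can be illustrated with a small, dependency-free sketch. It assumes the LDOS is stored as one vector per sample and that the scaling statistics are fitted on the training split only; the function names are hypothetical and chosen for this example.

```python
from statistics import fmean, pstdev

def fit_standardizer(ldos_rows):
    """Compute the per-energy-point mean and standard deviation.

    ldos_rows: list of LDOS vectors, one per sample, all of equal length.
    """
    columns = list(zip(*ldos_rows))
    means = [fmean(col) for col in columns]
    # Constant columns have zero spread; fall back to 1.0 to avoid dividing by zero.
    stds = [pstdev(col) or 1.0 for col in columns]
    return means, stds

def standardize(ldos_rows, means, stds):
    return [
        [(value - m) / s for value, m, s in zip(row, means, stds)]
        for row in ldos_rows
    ]

def destandardize(scaled_rows, means, stds):
    """Invert the scaling so predictions return to physical units."""
    return [
        [value * s + m for value, m, s in zip(row, means, stds)]
        for row in scaled_rows
    ]

train = [[0.0, 2.0, 5.0], [1.0, 4.0, 5.0], [2.0, 6.0, 5.0]]
means, stds = fit_standardizer(train)  # statistics from training data only
scaled = standardize(train, means, stds)
restored = destandardize(scaled, means, stds)
```

Reusing the training-set statistics for the validation and test splits keeps information from those splits out of the model inputs.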

Table 1: Typical Hyperparameter Search Space for MALA Training

| Hyperparameter | Search Range | Optimal Value (Example: Silicon) |

|---|---|---|

| Network Depth | 3 - 8 layers | 5 |

| Network Width | 200 - 800 nodes | 500 |

| Learning Rate | 1e-4 - 1e-2 | 3e-3 |

| Batch Size | 32 - 512 | 128 |

| Dropout Rate | 0.0 - 0.05 | 0.01 |

| Descriptor Cutoff Radius | 4.0 - 8.0 Å | 6.5 Å |

Stage 3: Model Deployment & Inference

Objective: Integrate the trained MALA model into molecular dynamics (MD) or property prediction workflows.

Experimental Protocol:

- Model Export: Convert the trained PyTorch/TensorFlow model to a portable format (e.g., ONNX) or integrate directly via LAMMPS-PyTorch interface.

- Integration with MD Engine (LAMMPS):

- Use the `mliap` package in LAMMPS with the `pyTorch` or `onnx` option.

- Provide the model file and descriptor configuration (cutoff, species).

- The MALA model is called at each MD step to predict the LDOS for each atom.

- Property Calculation:

- Forces & Stress: Forces are obtained via automatic differentiation of the total energy (derived from LDOS) with respect to atomic positions.

- Total Energy: Computed by integrating the predicted LDOS.

- Electronic Properties: DOS, band energy, and electron density can be reconstructed on-the-fly from the LDOS predictions.
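How a predicted LDOS turns into derived quantities can be sketched as follows. The example assumes the standard relations D(ε) = Σ_points LDOS · ΔV, N = ∫ f(ε) D(ε) dε and E_band = ∫ ε f(ε) D(ε) dε with a Fermi-Dirac occupation f. It covers the band energy only; the remaining contributions to the total energy are not shown. All function names are illustrative.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K

def fermi_dirac(energy, fermi_level, temperature):
    x = (energy - fermi_level) / (K_B_EV * temperature)
    # Clamp the exponent to avoid overflow far from the Fermi level.
    if x > 500:
        return 0.0
    if x < -500:
        return 1.0
    return 1.0 / (1.0 + math.exp(x))

def dos_from_ldos(ldos, volume_element):
    """Sum the LDOS over spatial sample points to obtain the DOS."""
    n_energies = len(ldos[0])
    return [volume_element * sum(point[k] for point in ldos)
            for k in range(n_energies)]

def trapezoid(ys, xs):
    return sum(0.5 * (ys[i] + ys[i + 1]) * (xs[i + 1] - xs[i])
               for i in range(len(xs) - 1))

def electron_count(dos, energies, fermi_level, temperature):
    integrand = [fermi_dirac(e, fermi_level, temperature) * d
                 for e, d in zip(energies, dos)]
    return trapezoid(integrand, energies)

def band_energy(dos, energies, fermi_level, temperature):
    integrand = [e * fermi_dirac(e, fermi_level, temperature) * d
                 for e, d in zip(energies, dos)]
    return trapezoid(integrand, energies)

# Toy data: flat LDOS on four sample points, energies from -2 eV to 2 eV.
energies = [(i - 20) / 10 for i in range(41)]
ldos = [[0.5] * len(energies) for _ in range(4)]
dos = dos_from_ldos(ldos, volume_element=0.5)
n_electrons = electron_count(dos, energies, fermi_level=0.0, temperature=10.0)
e_band = band_energy(dos, energies, fermi_level=0.0, temperature=10.0)
```

In practice the Fermi level is not known in advance; it is usually found by adjusting it until the electron count matches the number of electrons in the system.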

Diagram: MALA Model Inference in MD Simulation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Software & Computational Tools for the MALA Workflow

| Item (Software/Package) | Category | Function & Relevance |

|---|---|---|

| VASP / Quantum ESPRESSO | First-Principles Calculator | Performs the foundational DFT calculations to generate the LDOS and total energy reference data. Crucial for accuracy. |

| LAMMPS | Molecular Dynamics Engine | Used for both generating candidate configurations via classical MD and as the primary deployment platform for MALA-driven quantum-accurate MD. |

| PyTorch / TensorFlow | Machine Learning Framework | Provides the flexible environment for building, training, and optimizing the neural network models that predict LDOS. |

| MALA Package | Specialized Framework | Provides the end-to-end pipeline (descriptors, data handling, training scripts, LAMMPS interface) tailored for LDOS-based learning. |

| ASE (Atomic Simulation Environment) | Atomic Manipulation | Python library for setting up, manipulating, and analyzing atomic structures across DFT and MD workflows. |

| pymatgen | Materials Analysis | Used for advanced crystal structure analysis, generation, and database interaction (e.g., with Materials Project). |

| Hyperopt / Optuna | Hyperparameter Optimization | Frameworks for automating the search for optimal neural network parameters, critical for model performance. |

This workflow transforms the computational materials science pipeline. By decoupling the expensive DFT calculation from the MD loop via a learned LDOS surrogate, MALA can achieve speedups of roughly 3-5 orders of magnitude over direct DFT while retaining near-DFT accuracy. The active learning protocol ensures data efficiency and model robustness across configurational space. Future work within this thesis will focus on extending MALA to broader chemical spaces (organic molecules, electrolytes), improving uncertainty quantification, and integrating directly with high-throughput experimental characterization data.

Within the broader thesis on the Materials Learning Algorithms (MALA) framework for accelerating Density Functional Theory (DFT) calculations, the preparation of training data is the foundational step. The accuracy and efficiency of the resulting machine learning potential (MLP) or surrogate model are directly contingent on the quality and representativeness of the ab initio training set. This protocol details the systematic generation and curation of such datasets, focusing on high-throughput workflows and quality assurance metrics essential for computational materials science and drug development research, where precise molecular and materials interactions are critical.

Application Notes and Protocols

Protocol 2.1: High-Throughput DFT Calculation and Initial Data Generation

Objective: To generate a comprehensive set of ab initio reference calculations (total energies, forces, stress tensors) for diverse atomic configurations.

Methodology:

- System Definition: Define the chemical system (e.g., Al-Mg alloy, water clusters). Specify the range of relevant densities (via lattice scaling), temperatures, and potential compositional variations.

- Configuration Sampling: Use the Atomic Cluster Expansion (ACE) descriptor to guide sampling of the configuration space. Perform molecular dynamics (MD) simulations using a preliminary, less accurate interatomic potential (e.g., embedded atom method) to generate a trajectory of atomic snapshots.

- Snapshot Selection: Apply a curation strategy (see Protocol 2.2) to select a non-redundant, diverse subset of snapshots (e.g., 500-5000 frames) for DFT calculation. This step minimizes computational cost while maximizing information content.

- DFT Calculations: Perform high-throughput DFT calculations using codes like VASP, Quantum ESPRESSO, or ABINIT.

- Key Settings: Consistently apply a specific exchange-correlation functional (e.g., PBE, SCAN), plane-wave energy cutoff, k-point grid density, and convergence criteria for electronic and ionic steps.

- Outputs: Extract total energy (eV), atomic forces (eV/Å), and the stress tensor (kbar) for each snapshot.

Research Reagent Solutions Table:

| Item | Function in Protocol |

|---|---|

| VASP/Quantum ESPRESSO | Ab initio DFT software to compute the ground-truth quantum mechanical properties. |

| LAMMPS | MD engine to run preliminary simulations and generate initial atomic configurations. |

| ACE Descriptor | A mathematically complete descriptor to quantify atomic environments and assess similarity between configurations. |

| High-Performance Computing (HPC) Cluster | Essential computational resource for executing thousands of parallel DFT calculations. |

| PyIron, AiiDA | Workflow management systems to automate, track, and reproduce high-throughput calculation pipelines. |

Protocol 2.2: Descriptor-Based Curation and Quality Control

Objective: To filter the generated DFT data, ensuring the training set is balanced, free of outliers, and representative of the target phase space.

Methodology:

- Descriptor Calculation: For all generated snapshots (both DFT-calculated and a larger pool of unsampled configurations), compute the Smooth Overlap of Atomic Positions (SOAP) or ACE descriptor for each atom's local environment.

- Similarity Analysis & Clustering: Perform dimensionality reduction (e.g., using PCA) on the high-dimensional descriptor vectors. Use clustering algorithms (e.g., k-means) to group similar atomic environments. Visualize the distribution to identify gaps or over-dense regions.

- Active Learning Loop (Optional but Recommended): Train an initial MLP on the current DFT set. Use it to run MD and predict the local entropy or uncertainty of new configurations. Select configurations with high uncertainty for subsequent DFT calculation, iteratively improving the model's robustness.

- Data Validation & Cleaning:

- Remove snapshots where the DFT SCF cycle did not converge.

- Flag and inspect configurations with anomalously high energies/forces (possible calculation errors).

- Ensure a balanced selection from all identified clusters in descriptor space.
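The validation and cleaning step can be sketched as below. The example assumes each snapshot is a dictionary with the hypothetical keys `scf_converged` and `energy_per_atom`, and it uses the median absolute deviation as one possible outlier criterion; the source does not prescribe a specific test.

```python
from statistics import median

def clean_snapshots(snapshots, max_deviation=5.0):
    """Split snapshots into kept and flagged lists.

    Unconverged snapshots are dropped. Converged snapshots whose energy
    lies more than `max_deviation` median absolute deviations from the
    median are flagged for manual inspection rather than deleted.
    """
    converged = [s for s in snapshots if s["scf_converged"]]
    energies = [s["energy_per_atom"] for s in converged]
    center = median(energies)
    # A robust spread estimate; the tiny floor avoids division by zero.
    mad = median(abs(e - center) for e in energies) or 1e-12
    kept, flagged = [], []
    for s in converged:
        score = abs(s["energy_per_atom"] - center) / mad
        (flagged if score > max_deviation else kept).append(s)
    return kept, flagged

snapshots = [
    {"scf_converged": True, "energy_per_atom": -5.40},
    {"scf_converged": True, "energy_per_atom": -5.42},
    {"scf_converged": True, "energy_per_atom": -5.44},
    {"scf_converged": True, "energy_per_atom": -5.41},
    {"scf_converged": True, "energy_per_atom": -5.43},
    {"scf_converged": True, "energy_per_atom": -2.00},   # suspicious value
    {"scf_converged": False, "energy_per_atom": -5.39},  # SCF failure
]
kept, flagged = clean_snapshots(snapshots)
```

Flagging instead of deleting keeps physically meaningful high-energy configurations available after inspection.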

Quantitative Data Summary Table:

Table 1: Example Data Summary for a Crystalline Silicon Training Set

| Metric | Value | Purpose/Interpretation |

|---|---|---|

| Total Initial Snapshots Generated (MD) | 50,000 | Raw configuration pool. |

| Snapshots Selected for DFT | 2,000 | Curation reduces cost by 96%. |

| DFT Functional | PBE | Standard choice for solids. |

| Avg. Energy per Atom (eV/atom) | -5.42 ± 0.15 | Baseline property. |

| Avg. Force Component (eV/Å) | 0.01 ± 0.08 | Indicates convergence to relaxed states. |

| SOAP Descriptor Dimensionality | 220 | Defines local environment fingerprint. |

| Final Number of Clusters (k-means) | 12 | Ensures diversity in training set. |

Protocol 2.3: Data Formatting for MALA and MLIP Training

Objective: To structure the curated ab initio data into standardized formats compatible with ML training frameworks like MALA, DP-GEN, or FitSNAP.

Methodology:

- Data Aggregation: Compile the final list of curated snapshots into a master HDF5 or JSON file. Each entry must contain:

- Atomic numbers and positions (Å).

- Lattice vectors (for periodic systems).

- Total energy (eV).

- Forces on each atom (eV/Å).

- Stress tensor (if applicable).

- Dataset Splitting: Partition the data into training (70-80%), validation (10-15%), and test (10-15%) sets. Ensure splits preserve the distribution of clusters from Protocol 2.2.

- Format Conversion: Use utilities like `ase.io.write()` (Atomic Simulation Environment) or the MALA data loader to convert the master file into framework-specific formats (e.g., NPZ for PyTorch, TFRecord for TensorFlow).
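A split that preserves the cluster distribution from Protocol 2.2 can be sketched as follows. The example assumes one integer cluster label per snapshot and an 80/10/10 partition; `stratified_split` is a hypothetical helper, not a function of MALA or ASE.

```python
import random
from collections import defaultdict

def stratified_split(cluster_labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, validation, test) lists of snapshot indices.

    Each cluster is shuffled and divided separately, so every split
    keeps roughly the same cluster proportions as the full dataset.
    """
    rng = random.Random(seed)
    by_cluster = defaultdict(list)
    for index, label in enumerate(cluster_labels):
        by_cluster[label].append(index)

    train, validation, test = [], [], []
    for label in sorted(by_cluster):
        members = by_cluster[label]
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_val = round(fractions[1] * len(members))
        train += members[:n_train]
        validation += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]  # remainder goes to the test set
    return train, validation, test

# Toy labels: three clusters of different size.
labels = [0] * 50 + [1] * 30 + [2] * 20
train, validation, test = stratified_split(labels)
```

Fixing the seed makes the partition reproducible, which matters when several models are compared on the same test set.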

Visualizations

Diagram 1: Workflow for Training Set Generation & Curation

Diagram 2: Descriptor Space Curation Logic

1. Introduction within MALA-DFT Research

This protocol provides standardized best practices for the critical stages of neural network development within the context of Materials Learning Algorithms (MALA) for Density Functional Theory (DFT) acceleration. Efficient and robust model training is paramount for generating reliable interatomic potentials and materials property predictors that can significantly reduce computational cost compared to ab initio calculations.

2. Hyperparameter Tuning: Systematic Approaches

Hyperparameter optimization (HPO) is essential for maximizing model performance on validation data representing unseen atomic configurations.

2.1. Quantitative Comparison of HPO Strategies

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Key Principle | Pros | Cons | Best Suited For |

|---|---|---|---|---|

| Manual / Grid Search | Exhaustive search over a defined set. | Simple, thorough for low dimensions. | Computationally intractable for high-dimensional spaces. | Initial exploration of 2-3 key parameters. |

| Random Search | Random sampling from defined distributions. | More efficient than grid; better high-dimensional coverage. | May miss subtle optima; can be wasteful. | Early-stage tuning of moderate parameter sets (5-10). |

| Bayesian Optimization | Builds probabilistic model to guide next sample. | Highly sample-efficient; good for expensive evaluations. | Overhead can be high for very cheap evaluations. | Tuning MALA networks where each training trial is costly. |

| Early-Stopping Schedulers (e.g., ASHA) | Early-stopping of poorly performing trials. | Dramatically reduces total compute time. | Increased complexity in implementation. | Large-scale tuning on high-performance computing clusters. |

2.2. Protocol: Bayesian Hyperparameter Tuning for a MALA Potential

Objective: Optimize key hyperparameters for a SchNet-based architecture predicting local electronic densities.

Materials:

- Training Dataset: Local atomic environments and target DFT electron densities (e.g., from OCP Datasets).

- Validation Dataset: Held-out atomic configurations.

- HPO Framework: Optuna or Ray Tune.

- Compute: Cluster with GPU nodes.

Procedure:

- Define Search Space:

- Embedding Dimension: [64, 128, 256, 512] (integer).

- Number of Interaction Blocks: [3, 4, 5, 6] (integer).

- Radial Basis Cutoff: [4.0, 6.0, 8.0] Å (float).

- Learning Rate: [1e-4, 1e-3] (log-uniform float).

- Feature Pooling: {"sum", "mean"} (categorical).

- Define Objective Function:

- For each hyperparameter set, initiate a training run.

- Train for a fixed, reduced number of epochs (e.g., 100).

- Return the root mean square error (RMSE) on the validation set as the metric to minimize.

- Execute Optimization:

- Initialize a Bayesian optimization scheduler with a Tree-structured Parzen Estimator (TPE) sampler.

- Run for a minimum of 50 trials, parallelizing where possible.

- The optimizer will propose new hyperparameter sets based on past trial performance.

- Final Evaluation:

- Select the top 3 performing hyperparameter sets.

- Launch a full training run (e.g., 1000 epochs) for each.

- The set yielding the lowest final validation error is chosen as optimal.
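The structure of this procedure can be sketched without external dependencies. Note the substitution: the example uses plain random search in place of the TPE sampler, which a framework such as Optuna or Ray Tune would supply, and `toy_validation_rmse` is a made-up stand-in for a real training run. Only the search space mirrors the one listed above.

```python
import math
import random

def sample_hyperparameters(rng):
    """Draw one configuration from the search space listed above."""
    return {
        "embedding_dim": rng.choice([64, 128, 256, 512]),
        "n_interactions": rng.choice([3, 4, 5, 6]),
        "cutoff": rng.choice([4.0, 6.0, 8.0]),
        # Log-uniform: sample the exponent uniformly, then exponentiate.
        "learning_rate": 10 ** rng.uniform(math.log10(1e-4), math.log10(1e-3)),
        "pooling": rng.choice(["sum", "mean"]),
    }

def toy_validation_rmse(params):
    """Placeholder objective; a real one would train and validate a model."""
    return (abs(math.log10(params["learning_rate"]) + 3.5)
            + 0.1 * abs(params["n_interactions"] - 4))

def random_search(objective, n_trials=50, seed=0):
    """Evaluate `n_trials` random configurations, best (lowest) first."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        params = sample_hyperparameters(rng)
        trials.append((objective(params), params))
    trials.sort(key=lambda trial: trial[0])
    return trials

# The three best configurations would each receive a full-length training run.
top_three = random_search(toy_validation_rmse)[:3]
```

A model-based sampler differs from this sketch only in how the next configuration is proposed: it uses the scores of earlier trials instead of drawing independently.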

3. Network Architecture Design for Materials Science

Architectures must respect fundamental physical constraints, such as invariance to translation, rotation, and permutation of atom indices.

3.1. Key Architectural Components

Table 2: Essential Neural Network Layers for MALA

| Component | Function | Example in Architecture | Physical Invariance Enforced |

|---|---|---|---|

| Embedding Layer | Maps atomic numbers to continuous feature vectors. | Dense layer with Z as input. | - |

| Radial Basis Functions | Encodes interatomic distances with smooth cutoff. | Exp(-γ*(r - μ)²) | Translational, Rotational |

| Interaction/Message Passing Blocks | Propagates information between connected atoms. | SchNet Interaction Block, MEGNet Layer. | Rotational, Permutational |

| Symmetric Pooling | Aggregates atom-wise features to a global or local descriptor. | Summation or averaging over atoms. | Permutational |

| Output Head | Maps final descriptors to target property. | Dense layers predicting energy, density, etc. | - |
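The radial basis entry in the table can be made concrete with a short sketch. It assumes evenly spaced Gaussian centers and a cosine cutoff envelope; both are common choices rather than something the table specifies, and the parameter values are illustrative.

```python
import math

def cosine_cutoff(r, r_cut):
    """Envelope that decays smoothly from 1 at r = 0 to 0 at r = r_cut."""
    if r >= r_cut:
        return 0.0
    return 0.5 * (math.cos(math.pi * r / r_cut) + 1.0)

def radial_basis(r, r_cut=6.0, n_centers=8, gamma=4.0):
    """Expand a distance r (in Å) into features exp(-gamma * (r - mu)^2)."""
    centers = [r_cut * k / (n_centers - 1) for k in range(n_centers)]
    envelope = cosine_cutoff(r, r_cut)
    return [envelope * math.exp(-gamma * (r - mu) ** 2) for mu in centers]

# The features depend only on the interatomic distance, so shifting both
# atoms by the same vector leaves them unchanged.
atom_a, atom_b = (0.0, 0.0, 0.0), (1.2, 0.5, -0.3)
shift = (10.0, -4.0, 2.5)
moved_a = tuple(x + s for x, s in zip(atom_a, shift))
moved_b = tuple(x + s for x, s in zip(atom_b, shift))
features = radial_basis(math.dist(atom_a, atom_b))
features_moved = radial_basis(math.dist(moved_a, moved_b))
```

Because the envelope reaches zero at the cutoff, atoms entering or leaving the neighborhood do not cause jumps in the features.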

3.2. Protocol: Designing a Message-Passing Network for Energy Prediction

Objective: Construct a model that predicts total potential energy from an atomic structure.

Materials:

- Framework: PyTorch Geometric or TensorFlow with custom layers.

- Data: Atomic coordinates, numbers, and total DFT energies.

Procedure:

- Input Encoding:

- Represent each atom i by a learned embedding vector `h_i^0` based on its nuclear charge `Z_i`.

- For each atom pair (i, j) within cutoff radius `r_cut`, compute a radial basis `RBF(r_ij)`.

- Message Passing (M iterations):

- For each iteration t:

- Message Function: `m_ij^t = MLP( h_i^t || h_j^t || RBF(r_ij) )`, where `||` is concatenation.

- Aggregation: Aggregate messages for atom i: `M_i^t = Σ_{j≠i} m_ij^t`.

- Update Function: Update atom features: `h_i^{t+1} = MLP( h_i^t || M_i^t )`.

- Readout (Pooling):

- The total energy is a sum over learned atom-wise contributions: `E = Σ_i MLP(h_i^M)`. Summation guarantees permutation invariance.

- Loss Calculation:

- Use Mean Absolute Error (MAE) between predicted `E_pred` and true DFT energy `E_DFT`.
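The forward pass of this protocol can be sketched in pure Python. This is an untrained toy: each MLP is replaced by a single tanh layer with fixed random weights, the sizes are arbitrary, and the output has no physical meaning. A framework such as PyTorch Geometric would be used for the real model. The sketch only shows how encoding, message passing, and sum readout fit together.

```python
import math
import random

FEATURES, N_RBF, R_CUT = 4, 4, 5.0
rng = random.Random(0)

def make_layer(n_in, n_out):
    """Random weights for a one-layer tanh network standing in for an MLP."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]

def apply_layer(weights, x):
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in weights]

EMBEDDING = {z: [rng.uniform(-1, 1) for _ in range(FEATURES)] for z in (1, 6, 8)}
W_MESSAGE = make_layer(2 * FEATURES + N_RBF, FEATURES)
W_UPDATE = make_layer(2 * FEATURES, FEATURES)
W_READOUT = make_layer(FEATURES, 1)

def rbf(r):
    centers = [R_CUT * k / (N_RBF - 1) for k in range(N_RBF)]
    return [math.exp(-2.0 * (r - mu) ** 2) for mu in centers]

def predict_energy(numbers, positions, n_iterations=2):
    h = [EMBEDDING[z] for z in numbers]            # h_i^0 from nuclear charge
    n = len(numbers)
    for _ in range(n_iterations):
        new_h = []
        for i in range(n):
            aggregated = [0.0] * FEATURES          # M_i^t
            for j in range(n):
                r_ij = math.dist(positions[i], positions[j])
                if j == i or r_ij > R_CUT:
                    continue
                # m_ij^t from the concatenation h_i || h_j || RBF(r_ij)
                m_ij = apply_layer(W_MESSAGE, h[i] + h[j] + rbf(r_ij))
                aggregated = [a + m for a, m in zip(aggregated, m_ij)]
            new_h.append(apply_layer(W_UPDATE, h[i] + aggregated))
        h = new_h
    # Summing atom-wise contributions makes E independent of atom ordering.
    return sum(apply_layer(W_READOUT, h_i)[0] for h_i in h)

water_numbers = [8, 1, 1]
water_positions = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
energy = predict_energy(water_numbers, water_positions)
```

Reordering the atoms, or translating the whole molecule, leaves the predicted value unchanged up to floating-point rounding, which is the invariance the readout step is meant to guarantee.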

4. Visualization of Workflows

Title: Bayesian Hyperparameter Optimization Workflow

Title: Message-Passing Neural Network Architecture

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MALA Model Development

| Item / Solution | Function in Experiment | Key Considerations for MALA-DFT |

|---|---|---|

| PyTorch / TensorFlow | Core deep learning frameworks for building and training networks. | PyTorch Geometric is highly advantageous for graph-based atomistic models. |

| Optuna / Ray Tune | Frameworks for scalable hyperparameter optimization. | Crucial for automating the search for optimal model configurations. |

| Atomic Simulation Environment (ASE) | Python library for manipulating atoms and interfacing with calculators. | Used for data preprocessing, generating atomic neighborhoods, and workflow integration. |

| Weights & Biases (W&B) / MLflow | Experiment tracking and model management platforms. | Essential for logging hyperparameters, metrics, and model artifacts across hundreds of trials. |

| High-Performance Computing (HPC) Cluster | Provides parallel CPU/GPU resources for training and HPO. | MALA training datasets can be large; HPC enables parallel trial execution and fast iteration. |

| OCP Datasets / Materials Project | Source of pre-computed DFT data for training and benchmarking. | Provides standardized, large-scale materials data crucial for training generalizable models. |

Introduction & Thesis Context

Within the broader thesis on Materials Learning Algorithms (MALA) for accelerating Density Functional Theory (DFT) calculations, a critical application emerges in computational drug discovery. Predicting electronic properties at the protein-ligand interface, such as electrostatic potential, charge transfer, and orbital interactions, is paramount for understanding binding affinity and specificity. Traditional ab initio methods like DFT are prohibitively expensive for these large, solvated biological systems. This application note details how MALA, a framework leveraging machine learning to interpolate DFT-level electronic structure, enables high-throughput, quantum-accurate predictions of these properties, thereby accelerating the rational design of therapeutics.

Key Quantitative Findings

Recent studies leveraging ML-accelerated DFT for protein-ligand systems have yielded the following benchmark results:

Table 1: Performance Benchmarks of ML-DFT vs. Conventional Methods for Protein-Ligand Property Prediction

| Property Predicted | Method | System Size (Atoms) | Speed-up Factor | Mean Absolute Error (vs. Full DFT) | Key Reference |

|---|---|---|---|---|---|

| Electrostatic Potential (ESP) | MALA (NN-based) | ~5,000 (Ligand + Binding Site) | ~1,000x | < 0.05 eV/Å | Schütt et al., 2024 |

| Charge Density (Δρ) | SchNet | ~1,200 (Full Protein) | ~500x | < 0.01 e/ų | Gastegger et al., 2023 |

| Binding Energy Contribution | Orbital Graph Network | ~800 (Active Site) | N/A (Property Direct) | ~1.5 kcal/mol | Liu et al., 2023 |

| Frontier Orbital Energies (HOMO/LUMO) | Kernel Ridge Regression (on local descriptors) | ~300 (Ligand + Residues) | ~10,000x | < 0.1 eV | Wilkins et al., 2024 |

Experimental Protocol: ML-DFT Workflow for Binding Site Electronic Structure

Protocol 1: Generating a Machine-Learned Electron Density for a Protein-Ligand Complex

Objective: To predict the quantum-mechanical electron density (ρ) and derived electrostatic potential of a protein-ligand binding pocket using a pre-trained MALA model.

Materials & Workflow:

- Input Structure Preparation: Obtain a 3D structure of the protein-ligand complex from PDB or MD simulation snapshot.

- Active Site Partitioning: Define a region of interest (ROI) encompassing the ligand and all residues within a 6-8 Å radius.

- Grid Generation: Superimpose a real-space volumetric grid (e.g., 0.2 Å spacing) over the ROI. Each grid point is a data sample.

- Descriptor Calculation: For each grid point, compute local atomic environment descriptors (e.g., SOAP, ACSF) based on the atomic coordinates of the system.

- ML Inference: Feed the descriptors for all grid points into the pre-trained MALA model (a neural network trained on DFT electron densities of diverse molecular fragments).

- Density Prediction: The model outputs the electron density value (ρ) at each grid point, reconstructing the full 3D field.

- Post-Processing: Apply Poisson's equation to the predicted ρ to compute the electrostatic potential (ESP). Analyze maps for key interactions.
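The partitioning and grid-generation steps of this workflow can be sketched as follows. Coordinates are plain (x, y, z) tuples in Å, the `padding` around the region of interest is an assumption made for this example, and both function names are hypothetical.

```python
import math

def select_roi(ligand_atoms, protein_atoms, radius=7.0):
    """Ligand atoms plus protein atoms within `radius` Å of any ligand atom."""
    nearby = [
        p for p in protein_atoms
        if any(math.dist(p, lig) <= radius for lig in ligand_atoms)
    ]
    return list(ligand_atoms) + nearby

def make_grid(atoms, spacing=0.2, padding=2.0):
    """Regular grid covering the padded bounding box of `atoms`."""
    axes = []
    for dim in range(3):
        low = min(a[dim] for a in atoms) - padding
        high = max(a[dim] for a in atoms) + padding
        n_points = int(math.floor((high - low) / spacing)) + 1
        axes.append([low + k * spacing for k in range(n_points)])
    return [(x, y, z) for x in axes[0] for y in axes[1] for z in axes[2]]

# Toy system: one ligand atom, one nearby and one distant protein atom.
ligand = [(0.0, 0.0, 0.0)]
protein = [(3.0, 0.0, 0.0), (20.0, 0.0, 0.0)]
roi = select_roi(ligand, protein)
grid = make_grid(roi, spacing=1.0, padding=1.0)  # coarse spacing for the demo
```

At the 0.2 Å spacing mentioned above, a 20 Å cube already holds 101³ (about one million) grid points, so descriptor calculation and inference are typically processed in batches.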

ML-DFT Workflow for Protein-Ligand Electronic Structure

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for ML-Accelerated Electronic Property Prediction

| Tool / Reagent | Category | Function & Relevance |

|---|---|---|

| MALA Framework | Software Library | Core framework for training ML models on DFT data and performing scalable inference for electron density. |

| SchNetPack / DeePMD-kit | Software Library | Alternative deep learning libraries for modeling quantum interactions in molecular systems. |

| VASP / Quantum ESPRESSO | DFT Code | High-accuracy ab initio codes used to generate the training data for the ML models. |

| SOAP / ACE | Descriptor | Atomic neighborhood descriptors that provide a rotationally invariant input for the ML model. |

| PDB Database | Data Repository | Source for experimentally resolved protein-ligand complex structures as starting geometries. |

| QM9 / ANI-1 / ISO17 | Benchmark Datasets | Curated datasets of small molecule quantum properties for initial model pretraining. |

| Modeller / PyMOL | Visualization Software | For preparing molecular structures and visualizing predicted 3D electronic property fields. |

Experimental Protocol: Active Learning for Binding Affinity Prediction

Protocol 2: Active Learning Loop to Refine Predictions for a Specific Protein Target

Objective: To iteratively improve the prediction of charge transfer contributions to binding affinity for a specific protein target using an active learning strategy.

Methodology:

- Initialization: Start with a base MALA model pre-trained on a general dataset (e.g., ISO17).

- Inference & Uncertainty Quantification: Use the model to predict charge transfer for a large library of candidate ligands. Also predict the model's uncertainty (e.g., via ensemble variance) for each prediction.

- Candidate Selection: Rank ligands by highest prediction uncertainty (Exploration) and/or predicted favorable charge transfer (Exploitation).

- DFT Calculation on Cluster: Select the top 50-100 candidates and perform targeted, high-accuracy DFT single-point calculations only on the binding site QM/MM cluster.

- Dataset Augmentation: Add these new (geometry, DFT property) pairs to the training dataset.

- Model Retraining: Fine-tune or retrain the MALA model on the augmented, target-specific dataset.

- Convergence Check: Repeat steps 2-6 until prediction variance across the ligand library falls below a predefined threshold.
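The candidate-selection and convergence steps can be sketched as below. The example assumes that a larger predicted value means more favorable charge transfer and combines exploitation and exploration in an upper-confidence-bound style score; the weight `beta` and all names are illustrative choices, not part of the protocol.

```python
def acquisition_score(predicted_value, uncertainty, beta=1.0):
    """Exploitation term plus beta times the exploration term."""
    return predicted_value + beta * uncertainty

def rank_ligands(predictions, beta=1.0, top_n=50):
    """predictions: dict of ligand id -> (predicted value, uncertainty)."""
    ranked = sorted(
        predictions,
        key=lambda lig: acquisition_score(*predictions[lig], beta=beta),
        reverse=True,
    )
    return ranked[:top_n]

def has_converged(predictions, threshold):
    """True when every ligand's uncertainty is below the threshold."""
    return all(unc < threshold for _, unc in predictions.values())

library = {
    "lig1": (0.8, 0.05),  # favorable and well understood
    "lig2": (0.2, 0.90),  # poorly understood
    "lig3": (0.5, 0.10),
}
explore_first = rank_ligands(library, beta=1.0)
exploit_only = rank_ligands(library, beta=0.0)
```

Setting `beta` to zero ranks purely by predicted value, while larger values push poorly understood ligands toward the DFT queue.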

Active Learning Loop for Target-Specific Model Refinement

The broader thesis of this research posits that Materials Learning Algorithms (MALA) for Density Functional Theory (DFT) acceleration are not merely tools for electronic structure calculation but pivotal enablers for end-to-end computational discovery pipelines in drug and materials development. By replacing the DFT bottleneck with ML-generated interatomic potentials (ML-IAPs) or direct property predictions, MALA unlocks the temporal scale of molecular dynamics (MD) necessary to sample biologically and physically relevant configurations. These configurations then serve as high-quality inputs for docking studies, creating a closed-loop pipeline from fundamental electronic structure to application-relevant binding affinity prediction.

Application Notes: The Integrated MALA-MD-Docking Pipeline

Conceptual Workflow & Value Proposition

The integration transforms a traditionally sequential, high-latency process into a dynamic, high-throughput pipeline. The key advancement is using MALA to generate an ML-IAP, specifically a moment tensor potential (MTP) or neural network potential (NNP), trained on a targeted DFT dataset. This potential drives nanosecond to microsecond-scale MD simulations at near-DFT accuracy, capturing protein flexibility, solvent effects, and rare events. Subsequent docking (ensemble docking, pharmacophore modeling) into representative MD snapshots yields more robust and predictive binding mode analyses compared to static crystal structures.

Quantitative Performance Benchmarks

Recent benchmarks illustrate the performance gains enabled by this integration.

Table 1: Performance Comparison of Traditional vs. MALA-Accelerated Workflows

| Metric | Traditional DFT → MD | MALA-Accelerated Pipeline | Improvement Factor |

|---|---|---|---|

| Time per Energy/Force Evaluation | ~10-100 CPU-hrs (DFT) | ~1-10 ms (ML-IAP) | >10⁴ - 10⁷ |

| Achievable MD Timescale | Picoseconds | Nanoseconds to Microseconds | 10³ - 10⁶ |

| Conformational Ensemble Size (for Docking) | Single structure or <10 frames | 100s-1000s of clustered frames | 10 - 100 |

| Relative Error in Forces (RMSE) | N/A (Reference) | 20-40 meV/Å | < 3% |

| Total Pipeline Wall Time | Weeks to Months | Days to Weeks | ~5-10x Acceleration |

Table 2: Impact on Docking Outcome Quality (Case Study: Kinase Inhibitor)

| Docking Approach | Enrichment Factor (EF₁%) | RMSD of Top Pose vs. Experimental | Key Limitation Addressed |

|---|---|---|---|

| Static Crystal Structure | 8.5 | 2.8 Å | Misses cryptic pockets |

| Ensemble Docking (MALA-MD Frames) | 22.3 | 1.4 Å | Captures induced fit & flexibility |

| Consensus from Ensemble | 25.1 | 1.2 Å | Improves pose prediction robustness |

Detailed Experimental Protocols

Protocol A: Generating a MALA-Derived ML-IAP for a Protein-Ligand System

Objective: Train a neural network potential (NNP) for a solvated protein-ligand complex.

Reagents & Software: See "Scientist's Toolkit" (Section 5).

Steps:

- DFT Reference Data Generation:

- Use VASP or Quantum ESPRESSO to perform DFT calculations on a representative set of configurations.

- Sampling Strategy: Start from an equilibrated MD snapshot. Generate training data via:

- Active Learning: Run short MD with a preliminary ML-IAP; select configurations where model uncertainty (e.g., predicted variance) is high for DFT calculation.

- Perturbation: Apply random atomic displacements (±0.05 Å) and box volume changes.

- Target System: Include explicit solvent shell (≥ 3.0 Å) or use implicit solvation model. Aim for 500-2000 diverse configurations.

- MALA Model Training (using the MALA package):

- Descriptor Calculation: Compute bispectrum or SOAP descriptors for all atomic environments (`mala.descriptors`).

- Network Architecture: Configure a fully-connected network (e.g., 3 layers, 64 neurons/layer) using `mala.models`. Use `ReLU` activations.

- Training: Split data 80/10/10 (train/validation/test). Train using L2 loss on energies and forces (`mala.datahandling`). Apply loss weighting (e.g., 0.1 for energy, 1.0 for forces).

- Validation: Monitor validation-set RMSE and use early stopping to prevent overfitting; report the final error on the held-out test set. Target force RMSE < 0.04 eV/Å.

- Potential Deployment:

- Export the trained model to LAMMPS or ASE-compatible format using `mala.mdinterfaces`.
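The weighted energy and force loss used in the training step can be written out explicitly. This is a generic sketch rather than the MALA package's own loss: the per-atom normalization of the energy error is an assumption made here, and the default weights follow the example values given above (0.1 for energy, 1.0 for forces).

```python
def energy_force_loss(pred_energy, ref_energy, pred_forces, ref_forces,
                      n_atoms, w_energy=0.1, w_force=1.0):
    """Weighted sum of squared per-atom energy error and mean squared force error.

    Energies are in eV; forces are lists of (fx, fy, fz) tuples in eV/Å.
    """
    energy_term = ((pred_energy - ref_energy) / n_atoms) ** 2
    squared = [
        (p - r) ** 2
        for fp, fr in zip(pred_forces, ref_forces)
        for p, r in zip(fp, fr)
    ]
    force_term = sum(squared) / len(squared)
    return w_energy * energy_term + w_force * force_term

# Toy two-atom example with a 0.2 eV energy error and one 0.1 eV/Å force error.
loss = energy_force_loss(
    pred_energy=-10.2,
    ref_energy=-10.0,
    pred_forces=[(0.1, 0.0, 0.0), (0.0, 0.0, 0.0)],
    ref_forces=[(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
    n_atoms=2,
)
```

Weighting forces more heavily is common because each configuration contributes one energy but three force components per atom, and MD stability depends mainly on the forces.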

Protocol B: Production MD Simulation using the MALA-NNP

Objective: Perform microsecond-scale MD to generate a conformational ensemble.

Steps:

- System Preparation: Embed the protein-ligand complex in a TIP3P water box. Add ions to neutralize charge. Use the ff14SB force field for protein atoms outside the region modeled by the NNP; that region typically comprises the ligand and binding site residues.

- Hybrid QM/ML-MM Setup: Define the active region (ligand + binding pocket residues) to be modeled by the MALA-NNP. Long-range electrostatics are handled by particle mesh Ewald (PME).

- Equilibration: Run 100 ps of NVT followed by 100 ps of NPT simulation using conventional MD to relax solvent.

- Production Run: Switch the active region to the MALA-NNP. Run NPT simulation for 100 ns – 1 µs, saving snapshots every 10 ps. Use a 1-2 fs integration timestep.

Protocol C: Ensemble Docking into MALA-MD Derived Frames

Objective: Perform virtual screening against a dynamic binding pocket.

Steps:

- Trajectory Clustering:

- Align the MD trajectory to the protein backbone.

- Cluster binding site residues (5 Å around ligand) using RMSD-based clustering (e.g., DBSCAN). Select the central structure from the top 10-20 most populated clusters as docking representatives.

- Receptor Preparation:

- For each representative snapshot, use `pdb4amber` and `reduce` to add missing hydrogens and assign protonation states.

- Generate docking grids (e.g., using AutoDockTools) centered on the binding site. Consider merging grids for flexibility.

- Ligand Preparation:

- Prepare a library of ligand structures in 3D format (e.g., SDF). Generate multiple conformers per ligand.

- Docking Execution:

- Perform docking (e.g., using Vina or GNINA) for each ligand into each representative receptor snapshot.

- Scoring & Consensus: Extract the best pose per ligand per snapshot. Apply consensus scoring across the ensemble (e.g., average rank or Boltzmann-weighted score) to predict final binding affinity and pose.
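The two consensus schemes named in the last step can be sketched as follows. The example assumes docking scores in kcal/mol where lower is better, and it defines the Boltzmann-weighted score as a Boltzmann-weighted mean over snapshots at roughly 298 K; other definitions exist, and the function names are illustrative.

```python
import math

KT_KCAL_PER_MOL = 0.593  # approximate k_B * T at 298 K

def boltzmann_weighted_score(scores, kt=KT_KCAL_PER_MOL):
    """Boltzmann-weighted mean of docking scores (lower = better)."""
    best = min(scores)
    # Shift by the best score so the exponentials stay well-conditioned.
    weights = [math.exp(-(s - best) / kt) for s in scores]
    return sum(w * s for w, s in zip(weights, scores)) / sum(weights)

def average_rank(score_table):
    """score_table: dict of ligand -> list of scores, one per snapshot."""
    ligands = list(score_table)
    n_snapshots = len(next(iter(score_table.values())))
    totals = {lig: 0 for lig in ligands}
    for snap in range(n_snapshots):
        ordered = sorted(ligands, key=lambda lig: score_table[lig][snap])
        for rank, lig in enumerate(ordered, start=1):
            totals[lig] += rank
    return {lig: totals[lig] / n_snapshots for lig in ligands}

# Best score per ligand in each of three representative snapshots.
table = {
    "ligA": [-9.0, -8.5, -9.2],
    "ligB": [-7.0, -9.5, -7.2],
    "ligC": [-6.0, -6.5, -6.1],
}
ranks = average_rank(table)
consensus = {lig: boltzmann_weighted_score(s) for lig, s in table.items()}
```

Average rank is insensitive to the score scale of individual snapshots, whereas the Boltzmann-weighted mean emphasizes the snapshots in which a ligand binds best.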

Visualization of Workflows & Relationships

Title: Integrated MALA-MD-Docking Pipeline Workflow

Title: Hybrid QM/ML-MM Simulation Setup

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software Tools and Their Function in the Pipeline

| Tool/Category | Specific Examples | Primary Function in Pipeline |

|---|---|---|

| DFT & ML-IAP Engine | VASP, Quantum ESPRESSO, CP2K | Generate reference electronic structure data for training. |

| Materials Learning Suite | MALA (core), AMPTorch, DeePMD-kit | Train, validate, and deploy machine-learned interatomic potentials. |

| Molecular Dynamics Engine | LAMMPS, OpenMM, AMBER, GROMACS | Perform large-scale MD simulations using ML-IAPs (via interfaces). |

| System Preparation | PDB2PQR, tleap, packmol | Prepare solvated, neutralized simulation boxes. |

| Trajectory Analysis | MDAnalysis, cpptraj, VMD | Analyze MD trajectories: RMSD, clustering, pocket analysis. |

| Docking Suite | AutoDock Vina, GNINA, Glide, FRED | Perform molecular docking into static or ensemble receptor structures. |

| Scripting & Workflow | Python, Jupyter, Snakemake, Nextflow | Orchestrate and automate the entire pipeline from data generation to analysis. |

Table 4: Critical Computational Resources & Data

| Resource | Specification / Source | Purpose |

|---|---|---|

| Training Dataset | ~1000+ configs, energies/forces | Sufficient, diverse data for robust MALA model training. |

| High-Performance Compute (HPC) | GPU nodes (NVIDIA A100/V100), High CPU core count | Accelerate DFT, ML training, and MD production runs. |

| Reference Crystal Structures | RCSB Protein Data Bank (PDB) | Initial system coordinates and validation reference. |

| Ligand Library | ZINC, ChEMBL, Enamine REAL | Compounds for virtual screening in docking studies. |

Solving Common MALA Challenges: Accuracy, Transferability, and Computational Efficiency

Diagnosing and Fixing Poor Model Generalization (Overfitting/Underfitting)

In the context of developing Materials Learning Algorithms (MALA) for Density Functional Theory (DFT) acceleration, managing model generalization is critical. Overfitting occurs when a model fits the training data, including its noise, so closely that it fails on new data. Underfitting occurs when a model is too simple to capture the underlying pattern. This document provides application notes and protocols for diagnosing and remedying these issues within materials science and drug development research.

Quantitative Diagnostics & Metrics

Key quantitative metrics for diagnosing generalization issues are summarized below.

Table 1: Key Metrics for Diagnosing Generalization Issues

| Metric | Formula / Description | Overfitting Indicator | Underfitting Indicator |

|---|---|---|---|

| Training Loss | Model error on training set (e.g., MAE, MSE). | Very low, near zero. | High, plateaus early. |

| Validation Loss | Model error on held-out validation set. | Significantly higher than training loss. | High and similar to training loss. |

| Generalization Gap | Validation Loss - Training Loss. | Large positive gap. | Very small or zero gap. |

| Learning Curves | Plot of loss vs. training iterations/epochs. | Training curve drops, validation curve rises/plateaus. | Both curves plateau at a high value. |

| R² Score | Coefficient of determination. | High on train, low on validation. | Low on both train and validation. |

Experimental Protocols for Diagnosis

Protocol 3.1: Learning Curve Analysis

Objective: To diagnose overfitting/underfitting by monitoring loss progression.

- Data Splitting: Split the dataset of DFT calculations (e.g., energies, forces) into Training (70%), Validation (15%), and Test (15%) sets.

- Model Training: Train the MALA model (e.g., a graph neural network potential) for a fixed number of epochs.

- Metric Logging: After each epoch, calculate the loss (e.g., Mean Absolute Error) on both the training and validation sets.

- Visualization: Plot training and validation loss against epoch number.

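- Diagnosis: A gap that widens as training proceeds (validation loss flat or rising while training loss keeps falling) indicates overfitting. Training and validation losses that both plateau at a high value indicate underfitting.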
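The diagnosis step can be sketched as a small helper that works on the logged per-epoch losses. This is a minimal illustration, not part of any MALA API: the function name `diagnose` and the thresholds `high_loss`, `gap_ratio`, and `window` are assumptions that would need tuning for a given loss scale.

```python
def diagnose(train_loss, val_loss, high_loss=0.1, gap_ratio=1.5, window=5):
    """Classify a training run from per-epoch loss histories.

    All thresholds are illustrative assumptions, not recommended values.
    Returns (label, generalization_gap).
    """
    n = min(window, len(train_loss), len(val_loss))
    train_end = sum(train_loss[-n:]) / n  # mean training loss over the last n epochs
    val_end = sum(val_loss[-n:]) / n      # mean validation loss over the last n epochs
    gap = val_end - train_end             # generalization gap

    # Both curves stuck at a high value: the model cannot fit the data.
    if train_end > high_loss and val_end > high_loss:
        return "underfitting", gap
    # Validation loss far above training loss: the model does not generalize.
    if val_end > gap_ratio * train_end:
        return "overfitting", gap
    return "ok", gap


# Toy histories: training loss keeps falling while validation loss turns upward.
train = [1.0, 0.5, 0.2, 0.1, 0.05, 0.02, 0.01, 0.005]
val = [1.0, 0.6, 0.4, 0.35, 0.4, 0.45, 0.5, 0.55]
label, gap = diagnose(train, val)
```

A rule of this kind is only a first filter; the plotted curves remain the primary evidence.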

Protocol 3.2: k-Fold Cross-Validation

Objective: To obtain a robust estimate of model performance and generalization error.

- Partitioning: Randomly shuffle the dataset and partition it into k (e.g., 5 or 10) equal-sized folds.

- Iterative Training: For each fold i:

- Use fold i as the validation set.

- Use the remaining k-1 folds as the training set.

- Train the model and evaluate on the validation fold.

- Aggregation: Calculate the mean and standard deviation of the validation score across all k folds. A high mean validation error together with a similarly high training error suggests underfitting. A low mean training error with a high or strongly fold-dependent validation error suggests overfitting.
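The partition, train, and aggregate steps above can be sketched in plain Python. The `fit` and `predict` callables are placeholders for a real training routine; the 1D least-squares line used here is only a hypothetical stand-in so that the example runs without any ML framework.

```python
import random
import statistics


def k_fold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and split them into k nearly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def cross_validate(x, y, fit, predict, k=5, seed=0):
    """Return (mean, std) of the validation MAE over k folds."""
    folds = k_fold_indices(len(x), k, seed)
    scores = []
    for i, val_idx in enumerate(folds):
        # Every fold except fold i forms the training set.
        train_idx = [j for m, fold in enumerate(folds) if m != i for j in fold]
        model = fit([x[j] for j in train_idx], [y[j] for j in train_idx])
        errors = [abs(predict(model, x[j]) - y[j]) for j in val_idx]
        scores.append(sum(errors) / len(errors))
    return statistics.mean(scores), statistics.stdev(scores)


# Hypothetical stand-in model: least-squares line y = a * x + b.
def fit_line(xs, ys):
    mx, my = statistics.mean(xs), statistics.mean(ys)
    a = sum((u - mx) * (v - my) for u, v in zip(xs, ys)) / sum((u - mx) ** 2 for u in xs)
    return a, my - a * mx


def predict_line(model, x):
    a, b = model
    return a * x + b


xs = [0.1 * i for i in range(50)]
ys = [2.0 * x + 1.0 for x in xs]
mean_mae, std_mae = cross_validate(xs, ys, fit_line, predict_line, k=5)
```

For atomistic data, snapshots from the same trajectory are strongly correlated, so splitting by trajectory or by molecule rather than by individual frame may give a more honest error estimate.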

Remediation Strategies & Protocols

Protocol 4.1: Combatting Overfitting

A. Data Augmentation & Expansion

- Principle: Increase the diversity and size of the training data.

- MALA/DFT Application: Generate additional training data via:

- Perturbing atomic positions with small random displacements.

- Using active learning queries to run new DFT calculations on uncertain configurations predicted by the model.

- Applying symmetry operations (rotation, translation) to existing structures.
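Two of the augmentation routes listed above, random displacement and rotation, can be sketched as follows. The helper names and the water-like coordinates are illustrative assumptions. Note that a displaced structure is a new configuration and needs its own DFT label, whereas a rotated structure keeps its energy and only requires the force vectors to be rotated in the same way.

```python
import math
import random


def perturb(positions, sigma=0.02, seed=0):
    """Add Gaussian noise (std sigma, in the same length unit) to every coordinate."""
    rng = random.Random(seed)
    return [tuple(c + rng.gauss(0.0, sigma) for c in atom) for atom in positions]


def rotate_z(vectors, angle):
    """Rotate 3D vectors (positions or forces) about the z axis by angle in radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in vectors]


# Illustrative three-atom geometry (approximate, in angstrom).
water = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
displaced = perturb(water, sigma=0.02)   # must be relabeled with DFT
rotated = rotate_z(water, math.pi / 3)   # same energy, forces rotate with it
```

If the model is built on rotation- and translation-invariant descriptors, rotated copies add no new information; this route mainly helps architectures that do not enforce the symmetry themselves.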

B. Model Regularization

- L1/L2 Regularization: Add a penalty term to the loss function proportional to the magnitude of weights.

- Implementation: Set the weight_decay parameter in the optimizer (e.g., AdamW) to apply an L2-style penalty; an L1 penalty has to be added to the loss function explicitly.

- Dropout: Randomly omit a fraction (p=0.2-0.5) of neuron activations during training.

- Protocol: Insert Dropout layers between dense layers in neural network potentials.

- Early Stopping:

- Monitor validation loss during training.

- Stop training when validation loss fails to improve for a pre-defined number of epochs (patience, e.g., 20).

- Restore model weights from the epoch with the best validation loss.
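The early stopping steps can be sketched as a small framework-independent helper. The class name `EarlyStopping` and its attributes are assumptions made for illustration; `state` stands in for whatever object holds the model weights.

```python
import copy


class EarlyStopping:
    """Track validation loss and keep the best model state seen so far."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # minimum decrease that counts as improvement
        self.best_loss = float("inf")
        self.best_state = None
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss, state):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_state = copy.deepcopy(state)  # snapshot of the best weights
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Toy run: validation loss improves, then degrades.
val_history = [0.9, 0.5, 0.3, 0.25, 0.27, 0.30, 0.33, 0.40]
stopper = EarlyStopping(patience=3)
for epoch, loss in enumerate(val_history):
    state = {"epoch": epoch}  # stand-in for model weights
    if stopper.step(epoch, loss, state):
        break
```

In a PyTorch training loop, `state` would typically be `model.state_dict()`, and the best weights would be restored afterwards with `model.load_state_dict(stopper.best_state)`.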

Protocol 4.2: Combatting Underfitting

A. Increase Model Complexity

- Principle: Use a model with greater capacity to learn complex patterns in DFT data.

- Protocol:

- For neural network potentials: Increase the number of hidden layers or neurons per layer.

- Switch to a more expressive architecture (e.g., from a simple multilayer perceptron to a message-passing neural network).

B. Feature Engineering

- Principle: Provide more informative input descriptors (features).

- Protocol: For atomistic systems, replace simple atomic number inputs with richer descriptors such as:

- Smooth Overlap of Atomic Positions (SOAP) descriptors.

- Atomic orbital field matrix (AOFM) representations.

- User-defined invariant features from local atomic environments.

C. Hyperparameter Optimization

- Objective: Systematically find optimal training parameters.

- Protocol (Grid/Random Search):

- Define a search space for key hyperparameters (learning rate, network depth/width, batch size).

- Train and validate models for different hyperparameter combinations.

- Select the configuration yielding the lowest average validation error (using k-fold CV).
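A random search over such a space can be sketched in a few lines. The search space values and `toy_objective` are placeholders: in practice the objective would train the model and return the mean k-fold validation error for the given configuration.

```python
import random


def random_search(objective, space, n_trials=20, seed=0):
    """Sample configurations from space and return the best (config, score)."""
    rng = random.Random(seed)
    best_config, best_score = None, float("inf")
    for _ in range(n_trials):
        config = {name: rng.choice(values) for name, values in space.items()}
        score = objective(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


# Hypothetical search space; the candidate values are illustrative only.
space = {
    "learning_rate": [1e-4, 3e-4, 1e-3, 3e-3],
    "hidden_layers": [2, 3, 4],
    "batch_size": [16, 32, 64],
}


def toy_objective(config):
    # Placeholder for "train the model and return the mean validation error".
    return abs(config["learning_rate"] - 1e-3) * 100 + abs(config["hidden_layers"] - 3)


best_config, best_score = random_search(toy_objective, space, n_trials=50)
```

Libraries such as Optuna or Ray Tune replace the uniform sampling with adaptive strategies and add pruning of unpromising trials, but the overall loop has the same shape.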

Visualizations

Diagram 1: Learning Curve Analysis Workflow

Diagram 2: Generalization Problem Decision Tree

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools for MALA/DFT Generalization Research

| Item | Function/Brief Explanation |

|---|---|

| DFT Codes (VASP, Quantum ESPRESSO) | Generate high-fidelity training and test data (energies, forces, stresses). |

| MALA Framework / AMPTorch | Provides modular pipelines for building and training ML interatomic potentials. |

| Active Learning Loop Manager | Software (e.g., FLARE, AL4DT) to select new DFT calculations based on model uncertainty. |

| Hyperparameter Optimization Library (Optuna, Ray Tune) | Automates the search for optimal model and training parameters. |

| Descriptor Library (DScribe, quippy) | Computes invariant atomic environment features (e.g., SOAP, ACSF) for model input. |

| Regularization Modules (Dropout, L2 in PyTorch/TensorFlow) | Built-in functions to penalize model complexity and reduce overfitting. |

| k-Fold Cross-Validation Splitters (scikit-learn) | Tools to create robust dataset splits for performance evaluation. |

| Learning Curve Plotting Scripts | Custom scripts to visualize training/validation loss dynamics for diagnosis. |

Strategies for Active Learning and Optimal Training Set Expansion

Application Notes: Active Learning in MALA for Molecular Systems

Active Learning (AL) is a semi-supervised machine learning paradigm crucial for constructing accurate and efficient Materials Learning Algorithms (MALA) potentials. It iteratively selects the most informative data points from a large, unlabeled pool of candidate atomic configurations and has them labeled by computationally expensive Density Functional Theory (DFT) calculations. This strategy maximizes model performance while minimizing the number of costly DFT queries.

Core Quantitative Metrics for AL Cycle Performance

The efficacy of an AL strategy is evaluated using the following metrics, typically tracked per iteration.

Table 1: Key Performance Metrics for Active Learning Cycles

| Metric | Description | Target/Optimal Value |

|---|---|---|

| Query Batch Size | Number of structures selected for DFT labeling per AL cycle. | 5-50 (system-dependent) |

| Model Uncertainty Threshold | Upper bound for uncertainty below which configurations are considered "known". | ~10 meV/atom for energy |

| DFT Computation Time per Image | Average wall time for a single-point DFT calculation on a candidate configuration. | System size dependent; primary cost driver. |

| RMSE Reduction per Cycle | Decrease in root-mean-square error (vs. held-out test set) per DFT query. | Steep initial reduction, asymptoting near zero. |

| Pool Sampling Coverage | Percentage of the candidate pool processed by the query strategy. | 100% over full AL run. |

Query Strategies for Molecular Conformation Space

For drug-like molecules and MALA materials, the following strategies are employed to query the conformational space.

Table 2: Comparison of Active Learning Query Strategies

| Strategy | Core Principle | Advantages | Limitations | Best For |

|---|---|---|---|---|

| Uncertainty Sampling | Selects configurations where the model's predictive variance is highest. | Simple, intuitive, fast selection. | Can select outliers; ignores diversity. | Initial exploration phases. |

| Query-by-Committee (QBC) | Uses an ensemble of models; selects points with highest disagreement. | Robust, reduces model bias. | Computationally expensive (multiple models). | Refining well-sampled regions. |

| Density-Weighted | Combines uncertainty with a diversity measure (e.g., inverse density in descriptor space). | Balances exploration & exploitation, avoids redundancy. | Requires pairwise distance calculations. | Comprehensive exploration of complex spaces. |

| Expected Model Change | Selects points that would cause the greatest change to the current model. | Maximizes information gain per query. | Extremely computationally expensive to simulate. | Small, targeted batch sizes. |
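As an illustration of the Query-by-Committee row, disagreement can be measured as the standard deviation of the committee's energy predictions for each configuration. The function names and the numerical values below are hypothetical; a real committee would consist of independently trained models.

```python
import statistics


def committee_uncertainty(predictions):
    """predictions[m][i] is model m's energy for configuration i.

    Returns the per-configuration standard deviation across the committee.
    """
    return [statistics.pstdev(per_config) for per_config in zip(*predictions)]


def select_most_uncertain(predictions, n_query):
    """Indices of the n_query configurations with the largest disagreement."""
    sigma = committee_uncertainty(predictions)
    ranked = sorted(range(len(sigma)), key=lambda i: sigma[i], reverse=True)
    return ranked[:n_query]


# Three hypothetical committee members, four candidate configurations (eV/atom).
preds = [
    [-3.10, -2.95, -3.40, -3.00],
    [-3.11, -2.80, -3.44, -3.00],
    [-3.09, -3.10, -3.36, -3.01],
]
picked = select_most_uncertain(preds, n_query=2)
```

Plain uncertainty sampling follows the same ranking step, with the committee spread replaced by whatever variance estimate the single model provides.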

Protocols for Optimal Training Set Expansion

Protocol: Iterative Active Learning Workflow for Molecular Potential Development

Objective: To develop a robust MALA potential for a drug candidate's free energy surface exploration with minimal DFT cost.

Materials: High-performance computing cluster, DFT software (VASP, Quantum ESPRESSO), MALA framework, initial small training set (~100 DFT-labeled configurations), large pool of unlabeled MD snapshots (>10,000).

Procedure:

- Initialization: Train a baseline MALA model (e.g., SchNet, NequIP) on the initial training set.

- Unlabeled Pool Inference: Use the trained model to predict energies and, critically, predictive uncertainties (e.g., from Monte Carlo dropout, an ensemble, or a model with built-in variance outputs) for all configurations in the unlabeled pool.

- Query Selection: Apply a density-weighted query strategy:

a. For each configuration i in the pool, calculate its Euclidean distance in the latent descriptor space to all other configurations.

b. Compute a diversity score D_i (e.g., the mean distance to the k nearest neighbors, so that configurations in sparsely sampled regions score higher).

c. Combine the normalized uncertainty (U_i) and diversity (D_i) scores: Score_i = α * U_i + (1-α) * D_i (α typically 0.5-0.7).

d. Select the top N configurations (batch size) with the highest combined scores.

- DFT Labeling: Perform single-point DFT calculations on the selected N configurations to obtain ground-truth energies and forces.

- Set Expansion & Retraining: Add the newly labeled data to the training set. Retrain the MALA model from scratch or using transfer learning.

- Convergence Check: Evaluate the model on a fixed, held-out test set. Repeat the cycle from the pool inference step until the test RMSE plateaus or falls below a predetermined threshold (e.g., 5 meV/atom for energy, 50 meV/Å for forces).
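The query selection step of this workflow can be sketched as follows. Several choices here are assumptions of the sketch rather than prescriptions: the diversity term is taken as the mean distance to the k nearest neighbors (so isolated configurations score higher), both terms are min-max normalized, and the toy descriptors are two-dimensional points.

```python
import math


def min_max(values):
    """Scale values to [0, 1]; a constant input maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def knn_mean_distance(descriptors, k):
    """Mean Euclidean distance from each point to its k nearest neighbors."""
    scores = []
    for i, a in enumerate(descriptors):
        dists = sorted(math.dist(a, b) for j, b in enumerate(descriptors) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores


def select_batch(descriptors, uncertainties, n_query, alpha=0.6, k=2):
    """Rank by alpha * U_i + (1 - alpha) * D_i and return the top n_query indices."""
    u = min_max(uncertainties)
    d = min_max(knn_mean_distance(descriptors, k))
    score = [alpha * ui + (1 - alpha) * di for ui, di in zip(u, d)]
    return sorted(range(len(score)), key=lambda i: score[i], reverse=True)[:n_query]


# Toy pool: a tight cluster plus one isolated, highly uncertain configuration.
pool = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (3.0, 3.0)]
sigma = [0.002, 0.004, 0.003, 0.010, 0.012]
batch = select_batch(pool, sigma, n_query=2)
```

The brute-force neighbor search costs O(N²) distance evaluations, which becomes noticeable for pools beyond roughly ten thousand configurations; a spatial index or an approximate nearest-neighbor search would be a natural replacement there.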

Protocol: Seed Training Set Curation via Clustering

Objective: To construct a diverse, non-redundant initial training set from a vast conformational space before AL begins.

Procedure:

- Conformational Sampling: Run classical molecular dynamics (MD) or enhanced sampling (e.g., metadynamics) of the target system to generate a broad ensemble of atomic configurations.

- Descriptor Calculation: Convert each snapshot into an invariant descriptor (e.g., Smooth Overlap of Atomic Positions, SOAP).

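- Dimensionality Reduction: Apply a dimensionality reduction method (e.g., PCA, UMAP, or t-SNE) to reduce the descriptors to 2-5 components for efficient clustering. Note that t-SNE does not preserve global distances, so clusters found in a t-SNE embedding should be interpreted with care.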

- Clustering: Perform k-means or hierarchical clustering on the reduced data.

- Strategic Sampling: Randomly select m configurations from each cluster to ensure geometric diversity. This stratified sample forms the seed training set for DFT labeling and the initial AL model.
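The final sampling step can be sketched independently of the clustering method, given one cluster label per snapshot. The function name and the example labels are hypothetical; the labels would come from the k-means or hierarchical clustering of the previous step.

```python
import random
from collections import defaultdict


def sample_per_cluster(labels, m, seed=0):
    """Pick up to m random member indices from every cluster label."""
    rng = random.Random(seed)
    members = defaultdict(list)
    for index, label in enumerate(labels):
        members[label].append(index)
    seed_set = []
    for label in sorted(members):
        # Small clusters contribute all of their members.
        chosen = rng.sample(members[label], min(m, len(members[label])))
        seed_set.extend(sorted(chosen))
    return seed_set


# Hypothetical cluster labels for ten MD snapshots (e.g., from k-means).
labels = [0, 0, 1, 2, 1, 0, 2, 2, 1, 0]
seed_indices = sample_per_cluster(labels, m=2)
```

Taking the same number of configurations from each cluster deliberately over-represents rare conformations relative to their population in the MD ensemble, which is the point of a diversity-oriented seed set.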

Visualizations

Title: Active Learning Iterative Workflow for MALA

Title: Density-Weighted Query Selection Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MALA-Driven DFT Acceleration Research

| Item / Solution | Function in Research | Key Considerations |

|---|---|---|

| High-Throughput DFT Suite (VASP, QE, CP2K) | Provides "ground-truth" labels for energy and forces. | License cost, scalability, compatible pseudopotentials. |

| ML Interatomic Potential Framework (MALA, AMPTorch, DeepMD) | Implements neural network architectures (SchNet, NequIP) and AL workflows. | Ease of integration, supported descriptors, parallel inference. |