MLIP Benchmarking in Drug Discovery: A Comprehensive Guide to Protocols, Best Practices, and Validation

Abstract

This article provides a comprehensive guide to Machine Learning Interatomic Potential (MLIP) benchmarking for drug development researchers and scientists. We cover the foundational concepts of MLIPs, detailed methodological protocols for application, troubleshooting strategies for common pitfalls, and robust validation frameworks for comparative analysis. This holistic guide equips professionals to implement rigorous, reproducible, and predictive MLIP simulations in biomedical research.

What Are MLIPs? Core Concepts and When to Use Them in Drug Discovery

Defining Machine Learning Interatomic Potentials (MLIPs) vs. Traditional Force Fields

Rigorous benchmarking of interatomic potentials starts with a precise definition of the two dominant paradigms: Machine Learning Interatomic Potentials (MLIPs) and traditional force fields. The choice between them directly affects the accuracy, computational cost, and predictive reliability of molecular simulations in materials science, chemistry, and drug development.

Core Definitions and Theoretical Foundation

Traditional Force Fields

Traditional force fields are based on pre-defined analytical mathematical functions that describe the potential energy of a system as a sum of terms representing bonded and non-bonded interactions. The functional form and its parameters are derived from empirical fitting, quantum mechanical calculations, and experimental data.

General Functional Form:

E_total = E_bonded + E_non-bonded

E_bonded = E_bond_stretch + E_angle_bend + E_torsion + E_improper

E_non-bonded = E_van_der_Waals + E_electrostatic
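As a rough illustration of this additive form, the sketch below evaluates one bonded term (harmonic bond stretch) and the two non-bonded terms (12-6 Lennard-Jones and Coulomb). The function names, the tuple layout of the inputs, and the `k * (r - r0)^2` convention without a factor of 1/2 are assumptions made for this example; angle, torsion, and improper terms are omitted for brevity, and real force fields differ in their conventions.

```python
import math

# Electrostatic conversion constant in kcal*Angstrom/(mol*e^2); approximate value.
COULOMB_K = 332.0637


def bond_energy(r, r0, k):
    """Harmonic bond stretch, k * (r - r0)^2, in kcal/mol (assumed convention)."""
    return k * (r - r0) ** 2


def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones van der Waals term in kcal/mol."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb(r, qi, qj):
    """Point-charge electrostatics with fixed partial charges (units of e)."""
    return COULOMB_K * qi * qj / r


def total_energy(bonds, nonbonded):
    """E_total = E_bonded + E_non-bonded for pre-computed distances.

    bonds:     iterable of (r, r0, k)
    nonbonded: iterable of (r, epsilon, sigma, qi, qj)
    """
    e_bonded = sum(bond_energy(r, r0, k) for r, r0, k in bonds)
    e_nonbonded = sum(
        lennard_jones(r, eps, sig) + coulomb(r, qi, qj)
        for r, eps, sig, qi, qj in nonbonded
    )
    return e_bonded + e_nonbonded
```

Every term is a fixed analytical function of a few parameters, which is why evaluation is fast and why accuracy is bounded by the chosen functional form.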

Machine Learning Interatomic Potentials

MLIPs are statistical models trained on high-fidelity quantum mechanical (e.g., Density Functional Theory) data. They learn a mapping from atomic configurations (positions, chemical species) to total energy, forces, and sometimes other properties, without requiring a pre-specified functional form. The energy is typically expressed as a sum of atomic contributions, ensuring linear scaling.

General Form: E_total = Σ_i E_i, where E_i = f( {r_ij, z_j} ) is learned by a neural network, Gaussian process, or other ML model.
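The atomic decomposition can be sketched with a toy model. Here the learned function f is replaced by a fixed tanh expression with made-up weights, the descriptor is a small set of Behler-Parrinello-style radial symmetry functions, and a single chemical species is assumed (so z_j is ignored). All names and numerical values are hypothetical; the point is only to show why a sum of local atomic energies is invariant to translation and atom reordering.

```python
import math


def cutoff(r, rc):
    """Smooth cosine cutoff that decays to zero at r = rc."""
    return 0.5 * (math.cos(math.pi * r / rc) + 1.0) if r < rc else 0.0


def radial_descriptor(i, positions, etas, rc):
    """Radial symmetry functions: G_k = sum_j exp(-eta_k * r_ij^2) * fc(r_ij)."""
    feats = [0.0] * len(etas)
    for j, pj in enumerate(positions):
        if j == i:
            continue
        r = math.dist(positions[i], pj)
        fc = cutoff(r, rc)
        for k, eta in enumerate(etas):
            feats[k] += math.exp(-eta * r * r) * fc
    return feats


def atomic_energy(features, weights, bias):
    """Toy stand-in for the learned model f (not a trained network)."""
    return bias + sum(w * math.tanh(x) for w, x in zip(weights, features))


def total_energy(positions, etas=(0.5, 1.0, 2.0), rc=5.0,
                 weights=(-0.3, 0.2, -0.1), bias=-1.0):
    """E_total = sum_i E_i, each E_i depending only on atom i's neighborhood."""
    return sum(
        atomic_energy(radial_descriptor(i, positions, etas, rc), weights, bias)
        for i in range(len(positions))
    )
```

Because each E_i only sees neighbors inside the cutoff, the cost grows linearly with the number of atoms when a neighbor list is used.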

Table 1: Core Characteristics Comparison

| Feature | Traditional Force Fields | Machine Learning Interatomic Potentials (MLIPs) |

|---|---|---|

| Functional Form | Pre-defined, fixed analytical equations. | Flexible, learned from data (no explicit form). |

| Parameter Source | Fit to experimental & QM data; often transferable. | Trained exclusively on high-fidelity QM data. |

| Accuracy | Limited by functional form; typically 1-5 kcal/mol error for energy. | Can approach QM accuracy (<< 1 kcal/mol error) within training domain. |

| Computational Cost | Very low (fast evaluation). | Moderate to high (depends on model complexity), but far cheaper than QM. |

| Extrapolation | Generally poor outside parametrized regimes. | Poor; strictly interpolative within training data manifold. |

| Domain of Applicability | Broad but shallow; good for known chemistries. | Narrow but deep; excellent for specific systems covered in training. |

| Treatment of Electronic Effects | Implicit, via fixed partial charges and functional terms. | Captured implicitly if present in training data (e.g., polarization). |

| Development Workflow | Manual parameterization, iterative refinement. | Automated training pipeline, requires careful dataset generation. |

Table 2: Typical Benchmark Performance Metrics (Representative Values)

| Metric | Traditional FF (e.g., GAFF) | MLIP (e.g., NequIP, MACE) | Target (QM) |

|---|---|---|---|

| Energy RMSE (meV/atom) | 20 - 100 | 1 - 10 | 0 |

| Force RMSE (meV/Å) | 200 - 1000 | 10 - 50 | 0 |

| Inference Speed (atom-step/s) | 10^7 - 10^9 | 10^5 - 10^7 | 10^-2 - 10^1 |

| Training Data Size (configurations) | N/A (param fit) | 10^3 - 10^5 | N/A |

Experimental Protocols for Benchmarking

A robust benchmarking protocol is essential for comparative evaluation within MLIP research.

Protocol 4.1: Generation of Reference Quantum Mechanical Dataset

Objective: Create a high-quality, diverse dataset for training and testing potentials.

- System Selection: Define the chemical space (elements, phases, compositions).

- Configuration Sampling: Use ab initio molecular dynamics (AIMD) at relevant temperatures, random structure searches, or displacement perturbations from equilibrium geometries to sample configurations.

- QM Calculation: Perform DFT (or higher-level) calculations for each snapshot. Key Outputs: Total energy, atomic forces, stress tensor.

- Dataset Splitting: Partition into training (≈80%), validation (≈10%), and held-out test (≈10%) sets. Ensure statistical representativeness.
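A minimal sketch of the splitting step, assuming the configurations are held in a Python sequence. The function name, the default fractions, and the fixed seed are choices made for this example.

```python
import random


def split_dataset(configs, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle configurations and split into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    shuffled = list(configs)
    # A seeded generator keeps the split reproducible across runs.
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder goes to the held-out test set
    return train, val, test
```

A purely random split can overstate accuracy when consecutive AIMD frames are strongly correlated; splitting by trajectory or by time block is a common safeguard in that case.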

Protocol 4.2: Training a Neural Network Potential (e.g., Behler-Parrinello type)

Objective: Train an MLIP model on the QM dataset.

- Featurization: Transform atomic coordinates into invariant/equivariant descriptors (e.g., atom-centered symmetry functions, ACE, or SOAP).

- Model Architecture: Construct a neural network with 2-3 hidden layers (e.g., (50, 50) nodes). Input: descriptors for atom i. Output: atomic energy contribution E_i.

- Loss Function: Define L = w_E * MSE(E) + w_F * MSE(F) + w_S * MSE(S), where E, F, S are energy, forces, and stress.

- Training: Use Adam optimizer. Monitor validation loss. Employ early stopping to prevent overfitting.
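The weighted loss from the protocol can be written out as below. This is a framework-free sketch operating on flattened lists of numbers; the default weights are placeholders rather than recommended values, and the stress term is only added when stress data is supplied.

```python
def mse(pred, ref):
    """Mean squared error over two equal-length flat sequences."""
    return sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref)


def combined_loss(e_pred, e_ref, f_pred, f_ref,
                  s_pred=None, s_ref=None,
                  w_e=1.0, w_f=10.0, w_s=0.0):
    """L = w_E * MSE(E) + w_F * MSE(F) + w_S * MSE(S).

    Energies, force components, and stress components are passed as flat
    lists. The weights here are hypothetical defaults.
    """
    loss = w_e * mse(e_pred, e_ref) + w_f * mse(f_pred, f_ref)
    if s_pred is not None and s_ref is not None:
        loss += w_s * mse(s_pred, s_ref)
    return loss
```

In practice the same expression would be built from tensors in an autodiff framework so that the optimizer can backpropagate through it; the relative weights are usually tuned because energies and forces carry different units and magnitudes.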

Protocol 4.3: Validation and Benchmarking Simulation

Objective: Assess the predictive performance and stability of the trained MLIP.

- Static Property Prediction: Predict energies/forces for the held-out test set. Calculate RMSE and MAE vs. QM reference.

- Dynamic Stability Test: Run MD (NVT ensemble) at a temperature not included in training.

- Measure property evolution (e.g., radial distribution function, mean squared displacement).

- Check for unphysical energy drift (most cleanly diagnosed in a short NVE run, since a thermostat can mask drift) or structural collapse.

- Property Calculation: Perform a production MD simulation to compute target macroscopic properties (e.g., diffusion coefficient, lattice constant, elastic moduli, phonon spectrum). Compare to FF predictions and experimental data (if available).
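The static error metrics and a simple drift diagnostic from this protocol could look like the following sketch. The function names and the choice of a least-squares slope as the drift measure are assumptions for illustration; units follow whatever is passed in.

```python
import math


def rmse(pred, ref):
    """Root mean square error between predictions and reference values."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


def mae(pred, ref):
    """Mean absolute error between predictions and reference values."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)


def energy_drift(times, energies, n_atoms):
    """Least-squares slope of total energy vs. time, normalized per atom.

    A slope near zero indicates good energy conservation; the result has
    units of energy / time / atom.
    """
    n = len(times)
    t_mean = sum(times) / n
    e_mean = sum(energies) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    return sxy / sxx / n_atoms
```

For energies, errors are usually reported per atom (divide each total energy by the atom count before calling `rmse`), while force errors are computed over individual Cartesian components.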

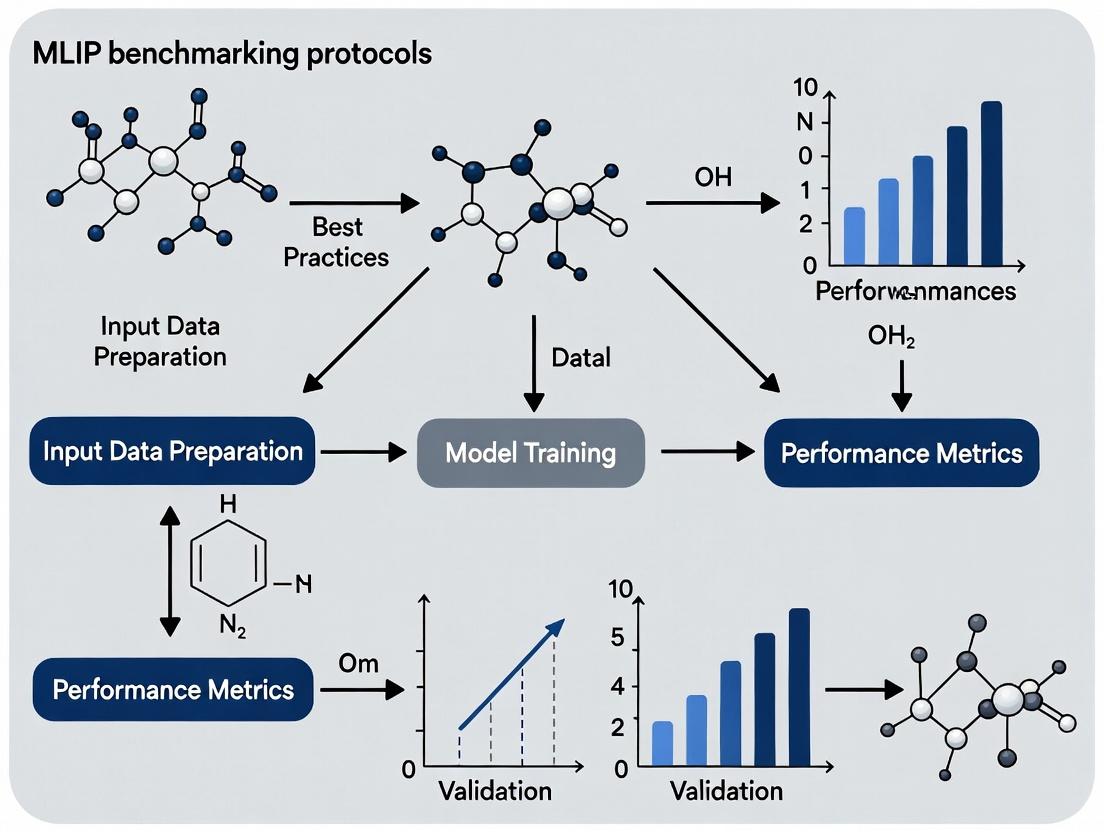

Visualization of Workflows and Relationships

Diagram 1: MLIP vs FF Benchmarking Research Workflow

Diagram 2: Conceptual Comparison of FF and MLIP Models

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Materials for MLIP/FF Benchmarking Studies

| Item / Solution | Category | Function / Purpose |

|---|---|---|

| VASP / Quantum ESPRESSO / Gaussian | QM Software | Generates the high-fidelity reference data (energies, forces) for training and testing. |

| LAMMPS / GROMACS / OpenMM | MD Engine | Performs molecular dynamics simulations using either the traditional FF or the MLIP (via interface). |

| Atomic Cluster Expansion (ACE) / SOAP | MLIP Descriptor | Translates atomic neighbor environments into a fixed-length, rotationally invariant vector for ML model input. |

| n2p2 / DeePMD-kit / AMPTORCH | MLIP Training Code | Provides frameworks to construct, train, and export neural network or other ML-based potentials. |

| QUIP / INTERFACE | MLIP-MD Interface | Libraries (e.g., ML-IAP, TorchANI) that allow MD packages to call MLIP models during simulation. |

| Reference Molecular Dynamics (RMD) Dataset | Benchmark Data | A curated set of diverse atomic configurations with QM-calculated properties for standardized testing. |

| Force Field Parameterization Tool (e.g., ffTK, LigParGen) | FF Development | Aids in deriving partial charges, torsion parameters, etc., for traditional force fields for organic molecules. |

| Visualization Suite (VMD, OVITO) | Analysis Tool | Critical for visualizing trajectories, debugging unphysical structures, and analyzing simulation results. |

The Critical Role of MLIPs in Modern Computational Drug Discovery

Machine Learning Interatomic Potentials (MLIPs) bridge the gap between quantum mechanical (QM) accuracy and classical molecular dynamics (MD) scalability, enabling near-QM-fidelity simulations of biomolecular systems at sizes and timescales that are impractical for direct QM. Within the broader thesis on benchmarking protocols, this document establishes application notes and experimental methodologies for the rigorous evaluation and deployment of MLIPs in target validation, ligand binding studies, and free energy calculations.

Quantitative Comparison of MLIP Frameworks

Table 1: Benchmarking Performance of Popular MLIPs on Drug Discovery-Relevant Tasks (2024 Data)

| MLIP Model | Underlying Architecture | Typical System Size (atoms) | Speed vs. DFT | Force Error (eV/Å) | Key Drug Discovery Application |

|---|---|---|---|---|---|

| ANI-2x | AE-ANN | 50,000 | 10^6–10^7x | ~0.03 | High-throughput ligand geometry optimization |

| MACE | Equivariant MPNN | 20,000 | 10^5–10^6x | ~0.02 | Protein-ligand binding dynamics with near-QM accuracy |

| NequIP | E(3)-Equivariant GNN | 10,000 | 10^5x | ~0.015 | Allosteric site discovery via side-chain flexibility |

| GemNet | SE(3)-Equivariant | 5,000 | 10^4x | ~0.01 | Transition state modeling for reaction mechanism studies |

| CHGNet | GNN + Charge Features | 100,000+ | 10^6x | ~0.04 | Long-timescale MD for protein folding/misfolding |

Table 2: Computational Cost Analysis for a 100ns Simulation of a Protein-Ligand Complex

| Method | Hardware | Wall-clock Time | Estimated Cost (Cloud) | Energy Error (kcal/mol) |

|---|---|---|---|---|

| DFT (CP2K) | 256 CPU Cores | ~3 years | $220,000 | 0.0 (reference) |

| Classical FF (AMBER) | 1x A100 | 5 days | $400 | 5.0–10.0 |

| MLIP (MACE) | 4x A100 | 12 days | $2,800 | 0.5–1.5 |

| MLIP (ANI-2x) | 1x A100 | 7 days | $900 | 1.0–2.0 |

Application Notes & Detailed Protocols

Protocol: MLIP-Driven Binding Free Energy Calculation (ΔG)

Objective: To compute the relative binding free energy of a congeneric ligand series to a kinase target using MLIP-refined simulations.

Workflow Diagram Title: MLIP-Enhanced Binding Free Energy Workflow

Step-by-Step Protocol:

- System Preparation: Using PDB ID 3P7N (PI3Kγ kinase), prepare the protein-ligand complex with protonation states assigned at pH 7.4. Solvate in a TIP3P water box with 12 Å buffer. Add 0.15 M NaCl.

- Initial Minimization & Equilibration: Use OpenMM with the CHARMM36m force field. Minimize energy for 5,000 steps (steepest descent). Equilibrate in NVT ensemble (298 K, Langevin thermostat) for 100 ps, then NPT ensemble (1 atm, Monte Carlo barostat) for 200 ps.

- MLIP Refinement: Extract 10 equidistant snapshots from the last 50 ps of classical NPT. For each snapshot, perform geometry optimization using the ANI-2x potential (via TorchANI) with the protein backbone restrained (force constant 1.0 kcal/mol/Ų). Use the lowest-energy refined structure for FEP.

- Classical FEP Setup: Using the refined structure, set up a relative FEP calculation for 5 ligand analogs. Employ 12 dual-topology λ windows. Run 1 ns equilibration per window, followed by 5 ns production per window (AMBER22, GPU pmemd).

- MLIP Correction: Extract 500 snapshots from the production phase of each λ window. Calculate the potential energy for each snapshot using both the classical force field and the MACE MLIP (single-point calculations). Compute the energy difference (ΔE_MLIP - ΔE_MM) for each snapshot.

- Analysis: Use the Multistate Bennett Acceptance Ratio (MBAR) to compute the classical ΔΔG. Apply the ensemble-average MLIP energy correction to each transformation's free energy estimate: ΔΔG_corrected = ΔΔG_classical + ⟨ΔE_MLIP - ΔE_MM⟩.
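The averaged correction in the Analysis step can be sketched as follows. This is a minimal illustration, not a reference implementation of the protocol: the function names, the default temperature, and the input layout (two equal-length lists of per-snapshot energies in kcal/mol) are assumptions. The second function uses exponential (Zwanzig) averaging, a standard alternative that the protocol above does not name; it is included only for comparison with the plain mean.

```python
import math

KB = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def linear_correction(e_mlip, e_mm):
    """Ensemble average of the per-snapshot MLIP minus MM energy difference."""
    diffs = [a - b for a, b in zip(e_mlip, e_mm)]
    return sum(diffs) / len(diffs)


def zwanzig_correction(e_mlip, e_mm, temperature=298.0):
    """Exponential averaging: -kT * ln < exp(-(E_MLIP - E_MM) / kT) >_MM.

    Swapped-in alternative to the plain mean. The minimum difference is
    factored out before exponentiating for numerical stability.
    """
    kt = KB * temperature
    diffs = [a - b for a, b in zip(e_mlip, e_mm)]
    d_min = min(diffs)
    avg = sum(math.exp(-(d - d_min) / kt) for d in diffs) / len(diffs)
    return d_min - kt * math.log(avg)
```

The plain mean is the first-order approximation of the exponential average, so the two agree when the energy differences fluctuate little across snapshots. A large gap between them suggests poor overlap between the classical and MLIP ensembles, in which case the correction should be treated with caution.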

Protocol: High-Throughput Virtual Screening with MLIP Re-scoring

Objective: To screen 10,000 compounds from the ZINC20 library against the SARS-CoV-2 Mpro active site using a Glide/MLIP hybrid protocol.

Workflow Diagram Title: MLIP-Rescoring Virtual Screening Pipeline

Step-by-Step Protocol:

- Library Preparation: Download SMILES strings for 10,000 "drug-like" molecules from ZINC20. Generate up to 32 stereoisomers, tautomers, and protonation states per molecule (pH 7.0 ± 2.0) using LigPrep (Schrödinger Suite). Output as 3D SDF files.

- Protein Preparation: Prepare the Mpro structure (PDB 6LU7) with the Protein Preparation Wizard: add missing hydrogens, assign bond orders, optimize H-bond networks, and perform a constrained minimization (OPLS4).

- Initial Docking: Define the active site grid centered on the cocrystallized ligand. Perform Glide Standard Precision (SP) docking for all prepared ligands. Retain the top 1,000 poses based on GlideScore.

- Refinement with MMGBSA: For the top 1,000 poses, perform Prime MMGBSA calculation. Use the VSGB solvation model and OPLS4 force field. Minimize the complex while keeping the protein rigid. Retain the top 500 based on MMGBSA ΔG.

- MLIP Re-scoring: For each of the 500 complexes, run a short 10 ps NVT MD simulation at 300 K using the NequIP potential to relax side chains and ligand. From the final snapshot, calculate the protein-ligand interaction energy as: E_interaction = E_complex - (E_protein + E_ligand). Use this as the MLIP score.

- Consensus Ranking: Normalize scores from Glide, MMGBSA, and MLIP to Z-scores. Generate a final rank using a weighted sum: Final Score = 0.3 × Z(GlideScore) + 0.3 × Z(MMGBSA) + 0.4 × Z(MLIP_Interaction).
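The consensus ranking step can be sketched in a few lines. The function names are hypothetical, and the sketch assumes that all three scores follow the same sign convention (more negative is better), so that the lowest weighted Z-score ranks first.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Standardize a list of scores to zero mean and unit (population) variance."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0] * len(values)
    return [(v - mu) / sigma for v in values]


def consensus_scores(glide, mmgbsa, mlip, weights=(0.3, 0.3, 0.4)):
    """Weighted sum of Z-scores: 0.3*Z(Glide) + 0.3*Z(MMGBSA) + 0.4*Z(MLIP)."""
    zg, zm, zl = z_scores(glide), z_scores(mmgbsa), z_scores(mlip)
    return [
        weights[0] * a + weights[1] * b + weights[2] * c
        for a, b, c in zip(zg, zm, zl)
    ]


def rank_compounds(names, scores):
    """Return compound names ordered from best (lowest score) to worst."""
    return [name for _, name in sorted(zip(scores, names))]
```

For example, `rank_compounds(["A", "B", "C"], consensus_scores([-9, -7, -5], [-60, -40, -50], [-30, -20, -10]))` places compound A first because it has the most favorable score in all three methods.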

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software and Database Solutions for MLIP-Driven Drug Discovery

| Item Name | Vendor/Project | Function in MLIP Workflow | Key Feature for Drug Discovery |

|---|---|---|---|

| TorchANI | Roitberg Group (University of Florida) | Provides pre-trained ANI-2x potential | Fast, GPU-accelerated energy/force calls for geometry optimization. |

| MACE | MACE Developers | Equivariant MLIP for high accuracy | Higher-order equivariant message passing for accurate short-range interactions; long-range electrostatics are not explicit in the standard model. |

| NequIP | Harvard (MIR group) | E(3)-equivariant graph neural network potential | Data-efficient, excellent for small biomolecule systems. |

| OpenMM | OpenMM developers (originated at Stanford) | MD engine with MLIP plugin support | Enables hybrid MLIP/classical simulation workflows. |

| CHARMM36m | CHARMM Developers | Traditional force field | Baseline for equilibration and MLIP correction protocols. |

| PDB | RCSB | Source of experimental structures | Provides initial coordinates and validation benchmarks. |

| ZINC20 | UCSF | Free database of commercially available compounds | Library for virtual screening and lead discovery. |

| AlphaFold DB | DeepMind / EMBL-EBI | Repository of predicted protein structures | Enables MLIP studies on targets without crystal structures. |

| AWS ParallelCluster | Amazon Web Services | HPC cluster management on cloud | Scalable infrastructure for large-scale MLIP MD simulations. |

| JupyterLab | Project Jupyter | Interactive development environment | Facilitates data analysis, visualization, and protocol sharing. |

Pathway Visualization: MLIPs Enabling Allosteric Drug Discovery

Diagram Title: MLIP-Driven Allosteric Site Identification Pathway

Application Notes

This document provides application notes and experimental protocols for key Machine Learning Interatomic Potential (MLIP) architectures, framed within a benchmarking thesis for computational materials science and drug development. MLIPs bridge quantum mechanical accuracy with classical molecular dynamics speed, enabling large-scale, high-fidelity simulations.

Neural Network Potentials (NNPs), such as Behler-Parrinello and Deep Potential, use atom-centered symmetry functions or embedding networks to represent atomic environments, followed by feed-forward neural networks to predict energies. They excel in modeling complex, high-dimensional potential energy surfaces (PES) for diverse material systems.

Gaussian Process Regression (GPR) potentials offer a non-parametric, Bayesian approach. They provide inherent uncertainty quantification, which is critical for active learning and robust sampling of configurations. Their training cost scales cubically with training set size, which often limits them to smaller datasets or to use within hybrid approaches.
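To make the uncertainty estimate and the cubic cost concrete, here is a small pure-Python GPR sketch on a one-dimensional toy descriptor. Real GPR potentials use descriptors such as SOAP and optimized linear algebra; the RBF kernel, the default length scale and noise, and all function names here are assumptions for illustration. The Cholesky factorization of the n-by-n kernel matrix is the step whose cost grows as n^3.

```python
import math


def rbf(x1, x2, length=1.0):
    """Squared-exponential kernel on scalar inputs."""
    return math.exp(-0.5 * ((x1 - x2) / length) ** 2)


def cholesky(a):
    """Lower-triangular L with L L^T = a; this is the O(n^3) step."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(a[i][i] - s)
            else:
                low[i][j] = (a[i][j] - s) / low[j][j]
    return low


def solve_lower(low, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def solve_upper_t(low, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def gpr_predict(x_train, y_train, x_new, length=1.0, noise=1e-6):
    """Return the posterior mean and variance at x_new."""
    k = [[rbf(a, b, length) + (noise if i == j else 0.0)
          for j, b in enumerate(x_train)] for i, a in enumerate(x_train)]
    low = cholesky(k)
    alpha = solve_upper_t(low, solve_lower(low, y_train))
    k_star = [rbf(a, x_new, length) for a in x_train]
    mean = sum(ks * al for ks, al in zip(k_star, alpha))
    v = solve_lower(low, k_star)
    var = max(rbf(x_new, x_new, length) - sum(vi * vi for vi in v), 0.0)
    return mean, var
```

Near a training point the predicted variance collapses toward the noise level, while far from the data it returns to the prior variance. Active learning schemes exploit exactly this signal to decide which configurations need new reference calculations.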

Other Architectures include linear models (e.g., Spectral Neighbor Analysis Potential, SNAP), kernel-based methods, and emerging graph neural networks (e.g., MACE, Allegro). These architectures balance interpretability, data efficiency, and scalability.

The choice of architecture depends on system complexity, data availability, required accuracy, and computational budget. Benchmarking protocols must standardize training, validation, and testing across these paradigms.

Experimental Protocols for MLIP Benchmarking

Protocol 1: Unified Training and Validation Workflow

- Data Curation: Assemble a diverse dataset of atomic configurations (e.g., from ab initio molecular dynamics, density functional theory calculations). Split into training (70%), validation (15%), and hold-out test (15%) sets.

- Descriptor/Feature Generation: For each architecture, compute relevant input features.

- For NNPs (Behler-Parrinello): Calculate radial and angular symmetry functions for each atom.

- For GPR: Compute a smooth overlap of atomic positions (SOAP) or atomic cluster descriptors.

- For Linear Models: Build the bispectrum or equivalent descriptor vectors.

- Model Training:

- NNP: Train feed-forward network using mean squared error loss between predicted and DFT energies/forces. Use validation set for early stopping.

- GPR: Optimize kernel hyperparameters (length scale, noise) by maximizing the log marginal likelihood on the training set.

- Hyperparameter Optimization: Conduct a grid or Bayesian search over key parameters (e.g., network width/depth, learning rate, regularization for NNPs; kernel type and scale for GPR).

Protocol 2: Performance Benchmarking on Test Set

- Energy & Force Accuracy: Calculate Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) for energy per atom and atomic force components on the hold-out test set.

- Phonon Dispersion & Elastic Constants: Perform finite-displacement calculations to derive phonon spectra and elastic tensors. Compare to reference DFT results.

- Molecular Dynamics (MD) Validation: Run NVT and NPT MD simulations on unseen phases or defect structures. Monitor stability and compare radial distribution functions, diffusion coefficients, or phase transition temperatures to ab initio or experimental benchmarks.
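One of the MD validation observables, the diffusion coefficient, can be estimated from a trajectory as sketched below. The sketch assumes unwrapped coordinates (no periodic image jumps), a single time origin, and a trajectory stored as nested lists `trajectory[frame][atom] = (x, y, z)`; the function names are hypothetical.

```python
def mean_squared_displacement(trajectory):
    """MSD of all atoms relative to the first frame (single time origin)."""
    ref = trajectory[0]
    msd = []
    for frame in trajectory:
        total = sum(
            (a - b) ** 2
            for p, p0 in zip(frame, ref)
            for a, b in zip(p, p0)
        )
        msd.append(total / len(ref))
    return msd


def diffusion_coefficient(msd, dt, skip=0):
    """Einstein relation in 3D: D = slope(MSD vs. t) / 6.

    The slope is a least-squares fit; `skip` drops the first frames so the
    short-time ballistic regime can be excluded from the fit.
    """
    times = [i * dt for i in range(len(msd))][skip:]
    vals = msd[skip:]
    n = len(vals)
    t_mean = sum(times) / n
    m_mean = sum(vals) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (m - m_mean) for t, m in zip(times, vals))
    return sxy / sxx / 6.0
```

Production analyses usually average over many time origins and report an uncertainty from block averaging; this version keeps only the core relation.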

Protocol 3: Efficiency Assessment

- Training Time & Scalability: Measure wall-clock time to convergence vs. training set size.

- Inference Speed: Measure the time (and cost) to perform a single MD step for a standardized system (e.g., 256 atoms). Compare to a baseline (e.g., DFT, classical force field).
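A simple timing harness for the inference-speed measurement might look like this. `step_fn` is a hypothetical zero-argument callable that advances the simulation by one MD step; the warm-up count and the returned dictionary keys are choices made for this sketch.

```python
import time


def benchmark_step(step_fn, n_atoms, n_steps=100, n_warmup=10):
    """Time repeated calls to step_fn and report per-step and per-atom rates."""
    # Warm-up calls absorb one-time costs (JIT compilation, cache filling).
    for _ in range(n_warmup):
        step_fn()
    start = time.perf_counter()
    for _ in range(n_steps):
        step_fn()
    elapsed = time.perf_counter() - start
    return {
        "ms_per_step": 1000.0 * elapsed / n_steps,
        "atom_steps_per_s": n_atoms * n_steps / elapsed,
    }
```

When the model runs on a GPU, kernels are typically launched asynchronously, so `step_fn` should block until the device has finished (for example by synchronizing or copying the forces back to the host); otherwise the measured time reflects launch overhead rather than compute time.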

Table 1: Benchmark Performance of MLIP Architectures on a Standardized Test Set (Example: Silicon Bulk & Defects)

| Architecture | Energy RMSE (meV/atom) | Force RMSE (eV/Å) | Single-step Inference Time (ms) | Max Stable MD Time (ps) | Training Time (GPU-hr) |

|---|---|---|---|---|---|

| Behler-Parrinello NNP | 2.1 | 0.15 | 5.2 | >100 | 12.5 |

| DeepPot-SE | 1.8 | 0.12 | 7.8 | >100 | 25.0 |

| Gaussian Approximation Pot. (GAP) | 1.5 | 0.10 | 45.0 | >100 | 120.0 (CPU) |

| Spectral Neighbor Anal. Pot. (SNAP) | 3.0 | 0.22 | 12.3 | 85 | 5.0 (CPU) |

| Graph Neural Network (MACE) | 1.2 | 0.08 | 15.5 | >100 | 40.0 |

Table 2: Typical Hyperparameter Search Space for Key Architectures

| Architecture | Key Hyperparameters | Typical Search Range |

|---|---|---|

| Behler-Parrinello NNP | Number of hidden layers, neurons/layer, radial/angular function cutoffs | 2-4 layers, 10-50 neurons, 4.0-8.0 Å |

| Deep Potential | Size of embedding & fitting nets, cutoff radius (rcut) | (25,50,100) nets, 5.0-7.0 Å |

| Gaussian Process (GPR) | Kernel type (SOAP, dot product), length scale, noise | Length scale: 0.1-10.0 |

| Linear Model (SNAP) | Bispectrum order (jmax), radial basis cutoff | jmax: 1-5, cutoff: 4.0-6.0 Å |

Visualizations

Title: Standard MLIP Development and Benchmarking Workflow

Title: MLIP Architecture Comparison: Types and Trade-offs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Libraries for MLIP Research

| Item (Software/Library) | Primary Function | Key Use Case in Protocol |

|---|---|---|

| LAMMPS | Molecular Dynamics Simulator | The primary engine for running MD simulations with fitted MLIPs (Protocol 2, Step 3). |

| QUIP/GAP | Software package for GAP | Used to fit and run Gaussian Approximation Potentials (Protocol 1, Step 3). |

| DeePMD-kit | Toolkit for Deep Potential | Training and running DeepPot-SE and related NNPs (Protocol 1 & 2). |

| ASE (Atomic Simulation Environment) | Python toolkit for atomistics | Used for data set manipulation, descriptor calculation, and interfacing different codes. |

| PyTorch/TensorFlow | Deep Learning Frameworks | Backend for building and training custom neural network potentials. |

| FitSNAP | Fitting code for SNAP | Fitting Spectral Neighbor Analysis Potentials (Protocol 1). |

| JAX or JAX-MD | Accelerated computing library | Increasingly used for developing new, differentiable MLIP models. |

| VASP/Quantum ESPRESSO | Ab Initio Electronic Structure | Generating the reference training data (Protocol 1, Step 1). |

Application Notes on Multi-Fidelity Training Data for MLIPs

The development of robust and transferable Machine Learning Interatomic Potentials (MLIPs) for chemical and biochemical systems requires training on high-quality, multi-fidelity datasets. These datasets span varying levels of computational cost and accuracy, from fast but approximate Density Functional Theory (DFT) to highly accurate but expensive coupled-cluster theory (CCSD(T)) and experimental measurements.

Table 1: Characteristic Fidelity, Cost, and Applications of Core Data Sources

| Data Source | Typical Accuracy (Energy) | Computational Cost | Primary Use in Training | Key Limitations |

|---|---|---|---|---|

| DFT (e.g., PBE, B3LYP) | ~5-10 kcal/mol | Moderate | Generating large-scale structural datasets; initial potential fitting. | Functional dependence; poor dispersion; inaccurate for transition states. |

| CCSD(T)/CBS (Gold Standard) | <1 kcal/mol | Very High | Small, high-accuracy training sets; correction schemes; final validation. | Prohibitively expensive for >20 atoms or dynamical sampling. |

| Experimental Data (e.g., XRD, NMR, ∆G) | Varies (Direct Physical Measurement) | N/A (Acquisition Cost) | Anchoring model to physical reality; thermodynamic/kinetic parameter fitting. | Sparse; often indirect for energies; requires careful error modeling. |

Best Practices for Data Curation

- Stratified Sampling: Training sets must sample relevant chemical and conformational space. Use DFT-based active learning or molecular dynamics to generate candidate structures, then select a subset for high-level (CCSD(T)) single-point energy calculations.

- Error Consistency: Quantify and document systematic errors for each data source. For example, a specific DFT functional may consistently underbind dispersion complexes by 2 kcal/mol.

- Multi-Fidelity Integration: Employ Δ-Machine Learning or hierarchical approaches. A base model is trained on abundant DFT data, then a correction model is trained on the difference between CCSD(T) and DFT energies for a smaller, representative set.

- Experimental Data Integration: Experimental observables (e.g., free energies of binding, lattice constants) should be incorporated via a loss function during training or used for rigorous validation, not as direct energy labels.

Detailed Protocols for Constructing a Multi-Fidelity Training Set

Protocol: Generating a CCSD(T)-Corrected DFT Dataset for Organic Molecule Conformers

Objective: Create a dataset of organic molecule conformer energies suitable for training a generalizable MLIP.

Materials & Software:

- Initial Conformer Pool: Generated using RDKit or CREST.

- DFT Engine: Gaussian 16, ORCA, or PSI4.

- High-Level Computation Engine: MRCC, CFOUR, or ORCA (for DLPNO-CCSD(T)).

- Scripting: Python with ASE (Atomic Simulation Environment) or Psi4NumPy.

Procedure:

- Conformer Generation: For each target molecule (e.g., drug-like fragments), generate an ensemble of low-energy conformers using a meta-dynamics or systematic search (MMFF94 force field).

- DFT Pre-Optimization and Single-Point: Optimize all conformer geometries using a standard DFT functional (e.g., ωB97X-D/def2-SVP). Perform a single-point energy calculation with a larger basis set on each optimized geometry (e.g., ωB97X-D/def2-QZVP).

- High-Level Subset Selection: Cluster conformers based on root-mean-square deviation (RMSD). From each major cluster, select the centroid and 1-2 edge-case structures.

- CCSD(T) Benchmark Calculation: Perform DLPNO-CCSD(T)/def2-QZVP single-point energy calculations on the selected subset (typically 50-100 structures per molecule). Note: Ensure extrapolation to the complete basis set (CBS) if maximum accuracy is required.

- Δ-Data Creation: For the subset, calculate ΔE = E_CCSD(T) - E_DFT. Train a Δ-model (e.g., Gaussian Process Regression) to predict this correction as a function of the DFT-derived electronic descriptors or geometry.

- Corrected Dataset Assembly: Apply the trained Δ-model to predict corrections for the entire DFT dataset. Create the final training set labels: E_final = E_DFT + ΔE_predicted.
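The last two steps of this procedure can be sketched end to end. To stay dependency-free, the Δ-model here is a one-variable linear fit rather than the Gaussian Process Regression suggested in the protocol, and each structure is represented by a single hypothetical scalar descriptor; both are simplifications made for this example.

```python
def fit_linear(xs, ys):
    """Closed-form least-squares fit y = slope * x + intercept."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def build_corrected_labels(descriptors, e_dft, subset_idx, e_cc_subset):
    """Return E_final = E_DFT + dE_predicted for every structure.

    descriptors: one scalar per structure (stand-in for real features)
    e_dft:       DFT energies for all structures
    subset_idx:  indices of structures with high-level energies
    e_cc_subset: CCSD(T) energies for those structures, in the same order
    """
    # Delta-data on the subset: dE = E_CCSD(T) - E_DFT.
    deltas = [e_cc - e_dft[i] for i, e_cc in zip(subset_idx, e_cc_subset)]
    slope, intercept = fit_linear([descriptors[i] for i in subset_idx], deltas)
    # Apply the fitted correction to the entire DFT dataset.
    return [e + slope * d + intercept for e, d in zip(e_dft, descriptors)]
```

The correction is usually smoother and smaller in magnitude than the total energy, which is why a small high-level subset can be enough to train it. Its quality should still be checked on high-level structures held out from the fit.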

Protocol: Integrating Experimental Binding Free Energy Data

Objective: Refine an MLIP to reproduce experimental protein-ligand binding affinities (ΔG).

Materials:

- MLIP: Pre-trained on DFT data (e.g., ANI-2x, MACE).

- Experimental Data: Public databases (e.g., PDBbind, BindingDB).

- Free Energy Calculation Software: SOMD, GROMACS with PLUMED, or Schrödinger FEP+.

Procedure:

- Data Curation: From PDBbind, select a high-quality subset of protein-ligand complexes with experimentally measured ΔG, crystal structures (resolution < 2.0 Å), and well-defined binding pockets.

- System Preparation: Prepare the complexes (protonation, solvation, minimization) using a standard molecular mechanics force field.

- Alchemical Transformation Setup: Set up free energy perturbation (FEP) or thermodynamic integration (TI) calculations for a series of congeneric ligands.

- MLIP-Driven Simulation: Perform the alchemical simulations using the MLIP (via interfaces like TorchMD or DeePMD-kit) for the ligand and its immediate environment, while treating the rest of the protein with a classical force field.

- Loss Function and Refinement: Define a loss function L = Σ (ΔG_calc - ΔG_exp)². Use gradient-based optimization to refine the MLIP's last layer or a dedicated correction network, minimizing L. This is typically a fine-tuning step, not training from scratch.

- Validation: Validate the refined MLIP on a held-out test set of experimental ΔG data not used in refinement.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Computational Reagents for Multi-Fidelity Dataset Creation

| Item/Software | Category | Primary Function in Protocol |

|---|---|---|

| RDKit / CREST | Conformer Generation | Generates initial, diverse ensembles of molecular geometries for subsequent QM treatment. |

| ORCA / Gaussian 16 | DFT Engine | Performs density functional theory calculations for geometry optimization and moderate-accuracy single-point energies. |

| MRCC / CFOUR | Coupled-Cluster Engine | Executes high-accuracy CCSD(T) calculations, often considered the quantum chemical "gold standard." |

| DLPNO-CCSD(T) | Approximate Coupled-Cluster | Enables CCSD(T)-level calculations on larger systems (50-200 atoms) with minimal accuracy loss. |

| ASE (Atomic Simulation Environment) | Scripting & Workflow | Python library for orchestrating calculations across different quantum chemistry codes and managing atoms. |

| TorchMD / DeePMD-kit | MLIP Simulation Interface | Allows pre-trained MLIPs to be used for molecular dynamics and free energy simulations. |

| PDBbind / BindingDB | Experimental Database | Curated sources of experimental protein-ligand binding affinities and structures for validation/refinement. |

| GROMACS / SOMD | Free Energy Calculation | Software to perform alchemical free energy simulations (FEP/TI) using MLIPs or classical force fields. |

Application Notes

The benchmarking of Machine Learning Interatomic Potentials (MLIPs) is critical for establishing trust in their application to computational drug discovery. Within the broader thesis on MLIP benchmarking protocols, three high-value use cases emerge: predicting protein-ligand binding affinities, sampling conformational dynamics, and modeling solvation effects. These applications test an MLIP's accuracy beyond single-point energies, evaluating its performance on thermodynamic and kinetic properties essential for understanding biomolecular function.

Quantitative benchmarks require comparison against high-level quantum mechanics (QM) and/or robust experimental data. The tables below summarize key performance metrics and datasets relevant for MLIP evaluation in these domains.

Table 1: Benchmark Datasets for MLIP Validation in Drug-Relevant Use Cases

| Dataset Name | Primary Use Case | Target Property | Reference Data Source | Key Metric(s) |

|---|---|---|---|---|

| PoseBusters | Protein-Ligand Binding | Binding pose plausibility | Crystal structures & physics | RMSD, steric clashes, formal charges |

| PLAS-5k | Protein-Ligand Binding | Relative binding free energy (ΔΔG) | Experimental affinity | RMSE, R², Kendall's τ |

| Protein Conformational Ensembles | Conformational Dynamics | State populations, transition rates | NMR, DEER spectroscopy | Free energy landscape, kinetics |

| SPICE | Solvation | QM energies and forces (including solvated-molecule subsets) | DFT reference calculations | Energy/force RMSE, Mean Absolute Error |

| WSAS | Solvation | Water site stability & entropy | MD simulations with explicit water | Residence time, density maps |

Table 2: Typical MLIP Performance Targets for Biomolecular Simulations

| Property | Target Accuracy (vs. QM/Experiment) | Required Simulation Time | Relevant MLIP Architecture Examples |

|---|---|---|---|

| Binding Affinity (ΔG) | < 1.0 kcal/mol RMSE | 10-100 ns per window | NequIP, Allegro, MACE |

| Side-Chain Rotamer Populations | > 0.9 Correlation | 100 ns - 1 µs | TorchANI, ANI-2x, PiNN |

| Solvation Free Energy | < 1.5 kcal/mol RMSE | 1-10 ns per compound | Solvent-trained specialized MLIPs |

| Macromolecular RMSD Stability | < 2.0 Å (vs. Target) | 100 ns - 1 µs | Generalizable biomolecular MLIPs |

Experimental Protocols

Protocol 1: Relative Binding Free Energy (RBFE) Calculation using MLIPs

This protocol outlines an alchemical free energy perturbation (FEP) approach to compute ΔΔG for congeneric ligands, a critical benchmark for MLIPs in drug discovery.

1. System Preparation:

- Source: Obtain protein-ligand complexes from the PDB or prepare using docking (e.g., GLIDE, AutoDock).

- Parameterization: Generate initial coordinates and topologies for the protein and ligands. For pure MLIP tests, use the MLIP for all atoms rather than a hybrid ML/MM partitioning.

- Solvation: Solvate the system in a truncated octahedral or rectangular water box, ensuring a minimum 10 Å buffer from the solute. Add ions to neutralize charge and achieve physiological concentration (e.g., 150 mM NaCl).

2. Alchemical Pathway Setup:

- Define the thermodynamic cycle linking two ligands (L1 → L2).

- Discretize the transformation into 12-24 λ windows. Use a soft-core potential for Lennard-Jones interactions to avoid singularities.

- For each λ window, energy minimize and equilibrate (NVT then NPT) for 100-200 ps.

3. Production Simulation & Analysis:

- Run molecular dynamics for each λ window using the MLIP. Collect 5-10 ns of data per window (total ~60-240 ns per transformation).

- Use the Multistate Bennett Acceptance Ratio (MBAR) or Thermodynamic Integration (TI) to analyze the energy differences and compute ΔΔG.

- Benchmarking: Compare MLIP-derived ΔΔG values against experimental IC50/Ki data (converted to ΔG) and results from classical FEP (e.g., using AMBER/CHARMM). Calculate RMSE, R², and Kendall's τ rank correlation.
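The benchmarking step above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: it assumes Ki values are given in mol/L, uses ΔG = RT ln(Ki) at 298.15 K, and treats IC50 as interchangeable with Ki only if the assay conditions justify it. The function names and the example numbers are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def ki_to_dg(ki_molar, temperature=298.15):
    """Convert an inhibition constant (mol/L) to a binding free energy (kcal/mol)."""
    return R_KCAL * temperature * math.log(ki_molar)

def rmse(pred, ref):
    """Root mean square error between two equally long sequences."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))

def kendall_tau(x, y):
    """Kendall's tau-a: (concordant - discordant) / number of pairs, no tie correction."""
    n = len(x)
    score = 0
    for i in range(n):
        for j in range(i + 1, n):
            s = (x[i] - x[j]) * (y[i] - y[j])
            score += (s > 0) - (s < 0)
    return score / (n * (n - 1) / 2)

# Hypothetical data: four ligands with measured Ki and MLIP-predicted dG (kcal/mol)
ki_values = [1e-9, 1e-8, 1e-7, 1e-6]
dg_exp = [ki_to_dg(k) for k in ki_values]
dg_mlip = [-12.0, -11.2, -9.3, -8.5]

print(f"RMSE = {rmse(dg_mlip, dg_exp):.2f} kcal/mol")
print(f"Kendall tau = {kendall_tau(dg_exp, dg_mlip):.2f}")
```

For relative binding free energies, the same functions can be applied to pairwise differences (ΔΔG) instead of absolute values.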

Protocol 2: Characterizing Conformational Dynamics via MLIP MD

This protocol benchmarks an MLIP's ability to reproduce protein conformational landscapes and transition kinetics.

1. Initial Structure and Simulation Setup:

- Select a protein with well-characterized conformational states (e.g., T4 lysozyme L99A, GPCRs).

- Prepare the system as in Protocol 1, ensuring the simulation box accommodates large-scale motion.

- Perform extended energy minimization and equilibration.

2. Enhanced Sampling Simulation:

- Employ enhanced sampling methods to overcome timescale limitations. Use Gaussian Accelerated MD (GaMD) or Metadynamics.

- For GaMD: Calculate system potential statistics over a 20 ns preliminary run, then apply the boost potential for a 500 ns - 1 µs production run using the MLIP.

- Select collective variables (CVs) carefully (e.g., distance between key residues, dihedral angles).

3. Analysis of Dynamics:

- Free Energy Surface: Construct 2D free energy landscapes by projecting the simulation trajectory onto the selected CVs.

- State Identification: Use clustering (e.g., k-means, hierarchical) on CVs or RMSD to identify metastable conformational states.

- Kinetics: Perform Markov State Model (MSM) analysis to compute transition rates between identified states.

- Benchmarking: Compare state populations, free energy barriers, and transition rates against experimental data (NMR relaxation, smFRET) or long-timescale classical MD simulations.
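As a rough illustration of the free energy surface step, the sketch below builds a one-dimensional profile F = -kT ln p from a histogram of CV samples. It assumes unbiased (or already reweighted) samples; GaMD or metadynamics trajectories would need their own reweighting first, which is not shown. The function name, bin settings, and sample values are hypothetical, and a 2D surface follows the same idea with a 2D histogram.

```python
import math

KB_KCAL = 1.987204e-3  # Boltzmann constant in kcal/(mol*K)

def free_energy_profile(cv_values, n_bins, lo, hi, temperature=300.0):
    """Return (bin_centers, F) with F = -kT ln p, shifted so that min(F) = 0.

    Empty bins are assigned an infinite free energy.
    """
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for v in cv_values:
        if lo <= v < hi:
            # min() guards against floating-point round-up at the upper edge
            counts[min(int((v - lo) / width), n_bins - 1)] += 1
    total = sum(counts)
    if total == 0:
        raise ValueError("no samples fall inside [lo, hi)")
    kt = KB_KCAL * temperature
    f = [-kt * math.log(c / total) if c > 0 else math.inf for c in counts]
    f_min = min(f)
    centers = [lo + (i + 0.5) * width for i in range(n_bins)]
    return centers, [x - f_min for x in f]

# Hypothetical two-state example: three samples in state A, one in state B
centers, profile = free_energy_profile([0.1, 0.1, 0.1, 0.9], n_bins=2, lo=0.0, hi=1.0)
print(centers, profile)
```

State populations from experiment can be converted to free energy differences with the same relation and compared bin by bin.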

Protocol 3: Solvation Free Energy Calculation

This protocol tests an MLIP's accuracy in modeling solvent interactions by calculating the solvation free energy (ΔG_solv) for small molecules.

1. Ligand and Simulation Setup:

- Select a diverse set of 10-20 small molecules from the SPICE or FreeSolv database.

- For each molecule, create two simulation systems: (1) solute in vacuum, (2) solute solvated in water (TIP3P or SPC/E, or as defined by MLIP training).

- Use a smaller box (≥8 Å buffer) for efficiency. Apply periodic boundary conditions.

2. Alchemical Decoupling Simulation:

- Define an alchemical path to decouple the solute from the solvent over 12-16 λ windows. Electrostatic interactions are typically decoupled before van der Waals.

- For each λ window, equilibrate for 50 ps, then run production MLIP-MD for 2-5 ns.

3. Free Energy Analysis and Validation:

- Use MBAR/TI to compute ΔG_solv from the alchemical work values.

- Benchmarking: Compare the MLIP-computed ΔG_solv against the reference experimental values from the database. Compute mean unsigned error (MUE) and RMSE. A robust MLIP should achieve an MUE < 1.0 kcal/mol for drug-like molecules.
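For the TI route mentioned above, a minimal sketch is to integrate the window-averaged ∂U/∂λ over λ with the trapezoidal rule and then score the results with the mean unsigned error. The sketch assumes the per-window averages are already available and leaves the sign convention and any vacuum-leg correction to the caller; all names and numbers are hypothetical. MBAR analysis would normally go through a dedicated library and is not reproduced here.

```python
def ti_free_energy(lambdas, mean_dudl):
    """Trapezoidal integration of <dU/dlambda> over the lambda schedule."""
    if len(lambdas) != len(mean_dudl) or len(lambdas) < 2:
        raise ValueError("need at least two windows with matching lengths")
    dg = 0.0
    for i in range(len(lambdas) - 1):
        dg += 0.5 * (mean_dudl[i] + mean_dudl[i + 1]) * (lambdas[i + 1] - lambdas[i])
    return dg

def mean_unsigned_error(pred, ref):
    """Average absolute deviation between predictions and references."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)

# Hypothetical schedule: 11 evenly spaced windows and a linear <dU/dlambda>
lambdas = [i / 10 for i in range(11)]
mean_dudl = [2.0 * lam for lam in lambdas]
print(ti_free_energy(lambdas, mean_dudl))  # integral of 2*lambda from 0 to 1

# Hypothetical predicted vs. experimental solvation free energies (kcal/mol)
print(mean_unsigned_error([-4.8, -2.1, -6.9], [-5.0, -2.5, -6.3]))
```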

Visualization

MLIP Benchmarking Workflow

Binding Free Energy Thermodynamic Cycle

Conformational Dynamics State Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for MLIP Biomolecular Benchmarking

| Item Name | Category | Function & Relevance to Benchmarking |

|---|---|---|

| OpenMM | Simulation Engine | A versatile, high-performance toolkit for running MLIP and classical MD simulations. Essential for implementing alchemical FEP and enhanced sampling protocols. |

| MDAnalysis | Analysis Library | A Python library to analyze trajectories from MLIP-MD. Used to compute RMSD, distances, dihedrals, and other CVs for dynamics benchmarks. |

| pymbar | Analysis Library | Python implementation of the MBAR estimator for accurate free energy calculation from alchemical simulations (Protocols 1 & 3). |

| PLUMED | Enhanced Sampling | A library for implementing GaMD, Metadynamics, and defining CVs. Integrates with MLIP codes to sample conformational transitions (Protocol 2). |

| SPICE Dataset | Reference Data | A curated QM and experimental dataset of small molecule solvation and thermodynamic properties. Primary benchmark for solvation free energy calculations. |

| PDBbind | Reference Data | A comprehensive database of protein-ligand complex structures and binding affinities. Used to curate test sets for binding affinity prediction benchmarks. |

| DeePMD-kit | MLIP Framework | A widely used framework to run simulations with MLIPs like DeepPot-SE. Often used as a baseline for performance comparisons. |

| CHARMM36 | Classical Force Field | The standard classical force field for biomolecules. Provides the essential reference point against which MLIP accuracy and efficiency are compared. |

A Step-by-Step Protocol for Robust MLIP Benchmarking and Deployment

Within the broader thesis on Machine Learning Interatomic Potential (MLIP) benchmarking protocols, this document provides detailed Application Notes and Protocols. A rigorous, standardized workflow is essential for the robust evaluation of MLIPs, which are critical tools for researchers, scientists, and drug development professionals in molecular simulation and materials discovery.

The Benchmarking Workflow: A Five-Stage Protocol

A comprehensive MLIP benchmarking workflow consists of five sequential, interdependent stages.

Diagram 1: MLIP Benchmarking Workflow

Title: Five-Stage MLIP Benchmarking Workflow

Stage 1: Problem Definition & Scope Delineation

Protocol: Before any data is collected, explicitly define the chemical space, material classes (e.g., organic molecules, metallic alloys, semiconductors), and target properties for evaluation. Document intended use cases (e.g., molecular dynamics for protein folding, energy prediction for crystal structures).

Output: A benchmarking charter specifying elements, phases, conditions (T, P), and key properties of interest (energy, forces, stresses, vibrational spectra, defect formation energies).

Stage 2: Data Curation & Dataset Assembly

This foundational stage involves sourcing and preparing high-quality reference data. Sources include Density Functional Theory (DFT) databases, experimental repositories, and high-level quantum chemistry calculations.

Protocol 2.2.1: Multi-Source Data Aggregation

- Source Identification: Query established databases (see Table 1). Use APIs (e.g., Materials Project API, OQMD API) for programmatic retrieval.

- Data Download: Extract structures and target properties (energy, forces).

- Initial Filtering: Remove duplicates and structures with failed calculations.

- Format Standardization: Convert all data to a common format (e.g., ASE.db, .xyz, extended XYZ).

Protocol 2.2.2: Dataset Splitting Strategy

- Define Splits: Create distinct sets for Training (≈70-80%), Validation (≈10-15%), and Testing (≈10-15%).

- Apply Splitting Algorithm: Use structure-based splitting (e.g., on SLATM or SOAP descriptors) via tools like sklearn or mdsplits so that highly similar structures do not end up in different splits, preventing data leakage.

- Finalize & Archive: Save split indices and final datasets with unique identifiers. Calculate dataset statistics.

Table 1: Key Data Sources for MLIP Benchmarking

| Source Name | Type | Primary Content | Access Method | Key Consideration |

|---|---|---|---|---|

| Materials Project | DFT Database | Inorganic crystals, formation energies, elastic tensors | REST API | PBE functional; may require correction schemes. |

| OQMD | DFT Database | Inorganic materials, thermodynamic stability | REST API | Large volume; requires careful quality filtering. |

| NOMAD | Repository | Diverse data (DFT, experiment, MD) | Archive Browser/API | Heterogeneous; requires extensive curation. |

| ANI-1x/ANI-2x | ML-Oriented | DFT (wB97X/6-31G*) organic molecules | Download | High-quality, general-purpose for molecules. |

| rMD17 | ML-Oriented | DFT (PBE+D3) trajectories of small molecules | Download | Benchmark for forces and dynamics. |

Stage 3: Model Training & Validation Protocol

Protocol 3.1: Standardized Training Loop

- Initialization: Initialize MLIP architecture (e.g., NequIP, MACE, Allegro) with published or defined hyperparameters.

- Loss Function Definition: Use a composite loss: L = w_energy * MSE(E) + w_forces * MSE(F) + [w_stress * MSE(S)].

- Optimization: Use Adam or AdamW optimizer with a learning rate scheduler (e.g., ReduceLROnPlateau).

- Validation Checkpointing: After each epoch, compute validation loss. Save the model with the best validation loss.

- Early Stopping: Halt training if validation loss does not improve for a pre-defined number of epochs (patience=50).
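The loss definition and the checkpointing/early-stopping logic of Protocol 3.1 can be sketched framework-free as follows. The weights (1.0 for energies, 10.0 for forces) and the class and function names are illustrative assumptions, the optional stress term is omitted, and a real training loop would use the tensor operations of the chosen ML framework.

```python
def composite_loss(e_pred, e_ref, f_pred, f_ref, w_energy=1.0, w_forces=10.0):
    """L = w_energy * MSE(E) + w_forces * MSE(F) on flat lists of numbers."""
    def mse(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)
    return w_energy * mse(e_pred, e_ref) + w_forces * mse(f_pred, f_ref)

class EarlyStopping:
    """Track the best validation loss and signal when patience is exhausted."""

    def __init__(self, patience=50, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0
        self.improved = False  # True when the latest epoch should be checkpointed

    def step(self, val_loss):
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
            self.improved = True
        else:
            self.bad_epochs += 1
            self.improved = False
        return self.bad_epochs >= self.patience

# Hypothetical validation losses with a short patience for demonstration
stopper = EarlyStopping(patience=2)
for epoch, loss in enumerate([1.0, 0.8, 0.9, 0.85, 0.7]):
    if stopper.step(loss):
        print(f"stopping at epoch {epoch}, best validation loss {stopper.best}")
        break
```

In practice the `improved` flag would trigger saving the model weights, so the checkpoint on disk always corresponds to the best validation loss seen so far.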

Diagram 2: Training & Validation Loop

Title: MLIP Training and Validation Loop

Stage 4: Systematic Evaluation & Testing Protocol

Protocol 4.1: Property Prediction on Static Test Set

- Inference: Use the checkpointed best model to predict energies (E), atomic forces (F), and stresses (σ) for all entries in the held-out test set.

- Error Calculation: Compute per-structure errors relative to reference (DFT) values. Common metrics include Mean Absolute Error (MAE) and Root Mean Square Error (RMSE).

Protocol 4.2: Molecular Dynamics Stability Test

- Simulation Setup: Select 5-10 diverse test structures. Run NVT MD using the MLIP at a relevant temperature (e.g., 300K, 1000K) for 10-50 ps with a 0.5-1.0 fs timestep.

- Stability Metric: Monitor the root mean square deviation (RMSD) of the core structure and check for unphysical bond breaking or atom evaporation.

- Energy Conservation: In an NVE simulation, monitor the total energy drift per atom over time (should be < 1 meV/atom/ps).
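One simple way to quantify the NVE energy drift is a least-squares slope of total energy against time, normalized per atom. The sketch below assumes times in ps and total energies in eV; the function name and the synthetic trajectory are hypothetical.

```python
def energy_drift_mev_per_atom_ps(times_ps, total_energies_ev, n_atoms):
    """Least-squares slope of E_total(t), converted to meV/atom/ps."""
    n = len(times_ps)
    t_mean = sum(times_ps) / n
    e_mean = sum(total_energies_ev) / n
    num = sum((t - t_mean) * (e - e_mean) for t, e in zip(times_ps, total_energies_ev))
    den = sum((t - t_mean) ** 2 for t in times_ps)
    slope_ev_per_ps = num / den
    return 1000.0 * slope_ev_per_ps / n_atoms

# Hypothetical 10 ps trajectory of a 10-atom system drifting by 2 meV/ps in total
times = [float(t) for t in range(11)]
energies = [-100.0 + 0.002 * t for t in times]
drift = energy_drift_mev_per_atom_ps(times, energies, n_atoms=10)
print(f"drift = {drift:.3f} meV/atom/ps")
```

A fitted slope is less sensitive to thermal fluctuations than the difference between the first and last frame, which is why it is used here.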

Table 2: Example Benchmarking Results on a Hypothetical Test Set

| Model Architecture | Energy MAE (meV/atom) | Forces MAE (meV/Å) | Stress MAE (GPa) | Stable MD? (Y/N) | Energy Drift (meV/atom/ps) |

|---|---|---|---|---|---|

| Model A (e.g., NequIP) | 8.2 | 86.5 | 0.45 | Y | 0.3 |

| Model B (e.g., MACE) | 6.5 | 71.2 | 0.38 | Y | 0.2 |

| Model C (Baseline) | 25.1 | 152.7 | 1.12 | N | 5.8 |

Stage 5: Metric Calculation, Reporting, and Analysis

Protocol 5.1: Comprehensive Metric Calculation

- Aggregate Statistics: Calculate mean, standard deviation, and max error across the entire test set for all primary properties.

- Per-Element/Per-Phase Analysis: Segment errors by chemical element or crystal phase to identify model biases.

- Speed Benchmarking: Measure the wall-clock time for a single force call and for 1 ps of MD simulation on standard hardware (e.g., 1x NVIDIA A100 GPU).

Protocol 5.2: Reporting Standard

Create a final benchmark report containing:

- Dataset statistics (size, composition, splits).

- Full training hyperparameters.

- Table of aggregate metrics (like Table 2).

- Per-element error plots.

- MD stability/energy drift results.

- Computational cost metrics.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Libraries for MLIP Benchmarking

| Item (Tool/Library) | Category | Function & Purpose |

|---|---|---|

| ASE (Atomic Simulation Environment) | Core Library | Python framework for setting up, running, and analyzing atomistic simulations. Handles I/O, geometry optimization, and MD. |

| JAX / PyTorch | ML Framework | Libraries for building, training, and executing machine learning models, including graph neural networks for MLIPs. |

| DeePMD-kit | MLIP Framework | A toolkit for training and running the DeepPot-SE model. Provides utilities for data preparation and model deployment. |

| NequIP / MACE / Allegro | MLIP Architecture | State-of-the-art, equivariant graph neural network architectures for constructing highly accurate and data-efficient MLIPs. |

| FAIR-Chem-LAMMPS | MD Engine | A modified version of LAMMPS integrated with PyTorch and JAX for efficient MD simulations with MLIPs on GPUs. |

| CHGNet / M3GNet | Pretrained Potential | Broad, pretrained MLIPs for inorganic materials, useful as baselines or for initial structure screening. |

| Matbench | Benchmarking Suite | A collection of ready-to-use benchmark tasks for evaluating ML models on materials science problems. |

| MODEL-ZOO | Model Repository | A platform for sharing and discovering pretrained MLIPs, promoting reproducibility and community standards. |

This document provides application notes and protocols for dataset splitting, a foundational step in machine learning for interatomic potentials (MLIP) development and benchmarking within drug discovery and materials science. Proper partitioning is critical for developing robust, generalizable models and for fair performance evaluation.

Core Principles and Quantitative Guidelines

Table 1: Recommended Dataset Split Ratios by Scenario

| Scenario / Data Type | Typical Size | Training (%) | Validation (%) | Hold-out Test (%) | Key Rationale |

|---|---|---|---|---|---|

| Large, Homogeneous Dataset | >100,000 samples | 70-80 | 10-15 | 10-15 | Maximizes learning, sufficient data for reliable validation/test. |

| Medium-Sized Dataset | 10,000 - 100,000 samples | 60-70 | 15-20 | 15-20 | Balances learning with evaluation stability. |

| Small or Expensive Dataset | <10,000 samples | 50-60 | 20-25 | 20-25 | Prioritizes evaluation reliability; may require cross-validation. |

| Temporal/Sequential Data | Variable | Chronological first 70-80 | Chronological next 10-15 | Chronological last 10-15 | Preserves temporal causality; prevents data leakage. |

| Highly Imbalanced Classes | Variable | Preserve class ratios (stratified splitting) | Preserve class ratios (stratified splitting) | Preserve class ratios (stratified splitting) | Ensures all splits represent the underlying class distribution. |

Table 2: Common Splitting Pitfalls and Mitigations

| Pitfall | Consequence | Mitigation Protocol |

|---|---|---|

| Data Leakage | Over-optimistic, invalid performance estimates. | Lock the hold-out test set before any model development. Fit pre-processing (scaling, imputation) on the training set only, then apply those fitted parameters unchanged to the validation and test splits. |

| Non-IID Splits | Poor model generalization to new data distributions. | Use domain-aware splitting (e.g., by scaffold, by composition, by temporal block). |

| Inadequate Validation Set | Unreliable hyperparameter tuning and model selection. | Ensure validation set is large enough to detect performance differences (> a few hundred samples). Use k-fold cross-validation for small datasets. |

| Single Random Split | High variance in reported performance metrics. | Use multiple random splits with different seeds or nested cross-validation; report mean and std. dev. of metrics. |

Experimental Protocols for MLIP Benchmarking

Protocol 3.1: Standard Randomized Split for Homogeneous Data

- Objective: Create baseline splits for initial model development.

- Materials: Complete dataset (D), random number generator with seed.

- Procedure:

- Shuffle dataset D randomly using a fixed seed for reproducibility.

- Allocate 70% of D to the training set (Dtrain).

- Allocate 15% of D to the validation set (Dval).

- Allocate the final 15% of D to the hold-out test set (Dtest).

- Lock Dtest: Store it separately and do not use for any training or validation activities.

- Use Dtrain for model fitting and Dval for hyperparameter tuning.

- Perform final evaluation once on Dtest.
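The procedure above might look like the following sketch, which works on sample indices rather than on the data itself. The seed, the helper names, and the idea of fingerprinting the test indices with SHA-256 to document the locked test set are illustrative choices, not requirements of the protocol.

```python
import hashlib
import random

def random_split(n_samples, fractions=(0.70, 0.15, 0.15), seed=42):
    """Shuffle indices with a fixed seed and cut them into train/val/test."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = round(fractions[0] * n_samples)
    n_val = round(fractions[1] * n_samples)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]
    return train, val, test

def fingerprint(indices):
    """SHA-256 digest of the sorted indices, usable to verify a locked test set."""
    payload = ",".join(str(i) for i in sorted(indices))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

train, val, test = random_split(1000)
print(len(train), len(val), len(test))
print("test set fingerprint:", fingerprint(test)[:16], "...")
```

Storing the fingerprint alongside the benchmark report makes it easy to confirm later that the test set was not altered.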

Protocol 3.2: Scaffold Split for Molecular Datasets in Drug Development

- Objective: Assess MLIP ability to generalize to novel chemical scaffolds.

- Materials: Molecular dataset with associated Bemis-Murcko scaffold identifiers.

- Procedure:

- Generate a unique molecular scaffold for each compound in D.

- Group all molecules by their scaffold.

- Sort scaffold groups by size (descending).

- Iteratively assign entire scaffold groups to Dtrain, Dval, and Dtest to approximate target ratios (e.g., 70/15/15). This ensures no scaffold appears in more than one split.

- Lock Dtest and proceed as in Protocol 3.1.
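A greedy assignment of whole scaffold groups can be sketched as below. The sketch assumes the scaffold identifier of each molecule has already been computed (for example with a cheminformatics toolkit) and is passed in as a plain list; the function name and the toy labels are hypothetical. Groups are processed from largest to smallest and placed in the first split that still has room.

```python
from collections import defaultdict

def scaffold_split(scaffold_ids, fractions=(0.70, 0.15, 0.15)):
    """Assign whole scaffold groups to train/val/test; returns index lists."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffold_ids):
        groups[scaffold].append(idx)
    # Largest groups first; ties broken by first index for determinism
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffold_ids)
    eps = 1e-9  # tolerance for floating-point capacity comparisons
    train_cap = fractions[0] * n + eps
    val_cap = fractions[1] * n + eps
    train, val, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= train_cap:
            train.extend(group)
        elif len(val) + len(group) <= val_cap:
            val.extend(group)
        else:
            test.extend(group)
    return train, val, test

# Hypothetical scaffold labels for ten molecules
ids = ["A"] * 6 + ["B"] * 2 + ["C", "D"]
train, val, test = scaffold_split(ids)
print(train, val, test)
```

Because groups are indivisible, the achieved ratios only approximate the targets; reporting the actual split sizes alongside the results is advisable.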

Protocol 3.3: Temporal Split for Evolving Datasets

- Objective: Simulate real-world deployment where future data is unknown.

- Materials: Dataset with timestamps (e.g., experimental results over time).

- Procedure:

- Sort dataset D chronologically by timestamp.

- Designate the earliest 70% of data points as Dtrain.

- Designate the next 15% as Dval.

- Designate the most recent 15% as Dtest.

- Lock Dtest and proceed. Do not shuffle.

Protocol 3.4: Nested Cross-Validation for Small Datasets

- Objective: Maximize use of limited data for both hyperparameter tuning and unbiased performance estimation.

- Procedure:

- Define an outer k-fold (e.g., k=5). Split data into k folds.

- For each outer fold i:

- Hold out fold i as the test set.

- Use the remaining k-1 folds for an inner loop:

- Perform a second, independent k-fold split on the k-1 folds.

- Use these inner splits to tune hyperparameters via grid/random search.

- Train a final model on the k-1 folds with the best hyperparameters.

- Evaluate this model on the held-out outer test fold i.

- The final performance estimate is the average across all k outer test folds.
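The index bookkeeping for nested cross-validation is easy to get wrong, so a small sketch may help. It only generates the index sets; model training, hyperparameter search, and scoring are left out. The function names, fold counts, and seeding scheme are assumptions made for illustration.

```python
import random

def k_fold(indices, k, seed=0):
    """Shuffle indices and deal them into k folds of near-equal size."""
    shuffled = list(indices)
    random.Random(seed).shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]

def nested_cv(n_samples, k_outer=5, k_inner=3, seed=0):
    """Yield (outer_train, outer_test, inner_splits) for each outer fold.

    inner_splits is a list of (inner_train, inner_val) pairs built only
    from outer_train, so the outer test fold never influences tuning.
    """
    outer_folds = k_fold(range(n_samples), k_outer, seed)
    for i, outer_test in enumerate(outer_folds):
        outer_train = [idx for j, fold in enumerate(outer_folds) if j != i for idx in fold]
        inner_folds = k_fold(outer_train, k_inner, seed + i + 1)
        inner_splits = []
        for m, inner_val in enumerate(inner_folds):
            inner_train = [idx for j, fold in enumerate(inner_folds) if j != m for idx in fold]
            inner_splits.append((inner_train, inner_val))
        yield outer_train, outer_test, inner_splits

splits = list(nested_cv(20, k_outer=5, k_inner=4, seed=0))
for outer_train, outer_test, inner_splits in splits:
    print(len(outer_train), len(outer_test), len(inner_splits))
```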

Visualizations

Diagram Title: Workflow for Dataset Splitting and Model Development

Diagram Title: Nested 5-Fold Cross-Validation Schema

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Dataset Management

| Item / Tool | Primary Function | Application in MLIP/Drug Development Context |

|---|---|---|

| Scikit-learn (train_test_split, StratifiedKFold, GroupShuffleSplit) | Provides robust, standard algorithms for creating dataset splits. | Implementing Protocols 3.1, 3.3, and 3.4. Essential for reproducible random sampling and stratified splits. |

| RDKit | Open-source cheminformatics toolkit. | Generating molecular scaffolds (Bemis-Murcko) for implementing Protocol 3.2 (scaffold split). |

| Pandas / NumPy | Data manipulation and numerical computing in Python. | Core libraries for loading, filtering, shuffling, and indexing datasets before and after splitting. |

| Chemical Checker or TDC (Therapeutics Data Commons) | Provide pre-processed, curated biomedical datasets with suggested benchmark splits. | Accessing standardized datasets and split definitions for fair MLIP benchmarking in drug discovery tasks. |

| Weights & Biases (W&B) / MLflow | Experiment tracking and versioning platforms. | Logging dataset split hash/version, hyperparameters, and results to ensure traceability and reproducibility. |

| Custom Splitting Scripts (Python) | Domain-specific splitting logic. | Implementing complex splitting rules (e.g., by material composition, by protein family) not covered by standard libraries. |

| Checksum Tool (e.g., MD5) | Generating unique hash identifiers for files. | Creating a unique fingerprint for locked test sets to guarantee their integrity throughout a research project. |

Within the thesis on Machine Learning Interatomic Potential (MLIP) benchmarking protocols and best practices, the rigorous selection and calculation of essential metrics form the cornerstone of reliable model validation. This document provides detailed application notes and protocols for evaluating MLIP performance based on energy, atomic forces, and material property predictions. Accurate benchmarking across these metrics is critical for the deployment of MLIPs in research and industrial applications, such as drug development and materials discovery.

Core Quantitative Metrics: Definitions & Equations

The performance of an MLIP is quantified by comparing its predictions against reference data, typically derived from quantum mechanical calculations like Density Functional Theory (DFT). The following errors are fundamental.

Table 1: Definitions of Core MLIP Error Metrics

| Metric | Formula | Description |

|---|---|---|

| Energy Error (per atom) | RMSE_E = sqrt( (1/N) ∑_i^N ((E_i_pred - E_i_ref)/n_i)^2 ) | Root Mean Square Error (RMSE) in total energy, normalized per atom for system size independence. n_i is the number of atoms in configuration i. |

| Forces Error | RMSE_F = sqrt( 1/(3*M) ∑_i^M ∑_α (F_i,α_pred - F_i,α_ref)^2 ) | RMSE over all M atoms and all Cartesian components (α ∈ {x,y,z}) of the atomic force vectors. |

| Energy-Forces Trade-off | Typically visualized via a 2D scatter plot of RMSE_E vs. RMSE_F for multiple models. | Highlights the Pareto front; models closer to the origin and the front are superior. |

| Property Error | Error_P = \|P_pred - P_ref\| (or relative error) | Error in derived material properties (e.g., lattice constant, elastic moduli, vibrational frequencies). |

Experimental Protocols for Metric Calculation

Protocol 3.1: Dataset Preparation and Partitioning

Objective: To create a standardized dataset for training, validation, and testing.

- Source Data: Gather reference data from databases (e.g., Materials Project, QM9, OC20) or generate new DFT calculations.

- Splitting: Implement a structure-based split (e.g., by composition, crystal prototype, or molecular scaffold) to prevent data leakage. A common ratio is 70:15:15 (Train:Validation:Test).

- Normalization: Scale energy and force targets to zero mean and unit variance using training set statistics.

Protocol 3.2: Calculation of Energy and Force Errors

Objective: To compute RMSE_E and RMSE_F on a held-out test set.

- Model Inference: Use the trained MLIP to predict energies (E_pred) and forces (F_pred) for all configurations in the test set.

- Per-configuration Error: Calculate Mean Absolute Error (MAE) per configuration for debugging.

- Aggregate RMSE: Apply the formulas in Table 1 across the entire test set to compute the final RMSE values.

- Unit Conversion: Report errors in standardized units (e.g., meV/atom for energy, meV/Å for forces).
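The two RMSE definitions from Table 1 translate directly into code. The sketch below assumes energies in eV, forces in eV/Å, and forces supplied as one (fx, fy, fz) tuple per atom pooled over the whole test set; the function names and toy values are hypothetical. Multiplying by 1000 converts the results to meV/atom and meV/Å.

```python
import math

def energy_rmse_per_atom(e_pred, e_ref, n_atoms):
    """RMSE_E: per-atom-normalized energy error over N configurations."""
    n_configs = len(e_ref)
    sq = sum(((p - r) / na) ** 2 for p, r, na in zip(e_pred, e_ref, n_atoms))
    return math.sqrt(sq / n_configs)

def force_rmse(f_pred, f_ref):
    """RMSE_F over all M atoms and their three Cartesian components."""
    sq = sum(
        (p - r) ** 2
        for atom_pred, atom_ref in zip(f_pred, f_ref)
        for p, r in zip(atom_pred, atom_ref)
    )
    return math.sqrt(sq / (3 * len(f_ref)))

# Hypothetical test set: two configurations with 2 and 4 atoms (energies in eV)
rmse_e = energy_rmse_per_atom([-10.0, -20.2], [-10.1, -20.0], [2, 4])
# Hypothetical forces for two atoms (eV/Å)
rmse_f = force_rmse([(0.1, 0.0, 0.0), (0.0, 0.0, 0.0)],
                    [(0.0, 0.0, 0.0), (0.0, 0.0, 0.2)])
print(f"RMSE_E = {1000 * rmse_e:.1f} meV/atom, RMSE_F = {1000 * rmse_f:.1f} meV/Å")
```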

Protocol 3.3: Calculation of Material Property Errors

Objective: To predict macroscopic properties from MLIP-driven simulations.

- Property Selection: Choose relevant properties (e.g., lattice parameter a₀, bulk modulus B, cohesive energy E_coh).

- Simulation Setup: Use the MLIP within a molecular dynamics (MD) or Monte Carlo (MC) engine (e.g., LAMMPS, ASE).

- Protocol Execution:

- For a₀: Perform variable-cell relaxation at 0 K.

- For B: Fit an equation of state (e.g., Birch-Murnaghan) to energy-volume curves.

- For E_coh: E_coh = (E_crystal - N * E_atom) / N, where E_atom is the energy of an isolated atom.

- Error Reporting: Compute absolute or relative error against the DFT-derived reference property.

Visualization of Benchmarking Workflow

Diagram 1: MLIP Benchmarking and Metric Calculation Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Research Reagent Solutions for MLIP Benchmarking

| Item Name | Category | Function & Explanation |

|---|---|---|

| VASP / Quantum ESPRESSO | Reference Data Generator | First-principles electronic structure codes to generate the "ground truth" training and test data. |

| ASE (Atomic Simulation Environment) | Python Library | Facilitates the setup, execution, and analysis of DFT calculations and atomistic simulations with MLIPs. |

| LAMMPS | Simulation Engine | High-performance MD code with broad support for MLIPs via interfaces (e.g., mliap). Used for property prediction. |

| SGDML / sGDML | Force-Field Model | A specialized MLIP for molecular systems, providing accurate forces for benchmarking. |

| MACE / Allegro / NequIP | Graph Neural Network MLIPs | State-of-the-art, equivariant MLIP architectures that set modern performance benchmarks. |

| OCP / CHGNet | Pre-trained MLIPs | Broad-coverage models for catalysis and materials, useful as baselines. |

| Phonopy | Property Calculator | Calculates vibrational properties (phonons) from force constants for error validation. |

| pymatgen | Materials Analysis | Python library for analyzing structural data, calculating materials properties, and managing datasets. |

1. Introduction within Thesis Context

This protocol provides a standardized framework for executing Molecular Dynamics (MD) simulations using Machine Learning Interatomic Potentials (MLIPs). Within the broader thesis on MLIP benchmarking, this document serves as the foundational application note, detailing the precise steps for simulation setup, execution, and initial validation across common computational frameworks (LAMMPS, ASE). Adherence to this protocol ensures consistency, reproducibility, and comparability of results, which are critical for subsequent performance analysis and validation studies.

2. Core Software & Integration Pathways

MLIPs are typically implemented via interfaces or wrappers within established MD codes. The workflow involves preparing the atomic system, selecting and configuring the MLIP, and running the simulation through the chosen engine.

Diagram Title: MLIP Simulation Software Integration Pathways

3. Research Reagent Solutions: Essential Software Toolkit

| Tool Name | Primary Function | Key Notes for Protocol |

|---|---|---|

| LAMMPS | High-performance MD engine. | Primary platform via pair_style mlip or pair_style pace. Use stable release (e.g., 2Aug2023). |

| Atomic Simulation Environment (ASE) | Python library for atomistic modeling. | Provides flexible calculator interface for various MLIPs. Ideal for prototyping and complex workflows. |

| MLIP Implementation (e.g., mlip-2, pacemaker, mace-torch) | The core MLIP library/package. | Must be compiled/installed with compatibility for LAMMPS or ASE. Version pinning is critical. |

| libtorch or jax | Backend for neural network inference. | Required by many MLIPs. Match the version specified by the MLIP developers. |

| DeePMD-kit | Software stack for DeePMD models. | Enables pair_style deepmd in LAMMPS. A widely used MLIP framework. |

| nequip or allegro packages | Implementations of E(3)-equivariant MLIPs. | Typically run via ASE or through dedicated LAMMPS interfaces. |

| phonopy, fit3 | Validation tools. | Used for calculating phonon spectra or elastic constants to check MLIP stability post-simulation. |

4. Detailed Experimental Protocol: A Standardized Run

4.1. System Preparation & MLIP Selection

- Input Generation: Prepare an initial atomic configuration (POSCAR, xyz, LAMMPS data file). Ensure periodic boundary conditions are correctly defined.

- Model Acquisition: Obtain the MLIP model file (.pt, .pth, .pb, .json/.yaml + .pth). Record the model's training data domain and intended chemical species.

4.2. Simulation Setup in LAMMPS

This protocol uses the mlip pair style (example for mlip-2).

Table 1: Key Parameters for a Typical MLIP-MD Run

| Parameter | Typical Value (Example) | Purpose & Consideration |

|---|---|---|

| Time Step | 0.5 - 1.0 fs | Often shorter than the 1-2 fs typical of classical MD with constrained bonds, because MLIP runs commonly leave fast bond vibrations unconstrained. |

| Neighbor Cutoff | Model-defined (e.g., 5.0 Å) | Must match the model's training cutoff. Use neighbor skin (~2.0 Å) for list building. |

| Minimization Tol. | 1.0e-6 (etol), 1.0e-8 (ftol) | Crucial to relax high-energy configurations before dynamics. |

| Thermostat | Nosé-Hoover (npt, nvt) | Use moderate damping (100-500 fs) for smooth coupling. |

| Production Length | 10 - 1000 ps | Depends on property; start short for stability testing. |

4.3. Simulation Setup in ASE

This protocol provides a complementary approach using Python scripting.

5. Validation & Stability Checks Protocol

Prior to production, conduct short validation runs as per benchmarking thesis guidelines.

Diagram Title: Pre-Production MLIP Simulation Validation Checks

Table 2: Quantitative Stability Metrics for Validation Run (Example Output)

| Metric | Acceptable Range | Out-of-Range Indicates |

|---|---|---|

| Total Energy Drift (NVE) | < 1 meV/atom/ps | Potential instability or insufficient training. |

| Temperature Std. Dev. (NVT) | < 5% of target | Inadequate thermostatting or forces. |

| Max Atomic Displacement | < Cutoff radius/2 | Unphysical configurations or "atoms flying". |

| Mean Absolute Force | Consistent with training set | Model operating far from its training domain. |

This application note details a structured benchmarking protocol for a Machine Learning Interatomic Potential (MLIP) applied to small organic molecules and protein fragments. The work contributes to a broader thesis on establishing standardized, rigorous, and reproducible benchmarking practices for MLIPs in computational chemistry and drug development. The goal is to evaluate the potential's accuracy, computational efficiency, and transferability beyond its training domain.

Key Experiments & Quantitative Benchmarking

Accuracy on Quantum Chemistry Datasets

A critical test is the MLIP's ability to reproduce high-level ab initio quantum chemistry (QC) reference data for molecular properties.

Protocol 1.1: Single-Point Energy and Force Calculation

- Input Preparation: Curate a benchmark set of 500 diverse conformations for 50 small molecules (e.g., from ANI-1x, COMP6, or QM9 datasets). Include protein fragment dipeptides (e.g., ACE-ALA-NME).

- Reference Calculation: Perform DFT (ωB97X/6-31G*) single-point energy and force calculations for each conformation using software like ORCA or Gaussian. Record total energy (Eh), per-atom forces (Eh/Å).

- MLIP Inference: Using the trained MLIP (e.g., MACE, NequIP, ANI), evaluate the energy and forces for each conformation.

- Error Metric Calculation: Compute Mean Absolute Error (MAE) and Root Mean Square Error (RMSE) for energies (normalized per atom) and force components across all atoms.

Table 1: Energy and Force Error Metrics vs. DFT

| Molecule Class | # Conformers | Energy MAE (meV/atom) | Energy RMSE (meV/atom) | Force MAE (meV/Å) | Force RMSE (meV/Å) |

|---|---|---|---|---|---|

| Alkanes (C<10) | 100 | 1.8 | 2.5 | 24 | 38 |

| Functionalized Organics | 250 | 3.2 | 4.9 | 41 | 65 |

| Dipeptide Fragments | 150 | 5.7 | 8.1 | 68 | 102 |

| Overall | 500 | 3.6 | 5.2 | 44 | 70 |

Molecular Dynamics Stability and Property Prediction

Assess the MLIP's performance in finite-temperature simulations and its prediction of macroscopic properties.

Protocol 2.1: Microcanonical (NVE) Stability MD

- System Setup: Solvate a small molecule (e.g., aspirin) or a protein fragment (e.g., ACE-(ALA)5-NME) in a cubic water box with ~1000 TIP3P water molecules using an MD engine (e.g., OpenMM, LAMMPS).

- Equilibration: Run 100 ps of NPT simulation at 300 K, 1 bar using a classical force field (GAFF2/TIP3P) to equilibrate density.

- Production Run: Switch to the MLIP for the solute, keeping water classical. Run a 1 ns NVE simulation from the equilibrated state.

- Analysis: Monitor total energy drift. A stable potential should show minimal drift (< 0.1% over 1 ns). Analyze solute root-mean-square deviation (RMSD) to assess structural integrity.

Protocol 2.2: Thermodynamic Property Calculation

- Setup: Create a periodic box containing 200 copies of a single organic molecule (e.g., ethanol) to represent the pure liquid.

- Simulation: Using the MLIP, run a 2 ns NPT simulation (300 K, 1 bar) with a Langevin thermostat and barostat.

- Property Calculation:

- Density: Average over the final 1 ns.

- Enthalpy of Vaporization (ΔHvap): Calculate as ΔHvap = Egas - Eliq + RT, where Egas and Eliq are the average potential energies per mole of molecules from separate gas-phase and liquid-phase simulations.
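The two property calculations can be sketched as follows. The sketch assumes energies in kJ/mol, a liquid-phase energy reported for the whole box (hence the division by the number of molecules), and a mean box volume in Å³; the function names and all numerical inputs are hypothetical and chosen only to exercise the formulas.

```python
R_KJ = 8.314462618e-3      # gas constant in kJ/(mol*K)
AVOGADRO_E24 = 0.602214076  # Avogadro constant times 1e-24 (converts Å^3 to cm^3 per mol)

def enthalpy_of_vaporization(e_gas_per_molecule, e_liq_box, n_molecules, temperature=300.0):
    """dHvap = E_gas - E_liq/N + RT, with energies in kJ/mol."""
    return e_gas_per_molecule - e_liq_box / n_molecules + R_KJ * temperature

def density_g_cm3(n_molecules, molar_mass_g_mol, mean_volume_A3):
    """Mass density of a box holding n_molecules at a mean volume given in Å^3."""
    return n_molecules * molar_mass_g_mol / (AVOGADRO_E24 * mean_volume_A3)

# Hypothetical averages for a 200-molecule ethanol box
dh_vap = enthalpy_of_vaporization(-10.0, -9920.0, 200, temperature=300.0)
rho = density_g_cm3(200, 46.069, 19391.5)
print(f"dHvap = {dh_vap:.2f} kJ/mol, density = {rho:.3f} g/cm^3")
```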

Table 2: Predicted Thermodynamic Properties vs. Experiment

| Property | Molecule | MLIP Prediction | Experimental Value | % Error |

|---|---|---|---|---|

| Density (g/cm³) | Ethanol | 0.781 | 0.789 | -1.0% |

| ΔHvap (kJ/mol) | Ethanol | 42.1 | 42.3 | -0.5% |

| Density (g/cm³) | Acetone | 0.784 | 0.790 | -0.8% |

| ΔHvap (kJ/mol) | Acetone | 31.2 | 31.0 | +0.6% |

Transferability and Rare Event Sampling

Evaluate performance on tasks outside the direct training distribution.

Protocol 3.1: Torsional Potential Energy Scan

- Selection: Choose a central rotatable bond in a molecule not prominently featured in training (e.g., a drug-like molecule).

- Scan: Rotate the dihedral angle in 15° increments, minimizing the geometry at each step with the MLIP.

- Reference: Perform a relaxed scan at the DFT (B3LYP-D3/6-31G) level.

- Comparison: Plot and compare energy profiles. Calculate MAE of relative torsion energies.
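The comparison step reduces to a few lines once both scans are available. The sketch below assumes each profile is a list of energies at the same dihedral angles and references each profile to its own minimum; referencing both to a common angle is an equally valid convention. The helper names are hypothetical.

```python
def relative_energies(energies):
    """Shift a profile so that its lowest point is zero."""
    e_min = min(energies)
    return [e - e_min for e in energies]


def torsion_mae(mlip_energies, ref_energies):
    """Mean absolute error between two relative torsion profiles."""
    if len(mlip_energies) != len(ref_energies):
        raise ValueError("profiles must be sampled at the same angles")
    rel_mlip = relative_energies(mlip_energies)
    rel_ref = relative_energies(ref_energies)
    return sum(abs(a - b) for a, b in zip(rel_mlip, rel_ref)) / len(rel_ref)


# Dihedral grid for a full rotation in 15 degree increments (24 points).
scan_angles = list(range(-180, 180, 15))
```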

Figure: Experimental workflow diagram, titled "MLIP Benchmarking Workflow".

Figure: MLIP energy evaluation pathway, titled "MLIP Architecture and Training".

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MLIP Benchmarking

| Item / Solution | Function in Benchmarking | Example / Note |

|---|---|---|

| Reference QC Datasets | Provides ground-truth data for accuracy tests. | ANI-1x, COMP6, SPICE, QM9. Critical for error metric calculation. |

| MLIP Software | Framework for potential energy evaluation and MD. | MACE, NequIP, Allegro, CHGNet. Chosen based on system symmetry. |

| MD Simulation Engine | Performs dynamics and sampling using the MLIP. | LAMMPS, OpenMM with custom interface (e.g., TorchMD-Net). |

| Quantum Chemistry Code | Generates high-fidelity reference data. | ORCA, Gaussian, Psi4. Level of theory (e.g., DLPNO-CCSD(T)) must be specified. |

| Analysis & Visualization Suite | Processes trajectories and calculates metrics. | MDAnalysis, VMD, Matplotlib, pandas. For RMSD, density, energy drift. |

| Classical Force Field Parameters | Baseline for comparison of speed/accuracy. | GAFF2 (organics), CHARMM36 (proteins). Highlights MLIP's value proposition. |

| Curated Benchmark Molecule Set | Standardized test for transferability. | Created from drug fragments (e.g., from PDB) & challenging conformations. |

Diagnosing and Fixing Common MLIP Pitfalls: Overfitting, Extrapolation, and Failure Modes

Within the framework of developing robust Machine Learning Interatomic Potential (MLIP) benchmarking protocols, overfitting represents a primary challenge. An MLIP that overfits its training data fails to generalize to unseen atomic configurations, compromising its predictive reliability in molecular dynamics simulations for drug discovery. This document provides application notes and detailed experimental protocols for identifying overfitting and implementing two cornerstone mitigation strategies: regularization and early stopping, contextualized for MLIP development and validation.

Identifying Overfitting: Key Metrics and Protocols

Protocol 2.1: Train-Validation-Test Split for MLIPs

- Objective: To establish independent datasets for model training, hyperparameter tuning, and final unbiased evaluation.

- Methodology:

- Data Curation: Assemble a diverse dataset of atomic structures (e.g., molecules, crystals, surfaces) with corresponding reference energies and forces from ab initio calculations (e.g., DFT).

- Stratified Splitting: Partition the dataset into three subsets:

- Training Set (70-80%): Used to optimize the model's weights (e.g., neural network parameters).

- Validation Set (10-15%): Used during training to monitor performance on unseen data and to make decisions about hyperparameters (e.g., regularization strength, early stopping point).

- Test Set (10-15%): Used only once, after model selection and training are complete, to provide a final estimate of generalization error. This set must remain untouched during all development phases.

- Crucial Consideration: Ensure splits preserve the distribution of key chemical/structural features (e.g., bond types, coordination numbers) to avoid bias.
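A stratified split of this kind can be sketched with the standard library alone. The example assumes every structure carries a single categorical label (for instance a dominant functional group); the label scheme, the function name `stratified_split`, and the default 80/10/10 fractions are illustrative assumptions.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) index lists, splitting each label group separately.

    Splitting per group keeps the label proportions roughly equal in all subsets.
    """
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    groups = defaultdict(list)
    for idx, label in enumerate(labels):
        groups[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(groups):
        members = groups[label]
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_val = round(fractions[1] * len(members))
        train += members[:n_train]
        val += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]  # remainder goes to the test set
    return train, val, test
```

If the dataset contains many conformers of the same molecule, it is usually safer to assign whole molecules rather than individual frames to a subset, so that near-duplicates do not leak across the split.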

Protocol 2.2: Monitoring Learning Curves

- Objective: To visually diagnose overfitting by comparing model performance on training vs. validation sets across training epochs.

- Methodology:

- During model training, log the chosen loss function (e.g., Mean Squared Error for energy/forces) for both the training and validation sets at regular intervals (e.g., every epoch).

- Plot the learning curves: epochs (x-axis) vs. loss (y-axis), with two lines for training and validation loss.

- Diagnosis: A diverging gap where training loss continues to decrease while validation loss plateaus or increases is the hallmark of overfitting.
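The visual diagnosis can be complemented by a simple numerical check. The sketch below is a heuristic under stated assumptions, not a standard test: it takes the per-epoch loss histories as plain lists and flags overfitting when the training loss kept falling after the epoch of minimum validation loss while the validation loss rose. The function name and the `tolerance` parameter are hypothetical.

```python
def diagnose_overfitting(train_loss, val_loss, tolerance=0.0):
    """Compare the end of training with the epoch of best validation loss."""
    if len(train_loss) != len(val_loss) or not val_loss:
        raise ValueError("loss histories must be non-empty and equally long")
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    train_still_falling = train_loss[-1] < train_loss[best_epoch]
    val_rose = val_loss[-1] > val_loss[best_epoch] + tolerance
    return {
        "best_epoch": best_epoch,                     # zero-based epoch index
        "gap_at_end": val_loss[-1] - train_loss[-1],  # final generalization gap
        "overfitting": train_still_falling and val_rose,
    }
```

Noisy validation curves can trigger false positives, so a nonzero `tolerance` or smoothed losses may be preferable in practice.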

Mitigation Protocol: Regularization Techniques

Regularization modifies the learning algorithm to discourage complexity, promoting simpler models that generalize better.

Protocol 3.1: L1/L2 Weight Regularization

- Objective: Penalize large weight magnitudes in the MLIP's neural network.

- Methodology:

- Modify the loss function (L) by adding a penalty term Ω(w) for the weights (w).

- L2 Regularization (Weight Decay): Ω(w) = λ Σ ||w||². Encourages small, distributed weights.

- L1 Regularization: Ω(w) = λ Σ |w|. Promotes sparsity by driving some weights to zero.

- Implementation: The regularization strength (λ) is a key hyperparameter. Tune λ using the validation set performance (Protocol 2.1).
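The penalty terms defined above can be written out explicitly. This is a minimal sketch that treats the weights as a flat list of floats; real MLIP frameworks operate on tensors, and the function names here are hypothetical.

```python
def l2_penalty(weights, lam):
    """Omega(w) = lam * sum(w_i^2)."""
    return lam * sum(w * w for w in weights)


def l1_penalty(weights, lam):
    """Omega(w) = lam * sum(|w_i|)."""
    return lam * sum(abs(w) for w in weights)


def regularized_loss(data_loss, weights, lam_l1=0.0, lam_l2=0.0):
    """Total loss = data loss (e.g., energy/force MSE) + weight penalties."""
    return data_loss + l1_penalty(weights, lam_l1) + l2_penalty(weights, lam_l2)
```

In practice, many optimizers expose a weight-decay setting that plays the role of the L2 term, although with adaptive optimizers decoupled weight decay is not mathematically identical to adding the penalty to the loss.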

Protocol 3.2: Dropout for Atomic Neural Networks

- Objective: Prevent complex co-adaptations of neurons by randomly dropping a fraction of units during training.

- Methodology:

- For each training batch and layer, randomly deactivate a fraction p (e.g., 0.1-0.5) of neurons and their connections.

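- During validation or testing, all neurons are active. In the classic formulation, their outputs are scaled by (1-p) to maintain the expected output magnitude. Many modern frameworks instead rescale the surviving activations by 1/(1-p) during training ("inverted dropout"), so no scaling is needed at inference.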

- MLIP Consideration: Apply dropout to dense hidden layers of the atomic neural network, but typically not to the final energy/force output layer.
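The two scaling conventions for dropout can be illustrated on a plain list of activations. This is a sketch for intuition only, with hypothetical function names; it is not how any particular MLIP framework implements the layer.

```python
import random


def dropout_train(activations, p, rng):
    """Classic dropout: zero each unit with probability p, no rescaling."""
    return [0.0 if rng.random() < p else a for a in activations]


def dropout_eval(activations, p):
    """Classic dropout at inference: keep all units, scale by (1 - p)."""
    return [a * (1.0 - p) for a in activations]


def inverted_dropout_train(activations, p, rng):
    """Inverted dropout: rescale survivors by 1 / (1 - p) during training,
    so inference uses the activations unchanged."""
    keep = 1.0 - p
    return [0.0 if rng.random() < p else a / keep for a in activations]
```

Both conventions keep the expected activation consistent between training and inference; they differ only in where the scaling factor is applied.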

Table 1: Comparison of Common Regularization Techniques for MLIPs

| Technique | Primary Mechanism | Key Hyperparameter | Pros for MLIPs | Cons for MLIPs |

|---|---|---|---|---|

| L2 Regularization | Penalizes sum of squared weights. | Decay rate (λ) | Stabilizes training; widely supported. | Does not promote sparse models. |

| L1 Regularization | Penalizes sum of absolute weights. | Decay rate (λ) | Creates sparse, interpretable networks. | May be too aggressive for force accuracy. |

| Dropout | Randomly drops neurons during training. | Dropout rate (p) | Robust ensemble effect; reduces overfitting. | Increases training variance; longer training. |

| Noise Injection | Adds Gaussian noise to training data/features. | Noise magnitude (σ) | Simulates larger dataset; improves robustness. | Can slow convergence if poorly tuned. |

Mitigation Protocol: Early Stopping

Protocol 4.1: Implementing Early Stopping

- Objective: Halt training when performance on the validation set stops improving, preventing the model from memorizing training data.

- Detailed Methodology:

- Before training, define:

- Patience (P): Number of epochs to wait after the last improvement (e.g., 50-100).

- Delta (Δ): Minimum change in monitored metric to qualify as an improvement (e.g., 1e-5 in validation loss).

- Checkpointing: Always save a copy of the model parameters when a new best validation score is achieved.

- During training (after each epoch):

a. Evaluate the model on the validation set.

b. If the validation loss decreases by more than Δ, save the model checkpoint and reset the wait counter; otherwise, increment the wait counter.

c. If the validation loss has not improved for P consecutive epochs, stop training.

d. After stopping, restore the model parameters from the best saved checkpoint.

- Final Step: Evaluate the final, checkpointed model on the held-out test set (Protocol 2.1) for the final performance report.
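The patience, delta, and checkpointing logic can be sketched as a small framework-independent helper. The class name `EarlyStopper` is hypothetical, and `model_state` stands for whatever copyable object holds the parameters (for example a state dictionary); an in-memory copy is used here in place of writing a checkpoint file.

```python
import copy


class EarlyStopper:
    """Track validation loss and keep a copy of the best model state."""

    def __init__(self, patience=50, min_delta=1e-5):
        self.patience = patience    # P: epochs to wait after the last improvement
        self.min_delta = min_delta  # Delta: minimum decrease counted as improvement
        self.best_loss = float("inf")
        self.best_state = None
        self.wait = 0

    def step(self, val_loss, model_state):
        """Record one epoch; return True when training should stop."""
        if self.best_loss - val_loss > self.min_delta:
            self.best_loss = val_loss
            self.best_state = copy.deepcopy(model_state)  # checkpoint the new best
            self.wait = 0
            return False
        self.wait += 1
        return self.wait >= self.patience
```

In a training loop, `step` would be called once per epoch after validation; when it returns `True`, the loop exits and `best_state` is restored before the test-set evaluation.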


The Scientist's Toolkit: MLIP Regularization Research Reagents

Table 2: Essential Materials and Software for Protocol Implementation

| Item Name | Function/Description | Example/Tool |

|---|---|---|

| Ab Initio Dataset | Reference data for training and validation. High-quality energies and forces. | Quantum Espresso, VASP output processed via ASE. |

| MLIP Framework | Software with built-in support for regularization and validation. | AMPTorch, DeepMD-kit, SchNetPack, MACE. |

| Automatic Differentiation Library | Enables gradient-based optimization and loss function customization. | PyTorch, JAX, TensorFlow. |

| Hyperparameter Optimization Suite | Systematically tunes λ, p, and early stopping parameters. | Optuna, Ray Tune, Weights & Biases Sweeps. |

| Checkpointing Utility | Saves and restores model state during training. | PyTorch Lightning ModelCheckpoint, custom callbacks. |

| Visualization Library | Generates learning curves and diagnostic plots. | Matplotlib, Seaborn, TensorBoard. |

1. Introduction & Application Notes

Within Machine Learning Interatomic Potential (MLIP) benchmarking protocols, extrapolation refers to making predictions for atomic configurations, chemistries, or phases that reside outside the convex hull of the training data manifold. This is a critical failure mode, as MLIPs can produce dangerously confident but physically implausible results, compromising reliability in drug development (e.g., protein-ligand binding energy prediction) and materials discovery. These notes outline protocols for recognizing and mitigating extrapolation.

2. Quantitative Risk Indicators & Detection Metrics

The following table summarizes key quantitative indicators used to flag potential extrapolation in MLIPs.

Table 1: Metrics for Extrapolation Detection in MLIPs

| Metric Category | Specific Metric | Threshold Indicator (Typical) | Interpretation |

|---|---|---|---|

| Uncertainty Quantification | Predictive Variance (Ensemble) | > 2-3x mean training variance | High uncertainty suggests an out-of-distribution (OOD) query. |

| Uncertainty Quantification | Calibration Error | Expected vs. observed error mismatch | Poor calibration often correlates with extrapolation. |

| Data-Distance Measures | Mahalanobis Distance (in latent space) | Percentile > 95-99% of training distribution | Query is far from the training data centroid. |

| Data-Distance Measures | k-Nearest Neighbor Distance | Distance >> max training k-NN distance | Local data sparsity detected. |

| Model Internals | Neural Network Activation Statistics | Significant deviation from training norms | Hidden layer patterns are novel. |

| Model Internals | Kernel Function Value (for kernel-based MLIPs) | Value below a defined cutoff | Similarity to training data is insufficient. |

3. Experimental Protocols for Benchmarking Extrapolation Robustness

Protocol 3.1: Systematic Leave-Cluster-Out Validation

- Objective: To evaluate model performance on systematically excluded domains (e.g., specific molecular functional groups, crystal phases).

- Methodology:

- Cluster training data using a relevant descriptor (e.g., SOAP, Coulomb matrix, Morgan fingerprints).

- Iteratively hold out all data from one or more clusters as the test set.

- Train the MLIP on the remaining data.

- Evaluate the model on the held-out cluster. Quantify errors (MAE, RMSE) and uncertainty metrics (variance).

- Correlate error explosion with distance metrics from Table 1.
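The hold-out loop and a simple distance metric can be sketched as follows. The example assumes cluster assignments have already been computed and that each structure is represented by a fixed-length descriptor vector; the function names are hypothetical, and the k-nearest-neighbor distance stands in for any of the distance measures in Table 1.

```python
import math


def leave_cluster_out_splits(cluster_ids):
    """Yield (held_out_cluster, train_indices, test_indices) for each cluster."""
    for held_out in sorted(set(cluster_ids)):
        train = [i for i, c in enumerate(cluster_ids) if c != held_out]
        test = [i for i, c in enumerate(cluster_ids) if c == held_out]
        yield held_out, train, test


def knn_distance(query, train_descriptors, k=1):
    """Mean Euclidean distance from a query to its k nearest training descriptors."""
    if k < 1 or k > len(train_descriptors):
        raise ValueError("k must be between 1 and the number of training points")
    dists = sorted(math.dist(query, x) for x in train_descriptors)
    return sum(dists[:k]) / k
```

For each split, the MLIP would be retrained on the `train` indices, and the per-structure error on the `test` indices can then be plotted against `knn_distance` to see whether errors grow with distance from the training data.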

Protocol 3.2: Progressive Domain Shift Stress Test

- Objective: To measure model degradation as queries move further from the training domain.

- Methodology:

- Define a reaction coordinate (e.g., bond strain, torsion angle, lattice constant).

- Generate configurations along this coordinate, starting within and moving outside the training range.

- Compute reference energies (DFT, CCSD(T)) for these points.

- Predict energies/forces using the MLIP and its built-in uncertainty estimator.

- Plot error and uncertainty versus the reaction coordinate to identify the "extrapolation cliff."
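Locating the point where uncertainty takes off can be sketched with ensemble statistics. The sketch assumes an ensemble of models as the uncertainty estimator and uses the "multiple of the mean training variance" threshold from Table 1. The function names and the default factor of 3 are illustrative assumptions, and the coordinates are assumed to be ordered from inside to outside the training range.

```python
from statistics import mean, pvariance


def ensemble_stats(member_predictions):
    """member_predictions[m][i] is the energy of configuration i from member m.

    Returns per-configuration (means, variances) across ensemble members.
    """
    per_config = list(zip(*member_predictions))
    means = [mean(p) for p in per_config]
    variances = [pvariance(p) for p in per_config]
    return means, variances


def find_extrapolation_onset(coordinates, variances, train_variance, factor=3.0):
    """First coordinate where ensemble variance exceeds factor * train_variance.

    Returns None if the threshold is never crossed.
    """
    for x, v in zip(coordinates, variances):
        if v > factor * train_variance:
            return x
    return None
```

Comparing the onset found from the variance with the coordinate at which the true error against the reference energies starts to grow indicates how well the uncertainty estimate anticipates the failure.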

4. Visualization of Workflows and Relationships

Figure: MLIP Extrapolation Detection Workflow

Figure: Data Density and Prediction Risk Regions

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Extrapolation Research in MLIPs

| Item / Solution | Function in Research |

|---|---|

| Uncertainty-Aware MLIPs (e.g., Ensemble, Bayesian NN, Deep Evidential) | Provides inherent predictive variance as a primary signal for OOD detection. |

| High-Dimensional Descriptors (e.g., Smooth Overlap of Atomic Positions - SOAP) | Enables meaningful distance metrics between atomic environments for density estimation. |

| Conformal Prediction Frameworks | Generates statistically rigorous prediction intervals with guaranteed coverage under data exchangeability. |

| Active Learning Loop Software (e.g., FLARE, ChemFlow) | Automates the detection of uncertain points and iterative addition to training data to reduce extrapolation. |

| Ab Initio Reference Databases (e.g., QM9, Materials Project, OC20) | Provides ground-truth data for creating controlled train/test splits and stress-testing benchmarks. |